Disclaimer

This is not to be construed as financial advice.

Acknowledgments

Thanks to Ben Todd, Kit Harris, Alexander Gordon Brown, and James Snowden for helpful discussion on this manuscript. Any mistakes are my own.

Introduction to “Mission Hedging”

How should a foundation whose only mission is to prevent dangerous climate change invest its endowment? Surprisingly, in order to maximize expected utility, it might use ‘mission hedging’ investment principles and invest in fossil fuel stocks. This way, it has more money to give to organisations that combat climate change in exactly those scenarios where more fossil fuels are burned, fossil fuel stocks go up, and climate change gets particularly bad. When fewer fossil fuels are burned and fossil fuel stocks go down, the foundation will have less money, but it does not need the money as much. Under certain conditions, the mission hedging investment strategy maximizes expected utility.

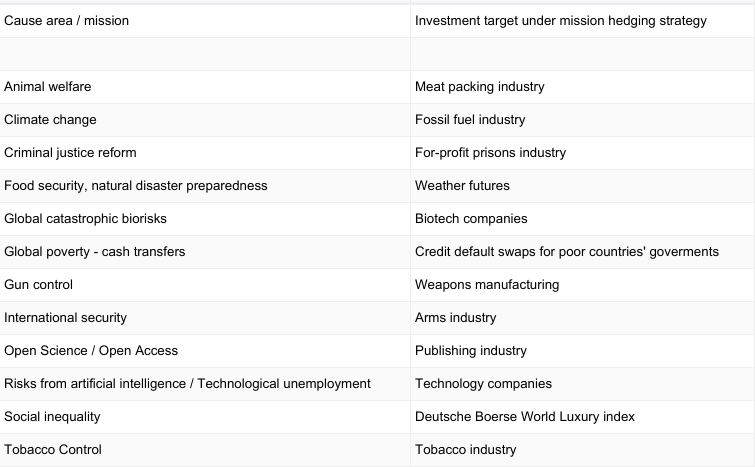

So generally, if you want to do more good, should you invest in ‘evil’ corporations with negative externalities, such as those that sell arms, tobacco, factory farmed meat, or fossil fuels, or that advance potentially dangerous new technology? Here I argue that, perhaps counterintuitively, this might be the optimal investment strategy.

In this note, I extend the special case of an investment strategy for foundation endowments called 'mission hedging', originally introduced by Brigitte Roth Tran. The generalized strategy proposed here suggests that, under certain conditions, agents should invest resources in entities that cause the activity they want to prevent. I will focus only on the conceptual extension of mission hedging here. More technical details, caveats and mathematical formalism can be found in Roth Tran's original paper, all of which are also relevant to the more generalized theory.

Roth Tran [2] summarizes the basic mechanics of mission hedging for foundations as follows:

“‘[M]ission hedging,’ [is] a new strategy in which the endowment ‘doubles down,’ skewing investments toward firms it opposes. If increased objectionable activities coincide with both higher firm returns and greater foundation revenue needs (with which to counteract the objectionable activities), then the foundation can align funding availability with need by increasing exposure to objectionable firms beyond that of a typical portfolio. Increasing investment in objectionable firms creates a hedge around the foundation's mission, maximizing expected utility.”

In other words, the basic idea is that, surprisingly, it might be optimal for an altruist whose mission is to combat global poverty, factory farming, mass unemployment, or existential risks from artificial intelligence, to invest in stocks of corporations that might make the problem worse and then give the profits to organisations that will counteract the problem.

For example, it might be a good strategy for donors, or even other entities such as governmental organisations that are concerned with global health, to invest in tobacco corporations and then give the profits to tobacco control lobbying efforts. Another example might be that animal welfare advocates should invest in companies engaged in factory farming, such as those in the meat packing industry, and then use the profits to invest in organisations that work to create lab grown meat. A final example: it might be optimal for donors who think that emerging risks from artificial intelligence are a pressing cause to invest in the technology companies that might speed up such dangerous technologies, and then donate the profits to organisations that work on guarding against such risks.

The basic mechanics of the mission hedging investment strategy are the following: when a bad industry does well, you as an investor can use your increased profits or dividends to counteract this trend. In other words, the more harm the industry does, e.g. by increasing CO2 emissions, selling more meat, or being more on track to create technology that destroys jobs or is otherwise dangerous, the more you will profit, and you can then use these profits to prevent the industry from doing bad things (potentially through donations or grants). If the bad industry is not doing well, then you will not profit as much, but you don't need to donate as much anyway because there is less bad activity. So either way, the amount you donate will track the level of bad activity more closely than it would under a conventional portfolio.

Here is a simple toy model that illustrates the climate change case.

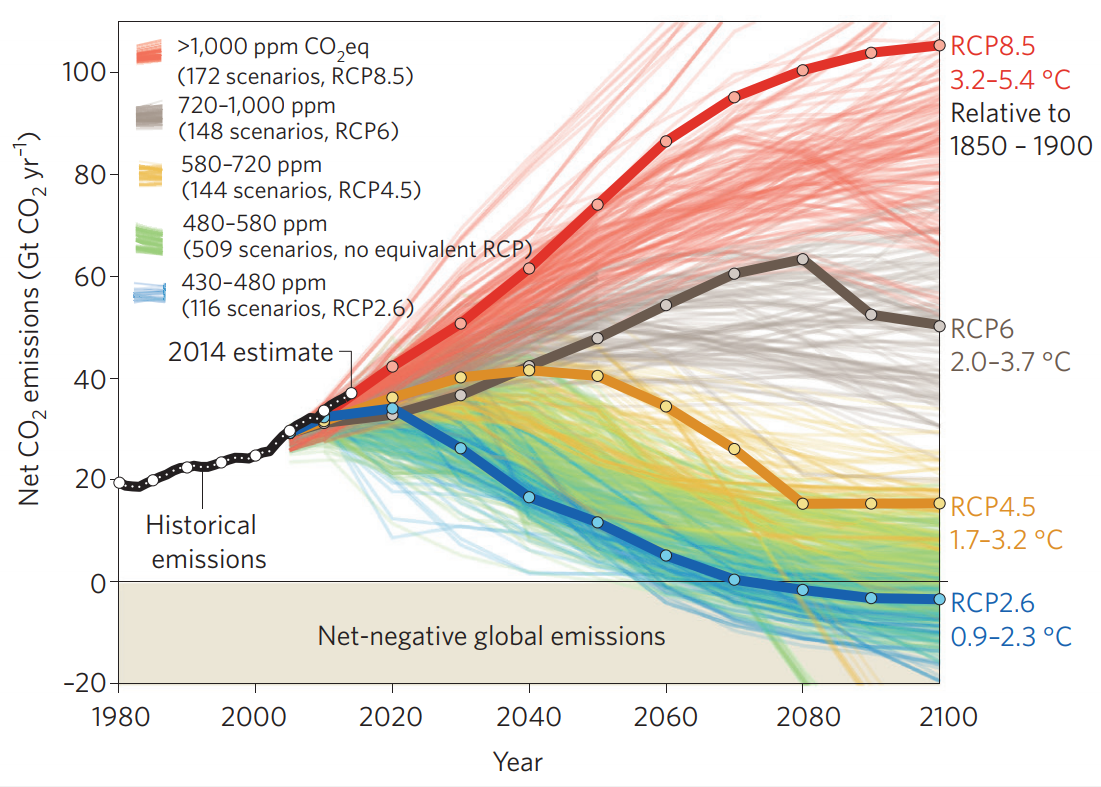

First, consider the following figure (taken from [3]), which shows that there is uncertainty about the world’s emission pathway and about how high warming will be above preindustrial levels:

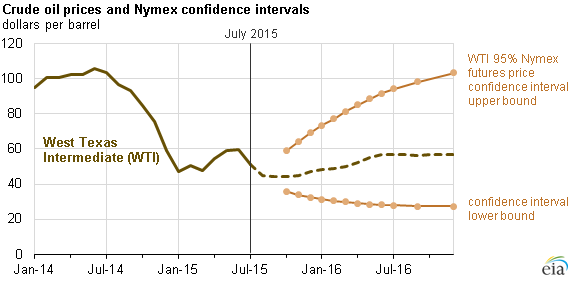

Now consider how the oil price will develop correspondingly:

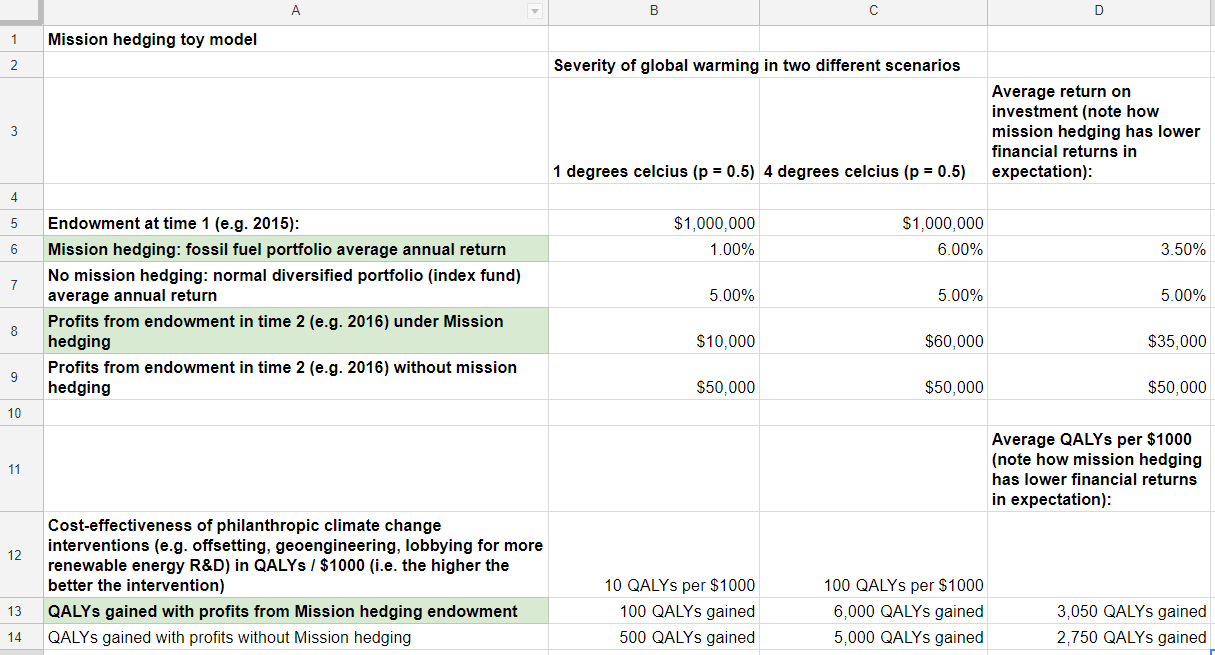

Now consider the following toy model (The spreadsheet can be found here):

As you can see, a $1 million endowment will reap different profits under two different warming scenarios if mission hedging investment principles are used. If warming is relatively mild, e.g. 1 degree Celsius above preindustrial levels, that means that fewer fossil fuels were burned and fossil fuel stocks didn’t do as well: the resulting profits are below market rate returns at a meager $10,000. However, in the second scenario with 4 degrees of warming above preindustrial levels, it’s likely that more fossil fuels were burned than expected and fossil fuel stocks did very well. The dividends in this case might be above market rate returns at $60,000. Note that the average return with mission hedging across these equally probable scenarios (p=0.5 each) is $35,000, which is lower than the average financial return of $50,000 if no mission hedging is used, because the portfolio without mission hedging is optimally diversified (the historical average rate of return is about 5% [4]).

Now note how the cost-effectiveness of climate change interventions (e.g. saving the rainforest, geoengineering, lobbying for more renewable energy R&D) differs between the two warming scenarios: if warming is relatively mild, then the cost-effectiveness of the average climate change intervention won’t be as high (here I assign 10 QALYs per $1,000 spent). However, if warming gets really severe, as in the 4 degrees warming scenario, then the cost-effectiveness of climate change interventions might be much higher at 100 QALYs per $1,000 spent. Assuming all profits are donated, the average number of QALYs gained under mission hedging (3,050 QALYs, i.e. 0.5 × 100 + 0.5 × 6,000) is higher than the number of QALYs gained without mission hedging (2,750 QALYs, i.e. 0.5 × 500 + 0.5 × 5,000).
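The arithmetic of the toy model can be reproduced with a short script. This is a minimal sketch: the dollar figures, probabilities and QALY rates are taken from the two paragraphs above, while the function and variable names are illustrative assumptions, as is the simplification that all profits are donated in the year they are earned.

```python
# Toy model from the text: two equally likely warming scenarios.
# Figures come from the paragraphs above; names are illustrative assumptions.

SCENARIOS = {
    # name: (probability, profit with mission hedging in $, QALYs per $1,000 donated)
    "mild warming (1 degree)": (0.5, 10_000, 10),
    "severe warming (4 degrees)": (0.5, 60_000, 100),
}
DIVERSIFIED_PROFIT = 50_000  # 5% market return on a $1 million endowment


def expected_profit_and_qalys(mission_hedged: bool) -> tuple[float, float]:
    """Return (expected profit in $, expected QALYs), assuming all profits are donated."""
    expected_profit = 0.0
    expected_qalys = 0.0
    for probability, hedged_profit, qalys_per_1000 in SCENARIOS.values():
        # Without mission hedging, the profit is the same in both scenarios.
        profit = hedged_profit if mission_hedged else DIVERSIFIED_PROFIT
        expected_profit += probability * profit
        expected_qalys += probability * profit / 1_000 * qalys_per_1000
    return expected_profit, expected_qalys


hedged = expected_profit_and_qalys(mission_hedged=True)
diversified = expected_profit_and_qalys(mission_hedged=False)
print(f"Mission hedging: ${hedged[0]:,.0f} expected profit, {hedged[1]:,.0f} expected QALYs")
print(f"Diversified:     ${diversified[0]:,.0f} expected profit, {diversified[1]:,.0f} expected QALYs")
```

The script makes the trade-off explicit: mission hedging gives up $15,000 of expected profit but gains 300 expected QALYs, because the money arrives in the scenario where each dollar does the most good.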

However, mission hedging is not necessarily limited to investing endowments on the stock market. Someone interested in career choice, political campaign contributions, or budget allocation amongst different parts of government might also be advised to use mission hedging. For instance, someone worried about the risks associated with, say, an authoritarian leaning political party coming into power, or a corporation having large negative externalities and outsized regulatory capture, might invest career capital into that political party or company and later use it to prevent the bad activity.

I list more examples in Table 1 below.

Table 1: Examples of mission hedging investments for different cause areas or missions an altruistic agent might pursue

Mission hedging and related concepts might also be a useful tool to provide partial answers to the following questions:

- When should one invest to tackle a certain problem? Now or later?

- How much of its endowment should a foundation spend within a given year? [5]

- How much should a government agency or international organisation tasked with a certain mission spend within a given time frame?

- What should we do with regards to uncertainty in the timeline of existential risks from climate change or artificial intelligence? [6]

- How should you allocate your resources between different mission objectives or causes due to uncertainty of whether some objectives or causes are more important than others?

Conditions for mission hedging to be optimal

Markets must be efficient

There are some obvious objections to this strategy. Intuitively, you might think it's better to divest from companies that do things that you think are making the problem worse, because you increase the capital supply to a company that will then create more supply of the socially harmful good. In contradistinction to divestment, the 'mission hedging' strategy explicitly asks you to actually double down on investments in companies you believe to be harmful. However, divestment is unlikely to affect the stock price or the cash flow of the targeted companies at all.

This argument has been made elsewhere in much detail [2],[7]. In brief, if you divest from, say, fossil fuels for ethical reasons, then an investor who doesn't care about climate change will happily buy the now undervalued fossil fuel stocks. She might do so by selling her stocks of socially neutral companies (e.g. medical technology corporations) that you now need to buy because you don't want to invest in fossil fuels. The end result is no difference in the allocation of capital or in the stock price.

Thus, in efficient markets, such as the stock market, no matter how you invest, the companies and their share prices will likely not be affected substantially. Interestingly, the divestment strategy, which has become popular in the context of climate change advocacy, is now also being adopted in other areas such as open science, where socially responsible investment managers divest from pharma corporations which do not publish all their findings [8]. Thus, it might be increasingly important to prevent attention and resources from being diverted to ineffective divestment strategies.

In any case, the first condition is crucially important for any area where mission hedging is to be applied successfully: the market that you are investing in, construed in the broadest sense, must be efficient, or else a mission hedging investor risks doing harm instead of good.

So crucially, mission hedging will backfire if markets are inefficient and resources such as time, money and effort would counterfactually enable bad activity. For example, outside of the stock market, where markets are less efficient, it is easier to actually cause bad activity, for instance by providing seed funding for a startup to create a new harmful tobacco product. Unlike on the stock market, in this example the seed investment might not have occurred otherwise, and harm is caused by the investor through the invention of technology that might otherwise not have been invented.

Investment must be in entities that cause the bad activity and not merely covary

The second condition is that, ideally, your investment should not just covary with the bad activity but actually be causing the bad activity[9]. For example, one might have the intuition that an organisation tasked with ensuring global health such as the WHO, should invest their endowment in the medical device industry: as global health gets worse, more medical devices will be sold and so the WHO will profit, which gives them more resources to improve global health. As global health gets better, fewer medical devices are sold and so the WHO does not need as many resources to fulfill its mission of ensuring good global health. However, medical devices do not cause global ill-health. Even though medical device sales and the stock prices of corporations selling these devices should often covary, they are merely correlated. One can imagine cases where medical devices sell poorly, and yet global health is poor, or cases where medical devices sell very well, but global health is good. This is why it’s better to invest in corporations that directly cause the bad activity, in this case tobacco. However, a portfolio that strongly covaries with the bad activity level might still be good and have fewer reputational risks.

Corporations that one invests in must not themselves hedge against risks excessively

Corporations often hedge against their own mission risks by investing in things that could hurt their profits, and they need to report the use of derivatives in their financial statements, including their purpose and hedging effectiveness [10]. For instance, PHW, a billion-dollar German poultry company, recently invested in a lab grown meat startup, and Tyson, a big meat packing corporation, invests in plant-based burgers[11]. Similarly, oil companies often invest in solar. If these companies hedge to the extent that an investment in them just represents investing in a diversified portfolio of the whole industry (e.g. the food industry or the energy industry), then mission hedging will not work. However, it seems that in the cases above, the utility maximizing effect will simply be diluted.

Mission hedging might work well for extreme risks

The mission hedging investment principle might also work for risks from emerging technology such as artificial intelligence, especially in a slow takeoff scenario[12]. However, note that the case has been made that hedging against extreme risks might be difficult (“You cannot short the apocalypse”[13]).

Also, the relationship between the stock price of the objectionable asset class and the cost-effectiveness of the intervention might be nonlinear. For instance, it might have an inverted U shape. In the climate change scenario, mission hedging applies at first: the stock price of fossil fuels goes up, and the cost-effectiveness of interventions goes up with it. But then the stock price might go up even further while the cost-effectiveness of doing something against climate change decreases, because it is too late to do something about it.

More complicated financial products might be used to hedge against such a risk. One example is a strangle, an options position that allows the holder to profit based on how much the price of the underlying security moves, with relatively minimal exposure to the direction of the price movement.
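To make the payoff profile concrete, here is a minimal sketch of the value at expiry of a long strangle, i.e. buying an out-of-the-money put and an out-of-the-money call on the same underlying. The function name, strikes, premium and prices are hypothetical numbers chosen for illustration, not figures from this note or from any real market.

```python
def long_strangle_payoff(spot_at_expiry, put_strike, call_strike, total_premium):
    """Profit at expiry, per unit of the underlying, of a long strangle.

    The holder owns a put struck below the current price and a call struck
    above it, and has paid total_premium for both options together.
    """
    put_value = max(put_strike - spot_at_expiry, 0.0)    # pays off if the price falls
    call_value = max(spot_at_expiry - call_strike, 0.0)  # pays off if the price rises
    return put_value + call_value - total_premium


# Hypothetical example: underlying trades near 100, strikes at 90 and 110,
# and the two options cost 5 in total.
for price in (60, 100, 140):
    profit = long_strangle_payoff(price, put_strike=90, call_strike=110, total_premium=5)
    print(f"price at expiry {price}: profit {profit}")
```

The position loses the premium if the price stays between the strikes and gains when the price moves far in either direction, which is the property that might be useful when the need for funds is high at both ends of the price range.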

Mission hedging might be non-trivial in practice

It might be difficult in practice to find the right corporations to invest in. Hedging is an extensively studied research field within finance: for instance, there is still active research on what the best hedge against inflation is [14]. It might be non-trivial to figure out how to properly hedge against mission risks.

Extension of the strategy to shareholder activism

A mission hedging collective might bring a harmful industry to its knees

Interestingly, unlike with divestment strategies where potentially a large majority of total global capital would be needed to divest from companies, collective action of a much smaller percentage of the market using the mission hedging strategy could in some cases actually hurt harmful industries in terms of their stock price.

A big investor or a like-minded, value-aligned investor collective could use mission hedging instead of divestment to damage industries that cause bad activity. Recall that if a harmful industry does well, the investor collective (or large foundation endowment) will profit relatively more and can ramp up its anti-industry activities (such as lobbying against it). If an investor collective and its activity became widely known, such that the market anticipated its actions, stock prices would go down. Stock prices would not even go up in the face of ‘positive shocks’ in that industry. Why is that? Imagine a new oil reservoir being discovered. Normally, the market would respond by buying more shares in the company that discovered the reservoir, and the share price would go up. However, if the market knows that there is an investor collective that will profit from this and use these profits to stop the industry from making further profits from fossil fuels, it might not invest despite the positive shock. There would be nowhere for the stock price to go but down. The rest of the market might divest from industries that are known to be under 'attack' from such a collective.

However, it is important to carefully consider whether the combined capital of such a collective is really large enough to affect the industry and is not dwarfed by the counter-lobbying activities of the harmful corporations, which are trying to maximize shareholder value for socially neutral investors.

By definition, ‘Big X’ industries with negative externalities, i.e. Big Oil, Big Pharma, Big Tobacco etc., make billions in profits. However, one can use a bit of game theory in order to not need to take on the entire industry: a mission hedging investor (collective) could publicly commit to always taking on the current market leader with investment and lobbying, and thus threaten a larger share of the market at once. This is similar to the strategies employed by some animal advocacy groups, which declare that they will not stop putting public pressure on a fast food chain until it only uses cage free eggs in its products[15].

In any case, a mission hedging collective could be much smaller than a divestment collective and still drive down stock prices. This is because a small percentage of socially neutral investors with access to capital could always buy up sin stocks by using leverage, even if trillions of dollars of assets under management have already been divested from fossil fuel stocks[16]. They can do so because they have the prospect of slightly higher than market rate returns, due to the exploitation of investors who use the divestment strategy, and because they can borrow for less than the market rate of return, thus clearing the market.

Another example: governments could use a fixed percentage of their tobacco tax revenue to counteract smoking (e.g. by running public health campaigns). This would make the industry less lucrative for investors, because the industry is working against itself - the better the companies do in terms of selling tobacco, the higher the tax receipts the government can use to educate smokers to quit.

One last example: one could buy life insurance for political dissidents in authoritarian regimes who receive death threats, and announce that one would donate the proceeds to efforts to fight the regime.

One way to implement this mechanism would be using self-executing smart contracts on the blockchain, that would pay out in these cases.

Shareholder activism and inside information

When investing in a company that causes bad activity one can also use shareholder activism and try to reduce bad activity from within the industry. This is another advantage as compared to divestment.

Applications for resource allocation between different causes

Some foundations have tried to reduce uncertainty about how to allocate resources amongst different causes by assigning them to different buckets [17]. Investment using mission hedging might also be a way to optimize resource allocation between different missions or causes that an altruist thinks are likely to be important.

Imagine you want to plan your donations, or decide as part of your foundation's strategy how much you are going to spend on different causes. Let’s say you decide on moral and cost-effectiveness grounds that you will allocate 60% of your resources to animal welfare causes, 30% to preventing risks from advanced technology, and 10% to global health at the end of the year.

You might not want to simply allocate the profits of your endowment 60% to animal welfare causes, 30% to technological risks and 10% to global health, based on how pressing and important you think each of the causes is. Rather, you could use the wisdom of the crowds (here the crowd is the market) to finesse those estimates, and decide at the beginning of the year to invest 60% in meat industry stocks, 30% in technology stocks, and 10% in tobacco stocks. At the end of the year you allocate the profits or dividends to organisations in the respective cause areas. Then, relative to how well the different industries do that year, you will assign relatively more or less money to each cause (e.g. because tobacco stocks did very well and you have relatively more money in the global health portfolio, you end up with 15% of your overall donations going to tobacco control, and because technology profits were poor this year, you only donate 20% of your overall donations to nonprofits working on technological risk prevention).
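The mechanism can be sketched in a few lines. The 60/30/10 portfolio weights come from the example above; the per-industry returns are hypothetical values, chosen only so that the resulting donation shares land near the 15% and 20% figures mentioned in the text. The function name and the treatment of loss-making industries (they simply contribute nothing) are assumptions for illustration.

```python
def donation_shares(weights, returns):
    """Share of total donations going to each cause when each cause receives
    the profits of the industry stocks held on its behalf."""
    # Assumption: an industry that loses money contributes zero, not a negative amount.
    profits = {cause: max(weights[cause] * returns[cause], 0.0) for cause in weights}
    total = sum(profits.values())
    if total == 0:
        raise ValueError("no profits to donate this year")
    return {cause: profit / total for cause, profit in profits.items()}


weights = {"animal welfare": 0.60, "technology risk": 0.30, "global health": 0.10}
# Hypothetical annual returns of meat, technology and tobacco stocks respectively.
returns = {"animal welfare": 0.05, "technology risk": 0.03, "global health": 0.07}

shares = donation_shares(weights, returns)
for cause, share in shares.items():
    print(f"{cause}: {share:.0%} of donations")
```

With these made-up returns, the starting 60/30/10 split drifts to roughly 65/20/15, purely as a result of how well each industry did that year.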

Why might this be a better strategy? There are two major reasons:

- There are diminishing marginal returns to investing in a problem that becomes smaller, and thus the cost-effectiveness of one's efforts to tackle the problem becomes less favourable. Take the global health example from above: as global health improves and there are fewer smokers, tobacco control efforts will be less effective at trying to get the last smokers to quit (e.g. mass media interventions will not be as effective). Fewer resources should then be invested relative to the other causes or mission objectives an investor might have.

- It reduces the uncertainty about the (future) scale of the problem by tapping into the ‘wisdom of the markets’. Mission hedging is theoretically equivalent to using a very efficient prediction market (e.g. the stock market) to inform your decisions. Given that an agent might be uncertain about the (future) scale of the problem, using the stock market as a prior might shed light on this issue. However, the stock market is not a perfect prediction device for the cost-effectiveness of an intervention and can only serve as a prior or parameter estimate in one’s model of the cost-effectiveness of an intervention.

This strategy can be added on top of other prioritization considerations (e.g. scenario analyses as described in [18]).

Mission hedging might not only work for endowments of foundations, but also for budgets of big international organisations or government departments with multiple mandates, or even with government budgets. As a foundation one could also offer this as a ‘banking service’ where one pays the grant into an account that is invested according to mission hedging principles and the charity can spend based on this.

International organisations: example of the International Monetary Fund

It might be good to convince not only foundations to skew their endowments, but also international institutions or governments to use mission hedging for their ‘endowments’ or accounts.

Consider for instance the International Monetary Fund (IMF). The IMF's stated mission is “to foster global monetary cooperation, secure financial stability, facilitate international trade, promote high employment and sustainable economic growth, and reduce poverty around the world." [19].

The IMF has billions in assets[20]. However, even though the IMF diversifies its assets by geography and asset class against market risks, and hedges against foreign exchange volatility etc., it does not seem to hedge against mission risks.

The IMF could consider skewing its portfolio towards technology companies, which have been suggested to create technological unemployment, so that it has more resources to pursue its mission of promoting high employment in case technology companies do well.

There appear to be many such international funds which might want to invest their endowments using mission hedging principles (e.g. another is the Strategic Climate Fund [21]).

Applications outside of investment

Career choice

Mission hedging might also be a strategy for career choice, where time is invested to hedge against risks that threaten one's mission or cause. For instance, one might believe that tobacco companies are particularly harmful, but choose to work at a tobacco corporation. After investing career capital, one can use it to work against the harmful corporation in case it becomes particularly harmful. This might take the form of lobbying for more socially responsible behaviour within the company, whistleblowing, industrial sabotage, or earning to give with the higher wages or equity available in a harmful industry. Potentially, this behaviour might be particularly effective if the company is on the verge of performing a particularly harmful action that competitors would likely not perform. Note that this is a related, but slightly different and extended, argument from the classical ‘earning to give in socially harmful industries’[22] and not simply an argument from high replaceability.

As mentioned above, whether mission hedging will work when it comes to careers crucially depends on whether the job market in the sector is efficient. A big publicly traded company or big bank has many job applicants, so when one takes a job there, one only displaces a competing applicant who is marginally less capable (this is of course not to say that all work at big corporations or in finance is socially harmful)[23]. However, joining a smaller, socially harmful business that has a harder time finding competent workers and has less efficient hiring might actively cause harm, because there is a better chance that contributing to the success of the business will cause counterfactual harm over and above what the next candidate in line would have caused.

Finally, this strategy might be particularly effective when dealing with extreme, high stakes, low probability risks. For instance, someone might have the highest impact in terms of expected value by having a career in the military or the secret service. Even though the modal outcome of this career might not be very impactful, in the unlikely event of a war, a military government etc., one’s impact might be very high. Thus joining the military might have the highest counterfactual impact in terms of expected value. Historical analysis suggests that soldiers have sometimes single-handedly prevented nuclear war[24]. Another example would be working at a leading artificial intelligence company that could create dangerous technology, and blowing the whistle when it is on the verge of a breakthrough.

Political donations

One could argue that political donations occur in ‘efficient markets’ as well. In past US elections, there have been cases in which billionaires donated a few million dollars, i.e. a tiny fraction of their net worth (0.1%), to other billionaires' campaigns (for instance, Peter Thiel donated to Donald Trump)[25]. The recipients might have funded their campaigns themselves had it not been for the donation. In exchange, the donors might have gained political influence, which can be used to further their mission and to counteract bad activity from a bad politician, even though counterfactually the donation had no influence on the campaign, as the politician would have been funded anyway.

Retirement saving and hedging against unemployment in ‘earning to give’

Someone who does ‘earning to give’ might want to skew their personal retirement fund towards technology companies if they feel their job is at risk due to technological unemployment. They might also want to invest outside of the economy they work in [26], in case it does poorly and they are unable to relocate. They might also want to buy disability insurance.

It would be interesting to know whether big sovereign wealth funds or pension funds, such as the Norwegian pension fund, already do this (i.e. hedge against risks to their economy, technological unemployment, or a demographic shift towards a population with fewer people of working age). Much thought goes into[27] how to invest the $25 trillion in private pension funds in OECD countries[28] in a way that is best for their investors. The answer to this question might elucidate whether the mission hedging strategy has flaws, is difficult to implement in practice, etc.

Limitations

Interestingly, mission hedging deliberately skews the portfolio away from diversification and thus does not maximize risk-adjusted financial returns. Most endowments are trying to maximize financial returns, but with mission hedging one moves away from an optimally diversified (passively invested) global market portfolio[29]. Thus, one will likely sacrifice financial returns. For a ‘cause neutral’ agent, who doesn’t have a mission, favourite cause, or mandate, it might be best not to sacrifice the flexibility of switching causes and rather to invest purely so as to maximize financial returns. This is similar to the strategy of building up career capital in the face of uncertainty about what the most important cause is.

Also, because mission hedging is somewhat counterintuitive and people might be repulsed by being seen to profit from e.g. evil corporations, there might be reputational risks associated with it that need to be factored in. If this is a decisive factor, then it might be better to buy stocks that merely correlate with bad activity, such as stock in the pharmaceutical industry rather than the tobacco industry. One could also buy derivatives that merely track the stock price, and which would be de facto stocks for all intents and purposes, except that the investor would not ‘own’ part of the company.

However, there might be a way of using some upsides of mission hedging without investing in what might be seen as morally reprehensible companies. Say your foundation has two focus areas rather than one (which in itself might be suboptimal[30]). Say area 1 is animal welfare and area 2 is global poverty. Your prior intuition is that these areas are equally important, and you assign a 50-50 split of your annual disbursements to these two cause areas. Now, you could invest your entire endowment in stocks in the developing countries where your foundation supports, say, cash-transfer programs. We will assume that corporations in those countries doing well causes poverty reduction, and that they are not seen as morally reprehensible. Now, depending on how the developing world stock portfolio does, you decide to give excess profits (over the standard stock market return) to the other area (here animal welfare). If the developing world stocks do well, you might not need to invest as much in poverty reduction, and you have more funds for the more neglected animal welfare area. If the stocks don’t do as well and there are no excess profits, it is better to stick closer to the original 50-50 split.
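One possible reading of this rule, as a rough sketch: profits up to the benchmark market return are split 50-50, and any profit above the benchmark goes entirely to animal welfare. The function name, the endowment size and the return figures below are hypothetical, and the exact split rule is an assumption, since the text only describes it qualitatively.

```python
def split_disbursement(endowment, portfolio_return, benchmark_return, base_split=0.5):
    """Split this year's profits between global poverty and animal welfare.

    Assumed rule: profits up to the benchmark return are divided according to
    base_split; profits above the benchmark all go to animal welfare.
    """
    profit = max(endowment * portfolio_return, 0.0)  # assumption: nothing is disbursed in a loss year
    benchmark_profit = endowment * benchmark_return
    excess = max(profit - benchmark_profit, 0.0)
    base = profit - excess
    return {
        "global poverty": base * base_split,
        "animal welfare": base * (1 - base_split) + excess,
    }


# Hypothetical year in which developing world stocks beat the benchmark (8% vs 5%).
good_year = split_disbursement(1_000_000, portfolio_return=0.08, benchmark_return=0.05)
# Hypothetical year with no excess profits (4% vs 5%): the 50-50 split is kept.
flat_year = split_disbursement(1_000_000, portfolio_return=0.04, benchmark_return=0.05)
print(good_year)
print(flat_year)
```

Under this reading, poverty funding is capped at its share of the benchmark profit in good years, on the assumption stated above that strong local stock performance already coincides with poverty reduction.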

Other quick thoughts

- The process of hedging might also help to clarify one's mission itself. If there were a lot of resistance to the IMF skewing its portfolio towards technology companies, even though its stated mission is to keep unemployment low and the hedge is accepted to work, then maybe the IMF is not really pursuing its mission or mandate. Mission hedging might clarify the mission of an organisation or agent, because one ‘puts their money where their mouth is’.

- Catastrophe (CAT) bonds are now a $29 billion market, and provide coverage against hurricanes, earthquakes, and pandemics [31]. There are new developments in this area of disaster insurance[32], such as the creation of over-the-counter catastrophe swaps[33]. There is also ongoing research on cyber insurance and catastrophes in cyberspace [34] and on liability for future robotics technology [35].

- “Betterment Investing just added a no-cost automatic donation feature. Using their existing tax-optimized system, they allow you to donate your most appreciated shares directly to any of their many connected charities. This gives you the maximum tax deduction right now, while reducing your taxes further when you later withdraw from your account later in life” [36]

- There are some artificial intelligence ETFs[37] that one could look into to hedge against risk from emerging technologies

[2] "Divest, Disregard, or Double Down? by Brigitte Roth Tran :: SSRN." 13 Apr. 2017, https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2952257. Accessed 2 Jun. 2017.

[3] "Betting on negative emissions | Nature Climate Change." 21 Sep. 2014, https://www.nature.com/articles/nclimate2392. Accessed 20 Feb. 2018.

[4] "What is the average annual return for the S&P 500? | Investopedia." https://www.investopedia.com/ask/answers/042415/what-average-annual-return-sp-500.asp. Accessed 20 Feb. 2018.

[5] "Should the Open Philanthropy Project be Recommending More/Larger ..." 2015. 19 Sep. 2016 <http://www.openphilanthropy.org/blog/should-open-philanthropy-project-be-recommending-morelarger-grants>

[6] "What Do We Know about AI Timelines? | Open Philanthropy Project." 2015. 19 Sep. 2016 <http://www.openphilanthropy.org/focus/global-catastrophic-risks/potential-risks-advanced-artificial-intelligence/ai-timelines>

[7] "How Investors Can (and Can't) Create Social Value | Stanford Social ...." 8 Dec. 2016, https://ssir.org/up_for_debate/article/how_investors_can_and_cant_create_social_value. Accessed 2 Jun. 2017.

[8] "Investment managers back greater transparency of clinical trials | The ...." 23 Jul. 2015, http://www.bmj.com/content/351/bmj.h4002. Accessed 5 Jun. 2017.

[9] "Selecting investments based on covariance with the value of charities ...." 4 Feb. 2017, http://effective-altruism.com/ea/16u/selecting_investments_based_on_covariance_with/. Accessed 17 Feb. 2018.

[10] https://link.springer.com/article/10.1007/s12197-017-9399-5

[11] "Taxes on Meat Could Join Carbon and Sugar to Help Limit Emissions ...." 11 Dec. 2017, http://www.fairr.org/news-item/taxes-meat-join-carbon-sugar-help-limit-emissions/. Accessed 17 Feb. 2018.

[12] "Strategic considerations about different speeds of AI takeoff - Future of ...." 12 Aug. 2014, https://www.fhi.ox.ac.uk/strategic-considerations-about-different-speeds-of-ai-takeoff/. Accessed 8 Oct. 2017.

[13] "Can You Short the Apocalypse? - Marginal REVOLUTION." 12 Aug. 2017, http://marginalrevolution.com/marginalrevolution/2017/08/can-short-apocalypse.html. Accessed 8 Oct. 2017.

[14] "IJFS | Free Full-Text | A Study of Perfect Hedges - MDPI." 14 Nov. 2017, http://www.mdpi.com/2227-7072/5/4/28. Accessed 17 Feb. 2018.

[15] "Ending factory farming as soon as possible - 80,000 Hours." 27 Sep. 2017, https://80000hours.org/2017/09/lewis-bollard-end-factory-farming/. Accessed 17 Feb. 2018.

[16] "Investment Funds Worth Trillions Are Dropping Fossil Fuel Stocks ...." 12 Dec. 2016, https://www.nytimes.com/2016/12/12/science/investment-funds-worth-trillions-are-dropping-fossil-fuel-stocks.html. Accessed 5 Jun. 2017.

[17] "Update on Cause Prioritization at Open Philanthropy | Open ...." 26 Jan. 2018, https://www.openphilanthropy.org/blog/update-cause-prioritization-open-philanthropy. Accessed 17 Feb. 2018.

[18] http://benjaminrosshoffman.com/givewell-case-study-effective-altruism-2

[19] "International Monetary Fund - Wikipedia." https://en.wikipedia.org/wiki/International_Monetary_Fund. Accessed 8 Oct. 2017.

[20] "The IMF at a Glance." http://www.imf.org/en/About/Factsheets/IMF-at-a-Glance. Accessed 8 Oct. 2017.

[21] "Clean Technology Fund | Climate Investment Funds." https://www.climateinvestmentfunds.org/fund/clean-technology-fund. Accessed 8 Oct. 2017.

[22] "Just how bad is being a CEO in big tobacco? - 80,000 Hours." 21 Jan. 2016, https://80000hours.org/2016/01/just-how-bad-is-being-a-ceo-in-big-tobacco/. Accessed 17 Feb. 2018.

[23] "The difference between true and tangible impact - 80,000 Hours." https://80000hours.org/articles/true-vs-tangible-impact/. Accessed 5 Jun. 2017.

[24] "Why the long-term future of humanity matters more than anything else ...." https://80000hours.org/articles/why-the-long-run-future-matters-more-than-anything-else-and-what-we-should-do-about-it/. Accessed 8 Oct. 2017.

[25] "Peter Thiel Has Been Hedging His Bet On Donald Trump - BuzzFeed." 7 Aug. 2017, https://www.buzzfeed.com/ryanmac/peter-thiel-and-donald-trump. Accessed 20 Aug. 2017.

[26] "Do Investors Put Too Much Stock in the U.S.? | Michael Dickens." 26 Mar. 2017, http://mdickens.me/2017/03/26/do_investors_put_too_much_stock_in_the_us/. Accessed 8 Oct. 2017.

[27] "A two-step hybrid investment strategy for pension funds - ScienceDirect." https://www.sciencedirect.com/science/article/pii/S1062940816301887. Accessed 18 Feb. 2018.

[28] "Macro- and micro-dimensions of supervision of large pension ... - IOPS." http://www.iopsweb.org/WP-30-Macro-Micro-Dimensions-Supervision-LPFs.pdf. Accessed 17 Feb. 2018.

[29] "Meet the Global Market Portfolio -- The 'Optimal Portfolio For ... - Forbes." 30 Jul. 2014, https://www.forbes.com/sites/phildemuth/2014/07/30/meet-the-global-market-portfolio-the-optimal-portfolio-for-the-average-investor/. Accessed 17 Feb. 2018.

[30] James Snowden, "Does risk aversion give us a good reason to diversify our charitable portfolio?" ceppa.wp.st-andrews.ac.uk/files/2016/04/snowden_eac.ppt

[31] "Pandemic bonds, a new idea - Fighting disease with finance." 27 Jul. 2017, https://www.economist.com/news/finance-and-economics/21725589-world-bank-creates-new-form-finance-pandemic-bonds-new-idea. Accessed 17 Feb. 2018.

[32] "Economic instruments - IIASA PURE." http://pure.iiasa.ac.at/13904/1/Chapter4-ENHANCE.pdf. Accessed 18 Feb. 2018.

[33] "Loading Pricing of Catastrophe Bonds and Other Long-Dated ...." 31 Oct. 2016, https://arxiv.org/abs/1610.09875. Accessed 18 Feb. 2018.

[34] "Cyber Insurance - Springer Link." 27 Jun. 2017, https://link.springer.com/10.1007/978-3-319-06091-0_25-1. Accessed 18 Feb. 2018.

[35] Baum (2017): https://books.google.co.uk/books?hl=en&lr=&id=N3QzDwAAQBAJ&oi=fnd&pg=PT73&ots=tknk3i2--K&sig=FbSwIynpOmYuYae-IXB2XdJgslE&redir_esc=y#v=onepage&q&f=false

[36] "How to Give Money (and Get Happiness) More Easily." 4 Dec. 2017, http://www.mrmoneymustache.com/2017/12/04/how-to-give-money-and-get-happiness-more-easily/comment-page-3/. Accessed 17 Feb. 2018.

[37] "Top 15 Artificial Intelligence ETFs - ETF ...." 18 Feb. 2018, http://etfdb.com/themes/artificial-intelligence-etfs/. Accessed 18 Feb. 2018.

I took your spreadsheet and made a quick estimate for an AI mission hedging portfolio. You can access it here.

The model assumes:

In the model, the extra utility from the AI portfolio is equivalent to an extra 2% annual return.

My guess is that this is less than the extra returns one might expect if one believes the market doesn't price in short AI timelines sufficiently, but it makes the case for investing in an AI portfolio more robust.

Caveat: I did this quickly. I haven't thought very carefully about the choice of parameters, haven't done sensitivity analyses, etc.

As an extension to this model, I wrote a solver that finds the optimal allocation between the AI portfolio and the global market portfolio. I don't think Google Sheets has a solver, so I wrote it in LibreOffice. Link to download

I don't know if the spreadsheet will work in Excel, but if you don't have LibreOffice, it's free to download. I don't see any way to save the solver parameters that I set, so you have to re-create the solver manually. Here's how to do it in LibreOffice:

Given the parameters I set, the optimal allocation is 91.8% to the global market portfolio and 8.2% to the AI portfolio. The parameters were fairly arbitrary, and it's easy to get allocations higher or lower than this.

As of yesterday, my position on mission hedging was that it was probably crowded out by other investments with better characteristics[1], and therefore not worth doing. But I didn't have any good justification for this, it was just my intuition. After messing around with the spreadsheet in the parent comment, I am inclined to believe that the optimal altruistic portfolio contains at least a little bit of mission hedging.

Some credences off the top of my head:

[1] See here for more on what investments I think have good characteristics. More precisely, my intuition was that the global market portfolio (GMP) + mission hedging was probably a better investment than pure GMP, but a more sophisticated portfolio that included GMP plus long/short value and momentum had good enough expected return/risk to outweigh the benefits of mission hedging.

EDIT: I should add that I think it's less likely that AI mission hedging is worth it on the margin, given that (at least in my anecdotal experience) EAs already tend to overweight AI-related companies. But the overweight is mostly incidental—my impression is EAs tend to overweight tech companies in general, not just AI companies. So a strategic mission hedger might want to focus on companies that are likely to benefit from AI, but that don't look like traditional tech companies. As a basic example, I'd probably favor Nvidia over Google or Tesla. Nvidia is still a tech company so maybe it's not an ideal example, but it's not as popular as Google/Tesla.

Very cool - thanks for doing this.

I agree that EA-related resources are skewed towards the US tech sector (see Ben Todd's recent post) and that should definitely be taken into account.

Thanks for making this model extension!

I believe the most important downside to a mission hedging portfolio is that it's poorly diversified, and thus experiences much more volatility than the global market portfolio. More volatility reduces the geometric return due to volatility drag.

Example case:

In geometric Brownian motion, arithmetic return = geometric return + stdev^2 / 2. Therefore, the geometric mean return of the AI portfolio is 5% + 15%^2/2 - 30%^2/2 = 1.6%. If we still assume a 20% return to AI stocks in the short-timelines scenario, that gives 1.3% return in the long-timelines scenario. And the annual return thanks to mission hedging is -1.1%.

(I'm only about 60% confident that I set up those calculations correctly. When to use arithmetic vs. geometric returns can be confusing.)
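
The volatility-drag arithmetic above can be checked with a short Python sketch. The inputs (a 5% geometric market return, 15% market volatility and 30% AI-portfolio volatility) are read off the formula in the comment rather than taken from a stated parameter list, and the function names are hypothetical, so treat all of them as assumptions. The formula also implicitly gives both portfolios the same arithmetic return, which the sketch makes explicit.

```python
def arithmetic_from_geometric(geometric_return, stdev):
    """Arithmetic mean return implied by a geometric return under GBM."""
    return geometric_return + stdev ** 2 / 2


def geometric_from_arithmetic(arithmetic_return, stdev):
    """Geometric mean return after subtracting volatility drag."""
    return arithmetic_return - stdev ** 2 / 2


# Assumed inputs, inferred from the formula in the comment above.
MARKET_GEOMETRIC = 0.05   # global market portfolio, geometric return
MARKET_STDEV = 0.15       # global market portfolio volatility
AI_STDEV = 0.30           # less diversified AI portfolio volatility

# Assumption: both portfolios share the same arithmetic return,
# so the AI portfolio only differs by its larger volatility drag.
shared_arithmetic = arithmetic_from_geometric(MARKET_GEOMETRIC, MARKET_STDEV)
ai_geometric = geometric_from_arithmetic(shared_arithmetic, AI_STDEV)

print(f"shared arithmetic return: {shared_arithmetic:.4f}")
print(f"AI portfolio geometric return: {ai_geometric:.4f}")
```

Under these inputs the AI portfolio's geometric return comes out at roughly 1.6%, matching the figure in the comment. The later 1.3% and -1.1% figures depend on spreadsheet parameters that are not listed here, so the sketch does not try to reproduce them.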

Of course, you could also tweak the model to make mission hedging look better. For instance, it's plausible that in the short-timeline world, money is 100x more valuable instead of 10x, in which case mission hedging is equivalent to a 24% higher return even with my more pessimistic assumption for the AI portfolio's return.

Yeah, in my model, I just assumed lower returns for simplicity. I don't think this is a crazy assumption – e.g., even if the AI portfolio has higher risk, you might keep your Sharpe ratio constant by reducing your equity exposure. Modelling an increase in risk would have been a bit more complicated, and would have resulted in a similar bottom line.

I don't really understand your model, but if it's correct, presumably the optimal exposure to the AI portfolio would be at least slightly greater than zero. (Though perhaps clearly lower than 100%.)

To be clear, my model is exactly the same as your model, I just changed one of the parameters—I changed the AI portfolio's overall expected return from 4.7% to 1.3%.

It's not intuitively obvious to me whether, given the 1.3%-return assumption, the optimal portfolio contains more AI than the global market portfolio. I know how I'd write a program to find the answer, but it's complicated enough that I don't want to do it right now.

(The way you'd do it is to model the correlation between the AI portfolio and the market, and set your assumptions such that the optimal value-neutral portfolio (given the two investments of "AI stocks" and "all other stocks") equals the global market portfolio. Then write a utility function that assigns more utility to money in the short-timelines world and maximize that function where the independent variable is % allocation to each portfolio. You can do this with Python's scipy.optimize, or any other similar library.)

EDIT: I wrote a spreadsheet to do this, see this comment
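
A heavily simplified sketch of the procedure described in the parenthetical above might look as follows. It is not the spreadsheet's model: every parameter value is hypothetical, `mean - variance / 2` is used as a stand-in for expected log growth, and the calibration step (choosing assumptions so that the value-neutral optimum equals the global market portfolio) is skipped. A plain grid search keeps the example dependency-free; `scipy.optimize.minimize_scalar` with `method="bounded"` could replace it.

```python
def portfolio_variance(w, sd_ai, sd_mkt, corr):
    """Variance of a portfolio holding w in AI stocks and 1 - w in the market."""
    return (w ** 2 * sd_ai ** 2
            + (1 - w) ** 2 * sd_mkt ** 2
            + 2 * w * (1 - w) * corr * sd_ai * sd_mkt)


def expected_utility(w, p_short, value_multiplier, params):
    """Probability- and value-weighted approximate log growth across scenarios."""
    var = portfolio_variance(w, params["sd_ai"], params["sd_mkt"], params["corr"])
    scenarios = [
        # (probability, value of a dollar, AI portfolio arithmetic return)
        (p_short, value_multiplier, params["ai_return_short"]),
        (1 - p_short, 1.0, params["ai_return_long"]),
    ]
    utility = 0.0
    for prob, money_value, ai_return in scenarios:
        mean = w * ai_return + (1 - w) * params["mkt_return"]
        utility += prob * money_value * (mean - var / 2)
    return utility


def optimal_ai_weight(p_short, value_multiplier, params, steps=1000):
    """Grid search over AI allocations between 0% and 100%."""
    grid = [i / steps for i in range(steps + 1)]
    return max(grid, key=lambda w: expected_utility(w, p_short, value_multiplier, params))


# Hypothetical parameters, chosen only to make the sketch run.
PARAMS = {
    "mkt_return": 0.06,       # arithmetic market return in both scenarios
    "ai_return_short": 0.20,  # arithmetic AI return if timelines are short
    "ai_return_long": 0.04,   # arithmetic AI return if timelines are long
    "sd_ai": 0.30,
    "sd_mkt": 0.15,
    "corr": 0.7,
}

hedged = optimal_ai_weight(p_short=0.05, value_multiplier=10, params=PARAMS)
neutral = optimal_ai_weight(p_short=0.05, value_multiplier=1, params=PARAMS)
print(f"AI weight when money is 10x as valuable in short timelines: {hedged:.3f}")
print(f"AI weight with no mission-hedging motive: {neutral:.3f}")
```

With these made-up numbers the value-neutral investor holds no extra AI exposure, while the mission hedger holds an interior allocation. The size of that allocation is very sensitive to the assumed probability, value multiplier and returns, which is the same caveat the thread raises about the spreadsheet.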

I can't follow this either but a study cited in Radical Markets suggests that a randomly chosen portfolio of as few as fifty stocks achieves 90% of the diversification benefits available from full diversification across the entire market.

Given that FAANG's market cap alone is already $3 trillion and accounts for almost 10% of the U.S. stock market's total market capitalization of $31 trillion, and that you could diversify further than this, wouldn't you get quite a lot of the diversification benefits?

50 randomly-chosen stocks are much better diversified than 50 stocks that are specifically selected for having a high correlation to a particular outcome (e.g., AI development).

This paper provides some more in-depth explanation of what I was talking about with the math. It's fairly technical, but it doesn't use any math beyond high school algebra/statistics.

The key point I was making is that, if markets are efficient, then you shouldn't expect a 5% (or even 4.7%) geometric mean return from the AI portfolio. Instead, you should expect more like 1.3%. I might have messed up some of the details, but I'm confident that the geometric return for an un-diversified portfolio in an efficient market is meaningfully lower than the global market return. This is not to say that mission hedging is a bad idea, just that this is an important fact to take into account.

Very interesting- thanks for elaborating!

@Jonas: I think your model is interesting, but if we define transformative AI like OpenPhil does ("AI that precipitates a transition comparable to (or more significant than) the agricultural or industrial revolution."), and you invest for mission hedging in a diversified portfolio of AI companies (and perhaps other inputs such as hardware), then it seems conceivable to me to have much higher returns - perhaps 100x of crypto? This is the basic idea of mission hedging for AI, and it is in line with my prior. I think this difference in returns might be why I find the result of your model, that mission hedging wouldn't have a bigger effect, surprising.

This piece provides an IMO pretty strong defense of divestment: https://sideways-view.com/2019/05/25/analyzing-divestment/

Do you agree, and if so (even to some extent), how does it change the conclusions of this article?

I think I might not really understand Paul’s argument completely, but I really value his opinion generally, so I think more people should look into this more (but he also said he meant to write a new version soon).

Having said that, I still think divestment is not worth it for EAs and I still believe mission hedging is a better strategy, for four reasons:

Cool, thanks for the reply! Strong-upvoted.

Regarding #1 and #2, so far I found Paul's line of argument more convincing, but I have only followed the discussion superficially. But points #3 and #4 seem pretty strong and convincing to me, so I'm inclined to conclude that mission hedging is indeed the stronger consideration here.

For AI risk, #3 might not apply because there's no divestment movement for AI risk and tech giants are large compared to our philanthropic investments. For #4, using the same 10:1 ratio, we'd be faced with the choice between sacrificing around $10 billion to reduce the largest tech giants' output by 1%, or do something else with the money. We can probably do better than reducing output by 1%, especially because it's pretty unclear whether that would be net positive or negative.

My understanding is that 10x leverage would also mean ~10x cost (from forgone diversification).

Hi Everybody!

Did anyone take Hauke's advice and invest in "[c]orporations that . . . sell . . . factory farmed meat"?

If so, please reach out to me!!

Legal Impact for Chickens has a unique opportunity for you to help animals! ❤️

Sincerely,

Alene & LIC 🐥⚖️

PS this post is nonprofit attorney advertising brought to you by Legal Impact for Chickens, 2108 N Street, # 5239, Sacramento CA 95816-5712. We represent our clients for FREE. We aren't trying to sell you anything. We just want your help. Learn more here.

Hi alene! I think very few people will see this comment on a post from 6 years ago. You might have more luck posting this as a quick take (on the homepage, below the posts)

Ooh thank you Lorenzo!!! Will do!

The point I would most like to emphasise is that it's often unclear what will happen to an asset when cost-effectiveness goes up. If you're confident it'll go up at that time, you buy/overweight it. If you're confident it'll go down at that time, you sell/underweight it. If it could go either way, this approach is weaker. Most discussion I have seen on this topic assumes that the 'evil' asset can be expected to move in the same direction as cost-effectiveness. Finding something with reliable covariance in either direction seems like it might be most of the challenge.

For more detail on that, here are some notes on the most valuable insights and most significant errors of the original Federal Reserve paper.

My guess is that the best suggestions from this post appear in 'Applications outside of investment'. These do not fall prey to the abovementioned issues since the mechanisms are different to the investment case, directly exploiting the extra power one gains from being on the inside of an organisation rather than correlation/covariance.

(I might as well note that this comment represents my views on the matter, and no-one else's, while the main post represents the views of others, and not necessarily mine.)

I thought this was super interesting, thanks Hauke. The question that sprang to mind: in what circumstances would it do more good to engage in mission hedging vs trying to maximise expected returns?

Great question!

In theory, mission hedging can always beat maximizing expected returns in terms of maximizing expected utility.

In practice, I think the main considerations here are a) whether you can find a suitable hedge in practice and b) whether you are sufficiently certain that a cause is important, because you give up the flexibility of being cause neutral and tie yourself financially to a particular cause. You can remain cause neutral by trying to maximize expected financial returns.

To me, the two most promising applications seem to be the following. The first is AI safety, where people are often quite certain that it is one of the most pressing causes (as per maxipok or preventing s-risk), and investing in AI companies seems plausible to me (but note Kit Harris's objections in the comment section here). The second is using mission hedging for one's career, by joining the military, the secret service, or an AI company for the reasons outlined above, i.e. historically people in the military have sometimes had outsized impact.

Okay, but can you explain why it would beat maximising expected returns?

Here's the thought: maximising expected returns gives me more money than mission hedging. That extra money is a pro tanto reason to think the former is better.

However, mission hedging seems to have advantages, such as in shareholder activism: if evil company X makes money, I will have more cash to undermine it, and other shareholders will know this, thus suppressing X's value. This is a pro tanto reason to favour mission hedging.

How should I think about weighing these pro tanto reasons against one another to establish the best strategy? Apologies if I've missed something here, thinking this way is new to me.

Thanks for asking for clarification - I'm sorry I think I've been unclear about the mechanism. It's not really about shareholder activism, this is just an extra.

I've now added a few graphs and a spreadsheet as a toy model of why mission hedging beats a strategy that maximizes financial returns in the introduction. Can you take a look and see whether it's more clear now? Or maybe I'm missing your question.

It seems to me that for mission hedging to work, there needs to be a strong positive relationship between production and stock price. That is, when (say) a fossil fuel company produces more oil, its stock price goes up. That might happen, but it might not. Several things need to happen:

Step 3 seems very likely to happen in the long run, but steps 1 and 2 seem more uncertain to me, and I don't have a great understanding of the relevant economics. Do we have good reason to expect increased production to translate into stock returns? Or do we at least understand the circumstances under which it will or will not translate?

(Alternatively, we could look at the relationship between, say, oil production and the price of oil futures. This is a simpler relationship, but I'd guess the two numbers are basically uncorrelated. They will move together if demand changes, and will move oppositely if supply changes.)

I'm not sure about this section. You just say that the covariance isn't perfect, therefore we must directly invest in the relevant industry. Sure, the imperfect covariance is a reason why we should expect it to be better to invest in the relevant industry, but that doesn't mean that hedging in covariant industries is not good at all. You're talking about investments in the relevant industry as if they are a necessary condition for hedging to make sense, when in reality you just give a presumption that it's better than doing it in other ways. There is usually a chance that your investments will fail when the rest of the industry does well anyway, even if you invest directly in the target sector. And investing in a separated, covariant industry has a major benefit in that, not only is it not a reputational risk, but it isn't a directly harmful activity if the EMH is false.

Also, there is another necessary condition which is that the marginal value of donations must increase when the problem gets worse. Companies hedge because they have a greater need for money when their stocks fail. They don't really maximize expected profits, they are somewhat risk averse. Now do our donations go further when the problems in the world get worse? I'm inclined to say "yes", but I think it's a very small effect. I wrote about this and tested some estimate numbers with a very rudimentary calculation, and it seemed to me that the benefit was arguably too small to worry about, and it doesn't seem sufficient to outweigh the risk of robustly improving the performance of bad industries in the strategy you outline here.

http://effective-altruism.com/ea/16u/selecting_investments_based_on_covariance_with/

https://imgur.com/9of14il

Also, I think that generalizing to selecting investments based on covariance with charity value is the right framework to use here, instead of just looking at this sort of hedging.

These are excellent comments, thank you!

Regarding your first point on investing in industries that covary vs. are causally related: you're right that mission hedging can also work when there is just covariance. I think the main benefit of investing in companies that cause the bad activity is that it will have a tighter covariance than investing in companies that do not cause the bad activity, and we can know this ex ante. I do take your point that investing in companies that cause the bad activity is potentially more of a reputational risk (for some cases, for some people). I do not think the reputational risk argument applies much to either small investors or some investments such as investing in technology companies to hedge against AI risks. Now, your last point I find most interesting: if the efficient market hypothesis (EMH) doesn't hold, then it's better to invest in things that have a high covariance. I have a strong intuition that EMH holds for publicly traded stocks, especially for small investors, who don't make a big fuss about investing. Overall, I feel drawn to selecting investments that cause the bad activity due to higher certainty about high future covariance.

Yes, this crucially depends on whether there are increasing returns to scale for charitable interventions, which is another assumption. However, for me the assumption has intuitive appeal. I can imagine the effect size to be substantial in some cases (I now give a toy model in the beginning of the text). Think about the effect of public-good-type interventions where the cost-effectiveness scales pretty linearly with the problem (how many beings are affected).

I took a look at your calculation and I'm sorry to say that I don't quite understand it. However, based on the numbers that I see, I think that plugging different parameters into the model would also not be entirely unreasonable. But yes, I agree it might be interesting to have more empirical validation on this.

I think our disagreement might boil down to different intuitions about whether EMH holds on the stock market and whether there are returns to scale, i.e. whether a charity becomes more effective as the problem gets bigger. I think this is somewhat likely in some cases (but I'm not completely confident in this). So I'm still pretty convinced about this, to the point where I would advise people to seriously, though carefully, consider using mission hedging over your covariance approach.

I think investing in corporations that cause the bad activity is theoretically equivalent to this and in fact is based on finding a (distal) cause of charity effectiveness. However, as mentioned above it assumes increasing returns to scale.

But I just thought about finding a more proximal cause of charity effectiveness that can still be directly implemented on the stock market, and maybe this might be shorting the endowment of your favorite charity. Will MacAskill made a similar comment on your post, saying that it might be worth considering shorting FB if OpenPhil is still heavily reliant on it. Maybe your favourite charity has an endowment and doesn't itself hedge against risks (because its portfolio is not optimally diversified).

Okay, let me explain the spreadsheet better. I was comparing investments in an irrelevant market to investments in a relevant market. Each investment has a 1/3 chance of growing 0%, a 1/3 chance of growing 5%, and a 1/3 chance of growing 10%. The top spreadsheet shows the value of your money if you invest it in an irrelevant market. The bottom spreadsheet shows the value of your money if you invest it in a relevant market. For instance, if you invest in a relevant market and the relevant market doesn't change, then you get 0% on your investments and 0% change in donation value, so your donations are worth 100% of what they were worth before. If you invest in an irrelevant market, and both markets go up by 5%, then your donations would be worth 1 × 1.05 × 1.05 = 110.25% if the covariance is 100%, but here the covariance is 40%, so the calculation is 1 × 1.05 × 1.02 = 107.10%. Both numbers on the right are the average of the nine grid squares to the left, so they are the expected value of your investment after one year.

It's really really simplistic math but I just tried to get a sense of the scale of the effect, it turned out to be small.
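
The grid calculation described above can be approximated in a few lines of Python. This is a reconstruction under assumptions, not the original spreadsheet: the "40% covariance" is read as donations gaining 40% of the relevant market's growth, the irrelevant investment is treated as independent of the relevant market, and the relevant case is collapsed to three outcomes because the investment and the relevant market are the same thing. All names are hypothetical.

```python
from itertools import product

GROWTH_OUTCOMES = [0.00, 0.05, 0.10]  # each with probability 1/3
COVARIANCE_FACTOR = 0.4               # the "40%" from the comment (assumed meaning)


def donation_value_multiplier(relevant_growth):
    """Assumed link: donations gain 40% of the relevant market's growth."""
    return 1 + COVARIANCE_FACTOR * relevant_growth


def expected_value_irrelevant():
    """Investment growth is independent of the relevant market: nine cells."""
    cells = [(1 + inv) * donation_value_multiplier(rel)
             for inv, rel in product(GROWTH_OUTCOMES, repeat=2)]
    return sum(cells) / len(cells)


def expected_value_relevant():
    """Investment is the relevant market, so both factors move together."""
    cells = [(1 + g) * donation_value_multiplier(g) for g in GROWTH_OUTCOMES]
    return sum(cells) / len(cells)


irrelevant = expected_value_irrelevant()
relevant = expected_value_relevant()
print(f"irrelevant market: {irrelevant:.4%}")
print(f"relevant market:   {relevant:.4%}")
print(f"difference:        {relevant - irrelevant:.4%}")
```

Under these assumptions the two expected values differ by less than a tenth of a percentage point, which is in line with the conclusion that the effect is small. The gap equals the covariance factor times the variance of the growth outcomes, so wider growth outcomes or a stronger link would enlarge it.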

This is really fascinating. I think this is largely right and an interesting intellectual puzzle on top of it. Two comments:

1) I would think mission hedging is not as well suited to AI safety as it is to climate or animal activism because AI safety is not directly focused on opposing an industry. As has been noted elsewhere on this forum, AI safety advocates are not focused on slowing down AI development and in many cases tend to think it might be helpful, in which case mission hedging is counterproductive. I could also imagine a scenario in which AI problems also weigh down a company's stock. Maybe a big scandal occurs around AI that foreshadows future problems with AGI and also embarrasses AI developers.

2) As kbog notes, it doesn't seem clear that the growth in an industry one opposes means the marginal dollar is more effective. Even though an industry's growth increases the scale of a problem, it might lower its tractability or neglectedness by a greater amount.

Excellent comments- thanks!

I know people working on AI safety who would want to slow down progress in AI if it would be tractable. I actually think that it might be possible to slow down AI by reducing taxes on labor and increasing migration - see https://www.cgdev.org/blog/why-are-geniuses-destroying-jobs-uganda - which I think is a better idea than robot taxes: https://qz.com/911968/bill-gates-the-robot-that-takes-your-job-should-pay-taxes/ . Somebody should write about this.

But this is not really about speed: mission hedging might work in this case because the stock price of an AI company merely reflects the probability that the company will come up with better artificial intelligence earlier than the competition, not when.

Note that it is important to diversify within mission hedging, so weighing down one company's stock doesn't matter. I feel that any scandals that are not really related to the actual ability of the AI industry to produce better AI faster will likely have a very limited effect on the stock price. I'm reminded here of fatalities with self-driving cars, which have not rocked investors' confidence in investing in them. But even if they do, then that just means that self-driving cars are not as great as we thought they would be (presumably some fatalities are already 'priced in').

But yes, your point is valid in that 'you can't short the apocalypse', as I mention above. Overall, I actually think, all things considered, mission hedging might work best for AI risk scenarios.

Small nit: the links in the table of contents lead to a Google Doc, rather than to the body of the article.

Other than that, I love the article. Thanks for the giant disclaimer ;)

This seems like a really powerful tool to have in one's cognitive toolbox when considering allocating EA resources. I have two questions on evaluating concrete opportunities.

First, if I can state what I take to be the idea (if I have this wrong, then probably both of my questions are based on a misunderstanding): we can move resources from lower-need situations (i.e. the problem continues as default or improves) to higher-need situations (i.e. the problem gets worse) by investing in instruments that will be doing well if the problem is getting worse (which, because of efficient markets, is balanced by the expectation that they will be doing poorly if the problem is improving).

You mention the possibility that for some causes, the dynamics of the cause progression might mean hedging fails (like fast takeoff AI). Is another possible issue that some problems might unlock more funding as they get worse? For example, dramatic results of climate change might increase funding to fight it sufficiently early. While the possibility of this happening could just be taken to undermine the seriousness of the cause ("we will sort it out when it gets bad enough"), if different worsenings unlock different amounts of funding for the same badness, the cause could still be important. So should we focus on instruments that get more valuable when the problem gets worse AND the funding doesn't get better?

My other question was on retirement saving. When pursuing earning-to-give, doesn't it make more sense just to pursue straight expected value? If you think situations in which you don't have a job will be particularly bad, you should just be hedging those situations anyway. Couldn't you just try and make the most expected money, possibly storing some for later high-value interventions that become available?

Thank you for sharing this research! I will consider it when making investment decisions.

I replied about this before to one of your posts. Maybe I did not explain it well. In short, two guys wrote a paper about how combinations of heat and humidity above certain levels could kill everyone who lacks access to air conditioning in large regions of the world, or at least force them to evacuate their countries. Do you have any opinion on the priority level of understanding this compared with other climate causes?

Sorry, I missed your previous comment. I'm not an expert on climate change, and this is not necessarily the best place to discuss why this is neglected within effective altruism. I would recommend that you post your question to the Effective Altruism Hangout Facebook group and ask for an answer. The reason that you get downvoted is that you post on many different threads even though it's not really related to the discussion. Before posting, though, I would recommend reading this: https://80000hours.org/2016/05/how-can-we-buy-more-insurance-against-extreme-climate-change/

However, here are my two cents:

The 'wet bulb' phenomenon is known, and mortality from heatstroke is included in most assessments of the overall cost of climate change. See https://www.givingwhatwecan.org/cause/climate-change/ https://www.givingwhatwecan.org/report/climate-change-2/ https://www.givingwhatwecan.org/report/modelling-climate-change-cost-effectiveness/

Most scientists agree that the most likely outcome is not that the whole planet will be pretty much uninhabitable. However, there is a chance that this will be true, and extreme risks from climate change are a topic that many people in the EA community care about (see https://80000hours.org/problem-profiles/).