Upvotes or likes have become a standard way to filter information online. The quality of this filter is determined by the users handing out the upvotes.

For this reason, the archetypal pattern of online communities is one of gradual decay. People are more likely to join communities where users are more skilled than they are. As communities grow, the skill of the median user goes down. The capacity to filter for quality deteriorates. Simpler, more memetic content drives out more complex thinking. Malicious actors manipulate the rankings through fake votes and the like.

This is a problem that will get increasingly pressing as powerful AI models start coming online. To ensure our capacity to make intellectual progress under those conditions, we should take measures to future-proof our public communication channels.

One solution is redesigning the karma system in such a way that you can decide whose upvotes you see.

In this post, I’m going to detail a prototype of this type of karma system, which has been built by volunteers in Alignment Ecosystem Development. EigenKarma allows each user to define a personal trust graph based on their upvote history.

EigenKarma

At first glance, EigenKarma behaves like normal karma. If you like something, you upvote it.

The key difference is that in EigenKarma, every user has a personal trust graph. If you look at my profile, you will see the karma assigned to me by the people in your trust network. There is no global karma score.

If we imagine this trust graph powering a feed, and I have gamed the algorithm and gotten a million upvotes, that doesn’t matter; my blog post won’t filter through to you anyway, since you do not put any weight on the judgment of the anonymous masses.

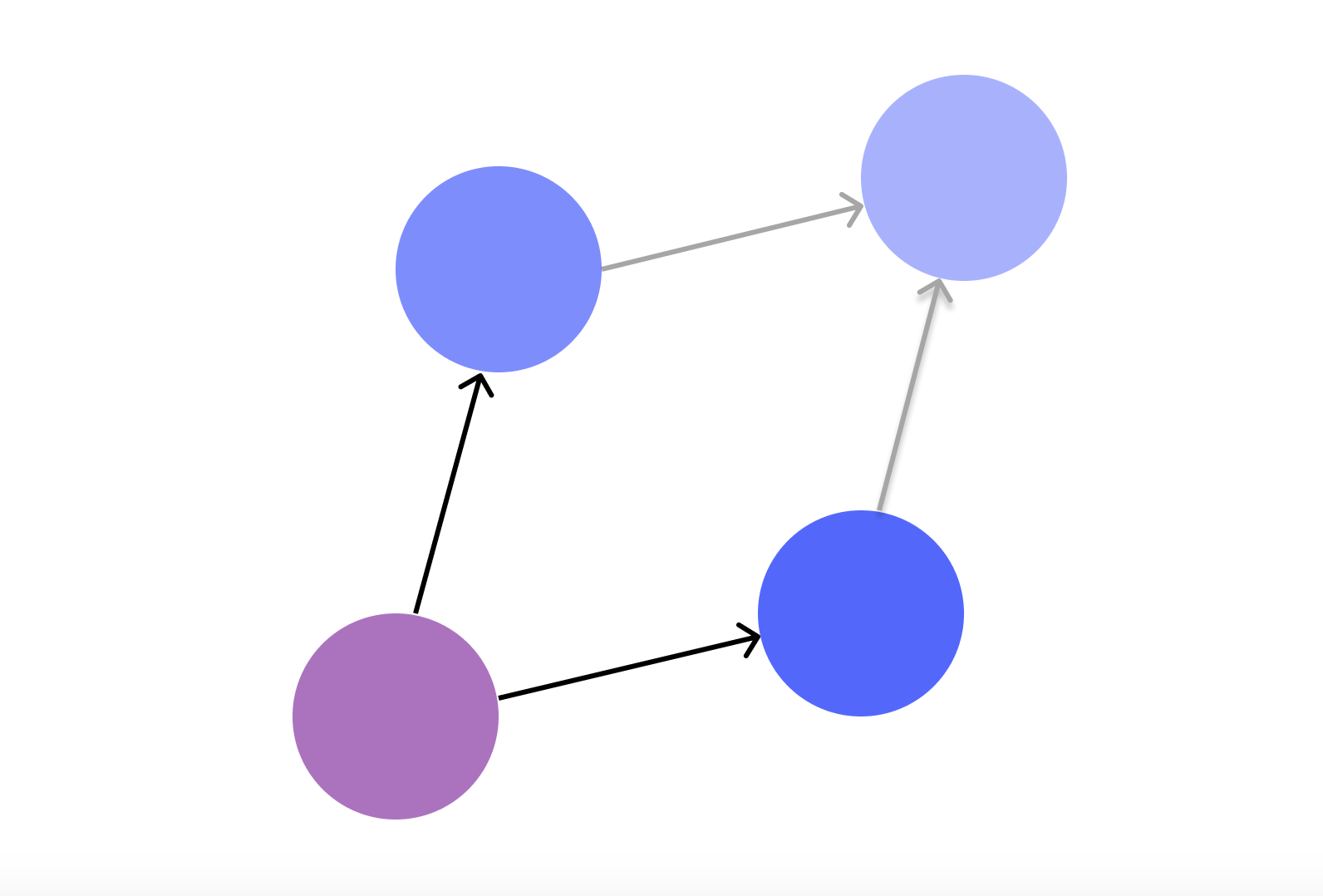
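To make the feed example concrete, here is a minimal sketch of ranking posts by personal trust instead of by raw vote count. It is an illustration under stated assumptions, not EigenKarma's actual code: the function `personal_scores`, the `viewer_trust` weights, and all user and post names are hypothetical.

```python
from collections import defaultdict

def personal_scores(upvotes, trust):
    """Score each post by summing the viewer's trust in its upvoters.

    upvotes: iterable of (voter, post) pairs.
    trust:   dict mapping voter -> the viewer's trust weight for that voter
             (hypothetical values; voters missing from the dict count as 0).
    """
    scores = defaultdict(float)
    for voter, post in upvotes:
        scores[post] += trust.get(voter, 0.0)
    return dict(scores)

# A gamed post with a million votes from accounts the viewer does not trust,
# and a post with two votes from people the viewer does trust.
upvotes = [(f"bot{i}", "gamed_post") for i in range(1_000_000)]
upvotes += [("alice", "good_post"), ("bob", "good_post")]
viewer_trust = {"alice": 0.6, "bob": 0.3}

scores = personal_scores(upvotes, viewer_trust)
# Rank posts for this viewer, highest personal score first.
feed = sorted(scores, key=scores.get, reverse=True)
```

With these made-up numbers, the million untrusted votes contribute nothing to the viewer's score, so the post with two trusted upvotes ranks first.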

If you upvote someone you don’t know, they are attached to your trust graph. This can be interpreted as a tiny signal that you trust them.

That trust will also spread to the users they trust in turn. If they trust user X, for example, you too trust X, a little.

This is how we intuitively reason about trust when thinking about our friends and the friends of our friends. The difference is that EigenKarma, being a database, can remember and compile more data than you can, so it can keep track of more than a Dunbar’s number of relationships. It scales trust. Karma propagates outward through the network from trusted node to trusted node.

Once you’ve given out a few upvotes, you can look up people you have never interacted with, like K., and see if people you “trust” think highly of them. If several people you “trust” have upvoted K., the karma they have given to K. is compiled together. The more you “trust” someone, the more karma they will be able to confer.
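The propagation described above can be sketched in a few lines. The post does not specify the algorithm EigenKarma actually uses, so the following swaps in a familiar stand-in: a damped, personalized-PageRank-style walk that restarts at the viewing user. The function name `personal_trust`, the `damping` value, and the example users are all assumptions made for illustration.

```python
def personal_trust(upvotes, me, damping=0.85, iterations=50):
    """Propagate trust outward from `me` along upvote edges.

    upvotes: dict mapping voter -> {recipient: number_of_upvotes}.
    Returns a dict of trust scores as seen by `me`.
    Assumption: each voter splits their trust among recipients in
    proportion to upvotes given; users who give no upvotes pass nothing on.
    """
    nodes = set(upvotes) | {r for given in upvotes.values() for r in given} | {me}
    trust = {n: 0.0 for n in nodes}
    trust[me] = 1.0
    for _ in range(iterations):
        nxt = {n: 0.0 for n in nodes}
        nxt[me] = 1.0 - damping  # the walk restarts at `me`
        for voter, given in upvotes.items():
            total = sum(given.values())
            if total == 0:
                continue
            for recipient, count in given.items():
                nxt[recipient] += damping * trust[voter] * count / total
        trust = nxt
    return trust

# "me" upvotes a three times and b once; a upvotes x and k; b upvotes k.
# "stranger" is not connected to "me" at all.
upvotes = {
    "me": {"a": 3, "b": 1},
    "a": {"x": 1, "k": 1},
    "b": {"k": 1},
    "stranger": {"z": 5},
}
t = personal_trust(upvotes, "me")
```

In this toy graph, `a` ends up more trusted than `b`, `k` collects karma from both of them, and `z` scores zero because nobody reachable from `me` has upvoted them. Each hop is damped, so trust fades with distance.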

I have written about trust networks and scaling them before, and there’s been plenty of research suggesting that this type of “transitivity of trust” is a highly desired property of a trust metric. But until now, we haven’t seen a serious attempt to build such a system. It is interesting to see it put to use in the wild.

Currently, you access EigenKarma through a Discord bot or the website. But the underlying trust graph is platform-independent. You can connect the API (which you can find here) to any platform and bring your trust graph with you.

Now, what does a design like this allow us to do?

EigenKarma is a primitive

EigenKarma is a primitive. It can be inserted into other tools. Once you start to curate a personal trust graph, it can be used to improve the quality of filtering in many contexts.

- It can, as mentioned, be used to evaluate content.

- This lets you curate better personal feeds.

- It can also be used as a forum moderation tool.

- What should be shown? Work that is trusted by the core team, perhaps, or work trusted by the user accessing the forum?

- Or an H-index, which lets you evaluate researchers by only counting citations by authors you trust.

- This can filter out citation rings and other ways of gaming the system, allowing online research communities to avoid some of the problems that plague universities.

- Extending this capacity to evaluate content, you can also use EigenKarma to index trustworthy web pages. This can form the basis of a search engine that is more resistant to SEO.

- In this context, you can have hyperlinks count as upvotes.

- Another way you can use EigenKarma is to automate who gets privileges in a forum or Discord server: whoever is trusted by the core team, or trusted by someone they trust.

- If you are running a grant program, EigenKarma might increase the number of applicants you can correctly evaluate.

- Researchers channel their trust to the people they think are doing good work. Then the grantmakers can ask questions such as: "conditioning on interpretability-focused researchers as the seed group, which candidates score highly?" Or, they'll notice that someone has been working for two years but no one trusted thinks what they're doing is useful, which is suspicious.

- This does not replace due diligence, but it could reduce the amount of time needed to assess the technical details of a proposal or how the person is perceived.

- You can also use it to coordinate work in distributed research groups. If you enter a community that runs EigenKarma, you can see who is highly trusted, and what type of work they value. By doing the work that gives you upvotes from valuable users, you increase your reputation.

- With normal upvote systems, the incentives tend to push people to collect “random” upvotes. Since likes and upvotes, unweighted by their importance, are what is tracked on pages like Reddit and Twitter, it is emotionally rewarding to make those numbers go up, even if it is not in your best interest. With EigenKarma this is not an effective strategy, and so you get more alignment around clear visions emanating from high-agency individuals.

- Naturally, if EigenKarma were used by everyone, which we are not aiming for, a lot of people would coalesce around charismatic leaders too. But to the extent that happens, these dysfunctional bubbles are isolated from the more well-functioning parts of the trust graph, since users who are good at evaluating whom to trust will not upvote into those bubbles, which severs the connections.

- EigenKarma also makes it easier to navigate new communities, since you can see who is trusted by people you trust, even if you have not interacted with them yet. This might improve onboarding.

- You could, in theory, connect it to Twitter and have your likes and retweets counted as updates to your personal karma graph. Or you could import your upvote history from LessWrong or the AI Alignment Forum. And these forums can, if they want to, use the algorithm, or the API, to power their internal karma systems.

- By keeping your trust graph separate from particular services, it could allow you to more broadly filter your own trusted subsection of the internet.
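As one illustration of the list above, the trust-filtered H-index could look roughly like this. It is a sketch under assumptions: the `trust` scores would come from your personal trust graph, and the function names, the `threshold` parameter, and the sample data are hypothetical.

```python
def h_index(citation_counts):
    """Standard h-index: the largest h such that h papers have >= h citations."""
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h

def trusted_h_index(papers, trust, threshold=0.0):
    """h-index that only counts citations by authors you trust.

    papers: dict mapping paper -> list of citing authors.
    trust:  dict mapping author -> your personal trust score for them
            (hypothetical; authors missing from the dict count as 0).
    """
    counts = [
        sum(1 for author in citing if trust.get(author, 0.0) > threshold)
        for citing in papers.values()
    ]
    return h_index(counts)

# Three papers propped up by a citation ring, plus a few trusted citations.
papers = {
    "p1": ["ring1", "ring2", "ring3", "alice"],
    "p2": ["ring1", "ring2", "ring3", "bob", "alice"],
    "p3": ["ring1", "ring2", "ring3"],
}
trust = {"alice": 0.6, "bob": 0.3}

raw = h_index([len(citing) for citing in papers.values()])
filtered = trusted_h_index(papers, trust)
```

Here the raw h-index is 3, while the trust-filtered one is 1, because the ring's citations carry no weight for this viewer.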

If you are interested

We’re currently test-running it on Superlinear Prizes, Apart Research, and in a few other communities. If you want to use EigenKarma in a Discord server or a forum, I encourage you to talk with plex on the Alignment Ecosystem Development Discord server. (Or just comment here and I’ll route you.)

There is work to be done if you want to join as a developer, especially optimizing the core algorithm’s linear algebra to be able to handle scale. If you are a grantor and want to fund the work, the lead developer would love to be able to rejoin the project full-time for a year for $75k (and open to part-time for a proportional fraction, or scaling the team with more).

We’ll have an open call on Tuesday the 14th of February if you want to ask questions (link to Discord event).

As we progress toward increasingly capable AI systems, our information channels will be subject to ever larger numbers of bots and malicious actors flooding our information commons. To ensure that we can make intellectual progress under these conditions, we need algorithms that can effectively allocate attention and coordinate work on pressing issues.

Hmm. I've watched the scoring of topics on the forum, and have not seen much interest in topics that I thought were important for you, because the perspective, the topic, or the users were unpopular. The forum appears to be functioning in accordance with the voting of users, for the most part, because you folks don't care to read about certain things or hear from certain people. It comes across in the voting.

I filter your content, but only for myself. I wouldn't want my peers, no matter how well informed, deciding what I shouldn't read, though I don't mind them recommending information sources and I don't mind recommending sources of my own, on a per source basis. I try to follow the rule that I read anything I recommend before I recommend it. By "source" here I mean a specific body of content, not a specific producer of content.

I actually hesitate to strong vote, btw, it's ironic. I don't like being part of a trust system, in a way. It's pressure on me without a solution.

I prefer "friends" to reveal things that I couldn't find on my own, rather than, for their lack of "trust", hide things from me. More likely, their lack of trust will prove to be a mistake in deciding what I'd like to read. No one assumes I will accept everything I read, as far as I know, so why should they be protecting me from genuine content? I understand the concern about spam. AI spam would be a real pain, all of it leading to how I need viagra to improve my epistemics.

If this were about peer review and scientific accuracy, I would want to allow that system to continue to work, but still be able to hear minority views, particularly as my background knowledge of the science deepens. Then I fear incorrect inferences (and even incorrect data) a bit less. I still prefer scientific research to be as correct as possible, but scientific research is not what you folks do. You folks do shallow dives into various topics and offer lots of opinions. Once in a while there's some serious research but it's not peer-reviewed.

You referred to AI gaming the system, etc., and voting rings or citation rings, or what have you. It all sounds bad, and there should be ways of screening out such things, but I don't think the problem should be handled with a trust system.

An even stronger trust system will just soft-censor some people or some topics more effectively. You folks have a low tolerance for being filters on your own behalf, I've noticed. You continue to rely on systems, like karma or your self-reported epistemic statuses, to try to qualify content before you've read it. You absolutely indulge false reasons to reject content out of hand. You must be very busy and so you make that systematic mistake.

Implementing an even stronger trust system will just make you folks even more marginalized in some areas, since EA folks are mistaken in a number of ways. With respect to studies of inference methods, forecasting, and climate change, for example, the posting majority's view here appears to be wrong.

I think it's baffling that anyone would ever risk a voting system for deciding the importance of controversial topics open to argument. I can see voting working on Stack Overflow, where answers are easy to test, and give "yes, works well" or "no, doesn't work well" feedback about, at least in the software sections. There, expertise does filter up via the voting system.

Implementing a more reliable trust system here will just make you folks more insular from folks like me. I'm aware that you mostly ignore me. Well, I develop knowledge for myself by using you folks as a silent, absorbing, disinterested, sounding board. However, if I do post or comment, I offer my best. I suppose you have no way to recognize that though.

I've read a lot of funky stuff from well-intentioned people, and I'm usually ok with it. It's not my job, but there's usually something to gain from reading weird things even if I continue to disagree with its content. At the very least, I develop pattern recognition useful to better understand and disagree with arguments: false premises, bogus inferences, poor information-gathering, unreliable sources, etc. A trust system will deprive you folks of experiencing your own reading that way.

What is in fact a feature must seem like a bug:

"Hey, this thing I'm reading doesn't fit what I'd like to read and I don't agree with it. It is probably wrong! How can I filter this out so I never read it again. Can my friends help me avoid such things in future?"

Such an approach is good for conversation. Conversation is about what people find entertaining and reaffirming to discuss, and it does involve developing trust. If that's what this forum should be about, your stronger trust system will fragment it into tiny conversations, like a party in a big house with different rooms for every little group. Going from room to room would be hard, though. A person like me could adapt by simply offering affirmations and polite questions, and develop an excellent model of every way that you're mistaken, without ever offering any correction or alternative point of view, all while you trust that I think just like you. That would have actually served me very well in the last several months. So, hey, I have changed my mind. Go ahead. Use your trust system. I'll adapt.

Or ignore you ignoring me. I suppose that's my alternative.

I understand, Henrik. Thanks for your reply.

Forum karma

The karma system works similarly to highlight information, but there are some edge cases. Posts appear and disappear based on karma from first page views. New comments that get negative karma are not listed, by default, in the new comments on the homepage.

This forum in relation to the academic peer review system

The peer review system in scientific research is truly different than a forum for second-tier researchers doing summaries, arguments, or opinions. In the forum there should be encouragement ...