Key points:

- Over the last year the Centre for Effective Altruism has had over a dozen meetings with UK policymakers and tested the waters on a range of policies we thought might have significant positive benefits for the world.
- In most cases we quickly found that they could not currently attract political support, for various reasons.
- Here we discuss two more we investigated in greater detail. These were both aimed at improving the depth of knowledge of policymakers about risks from new and upcoming technologies.
- These turned out to be premature, as they were policies to deal with issues about which there is no academic consensus on the nature of the problem, let alone the appropriate response. More groundwork needs to be done before there will be majority support for significant policy changes in these areas.
- Nonetheless we had successes in raising the profile of unprecedented technological risks in Government via a report we wrote which was widely circulated through several departments and read by senior civil servants, advisors, and politicians. We were also invited to contribute a chapter on existential risk to the Government Chief Scientist’s Annual Report.

Cross-posted from the Global Priorities Project

Introduction

During the past year the Centre for Effective Altruism has been engaged in ongoing policy discussions with policymakers in the UK Government. We generated hundreds of policy options and had many meetings with policymakers within the UK Government, including party political advisors, politicians and civil servants. This post describes some of what we learned from this process. These lessons will probably be obvious to anyone with experience of the policy-making process, but I hope that discussing them in more detail will be useful for people less familiar with policy making who attempt to do policy work going forwards. This work was primarily carried out by Toby Ord, Nick Beckstead, William MacAskill, Owen Cotton-Barratt, Robert Wiblin, Haydn Belfield, and myself.

One of our motivations for engaging in this work is that developed countries’ governments typically control budgets of the order of a trillion $US ($10^12) per year, and so moving a small portion of this budget could result in large amounts of money moved to effective causes. Paul Christiano discusses the potential value of engaging in politics in more detail here.

Over the past year we have devised many outlines for possible policies which aimed to, among other things, reduce poverty, reduce animal suffering, improve decision making, improve scientific progress, or reduce extinction risk. The policymakers thought that many of these policies were promising or interesting, but weren’t excited enough about any specific initiatives to really push for them. The overwhelming majority of the proposals that we presented were set aside by policymakers without further investigation. Most of those initiatives that were investigated further had problems associated with them, did not provide the benefits that we were initially hoping for, or simply weren’t promising enough for the policymakers to push for them publicly. While we will not be making the full list public at this time, there are a number of themes that emerged from the policies that were not taken up.

Political feasibility

The most common reason for policies to be rejected was that they were politically infeasible. We realised that the initiatives that were most likely to progress were those that would incur little to no financial or political cost to the politicians. It may be possible to overcome the preference for zero or low financial and political cost by building supporting coalitions, but I will save that discussion for later in this post.

Financial cost

Many of the policies and initiatives we suggested were rejected because they would cost Government money. Policies ruled out for this reason included:

- Funding research to improve forecasting
- Increasing funding for research into global catastrophic risks

Others were rejected because they would cost some voters money, even if they would save more money for others. Policies ruled out for this reason included:

- Taxing all non-free-range meat products
- Stopping subsidies of certain agricultural products

Political cost

Many policies were ruled out because of their opportunity cost in political capital, money and time. One way of conceiving of this objection is that the policymakers were unwilling to spend political capital because they had more promising ways in which to spend their time and money. This could be because the policy was weird, unpopular, or could be blocked by special interest groups. Policies that were ruled out for these reasons include:

- Creating more disaster shelters to protect against global catastrophic risks (too weird)
- Increasing immigration via various mechanisms (too unpopular)
- Setting minimum sizes for gestation crates for pigs (could be blocked by special interests)

Box 1: Over-simplistic policy analysis (or ‘why some scientists are bad at policy’)

During my years as a climate scientist and campaigner, I had many opportunities to see scientists attempting to engage in policy poorly. I would often see scientists:

One of the problems with this approach is that the scientists have not engaged in policy analysis, for example by:

Another problem is that they have not analysed the political landscape or initiated stakeholder engagement, for example by:

By moving straight from step three to four above we ignore key aspects of the policy-making process and end up promoting policies that are usually sub-optimal from an efficiency perspective, and are also politically infeasible.

One way in which scientists can engage with the political process successfully is by proposing their policy and ‘passing the baton’ on to policy analysts who can then compare it to the other policies on the table. But the scientist should not be surprised if their policy turns out to be sub-optimal or politically infeasible. Indeed this is probably the most likely outcome for a policy that has been proposed by someone who is not an expert in this policy area. However, if the policy analyst is in favour of the idea, a powerful partnership can develop in which the scientist acts as a scientific advisor to an ongoing policy development process. One example of this occurring is the role that Dr. Drew Shindell played in the development of the Climate and Clean Air Coalition, which was largely developed by the US State Department with Dr. Shindell acting as a scientific advisor to the process.

Note that Kingdon’s ‘multiple streams’ model of policy change suggests that ‘policy windows’ caused by the shifting political landscape could allow previously-rejected policies to be implemented. Under this model a scientist who is unable to tell when a policy window has opened could still have success by continuously promoting a policy over a long period of time and hoping that at some point a policy window may open. An example of where this may have occurred is in the story that is told about the creation of an observational programme to monitor ocean currents in the North Atlantic. These measurements had been desired by the scientific community for some time, but according to this story it was not until a scientist mentioned the problem of a potential slow-down in the ocean currents, and the need to monitor them, to then UK Prime Minister Tony Blair that funding became available for the programme.

A potentially more effective option for scientists who are continuously pushing for their policy would be to ally with a policy analyst with more expertise in analysing the political landscape, who could monitor the landscape and report back to the scientist if a policy window opens or has a chance of opening soon. This may have been the case with the Climate and Clean Air Coalition, which some commentators said was Obama’s attempt to claim a success in tackling climate change in the run up to the 2012 general election in order to woo back environmental voters who had been unimpressed by his record on climate change to date. This policy window may have caused policy analysts at the State Department to implement the long-standing policy recommendations of Dr. Shindell and others.

There is plenty more work that needs doing in identifying possible responses to problems that the effective altruism community has identified (i.e. in step two above), before the community can focus the majority of its efforts on proposing policies (see step three). However, if the effective altruism community would like to do some of this policy analysis, political landscape analysis and stakeholder engagement itself rather than relying on busy and potentially uninterested policy analysts and campaign strategists, we will need to develop expertise in these areas.

More initiatives that won’t work (yet)

Once the majority of our proposals had been ruled out due to not being clearly politically feasible, we had a much smaller number of options to investigate further. Of these, several failed because they were too advanced relative to our current understanding, and required a majority to support them. I discuss two examples in more detail below.

An Intergovernmental Panel on Biotechnological Risk

The proposal

Set up a body, modelled on the Intergovernmental Panel on Climate Change (IPCC), to synthesise knowledge on biotechnological risks.

The appeal

The IPCC had a huge impact on the climate change debate, and even won a Nobel Peace Prize "for their efforts to build up and disseminate greater knowledge about man-made climate change, and to lay the foundations for the measures that are needed to counteract such change". Presumably an Intergovernmental Panel on Biotechnological Risk could do the same for biotechnological risk?

The stumbling blocks

The IPCC was set up by the scientific community to illustrate to the world that there was a scientific consensus on climate change. The IPCC does not do original research, and does not progress the field other than by recording the state of climate science research every six or seven years. The consensus in the field on what to do about the risks arising from biotechnology is not as uniform as the state of climate science was when the IPCC was set up. There is still a wide range of views on questions such as whether certain types of potentially dangerous research should be undertaken, and whether creating novel dangerous pathogens in the lab is overall a net harm.

My understanding from Prof. Steve Stedman, former Assistant Secretary General to the United Nations, is that he spoke with over fifty experts about the question of setting up an IPCC-equivalent body in this area and concluded that there were no high-level experts willing to champion the initiative, nor was there a general feeling that the initiative would succeed, in part due to the lack of consensus.

IPCC authors are not paid for their time, and so they either volunteer their time, or use funding from other grants to cover their costs. This aspect also made the initiative unappealing to potential champions of the initiative.

Another problem is that the incentives are aligned rather differently. When climate scientists raised the alarm about climate change, it did not curtail their research freedom, and increased the future funding to climate science. If biotechnologists raise the alarm about biotech risk, their proposals will largely be imposing restrictions or large costs on their own and their peers’ research. Additionally, the overall effect may well be to reduce the total amount of funding to their area, though this is uncertain.

Prof. Stedman conducted the most in-depth investigation to date of the possibility of setting up an IPCC for biotechnology risk. He was convinced enough that it was unlikely to go ahead that after his investigation he returned the remainder of the funding that he had been given to set up the initiative and moved on to other things.

House of Commons/Lords Science and Technology Committee inquiry into unprecedented technological risk

The proposal

The House of Commons/Lords Science and Technology Committee would run an inquiry into unprecedented technological risk, particularly focusing on its impact on existential risk.

The appeal

The Government must respond in writing to the committee’s reports, and this could allow the committee to influence government policy on this issue. We also hoped that we could use this as a way to cause people to investigate this issue and develop possible responses.

The stumbling blocks

House of Commons/Lords committees such as this one cannot do original research, and largely synthesise the views of the witnesses that they call on. As there has been little research done into policy responses to existential risk, and there is no consensus in the area, it is unlikely that the committee would make strong recommendations to Government.

Additionally, in order to launch the inquiry we would need multiple sympathetic MPs or Lords on the committee. The inquiry would need to be led by a particularly sympathetic member, and it would take up a large amount of their time. This is time that could otherwise be put to potentially more useful initiatives.

This may be a useful initiative to take up in future when there has been more research done into responses to unprecedented technological risks and their impact on existential risk, particularly once those responses have been developed into prioritised policy proposals.

Communicating expert opinion

Both of these failed proposals are about communicating the majority view of experts to decision-makers and interested parties. They failed because the field of existential risk reduction is so new that the majority of experts do not yet hold similar views on the issue. For example, even within the Future of Humanity Institute there is significant internal disagreement on key elements of what we should be doing to reduce existential risk. This suggests there is much more research to be done in this area before we can start implementing many of our possible responses.

The rational model of policy analysis

There are many models of the policy-making process. Schattschneider’s ‘expansion of conflict’ model suggests that the policy-making process is largely determined by the extent to which parties are able to expand the process to include additional groups that are supportive of their point of view. One of the more popular models is Kingdon’s ‘multiple streams’ model, which suggests that policies are only implemented when ‘problems, policies and politics’ align to create a window of opportunity. Kingdon also introduces the idea of a ‘policy entrepreneur’ as someone who seeks to gain benefits in exchange for implementing policies and initiatives that are popular. Baumgartner and Jones focus on issue-definition and venue-shopping to suggest that issues usually follow a stable policy direction but that this stability is punctuated by periods of rapid, unpredictable change. Schneider, Ingram, Stone and others focus on issue framing as the factor which decides which policies are implemented and which are not. These are just a small subset of the more popular models of the policymaking process which have been proposed. These models cover different aspects of the decision-making process, and do not always agree where they overlap, as we might expect in a real-world process as complex as this one.

Simon’s rational model of policy analysis is another model that we can use to frame our discussion of the policymaking process. It is a simple model that I will discuss in more detail, as I believe it can be used to illustrate the broader picture of why many of our policies were not taken up. It was originally put forward by Herbert A. Simon to describe a process one could take to develop public policy. Below is an adaptation of Ian Thomas’s description of Simon’s model of policy development:

- Intelligence gathering: data and potential problems and opportunities are identified, collected and analyzed.
- Identifying a problem
- Identifying options to solve this problem
- Assessing the consequences of all options
- Relating consequences to values: with all decisions and policies there will be a set of values which will be more relevant (for example, economic feasibility and environmental protection) and which can be expressed as a set of criteria, against which performance (or consequences) of each option can be judged.
- Choosing the preferred option: given the full understanding of all the problems and opportunities, all the consequences and the criteria for judging options.

Stages in the model can occur concurrently and stages can be skipped.

Criticism of the rational model

Like any simple model, the ‘rational model’ ignores some important factors. For example, it ignores the political landscape and vested interests, and assumes the Government is a unitary rational actor in a static system. There is a literature of case studies showing both benefits and drawbacks of the rational model in different circumstances. Nonetheless it can be helpful to us in providing a hypothesis as to why our policies were unworkable, and where we might focus our attention in the future.

An adapted rational model

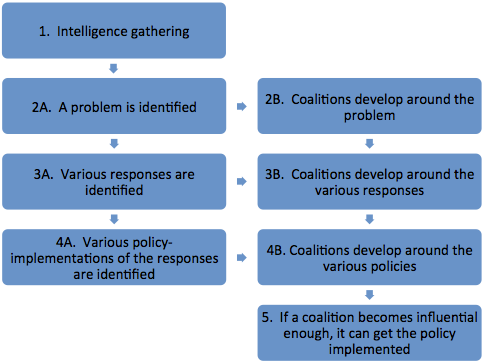

The rational model has been adapted in a number of ways throughout the literature. Here I suggest an extension that may help explain some of our experience. As with all models based on the rational model, it will have a number of shortfalls, but is hopefully still useful. It proposes that policy development largely goes through the following stages:

As in the original rational model, stages can occur concurrently and stages can be skipped. I make a distinction between responses (desired outcomes, e.g. reduce emissions), and policies (methods of achieving the desired outcomes, e.g. a cap and trade regime) as I did in the box above.

We can represent our progress towards a policy-based solution for a given problem in this model. For example, on existential risk due to artificial intelligence, the community is perhaps working on gathering intelligence and identifying the problem (stages 1 and 2A), with limited work on identifying responses and building coalitions around the problem (stages 3A and 2B). Thus it would not be as useful for the community to shift its attention to working on stages 4A, 3B, 4B or 5 at this point. There may be space for individuals to do pioneering work in these areas, but in general the model would suggest that they will find progress easier once more progress has been made in the previous stages.

On climate change mitigation (reducing greenhouse gas emissions) in the UK, most of the work that remains to be done is on developing policies, building coalitions around various responses and policies, and attempting to get those policies implemented (stages 4A, 3B, 4B and 5). This is one of the reasons I moved away from being a climate scientist, where I was predominantly working on stage one. This is not to say that there is no more work to be done in this area; further highlighting the dangers from climate change is still useful, just perhaps not as useful as well-directed work in the policy arena. In the US, it is plausible that the coalitions around the problem (stage 2B) are not yet strong enough, and so there is potentially more work to be done there.

We can view the intergovernmental panel on biotechnological risk as an attempt to build a coalition around responses to biotech risk once we had a coalition in place around the problem of biotech risk (moving from stage 2B to stage 3B). The problem in this case was that there was not a pre-existing coalition developed around the problem large enough to represent the majority view.

We investigated using the House of Commons/Lords Select Committee to do response identification, policy identification, and advocacy (stages 3A onwards); however, the committees are unable to carry out response and policy identification (stages 3A and 4A) as these are not within the Committees’ mandates. Indeed I cannot think of any examples of Government committees that do this form of response and policy research, except perhaps in extreme circumstances such as during times of crisis. We should perhaps view the Commons/Lords Committees as part of moving from a coalition around a specific policy to getting that policy implemented (moving from stage 4B to stage 5). We are not yet ready for this stage as policies and coalitions around responses (stages 4A and 3B) have not been adequately developed, let alone coalitions around policies (stage 4B).

Criticisms

There are many ways that this model may mislead us, particularly if it is followed in too formulaic a fashion. I will list just some of these criticisms below.

For example, by building visible coalitions we can also ignite opposition, and so need to be wary of the political landscape in order to improve the chances that our activities have an overall positive effect.

This model could also cause us to miss easy wins, in which the size of the coalition required to cause the policy to be implemented is surprisingly small, if we were focusing attention on earlier stages in the model.

This model doesn’t properly incorporate the possibility of larger-scale political change (such as a change of party after an election), nor does it account for external events (such as a war or various types of disaster) that can dramatically alter coalitions and the thresholds required for policy implementation.

Additionally, by simply modelling ‘coalitions’ the model ignores the different roles played by different actors such as the media, the public, interest groups, political elites, and others in the process. A more fine-grained model of the political landscape and stakeholder engagement will be useful in the later stages of the model.

So this is a simplistic model, yet it allows us to make hypotheses about where further work may be most useful to help move towards policy solutions to problems. These hypotheses can then be probed further using other methods.

Outcomes and next steps

Over the course of our policy engagement to date we have learned much, and also had some significant successes. Our report on unprecedented technological risks was widely distributed and read by politicians and civil servants across a number of departments. We were also invited to contribute a chapter on existential risk to the UK Government Chief Scientist’s Annual Report on risk.

Going forwards, we have decided to focus more of our effort on building coalitions around the problem and identifying solutions (stages 2B and 3A). One of the reasons for this is that we found it easier than expected to gain access to policymakers, and thus when we have more politically feasible responses to problems and more support for them, we think it will be possible for us to take these to policymakers. Nonetheless, we will continue to respond to policy opportunities in a more reactive fashion, and will continue to meet with senior policymakers and politicians as opportunities arise.

This work was carried out by the Global Priorities Project, which is currently fundraising to hire an additional researcher for the project and a full-time project manager who will also lead our outreach to Governments and foundations. If you would be interested in contributing to this effort please contact me or Robert Wiblin. We have more information on our current plans which you can read here.

Summary

Some of the key points I feel I have learned from our interactions with policymakers are:

- We need more research into potential responses to unprecedented technological risks.
- Once we have a better idea of the responses to unprecedented technological risks we would like to see implemented, we can start researching policy implementations of those responses.
- If we want to implement policies that incur financial cost or political capital, then we will need to start building political coalitions around the problems, responses and policies that we are championing.
- Among the people I spoke to within the effective altruism community, there is not much expertise in coalition-building.
- Initiatives that build upon a majority view among experts are not useful unless there is already a majority view among experts.
- It was easier than expected to discuss our ideas with senior civil servants and politicians.

Additional points not mentioned above, but which I felt I learned through this process:

- It would have been useful if we had been even more familiar with what the Government was already doing on unprecedented technological risks.
- Policymakers were more willing to accept that there was a problem in the area of unprecedented technological risk than I was expecting. This shifted the conversation over to specific policy responses, which is where I felt we had the least to say.
- Policymakers’ belief that there was a problem in the area of unprecedented technological risks was not sufficient to cause them to put energy into developing responses. It was expected that we were the people who would do this work.

Thanks to Nick Beckstead, Owen Cotton-Barratt, Steve Stedman, Toby Ord, Haydn Belfield, Robert Wiblin, William MacAskill and David Frame for the work, comments and conversations that went into this post.

Niel Bowerman is a co-founder and Director of Special Projects at the Centre for Effective Altruism. He was a member of Obama’s 2008 presidential election Energy and Environment Policy Team, and was Climate Science Advisor to the President of the Maldives. He has a PhD (DPhil) in physics from the University of Oxford.

I liked your box that gave concrete advice on how to improve policy suggestions. A lot of people spend a lot of time and energy focusing on what doesn't work, and it's nice to see some focus on what does work.

Have you put any thought into how to overcome the sorts of political obstacles that cause politicians to favor certain interest groups at the expense of a greater good (such as some agricultural subsidies)?

That's great news! I had previously assumed that political action in this area would be infeasible, but I'm happy to be wrong on this one.

I find the 'political entrepreneur' model useful here. It predicts that a politician would be willing to make changes to these sorts of policies once the balance of costs to them and benefits to them weighs in favour of changing it.

For example, take the common agricultural policy in the EU. If you change it, then you have 26m very angry European farmers, and large scale unemployment that you are labelled as responsible for. So the politician would need to create a mass movement or economic benefits that are clearly greater than these downsides in order for it to go through. Unfortunately people make much more noise about losses than about benefits, and so this is unlikely to change anytime soon.

Of course the political entrepreneur model is very simplistic here. It would take a huge coalition of politicians to make this happen. And you would need to get around all of the nationalistic worries that would occur from vast quantities of the EU budget not being allocated to countries that it had previously been allocated to. These are just a few of the many additional obstacles that would need to be overcome.

There is much more to be said about this though. If you are interested in reading more about how to do this practically, a rather non-evidence-based playbook that I used to use in my campaigning days is "How to win campaigns" by Chris Rose. On the more theoretical side, many of the books linked to in the article propose alternative models that can help illustrate the sorts of changes that would need to be made.

Perhaps this is a case where the best way to change public policy is to change public sentiment -> create a large enough political benefit to outweigh the special interests cost.

I think this will tend to be correct when the policy involves large costs as well as gains to society. For some of the policies we're interested in that could be right; some of them shouldn't need that.

To clarify, I'm not predicting that political action will be feasible. I'm merely predicting that it will be possible for us to gain access to policymakers again in the future. Especially once we have better responses and policy proposals.

Niel, thanks for writing up this post. I think it's really worthwhile for us to discuss challenges that we encounter while working on EA projects with the community.

I noticed that this link in this sentence is broken:

Thanks Nick. There seems to be a problem with the way the forum currently references the effective-altruism.com URL. I've directed the link to the post on the trikeapps site as a temporary workaround. It may break once the problem with the effective-altruism.com URLs is fixed.

My guess is that these links will be fine because the forum software takes any internal links in your posts and makes them 'relative'. Anyway, Trike will continue to work ironing out site bugs over the weekend and we'll aim to have things in order by Monday.