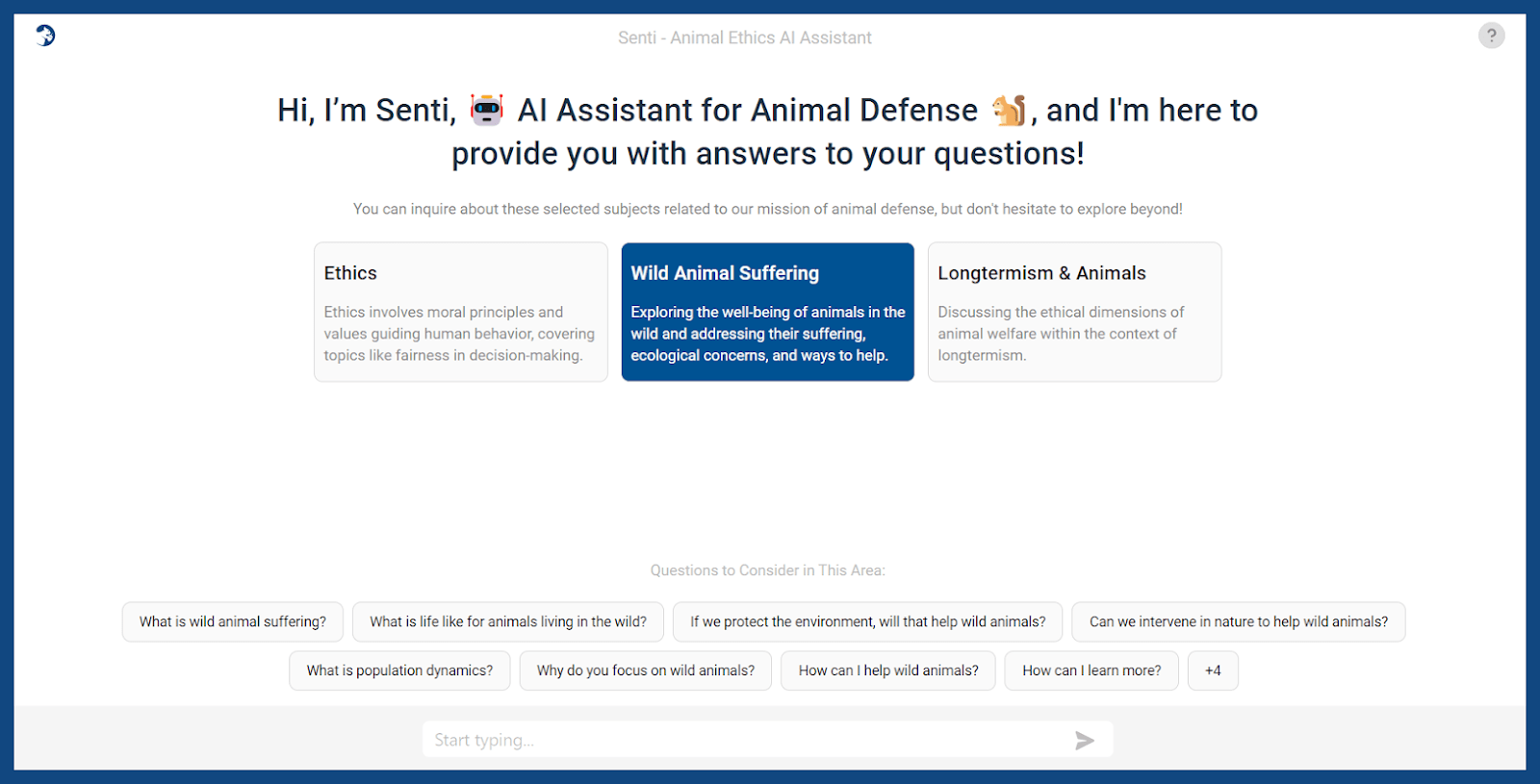

Animal Ethics has recently launched Senti, an ethical AI assistant designed to answer questions related to animal ethics, wild animal suffering, and longtermism. We at Animal Ethics believe that while AI technologies could pose significant risks to animals, they could benefit all sentient beings if used responsibly. For example, animal advocates can use AI to amplify our message and improve how we share information about animal ethics with a wider audience.

Today there is a lack of knowledge, not just among the general public but also among people sympathetic to nonhuman animals, about the basic concepts and arguments underpinning the critique of speciesism and animal exploitation, and the concern for wild animal suffering and future sentient beings. We hope this tool will help to change that! Many of these ideas are also unintuitive, so it helps people to be able to chat and ask follow-up questions in order to cement their understanding.

Senti is powered by Claude, Anthropic’s large language model (LLM), but it has been designed to reflect the views of Animal Ethics. We provided Senti with a database of carefully curated documents about animal ethics and related topics. Almost all of them were written by Animal Ethics, and we are now adding more sources. When you ask a question, Senti searches through the documents and retrieves the most relevant information to form an answer. After each answer, there are links to the sources of information so you can read more. We continually update Senti, and we’d love to hear your feedback on your experience.
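To make the search-and-retrieve step more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions, not Senti's actual implementation: the documents, the placeholder URLs, the word-overlap scoring, and the names `retrieve`, `build_prompt`, and `top_k` are all hypothetical. A production system would likely use a more capable search method, and the final prompt would be sent to the language model rather than printed.

```python
import re
from collections import Counter

# Hypothetical stand-in documents with placeholder URLs (not the real database).
DOCUMENTS = [
    {"title": "What is speciesism?", "url": "https://example.org/speciesism",
     "text": "Speciesism is discrimination against those who do not belong to a certain species."},
    {"title": "Wild animal suffering", "url": "https://example.org/wild-animals",
     "text": "Many wild animals suffer from disease, hunger, and harsh weather."},
    {"title": "Sentience", "url": "https://example.org/sentience",
     "text": "Sentience is the capacity to have positive and negative experiences."},
]


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def score(question, document):
    """Count document word occurrences that also appear in the question."""
    question_words = set(tokenize(question))
    counts = Counter(tokenize(document["title"] + " " + document["text"]))
    return sum(counts[word] for word in question_words)


def retrieve(question, documents, top_k=2):
    """Return up to top_k documents with a non-zero score, best match first."""
    ranked = sorted(documents, key=lambda d: score(question, d), reverse=True)
    return [d for d in ranked[:top_k] if score(question, d) > 0]


def build_prompt(question, passages):
    """Combine the retrieved passages and the question into one prompt string."""
    sources = "\n".join(f"- {p['title']}: {p['text']}" for p in passages)
    return f"Use only these sources:\n{sources}\n\nQuestion: {question}"


def source_links(passages):
    """Links shown after the answer so the reader can learn more."""
    return [p["url"] for p in passages]


if __name__ == "__main__":
    question = "Why do wild animals suffer?"
    passages = retrieve(question, DOCUMENTS, top_k=2)
    print(build_prompt(question, passages))
    print(source_links(passages))
```

The `top_k` parameter corresponds to the kind of setting that can be tuned in a system like this: how many pieces of information are retrieved for each question.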

Senti has been designed to discuss topics related to the wellbeing of all sentient beings, and we ask users to keep their conversations to topics related to helping animals and other sentient beings. We have also provided a list of 24 preset questions that you can use to explore different topics related to animal ethics.

When you chat with Senti for the first time, you’ll be presented with a consent form. It requests permission to save your conversation history. Saving your conversation history allows you and Senti to have a continuous conversation, with Senti remembering what you’ve already discussed. It also provides us with your chat history, which is anonymous. This will help us to improve the answers and know what new information to add. You do not have to give your consent to chat with Senti. If you decline, your chat history won’t be saved, but you can still ask questions.
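The consent behavior described above can be sketched roughly as follows. This is a simplified illustration, not Senti's actual code: the `ChatSession` class, the `answer_fn` callback, and the `echo_answer` stand-in are hypothetical names introduced for the example.

```python
class ChatSession:
    """A chat session that keeps history only if the user has consented."""

    def __init__(self, consent_given):
        self.consent_given = consent_given
        self.history = []  # list of (question, answer) pairs

    def ask(self, question, answer_fn):
        # Earlier turns are passed along only when the user has consented.
        context = list(self.history) if self.consent_given else []
        answer = answer_fn(question, context)
        # Without consent, nothing is saved, but the question is still answered.
        if self.consent_given:
            self.history.append((question, answer))
        return answer


def echo_answer(question, context):
    """Hypothetical stand-in for the assistant; reports how much context it saw."""
    return f"Answer to {question!r} with {len(context)} earlier turn(s) of context"


if __name__ == "__main__":
    with_consent = ChatSession(consent_given=True)
    with_consent.ask("What is sentience?", echo_answer)
    print(with_consent.ask("Can you give an example?", echo_answer))

    without_consent = ChatSession(consent_given=False)
    without_consent.ask("What is sentience?", echo_answer)
    print(without_consent.ask("Can you give an example?", echo_answer))
```

With consent, the second question is answered with the first exchange as context. Without consent, each question is answered on its own and no history is kept.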

We would like to extend special thanks to the team at Freeport Metrics, which provided extensive pro bono services to build the infrastructure, handle the technical setup, and design the UI for Senti. They conducted thorough testing and offered ongoing support, without which the project could not have been completed. We would also like to thank our volunteers, who have been helping test new prompts, new document sets, and different settings, such as how many pieces of information to retrieve for each question.

We are continually working on improving Senti by running independent tests with the new Claude 3 models. We expect to deliver an update in the coming months that provides longer and more accurate responses.

We hope Senti helps you learn a lot and makes it easier for you to share the information with others.

We are also planning to switch to Claude Haiku, a faster model for generating responses.