EA clearly doesn't know how to handle power dynamics, and until we figure this out, we should avoid (as much as possible) creating concentrations of power. I say this in full knowledge that avoiding concentration of power is not without cost.

Some examples of broken power dynamics:

- Owen Cotton-Barratt's severe mistakes seem to be largely downstream of not understanding power dynamics.

- I don't know the reason behind the CEA community health team's failure to understand the need to be fully separate from funding considerations, but my best guess is that a lack of understanding of power dynamics is involved.

- People not being able to trust that it's ok to post criticism of EA under their own names seems like a breakdown of power relations. For the record, I think the worry is well founded. "It's not good for your career to criticise powerful people" is the default outcome if you don't put in effort to mitigate this, and I don't see such effort.

- I have had several interactions with, and observations of, people who are better connected within EA than me, which have left me baffled by their lack of understanding of the experience of being a less connected EA. This keeps happening, but I'm no longer surprised when it does.

- A handful of lower-level community organisers have told me in private that their impression of CEA is that it is incompetent and/or unprofessional, but also that they have not spoken up about this because CEA is their sole source of funding.

What to do:

- Don't default to trusting CEA, 80k and other central orgs. Most of their power comes from your trust. Treat the word of high-status people the same as the word of any other EA.

- Don't donate to EA funds. We can't democratise billionaire money, for lots of reasons. But we can avoid centralising the money that starts out decentralised. Instead, decide for yourself where to donate, or donate to your local or national EA group, or join the donation lottery, or delegate your decision to someone you trust personally (not based on community standing).

I'm not accusing specific people of specific things. My current best model is that everything we see is what naturally happens when power is centralised. This is not about specific people, this is systemic. For example it's not the fault of the central orgs that too many people defer to them too much, that's on the rest of us.

I'm also not saying that no specific person is blameworthy. I'm just not getting into that discussion at all.

For me personally, the core of Effective Altruism is "it's not about you". Everything else follows from there.

This is very much in contrast to other cultures of altruism I have encountered, which focus very much on the mental state of the giver. When you stop questioning if you are pure and have the right motives, etc., and just focus on results, that's when you get EA.

But also, don't be 100% altruistic. Some of your efforts should be about you. If you only take care of yourself for instrumental reasons, you will systematically underinvest in yourself. So be genuinely egoistic with some part of your effort, where "be egoistic" just means "do whatever you want".

[Epistemic status: Mostly a rant, but I'm also open to the possibility that I'm missing something important about how other people communicate.]

When people write "we should..." about EA, what does this mean exactly?

Whenever I read this phrase I get the sense that the person is confused about how EA works. But maybe I'm the one who is missing something.

There are groups that are all about coordinated action, and for them it does make sense to make suggestions of the form "we should...". But EA is not like that. Coordinated action is not our thing, and I don't think it should be. We are not that type of movement.

I love that EA is to a large extent a do-ocracy. The way to get something done in a do-ocracy is not to suggest that "we should" but to say, "I'm starting this project. Anyone want to join?".

The main exception, where we're not a do-ocracy, is the funding and everything downstream from that, which is admittedly a large part of EA. If you want funding for your project you do need to convince others to donate to you. But I don't think the path to getting funding is to write "we should..." on the EA Forum. Maybe I'm wrong, in which case please tell me!

To avoid potential misunderstanding: I'm not making any normative claims about funding in this short form!

I'm more understanding of people who write "someone should...". I've done that. It usually doesn't work, but at least I can see where that would come from.

Ooo look!

Someone already said all the things I wanted to say, except even better. This is great. I feel instantly less annoyed. Thanks :)

EA Forum feature request

(I'm not sure where to post this, so I'm writing it here)

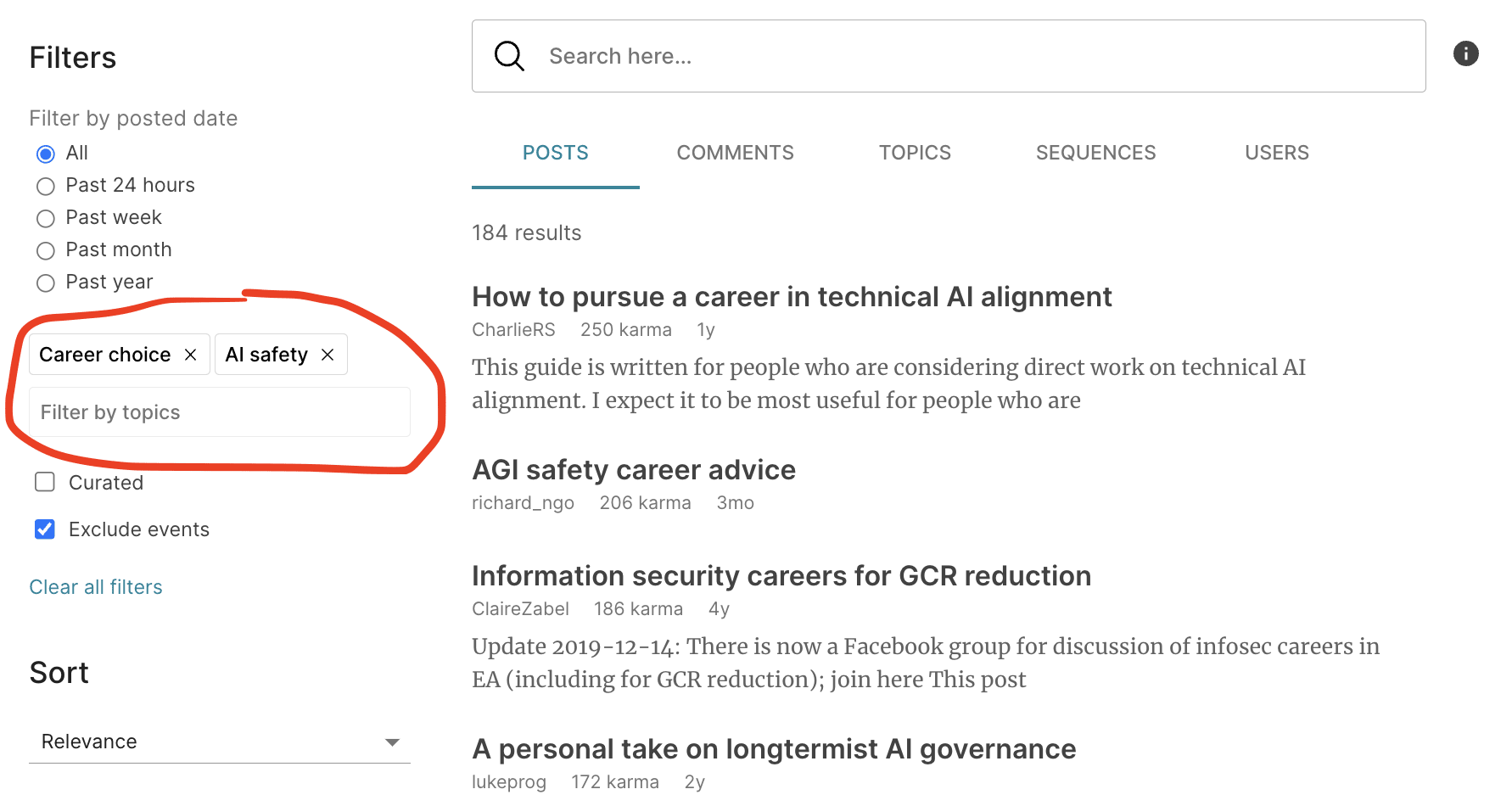

1) Being able to filter for multiple tags simultaneously. Mostly I want to be able to filter for "Career choice" + any other tag of my choice. E.g. AI or Academia to get career advice specifically for those career paths. But there are probably other useful combos too.

(Just for future reference, I think “EA Forum feature suggestion thread” is the designated place to post feature requests.)

Thanks for the feedback Linda! I believe you can accomplish this using the topic filters on our current search page, but please let me know if you run into any issues.

"Filter by topics" lets you search for and select any number of topics, and the results will show anything that has all of the selected topics. Hope that helps!
