I would like to propose a simple idea to improve the expected returns of donations: conditional donations. Suppose you want to donate a lump sum of money. With a conditional donation, you would be able to select conditions that determine where your donation ultimately goes. The motivation I see for conditional donations is that donors may be willing to donate to different charities depending on how much other people are or would be donating. Someone might wish to donate to charity A only if charity A receives, in total, over $X in donations, including their own contribution. If the donation would not push charity A over that threshold, the donor might prefer donating to charity B.

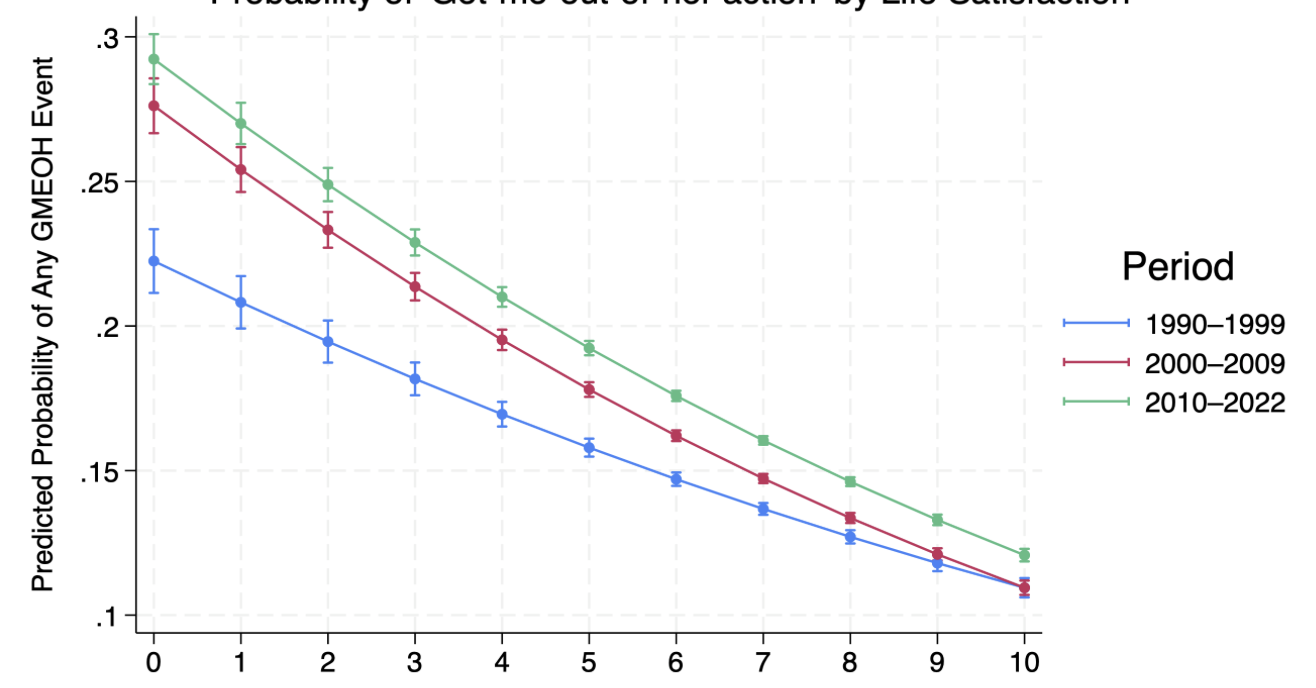

Although conditional donations might have other interesting uses, I see the force of conditional donations as coming from this possibility of setting funding thresholds that change donors’ preferences, so I will focus on why this kind of possibility is valuable. Conditional donations would allow us to better allocate resources to take advantage of non-linear returns, or economies/diseconomies of scale. That is, the per-dollar returns, in terms of impact, of donations to a specific charity are almost certainly not linear. Depending on each charity’s circumstances, the return per dollar donated might increase or decrease with additional donations. The graph below compares a charity facing decreasing returns (red) and a charity facing increasing returns (blue).

Let’s assume both charities currently have zero funding. If you wanted to donate $50, you would achieve a higher expected impact by donating to the charity with decreasing returns (red) in this example. But if you had $2000 to donate, you would achieve a higher expected impact by donating to the charity with increasing returns (blue).
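To make the comparison concrete, here is a minimal sketch with two made-up impact curves. The functional forms and constants (`red_impact`, `blue_impact`) are purely illustrative assumptions chosen to reproduce the qualitative shape described above; they are not estimates for any real charity.

```python
import math


def red_impact(dollars: float) -> float:
    """Hypothetical concave curve: decreasing returns per extra dollar."""
    return 10 * math.sqrt(dollars)


def blue_impact(dollars: float) -> float:
    """Hypothetical convex curve: increasing returns per extra dollar."""
    return dollars ** 2 / 5000


def better_charity(dollars: float) -> str:
    """Return which hypothetical charity yields more impact for a lone donation,
    assuming both start from zero funding."""
    return "red" if red_impact(dollars) > blue_impact(dollars) else "blue"


for amount in (50, 2000):
    print(amount, better_charity(amount))
```

With these assumed curves, the small donation favors the red charity and the large one favors the blue charity, which is the crossover the example relies on.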

So suppose that some charity A has been picking low-hanging fruit for some time and it is now increasingly hard for them to create impact, so their expected-lives-saved curve has a concave-down shape (like the red curve above). Charity B, on the other hand, is just starting out, and we have good reason to believe that a small donation to them may be less impactful than a small donation to A. However, charity B needs a total of $1,000,000 in donations in order to scale up by, say, building a new life-saving facility, after which their impact would skyrocket. The following graph represents these assumptions.

So, conditional donations would allow you to maximize the expected impact of your donation by donating to B only if your donation would get B to the $1,000,000 they need to scale up. Otherwise, your donation would go to A, where its marginal impact would be greater. In practice, I imagine you would set a condition of the sort: donate to B if, at or before [enter date here], B has accumulated at least $1,000,000 minus [amount you want to donate] in donations or conditional donations; otherwise donate to A.
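The condition just described can be sketched as a small routing rule. This is only an illustration: the function name `route_donation` and its parameters are hypothetical, and I am assuming that reaching the threshold exactly counts as meeting it.

```python
def route_donation(amount: float, pledged_to_b: float,
                   threshold: float = 1_000_000) -> str:
    """Decide where a single conditional donation goes.

    pledged_to_b is assumed to be everything B has accumulated from other
    donors (donations plus conditional donations) as of the chosen deadline.
    Returns "B" if this donation would bring B to the threshold, else "A".
    """
    return "B" if pledged_to_b + amount >= threshold else "A"


# A $100,000 donation when others have already committed $900,000 to B:
print(route_donation(100_000, 900_000))
# The same donation when B is still $50,000 short even with it:
print(route_donation(100_000, 850_000))
```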

In addition to allowing you to individually maximize the expected returns on your donation, the conditional donation mechanism could allow for more efficient coordination. If many people set similar conditions for their donations, then the condition to donate to B, for example, might be met via other people’s conditions. That is, there could be 10 individuals, each intending to donate $100,000, who would donate to B if their donation got B to $1,000,000. Then all those individuals’ conditions would be met because of each other’s conditions, and all would donate to B at the same time.
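One way a platform might resolve such mutually dependent conditions is sketched below. This is a hypothetical approach, not a description of any existing system: it starts by assuming every pledge goes to B, then repeatedly drops pledges whose threshold is not met by the remaining total, until the set is stable. The names `Pledge` and `resolve_pledges` are my own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pledge:
    donor: str
    amount: float
    threshold: float  # minimum total B must reach for this pledge to go to B


def resolve_pledges(pledges, unconditional_to_b=0.0):
    """Return (pledges that go to B, B's resulting total).

    Starts from the optimistic assumption that all pledges go to B and
    removes those whose condition fails, repeating until nothing changes.
    Pledges not returned would fall back to the donor's alternative (e.g. A).
    """
    active = list(pledges)
    while True:
        total = unconditional_to_b + sum(p.amount for p in active)
        still_ok = [p for p in active if total >= p.threshold]
        if len(still_ok) == len(active):
            return active, total
        active = still_ok


# Ten donors of $100,000 each, all requiring B to reach $1,000,000.
donors = [Pledge(f"donor{i}", 100_000, 1_000_000) for i in range(10)]
to_b, total = resolve_pledges(donors)
print(len(to_b), total)

# With only nine such donors, no condition is met and nothing goes to B.
to_b, total = resolve_pledges(donors[:9])
print(len(to_b), total)
```

Starting from the full set and pruning finds the largest group of pledges whose conditions are all satisfied together, which matches the ten-donor example: no single donor reaches the threshold alone, but all of them do jointly.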

The possibility of conditional donations might also affect EA-aligned charities, which could themselves aid donors in making informed decisions by estimating how their ability to create impact changes with scaling up.

In terms of the limitations of conditional donations, I expect that similar coordination can be achieved by donating money directly to managed funds such as Effective Altruism Funds, which probably already allocate money taking into account the sort of non-linear returns behavior I am trying to address. Additionally, it might be that the reason something of this sort doesn’t already exist is that there isn’t sufficient information to allow us to derive curves of expected impact in the way I’ve shown above.

I would love to hear people’s thoughts about how to implement this idea as well as potential problems with it. I should say that this seemed like such an obvious idea to me that I thought someone else might have written about it, but I found no other mention in my research.

Two short points.

First, there was a lot of work by Robin Hanson 15-20 years ago on conditional contracts and prediction-based contracts that might be relevant.

Second, a key issue with this sort of donation is that the organizations themselves are left with a lot of uncertainty until the contract resolves. If the contracts are really transparent, they might have some idea what is happening, but it seems likely that tons of such contracts would lead to really messy and highly uncertain future cash flows that would make planning much harder. I'm unsure if there's a clear way to fix this, but it's probably worth thinking about more. (The alternative is for people to just wait on making the donation, which is not at all transparent and makes precommitment and coordination around joint giving impossible, but obviously requires much less complexity.)