tl;dr: Metaculus is an EA-adjacent forecasting platform. By my estimation, most Metaculus questions fail to directly influence decisions, but a few fall at the sweet spot between large scope, high decision relevance, and good fit for forecasters. That said, perhaps only a fraction of Metaculus’ impact is captured by the impact of their questions. In any case, the EA community could perhaps use evaluations of the value of Metaculus questions to incentivize it to produce more valuable questions.

Overall impressions

The holy grail for Metaculus would be questions on important topics which the kind of person who encounters Metaculus is in a position to do something about.

However, there is a tension between questions being decision-relevant and having a large scope because smaller entities might be more amenable than larger ones to being influenced. So it could turn out that the impact sweet spot is asking intimately decision-relevant questions that small organizations are willing to listen to. Conversely, as Metaculus grows its audience, perhaps questions with a large scope which change small decisions for many people might be more valuable.

But a large number of Metaculus questions fall into neither of those categories. On the one hand, we have very narrow questions which do not affect any decisions, such as “What will the women’s winning 100m time in the 2024 Olympic final be?” On the other hand, we also have questions such as “Will Israel recognize Palestine by 2070?” or “When will Hong Kong stop being a Special Administrative Region of China?”. These events seem so large as to essentially be non-influenceable, and thus I’d tend to think that their Metaculus questions are not valuable [3].

For Metaculus, another constraint is to have questions that interest forecasters. Interestingness is necessary to build a community around forecasting that may later have a large instrumental value.

Below, I outline a simple rubric that I think captures an important part of how Metaculus questions lead to value in the world. I look at questions’ decision-relevance, forecasting fit, and scope.

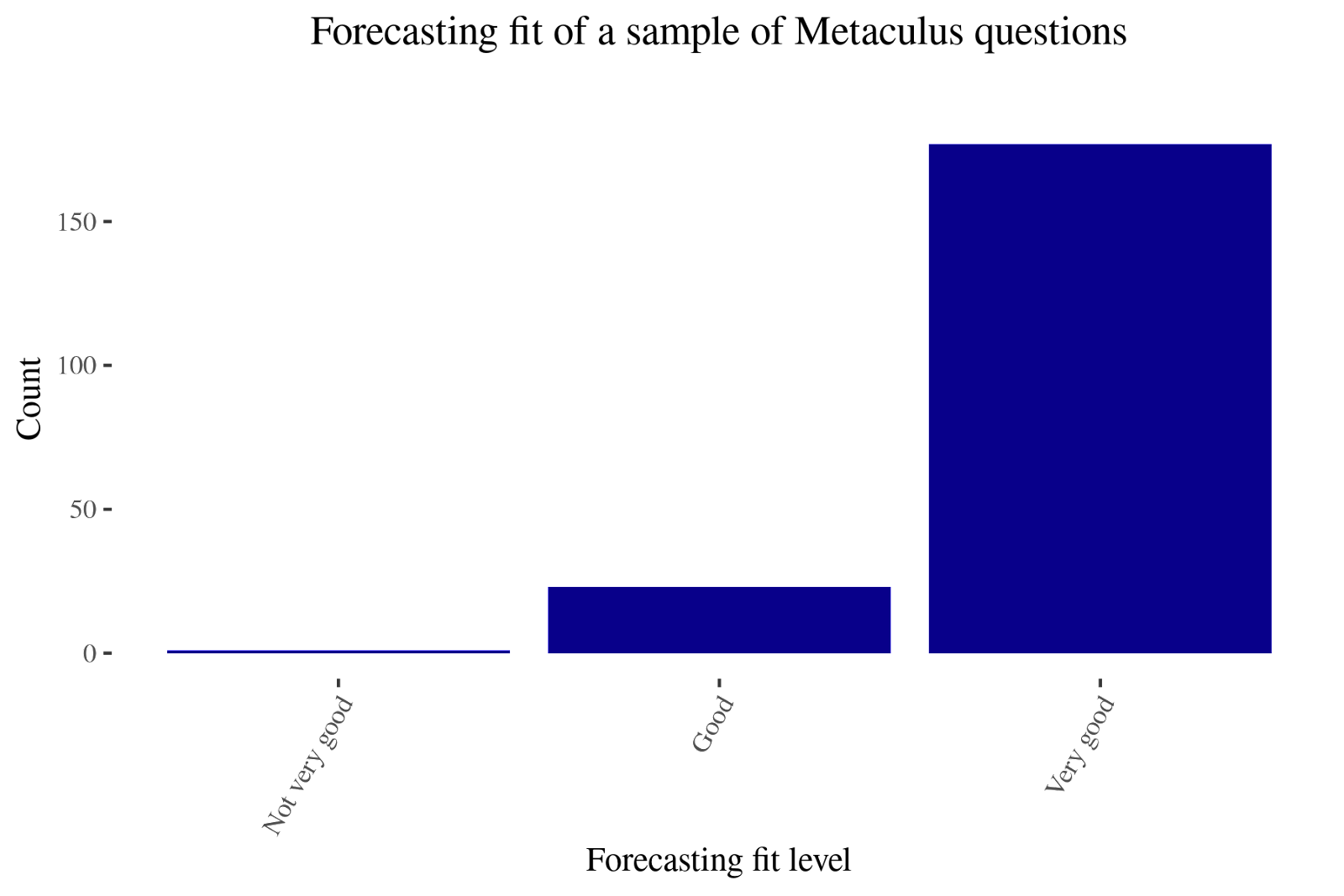

Perhaps predictably, Metaculus is very good at producing questions that are forecastable and that suit forecasters better than alternatives such as financial markets. Now that a forecasting community already exists and is known to be accurate and calibrated, it seems to me that the next bottleneck is to make forecasts action-guiding, perhaps by tweaking the scope of questions that Metaculus asks, or by reaching out to specific organizations.

Overall, the driving motivation behind this post is the perspective that:

- even though I think that medium or long-term interventions can have a larger impact than spending the same funds on the best near-term interventions,

- I'd still like to have a healthy degree of paranoia

- because I think that the default might be to have essentially no impact,

- and I would dislike for that to be the case for Metaculus

Methodology

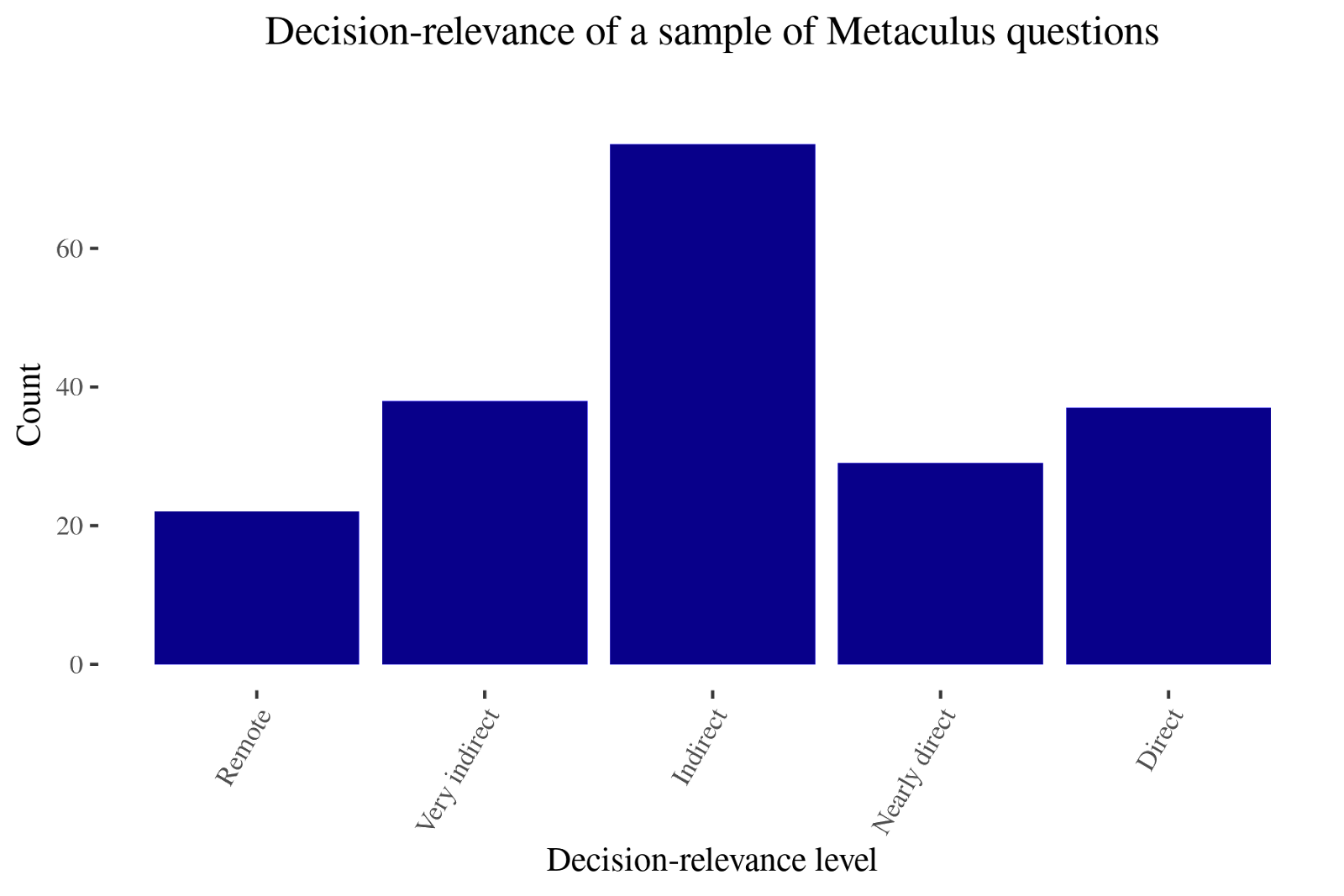

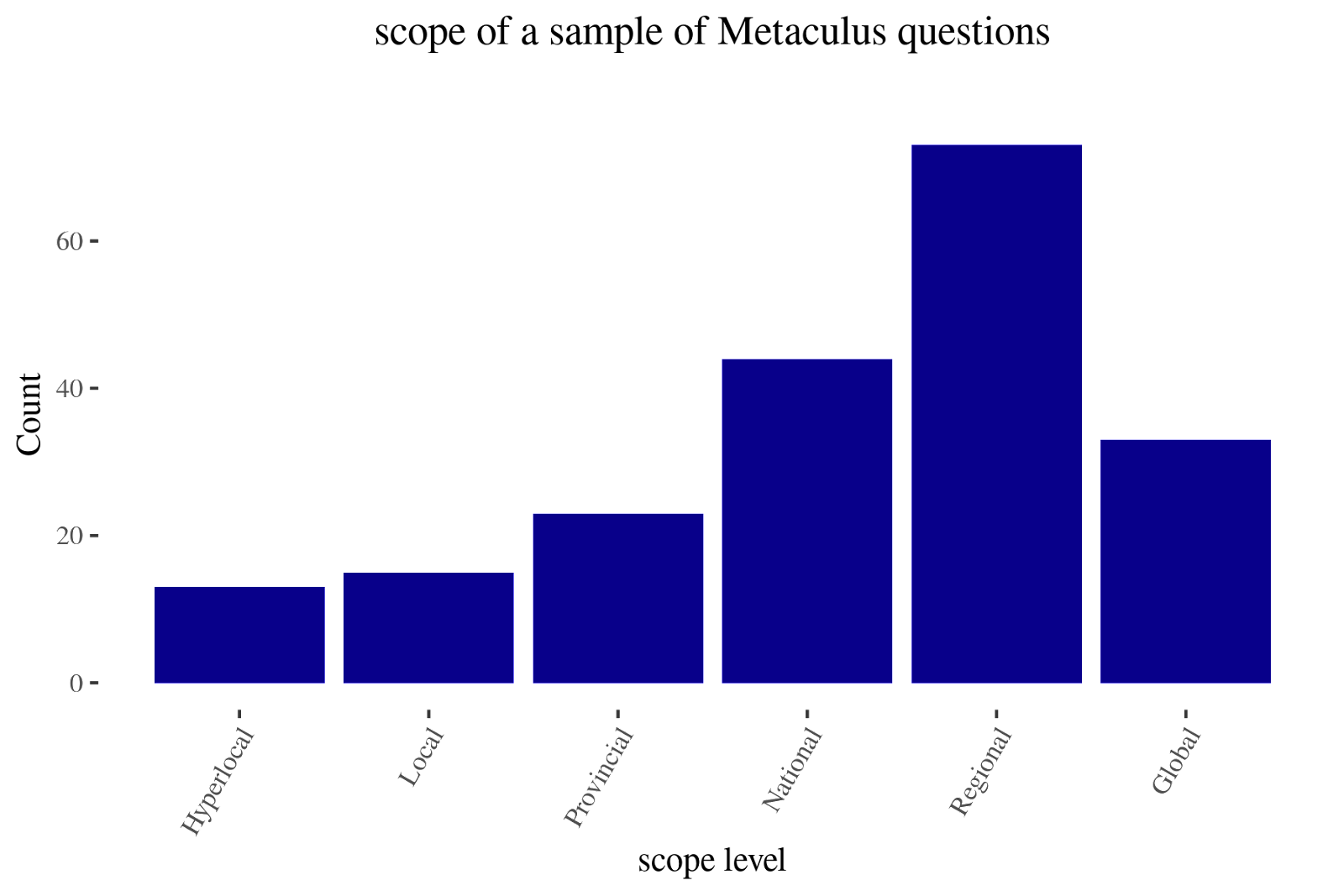

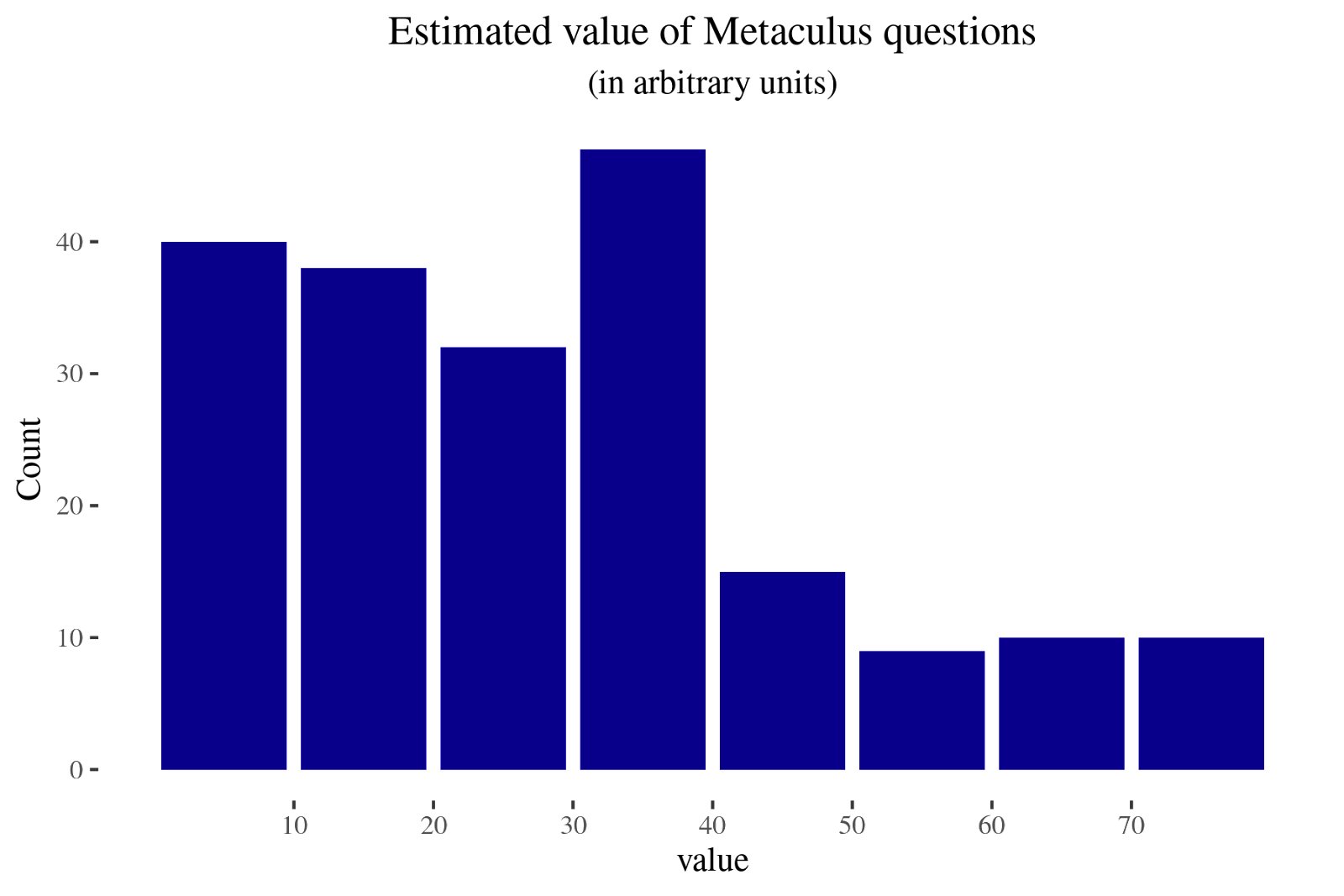

I took a random sample of 200 questions and rated them according to:

- how far removed I think they are from influencing decisions

- how good of a fit they are for forecasters, and

- the scope of the matters they ask about

My results can be found here [1]. This work is rough and not meant to be definitive. In particular, I think that some of the rankings might be subject to some degree of idiosyncrasy. Nonetheless, I hope that this might still be informative, and lead to some reflection about if and how Metaculus questions can be valuable.

Decision-relevance

How likely is this to change actual decisions?

- Directly: Someone will read this and change their decisions as a result (e.g., micro-covid calculations). Numeric value: 4

- Indirectly with one degree of separation. Numeric value: 3

- Indirectly with more than one degree of separation (e.g., most CSET-Foretell questions). Numeric value: 2

- Indirectly, with an unknown number of degrees of separation. Numeric value: 1

- Almost certainly won't change any decisions. Numeric value: 0

Indirect effects, such as finding out if forecasters are calibrated on some domain, or improving one's models of the world, are difficult to capture in a simple rubric. I've tried to capture this in "degrees away from being decision-relevant", but this might be a bad approximation.

Forecasting fit

How valuable is it to generate insight on this topic from a forecasting perspective? Is anybody else trying?

- Very valuable: This question is perfectly suited to forecasters and makes its platform shine. Numeric value: 4

- Valuable: This question isn't uniquely suited to forecasters specifically, but forecasts are still useful. Numeric value: 3

- Not very valuable: It's free labour, so people will take it, but people wouldn't pay for it. Numeric value: 2

- Not valuable: Forecasting doesn't solve the problem which the question seeks to address. Numeric value: 1

For example, if other groups are looking at similar questions, I would rate the forecasting fit lower. Other groups might be liquid financial markets, sports betting markets, politics prediction markets, Nate Silver’s group at FiveThirtyEight, or experts I deem to be reliable.

Otherwise, I would use my intuition about what makes a question more forecastable. For instance, binary events are more straightforward to forecast (and to construct base rates about) than distributions. I also rated questions as more forecastable if they were about areas where forecasters live (Europe, the US, and the UK).

Scope

I categorized Metaculus questions as one of:

- Global: Affects many nations or a few very important nations (e.g., US/China conflict, space exploration). Numeric value: 5

- Regional: Affects a whole region, with more than one nation or US state (e.g., Balkan conflict, Brexit, GERD dam). Numeric value: 4

- National: Affects one nation/US state or large non-state actor (e.g., Brazil elections, ExxonMobil scandals). Numeric value: 3

- Provincial: Affects part of a nation/US state (e.g., California forest fires, local corruption scandal). Numeric value: 2

- Local: Affects a more restricted area and doesn't have global implications (e.g., one particular organization). Numeric value: 1

- Hyperlocal: Pertains to a particular detail of something which would otherwise have a local scope (e.g., one very particular detail of one particular organization). Numeric value: 0.5

Given Metaculus’ readership, local questions most likely end up being more decision-relevant than global ones. For instance, California wildfires could influence Metaculus users in California, or questions about Virginia could influence their health department. As expanded below, I attempt to consider this by multiplying scope, decision-relevance and forecasting fit, but this might be too crude a system.

Considerations regarding scope could be made more robust by considering how many people the event under consideration affects (e.g., 1k, 10k, 100k, 1M, 10M, 100M, 1B+), and a measure of how much it affects them.

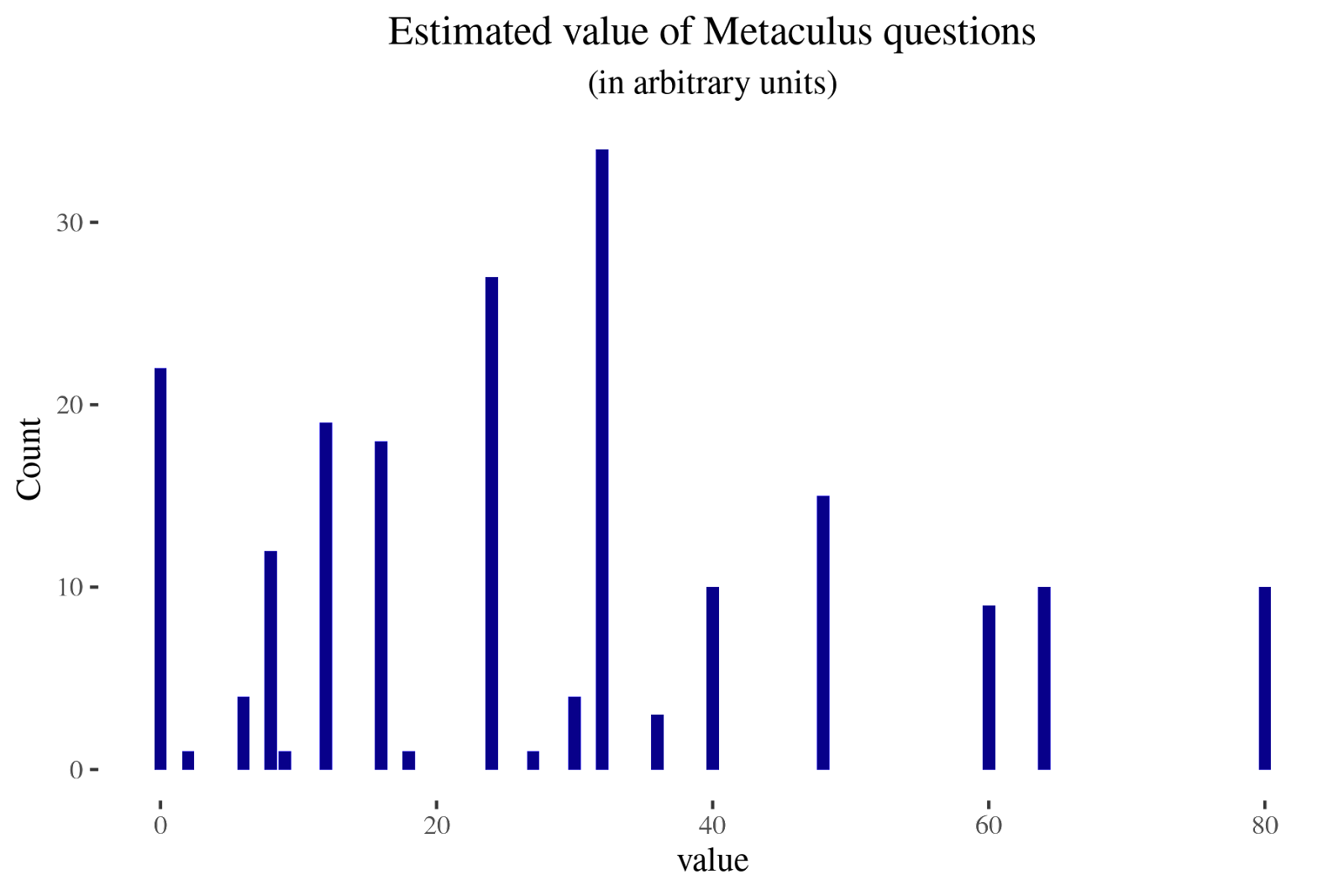

Overall value

The three elements (sort of) map to: scale, tractability and neglectedness, but not completely. One could also create more elaborate and robust rubrics.

In any case, I get a measure of the overall value by multiplying the numeric values of the three factors. For instance, if decision-relevance is zero, the overall value is zero as well. I am aware that this is not very methodologically elegant [2]. Nonetheless, I still wanted to have some measure of aggregate impact.
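As a minimal sketch of the multiplication described above: the class and field names below are illustrative assumptions, and the example ratings are hypothetical rather than taken from the dataset. The numeric ranges follow the three rubrics in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rating:
    """One question's ratings on the three rubrics (names are illustrative)."""
    decision_relevance: int  # 0 (won't change decisions) to 4 (directly)
    forecasting_fit: int     # 1 (not valuable) to 4 (very valuable)
    scope: float             # 0.5 (hyperlocal) to 5 (global)

    def overall_value(self) -> float:
        # Overall value is the product of the three numeric values.
        return self.decision_relevance * self.forecasting_fit * self.scope


# Hypothetical ratings, for illustration only.
top_of_every_scale = Rating(decision_relevance=4, forecasting_fit=4, scope=5)
never_acted_upon = Rating(decision_relevance=0, forecasting_fit=4, scope=5)

print(top_of_every_scale.overall_value())  # the maximum possible score
print(never_acted_upon.overall_value())    # zero decision-relevance zeroes the product
```

Because the factors are multiplied, swapping the decision-relevance and scope ratings of a question leaves its score unchanged, which is the limitation raised in footnote [2].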

Conclusion

Given Metaculus’ historic roots as a general-purpose forecasting platform [4], it’s not surprising that their questions don’t have that much of an impact from the narrow perspective considered in this post: legibly changing decisions. In particular, many of Metaculus’ questions seem optimized for being fairly interesting to forecasters rather than directly valuable. However, given that Metaculus does appeal to the EA community for funding—see the 2019 and 2020 grants—it still feels fair game to evaluate them based on their expected impact.

That said, I can imagine other pathways to impact besides the impact of their questions. Two I can think of are:

- As a platform for identifying talent. I know of a few people working at EA organizations that gained a fair bit of career capital from their work at Metaculus. Going forward, Rethink Priorities might be happy to give research opportunities to excellent Metaculus forecasters.

- As a way to ground and sharpen EA’s epistemic health, sanity, models of the world, etc.

- For instance, Metaculus could provide value by being a better, more realistic, less fake version of Open Philanthropy’s calibration training. This could conceivably happen without any particular question being all that valuable, though I would find this surprising.

- For instance, Metaculus could sanity-check claims of interest to EAs. For example, this question on a livestock farming ban by 2041 makes it clear that some claims by DxE, an animal rights organization, are very overconfident.

It’s also possible that Metaculus is most valuable at the onset of emergencies, like the COVID pandemic, and less useful now that there are fewer unknown unknowns on the immediate horizon [5]. Because these and other considerations and possible pathways to impact are absent from the analysis, this post does feel somewhat rough.

But suppose one determined that most of Metaculus’s impact came from the effects of their questions. In that case, the EA community could try to directly estimate its willingness to pay for Metaculus questions and just pay Metaculus and Metaculus forecasters that amount as a reward and an incentive.

For instance, the highest-scoring questions [6] in my dataset—those with a score of 80—were:

- Will there be at least one fatality from nuclear detonation in North Korea by 2050, if any detonation occurs?

- 50 years after the first AGI becomes publicly known, how many hours earlier will historical consensus determine it came online?

- Will more than two nuclear weapons in total have been detonated as an act of war by 2050?

- When will 100 babies be born whose embryos were selected for genetic scores for intelligence?

- Will armed conflict between the national military forces or law enforcement personnel of the Republic of China (Taiwan) and the People's Republic of China (PRC) cause at least 100 deaths before 2050?

- Will the EU have a mandatory multi-tiered animal welfare labelling scheme in place by 2025?

- Will there be armed conflict between the national military forces, militia and/or law enforcement personnel of Republic of China (Taiwan) and the People's Republic of China (PRC) before Jan 1, 2024?

- Will armed conflicts between the Republic of China (Taiwan) and the People's Republic of China (PRC) lead to at least 100 deaths before Jan 1, 2026?

- Will the human condition change fundamentally before 2100?

- When will AI achieve competency on multi-choice questions across diverse fields of expertise?

If one estimates, arguendo, that good forecasts for each of those questions are worth something on the order of $2000 per year, one could get an estimate of $2000 * (10 questions) * (1818 questions with more than 10 predictions on Metaculus) / (200 questions in my sample) / (0.8 as a Pareto coefficient [7]) ~ $225,000 / year.
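The arithmetic above can be reproduced step by step. This is only a sketch of the back-of-the-napkin calculation: the function and parameter names are assumptions made for illustration, and the inputs are the figures quoted in the paragraph.

```python
def estimate_annual_value(
    value_per_top_question: float,  # USD per year, the "arguendo" figure
    top_questions_in_sample: int,   # questions with the top score in the sample
    sample_size: int,               # questions rated in the sample
    eligible_questions: int,        # questions with more than 10 predictions
    pareto_share: float,            # share of total value held by top questions
) -> float:
    # Scale the number of top questions from the sample up to the platform.
    top_questions_on_platform = (
        top_questions_in_sample * eligible_questions / sample_size
    )
    # Value of the top questions alone.
    value_of_top_questions = value_per_top_question * top_questions_on_platform
    # If top questions hold `pareto_share` of all value, divide to get the total.
    return value_of_top_questions / pareto_share


estimate = estimate_annual_value(
    value_per_top_question=2000,
    top_questions_in_sample=10,
    sample_size=200,
    eligible_questions=1818,
    pareto_share=0.8,
)
print(f"${estimate:,.0f} per year")
```

With these inputs the result is about $227,000 per year, in line with the rounded figure of roughly $225,000 above. The estimate is linear in the per-question value, so halving the $2000 assumption halves the total.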

I’d be interested in getting pushback and other perspectives on any of these points.

Thanks to Ozzie Gooen and Kelsey Rodríguez for comments and editing help.

Footnotes

[1]: The code to extract these questions from Metaculus, using Metaforecast as an intermediate step, can be found here. Note that by design, Metaforecast excludes questions with fewer than ten forecasts. The code to produce the R plots can be found here.

[2]: For instance—given equal forecasting fit—a question with a decision relevance of 3 and a scope of 1 might be more valuable than a question with a decision relevance of 1 and a scope of 3.

[3]: One could make the case that these questions could be valuable if they influenced people to emigrate away from unstable regions. Still, I don’t expect prospective emigrant Metaculus readership in Hong Kong to be very high, nor Metaculus readers in Israel to have high property ownership rates in places that would be given back to Palestine.

[4]: I am aware that Metaculus has always aimed to produce useful probabilities, particularly around topics of scientific interest and AI. But the aim of being directly useful to the EA community in particular feels relatively recent.

[5]: Or, are there?

[6]: Because of the limitations of my methodology, these might not ultimately be the most valuable questions in my 200-question dataset. Conversely, some of the lowest-scoring questions (those with a value of 5 or lower) in my dataset were:

- When will China legalise same-sex marriage?

- Will George R. R. Martin die before the final book of A Song Of Ice And Fire is published?

- How many Computer Vision and Pattern Recognition e-prints will be published on arXiv over the 2021-01-14 to 2022-01-14 period?

- If Elizabeth Holmes is convicted in Theranos fraud trial, how long will her sentence be?

- Will Elon Musk's Tesla Roadster be visited by a spacecraft before 2050?

- Will Bill Gates implant a brain-computer interface in anyone by 2030?

- Will Roger Federer win another Grand Slam title?

- What will the largest number of digits of π to have been computed be, by the end of 2025?

- How many medals will the USA win at Paris 2024?

- When will there be a mile-high building?

- How many e-prints on Few-Shot Learning will be published on ArXiv over the 2021-02-14 to 2023-02-14 period?

- What will the space traveler fatality rate due to spacecraft anomalies be in the 2020's?

- Will France place in the Top 5 at the 2024 Paris Olympics?

- Will Kyle Rittenhouse be convicted of first-degree intentional homicide?

- When will a first-class Royal Mail stamp cost at least £1?

- When will SpaceX's Starship carry a human to orbit?

- What percentage of seats will the PAP win in the next Singaporean general election?

- What will the mean of the year-over-year growth rate of the sum of teraflops of all 500 supercomputers in the TOP500 be, in the three year period ending in November 2023?

- When will the first human mission to Venus take place?

- When will The Simpsons air its final episode?

- How many federal judges will the US Senate confirm in 2021?

- When will Virgin Galactic's first paid flight occur?

- When will the Twin Prime Conjecture be resolved?

I know that there is an argument to be made that the oddly specific AI arXiv questions are valuable because they help inform how accurate other AI predictions might be, but I don't buy it.

[7]: Assuming that 80% of the value of Metaculus questions comes from the most valuable ones, per something akin to the Pareto principle.

A complementary approach to estimating the value of Metaculus questions (focusing just on effects on decisions, not on improving epistemics, vetting potential researchers, etc.) would be to actually ask a bunch of people whether they look at Metaculus questions, whether they think they seem decision-relevant and valuable, and whether Metaculus questions influenced their decisions. This could be similar to the impact survey Rethink does and the impact survey I did last year. See also https://forum.effectivealtruism.org/posts/EYToYzxoe2fxpwYBQ/should-surveys-about-the-quality-impact-of-research-outputs-1

I think Metaculus indeed intends to do something like this soon-ish (iirc, it was mentioned in the recent job ad for an EA Question Author).

Sounds about right.

Bailey: We should give $225k to Metaculus every year

Motte: This very specific method of estimating the value of Metaculus questions leads to a back-of-the-napkin guess that Metaculus questions might be worth on the order of $225k/year to the EA community, but I could imagine this being an overestimate, particularly if nobody ends up changing any decisions because of Metaculus predictions, or if the estimate of $2000 per highly valuable question is too high.

What I actually think: I think that Metaculus is great, but I was worried that their questions might not have any effects on decisions, and thus ultimately not be valuable. After a brief investigation, I think that a fair number of its questions are valuable. To incentivize questions that the EA community finds valuable, and to ensure that Metaculus remains on a good financial footing, the EA community could each year try to estimate the value Metaculus questions produce and pay Metaculus (proportionally to) that amount. I think this would require a bit more effort than my back-of-the-napkin calculation right there, but not that much if EA is still vetting constrained.

I wonder how valuable the training and talent selection effects are for the community. RP seems to think they are valuable, e.g. they targeted Metaculus forecasters specifically for their current hiring round. Maybe RP people can estimate how valuable legible forecasting experience and skill is to them?

Also, forecasting is a concrete and useful practice related to EA that anyone can do and skill up in. E.g. the EA forecasting tournament last year was a great shared experience for my local chapter (not only cause we won :P).

Good question; I am not sure what the answer would be.

Thanks for this post!

I've been thinking a lot about related topics lately. I haven't written anything very polished yet, but here are some rough things you or readers of this post may find interesting (in descending order of predicted worth-checking-out-ness):

Less directly relevant:

The three metrics feel more logarithmic than linear, so it'd probably make more sense to use addition rather than multiplication. However, I've tested it and it practically doesn't change the ordering for the top 50% and mostly influences the lower results (especially those that multiply to 0 😊).

(Also, it's clearly an irrelevant level of analysis, as I'd expect the problems to be more in the choice and definition of the metrics, and the valuations thereof)

I see what you mean, particularly for scale. But not so much for decision-relevance and forecasting fit.

Also, the threshold for decision-relevance is in a sense lower for larger events, so I think that evens out some of the variance.

Even though I think that something like a US-China war would be hard to prevent, I think that forecasts on its likelihood are still valuable because they affect many possible plans. A post that goes through Metaculus questions about whether “shit is going to hit the fan” (US-China war, a war between nuclear powers, nuclear weapons used, etc.), and tries to outline what implications those scenarios would have for the EA community—and perhaps what cheap mitigation steps could be taken—might be a small but valuable project. Note that per Laplace’s law, the likelihood of another great war in the medium term is not that low.

I agree with this. I'm planning to write 1 or more posts of vaguely that type, but I don't know precisely when and it seems very unlikely I'll 100% cover this. So if someone is interested in doing that, maybe contact me (michael AT rethinkpriorities DOT org), and perhaps we could collaborate or I could give some useful pointers?

Nitpick: I think interestingness is very helpful but not necessary. Other potential incentives to forecast include the opportunity to be impactful/helpful, status/respect on Metaculus and maybe elsewhere, Metaculus points, getting feedback that improves epistemics, sense of mastery, and money (e.g. tournament prizes).

I agree, but I do think that interestingness is important. In particular, putting on my forecaster hat, I think that avoiding drudgerous questions is very important.

Key point of this comment: It seems to me a mistake to think forecasting questions are usually useful only if it's feasible to influence whether the asked-about event happens. I think there are just many ways in which our actions can be improved by knowing more about the world's past, present, and likely future states.

As an analogy, I'd find a map more useful if it notes where booby traps are even if I can't disable the traps (since I can side step them), and I'm better able to act in the world if I'm aware that China exists and that its GDP is larger than that of most countries (even though I can't really influence that), and a huge amount of learning is about things that happened previously and yet is still useful.

Your footnote nods to that idea, but as if that's like a special case.

For example, the first of those questions could be relevant to decisions like how much to invest in reducing Israel-Palestine tensions or the chance of a major nuclear weapons buildup by Israel or Iran.

I also think people influenced directly or indirectly by Metaculus could take actions with substantial leverage over major events, so I'd focus less on "large" and more on "neglectedness / crowdedness". E.g., EAs seem to be some of the biggest players for extreme AI risk, extreme biorisk, and possibly nuclear risk, which are all actually very large in terms of complexity and impact, but are sufficiently uncrowded that a big impact can still be made.

(Though I do of course agree that questions can differ hugely in decision-relevance, that considering who will be directly or indirectly influenced by the questions matters, that those questions you highlighted are probably less impactful than e.g. many AI risk or nuclear risk questions on Metaculus.)

Here is this point in a picture.

In particular, I'd tend to think that changing decisions and influencing the event which is being forecasted might be the main pathways to impact, but I could be wrong.

Version 2:

I think this is cool.

Maybe "Changing decisions" should be "Changing other decisions"? Since I think influencing the forecasting event occurs via influencing decisions about that event?

When I did some research on the use of forecasting to support government policymaking, one of the issues I quickly encountered was that for some questions, if your forecast is counterfactually (upon making the forecast) accurate and influential upon decision makers, it can lead to policies which prevent the event from occurring and thus make it an inaccurate forecast. Of course, some decisions are not about preventing some event from occurring but rather responding to such an event (e.g., preparedness for a hurricane), in which case there’s not much issue. I could only skim and keyword search the post and failed to see an emphasis on that, but apologies if I just missed it. Do you think this is less of an issue in EA-relevant forecasting than, e.g., international security policymaking? My extremely underdeveloped intuition has been “probably yes,” but what are your thoughts?

My thoughts are that this problem is, well, not exactly solved, but perhaps solved in practice if you have competent and aligned forecasters, because then you can ask conditional questions which don't resolve.

Then you can still get forecasts for both, even if you only expect the first to go through.

This does require forecasters to give probabilities even when the question they are going to forecast on doesn't resolve.

This is easier to do with EAs, because then you can just disambiguate the training and the deployment step for forecasters. That is, once you have an EA that is a trustworthy forecaster, you could in principle query them without paying that much attention to scoring rules.

I got the feedback that this post was too verbose & rambling, so here is a condensed Twitter thread instead.