Note: I think most information about Foretold and related research is a bit more relevant to LessWrong than to the EA Forum, so I aim to mostly post there in the future. However, I think particularly important posts are probably useful to linkpost here.

I’m happy to announce a semi-public beta of Foretold.io for the EA/LessWrong community. I’ve spent much of the last year working on coding & development, with lots of help from terraform on product and scoring design. Special thanks to the Long-Term Future Fund and its donors, whose contribution to the project helped us to hire contractors to do much of the engineering & design.

You can use Foretold.io right away by following this link. Currently public activity is only shown to logged-in users, but I expect that to be opened up over the next few weeks. There are currently only a few public communities of predictable questions, but that will change over time.

The Main Concept

We aim for Foretold.io to be useful as a general-purpose prediction registry, with the potential to be used for more specific prediction purposes.

The main features of a prediction registry include things like:

- People can specify questions to be predicted

- Forecasters can predict those questions

- Questions can either be resolved with answers or cancelled

- After questions are resolved, forecasters are scored on meaningful metrics
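
As one illustration of what a "meaningful metric" can look like, here is a minimal sketch of a logarithmic scoring rule for binary questions. This post does not say which scoring rules Foretold.io uses, so treat the rule and the function names (`log_score`, `mean_log_score`) as assumptions made for the example, not as the platform's actual scoring.

```python
import math


def log_score(probability: float, outcome: bool) -> float:
    """Log of the probability the forecaster assigned to what actually happened.

    Scores are always negative; values closer to 0 are better.
    """
    if not 0.0 < probability < 1.0:
        raise ValueError("probability must be strictly between 0 and 1")
    return math.log(probability if outcome else 1.0 - probability)


def mean_log_score(forecasts) -> float:
    """Average log score over an iterable of (probability, outcome) pairs."""
    scores = [log_score(p, o) for p, o in forecasts]
    if not scores:
        raise ValueError("need at least one resolved forecast")
    return sum(scores) / len(scores)
```

Under this rule a confident correct forecast scores better than a hedged one, and a confident wrong forecast is penalized heavily, which is the kind of property that makes a metric useful for comparing forecasters.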

In addition to the essentials, we focused on some other useful features including:

Full distribution forecasts for continuous variables

In Foretold.io, variables are estimated with arbitrary probability distributions. Most existing forecasting tools only allow for binary and categorical questions, or relatively simple distributions. Foretold.io saves arbitrary cumulative distribution functions. The main input editor is a fork of that in Guesstimate. We plan to add more input methods in the future.
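
To make the idea of saving an arbitrary CDF concrete, here is a small sketch that represents a forecast as sampled `(x, p)` points and interpolates linearly between them. The class name `PointwiseCdf` and the point-list representation are assumptions for illustration; the post does not describe Foretold.io's actual storage format.

```python
from bisect import bisect_right


class PointwiseCdf:
    """A forecast stored as CDF samples: P(X <= xs[i]) = ps[i]."""

    def __init__(self, xs, ps):
        if len(xs) != len(ps) or len(xs) < 2:
            raise ValueError("need at least two matching (x, p) points")
        if any(a >= b for a, b in zip(xs, xs[1:])):
            raise ValueError("xs must be strictly increasing")
        if any(a > b for a, b in zip(ps, ps[1:])) or ps[0] < 0 or ps[-1] > 1:
            raise ValueError("ps must be non-decreasing and within [0, 1]")
        self.xs = list(xs)
        self.ps = list(ps)

    def cdf(self, x: float) -> float:
        """Linearly interpolated CDF value, clamped outside the sampled range."""
        if x <= self.xs[0]:
            return self.ps[0]
        if x >= self.xs[-1]:
            return self.ps[-1]
        i = bisect_right(self.xs, x)  # now xs[i - 1] <= x < xs[i]
        x0, x1 = self.xs[i - 1], self.xs[i]
        p0, p1 = self.ps[i - 1], self.ps[i]
        return p0 + (p1 - p0) * (x - x0) / (x1 - x0)

    def probability_between(self, low: float, high: float) -> float:
        """Probability mass the forecast places on the interval (low, high]."""
        return self.cdf(high) - self.cdf(low)
```

A representation like this can hold any shape of distribution (multimodal, skewed, hand-drawn) at the cost of choosing how many points to sample.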

“Communities” with custom privacy settings

Foretold.io allows for groups to collaborate on forecasting different sets of questions. Communities can be public or private, and question creators can easily move their questions between communities. I’ve talked to several Effective Altruist organizations that have internal forecasting setups, but almost all use in-house solutions with Google Docs. One of the main bottlenecks seems to be easy private community support.

A GraphQL API, with support for bots

Users can create bots that get scored individually. They can use the same GraphQL API that the Foretold.io client uses. You can see information about how to use the API here. This part is still early, but will continue to improve.
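
For readers who have not used GraphQL, a bot's request is typically a JSON body with a `query` string and a `variables` object sent over HTTP POST. The sketch below only builds such a request and does not send it. The endpoint URL, the query fields (`questions`, `communityId`, `id`, `name`), and the bearer-token header are all hypothetical placeholders, not Foretold.io's real schema or authentication scheme; consult the API documentation for the actual names.

```python
import json
import urllib.request

# Hypothetical placeholder; replace with the real GraphQL endpoint.
API_URL = "https://example.invalid/graphql"

# Hypothetical query; the field and argument names are illustrative only.
QUERY = """
query Questions($communityId: String!) {
  questions(communityId: $communityId) {
    id
    name
  }
}
"""


def build_request(query: str, variables: dict, token: str) -> urllib.request.Request:
    """Build (but do not send) a GraphQL-over-HTTP POST request."""
    body = json.dumps({"query": query, "variables": variables}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            # Assumed auth scheme; the real API may expect something else.
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )
```

Passing the resulting object to `urllib.request.urlopen` would send it; a bot would then parse the JSON response and submit its forecasts with a corresponding mutation.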

In the future we hope that the API will be used to do things like:

- Make forecasts

- Make & resolve questions

- Automate the setup of new prediction experiments

- Make dashboards of useful forecasts

Intended Uses

Similar to Guesstimate, Foretold.io itself is not domain-specific. It could be used in multiple kinds of setups; for instance, for personal use, group use, or for a sizable open prediction tournament. Hopefully over the coming years we’ll identify which specific uses and setups are the most promising and optimize accordingly.

Recently it’s been used for:

- Various personal/individual questions

- Internal group predictions at FHI

- A currently-open tournament on predicting the upcoming EA survey responses

- A few small forecasting experiments

We encourage broad experimentation. Feel free to make as many public & private communities as you like for different purposes. If you'd be interested in discussing possible details, please reach out.

Questions

I’m interested in performing an experiment that could use the tracking of probability distributions. Can I use Foretold.io?

Yes! Foretold.io is open-source, and we’re very happy to give special support to researchers and others interested in working with probability distributions and/or forecasts. It’s made to be reasonably general-purpose and extendable via the API.

To get started, simply create a community on Foretold.io and make a few questions. If you prefer, you can also fork the codebase and run the app separately.

Are you coordinating with other forecasting projects?

In the last few years several efforts and research projects around “forecasting” have emerged, specifically around AI. Most of these are focused on domain-specific research, rather than technical infrastructure. I have been talking with several of the other groups, and have been working particularly closely with Ben Goldhaber of Parallel Forecast.

Why not just partner with an existing technical forecasting registry and add features to that?

In general, I’ve found that it’s really hard to join a group and get them to dramatically change their priorities. Many of the new additions in Foretold.io are pretty significant, and the roadmap is ambitious.

Is there any connection between Foretold.io and Guesstimate?

Foretold.io uses a fork of the distribution editor from Guesstimate. The distribution syntax is the same (“5 to 20”). In the future we plan to make it easy to import Foretold.io variables into Guesstimate, and to use Guesstimate variables for predictions in Foretold.io.
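
As a rough illustration of what an input like “5 to 20” can stand for, the sketch below parses the range and fits a lognormal distribution whose 5th and 95th percentiles are the two endpoints. This reading (a 90% interval, lognormal for positive bounds) is my understanding of Guesstimate's convention and is an assumption here; the function names are hypothetical, and the editor's real parser handles more syntax than this.

```python
import math
import re

# Standard normal quantile at 0.95, so +/- Z_90 spans a central 90% interval.
Z_90 = 1.6448536269514722


def parse_range(text: str):
    """Parse a string like '5 to 20' into (low, high) floats."""
    match = re.fullmatch(r"\s*([0-9]*\.?[0-9]+)\s+to\s+([0-9]*\.?[0-9]+)\s*", text)
    if not match:
        raise ValueError(f"expected '<low> to <high>', got {text!r}")
    low, high = float(match.group(1)), float(match.group(2))
    if not 0 < low < high:
        raise ValueError("this sketch requires 0 < low < high")
    return low, high


def lognormal_params(low: float, high: float):
    """Return (mu, sigma) of ln X so that low and high are the 5th/95th percentiles."""
    mu = (math.log(low) + math.log(high)) / 2
    sigma = (math.log(high) - math.log(low)) / (2 * Z_90)
    return mu, sigma
```

With these assumptions, “5 to 20” has its median at the geometric mean of the endpoints, which is 10, rather than at the midpoint 12.5.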

Details

Technical details

Foretold.io uses Node.js and Express.js with Apollo for the GraphQL server, and ReasonML and React for the client. The database is PostgreSQL. The application is currently hosted on Heroku.

Funding

The project has raised $90,000 from the Long-Term Future Fund. Around $25,000 of that has been spent so far, mostly on programming and design help.

Ownership

Foretold.io is open source. In the future, I intend for it to be supported via a nonprofit.

Get Involved

Foretold.io is free & open to use of all (legal) kinds. That said, if you intend to make serious use of the API, please let me know beforehand.

If you’re interested in collaborating on either the platform, formal experiments, or related research, please reach out to me, either via private message or email (ozzieagooen@gmail.com). I’m particularly looking for engineers and people who want to set up forecasting tournaments on important topics.

Select Screenshots

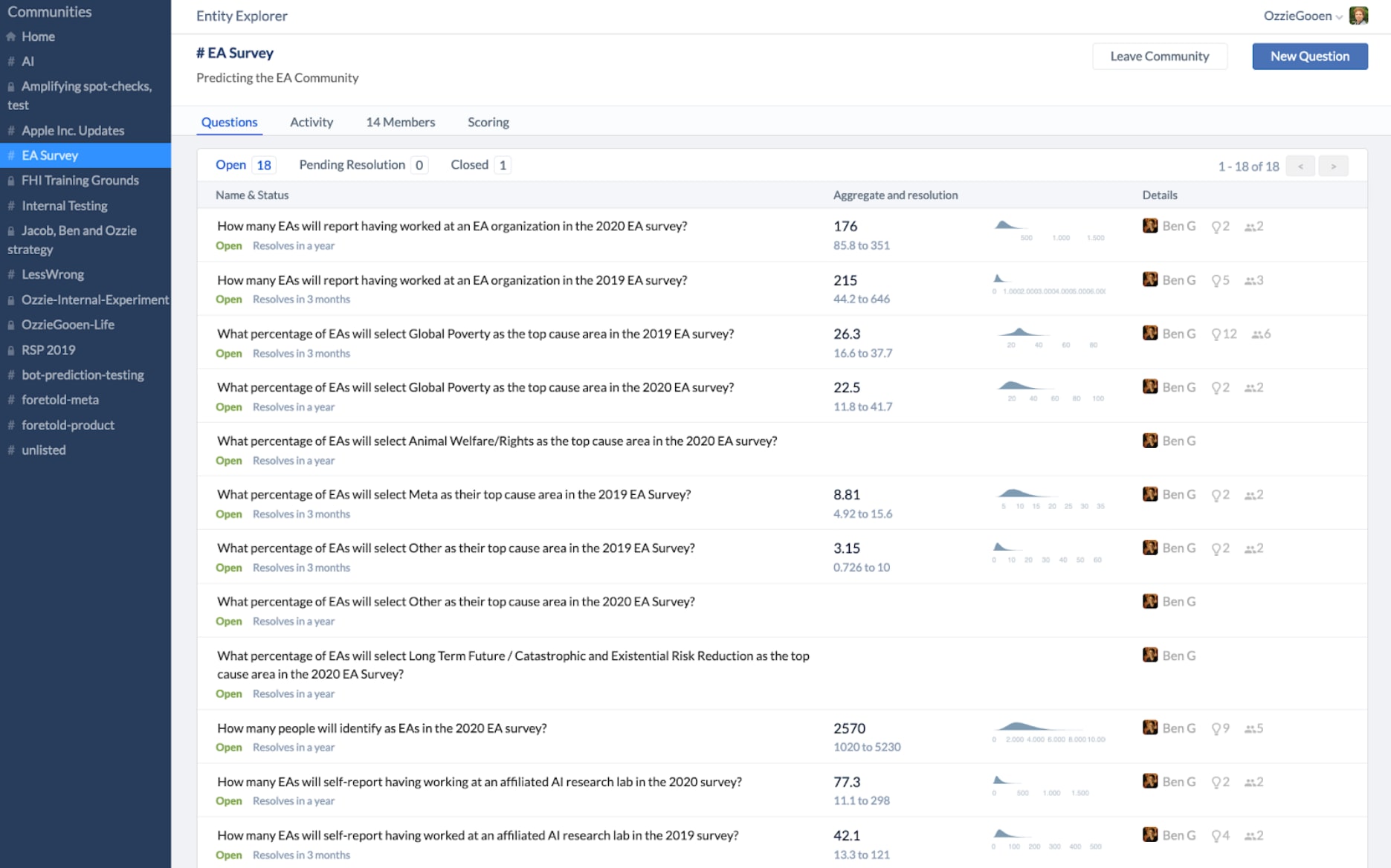

Index View

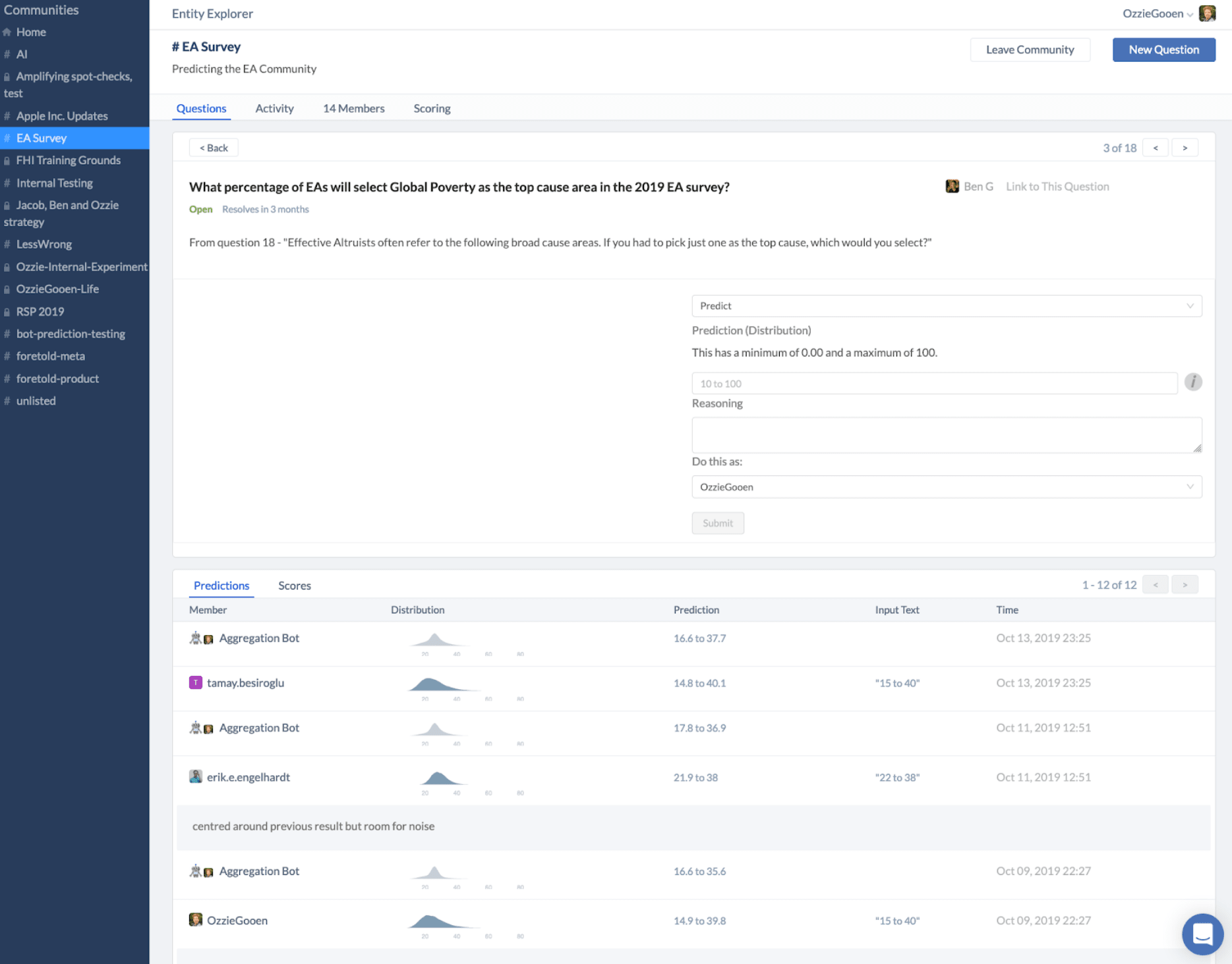

Question View

Many thanks to terraform, Ondřej Bajgar, and Rose Hadshar for several useful comments on this post.

Recently published in Science - Predict science to improve science

The associated platform: https://socialscienceprediction.org

Great work Ozzie!

Some differences are apparent but could you spell out how you intend to differentiate Foretold from Metaculus?

Thanks!

I believe the items in the "other useful features" section above are unique from Metaculus. Also, I've written this comment on the LessWrong post discussing things further.

https://www.lesswrong.com/posts/wCwii4QMA79GmyKz5/introducing-foretold-io-a-new-open-source-prediction?commentId=i3rQGkjt5CgijY4ow

Great work!!!

Thanks Soeren!