It's fashionable these days to ask people about their AI timelines. And it's fashionable to have things to say in response.

But relative to the number of people who report their timelines, I suspect that only a small fraction have put in the effort to form independent impressions about them. And, when asked about their timelines, I don't often hear people also reporting how they arrived at their views.

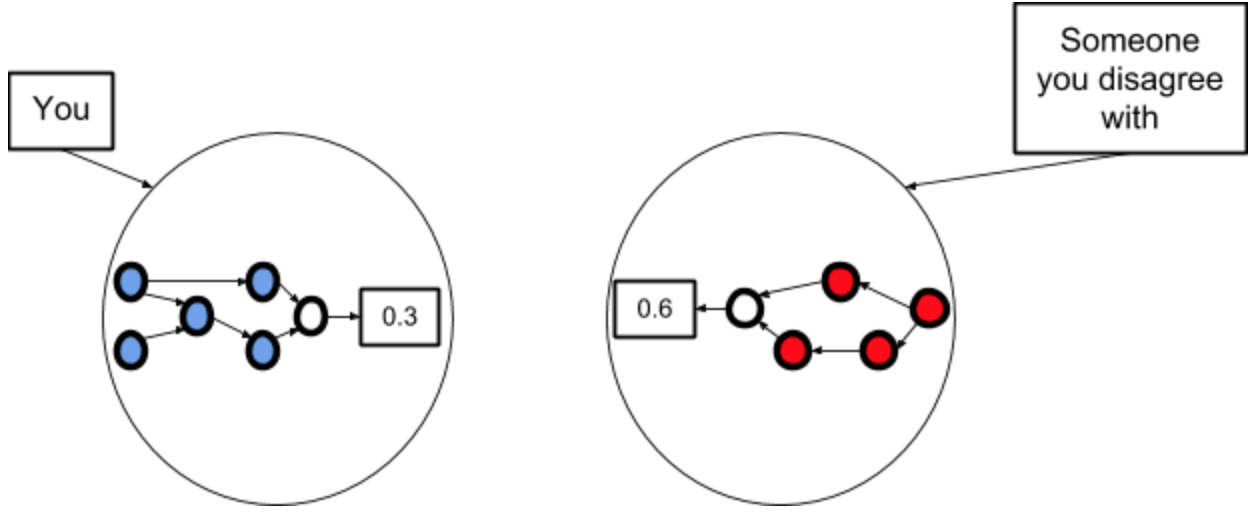

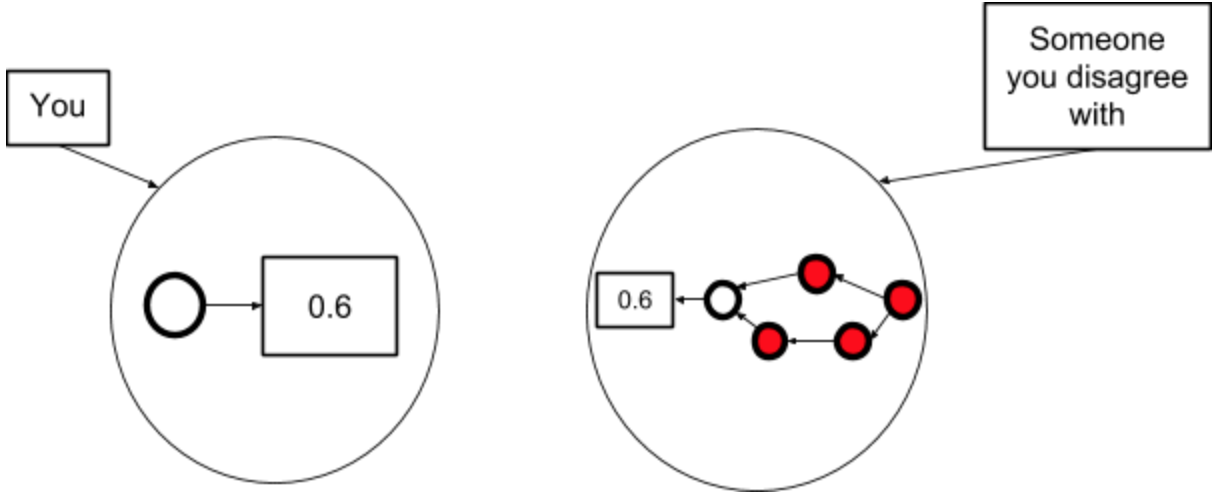

If this is true, then I suspect everyone is updating on everyone else's views as if they were independent impressions, when in fact all our knowledge about timelines stems from the same (e.g.) ten people.

This could have several worrying effects:

- People's timelines being overconfident (i.e. too resilient), because they think they have more evidence than they actually do.

- In particular, people in this community could come to believe that we have the timelines question pretty worked out (when we don't), because they keep hearing the same views being reported.

- Weird subgroups forming where people who talk to each other most converge to similar timelines, without good reason.[1]

- People using faulty deference processes. Deference is hard and confusing, and if you don't discuss how you’re deferring then you're not forced to check if your process makes sense.
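To make the double-counting worry concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the names, the sources, and the median years are made up for illustration, and "who someone defers to" is assumed to be a single known source, which real deference rarely is.

```python
# Hypothetical reports: (person, source they defer to, reported median year).
# A person whose source is themselves is reporting an independent impression.
reports = [
    ("A", "source_1", 2040),
    ("B", "source_1", 2040),
    ("C", "source_1", 2040),
    ("D", "source_2", 2050),
    ("E", "E", 2035),
]


def count_independent_sources(reports):
    """Number of distinct sources behind all the reported views."""
    return len({source for _, source, _ in reports})


def naive_share(reports, year):
    """Share of reports giving `year`, treating every report as independent."""
    return sum(1 for _, _, y in reports if y == year) / len(reports)


def deduplicated_share(reports, year):
    """Share of distinct sources giving `year`, counting each source once."""
    by_source = {source: y for _, source, y in reports}
    return sum(1 for y in by_source.values() if y == year) / len(by_source)


print(count_independent_sources(reports))   # distinct sources
print(naive_share(reports, 2040))           # looks like a majority view
print(deduplicated_share(reports, 2040))    # one source among several
```

In this toy data, five people report timelines but only three sources stand behind them, so "most people say 2040" shrinks to "one of three sources says 2040" once deference is made explicit.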

So: if (like most people) you don't have time to form your own views about AI timelines, then I suggest being clear about who you're deferring to (and how), rather than just saying "median 2040" or something.[2]

And: if you’re asking someone about their timelines, also ask how they arrived at their views.

(Of course, the arguments here apply more widely too. Whilst I think AI timelines is a particularly worrying case, being unclear if/how you're deferring is a generally poor way of communicating. Discussions about p(doom) are another case where I suspect we could benefit from being clearer about deference.)

Finally: if you have 30 seconds and want to help work out who people do in fact defer to, take the timelines deference survey!

Thanks to Daniel Kokotajlo and Rose Hadshar for conversation/feedback, and to Daniel for suggesting the survey.

[1] This sort of thing may not always be bad. There should be people doing serious work based on various different assumptions about timelines. And in practice, since people tend to work in groups, this will often mean groups doing serious work based on various different assumptions about timelines.

[2] Here are some things you might say, which exemplify clear communication about deference:

- "I plugged my own numbers into the bio anchors framework (after 30 minutes of reflection) and my median is 2030. I haven't engaged with the report enough to know if I buy all of its assumptions, though"

- "I just defer to Ajeya's timelines because she seems to have thought the most about it"

- "I don't have independent views and I honestly don't know who to defer to"

Cool idea to run this survey and I agree with many of your points on the dangers of faulty deference.

A few thoughts:

(Edit: I think my characterisation of what deference means in formal epistemology is wrong. After a few minutes of checking this, I think what I described is a somewhat common way of modelling how we ought to respond to experts)

The use of the concept of deference within the EA community is unclear to me. When I encountered the concept in formal epistemology, I remember "deference to someone on claim X" literally meaning (a) that you adopt that person's probability judgement on X. Within EA and your post (?), the concept often doesn't seem to be used in this way. Instead, I guess people think of deference as something like (b) "updating in the direction of a person's probability judgement on X" or (c) "taking that person's probability estimate as significant evidence for (against) X if that person leans towards X (not-X)"?

I think (a)-(c) are importantly different. For instance, adopting someone's credence doesn't always mean that you are taking their opinion as evidence for the claim in question, even if they lean towards it being true: you might adopt someone's high credence in X and thereby lower your credence (because yours was even higher before). In that case, you update as though their high credence was evidence against X. You might also update in the direction of someone's credence without taking on their credence. Lastly, you might lower your credence in X by updating in someone's direction even if they lean towards X.

Bottom line: these three concepts don't refer to the same "epistemic process", so I think it's good to make clear what we mean by deference.

- (I) Deference to someone's credence in X = you adopt their probability in X.

- (II) Positively updating on someone's view = increasing your confidence in X upon hearing their probability on X.

- (III) Negatively updating on someone's view = decreasing your confidence in X upon hearing their probability on X.
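The difference between adopting a credence and updating toward it can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, and the "update toward" rule is assumed to be a simple weighted average of the two credences, which is one of many possible ways to model partial updating.

```python
def adopt(own, other):
    """Strict deference: replace your credence with the other person's."""
    return other


def update_toward(own, other, weight=0.5):
    """Partial update: move a fraction `weight` of the way toward `other`.

    Assumption: linear averaging of probabilities, chosen for simplicity.
    """
    return own + weight * (other - own)


def direction(before, after):
    """Describe whether the credence in X went up, down, or stayed put."""
    if after > before:
        return "up"
    if after < before:
        return "down"
    return "unchanged"


# The other person leans towards X (credence 0.8) in both cases.
other = 0.8

# Case 1: you started out even more confident than they are.
own_high = 0.95
print(direction(own_high, adopt(own_high, other)))
print(direction(own_high, update_toward(own_high, other)))

# Case 2: you started out less confident than they are.
own_low = 0.6
print(direction(own_low, adopt(own_low, other)))
print(direction(own_low, update_toward(own_low, other)))
```

In the first case, both adopting and updating toward a credence of 0.8 lower your confidence in X, even though the other person leans towards X. In the second case, both raise it. So whether a view functions as positive or negative evidence depends on where you started, not only on which way the other person leans.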

I hope this comment was legible; please ask for clarification if anything was unclear :)

I wonder if it would be good to create another survey to get some data not only on who people update on but also on how they update on others (regarding AGI timelines or something else). I was thinking of running a survey where I ask EAs about their prior on different claims (perhaps related to AGI development), present them with someone's probability judgements and then ask them about their posterior. That someone could be a domain expert, non-domain expert (e.g., professor in a different field) or layperson (inside or outside EA).
