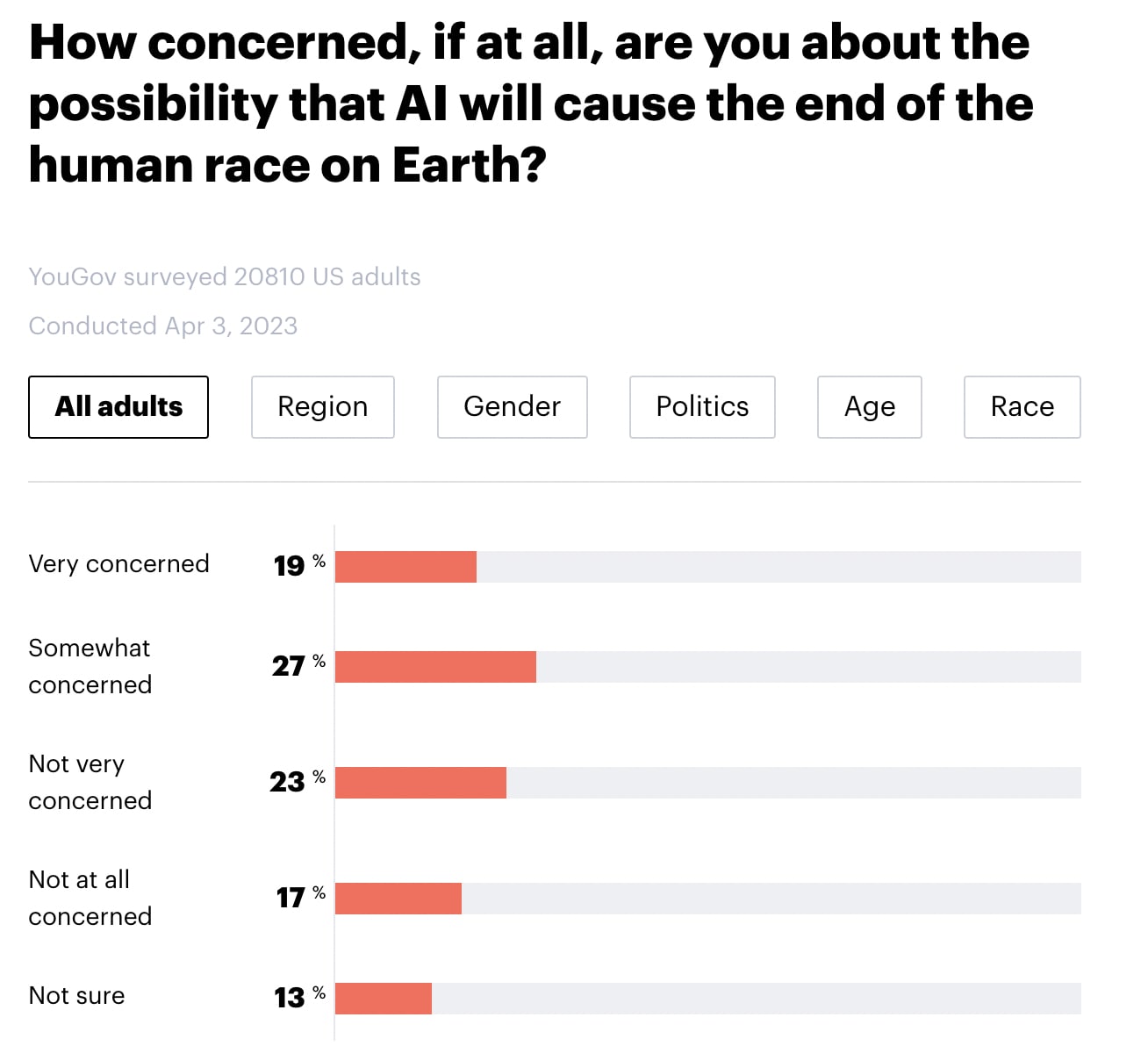

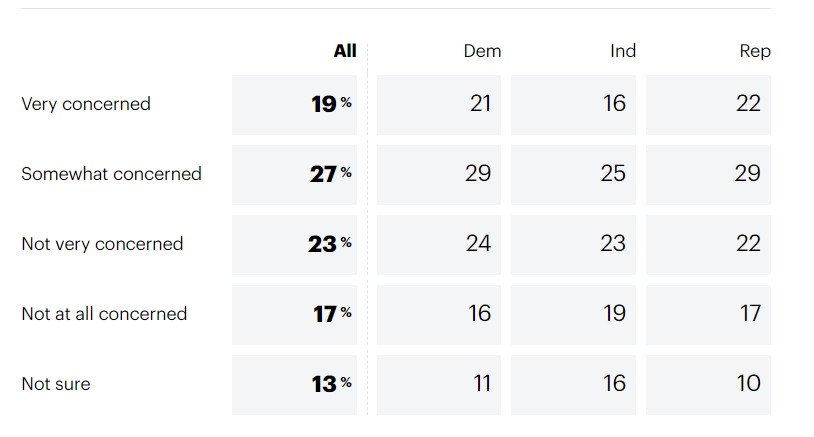

YouGov America released a survey of 20,810 American adults. Highlights below. Note that I didn't run any statistical tests, so any claims of group differences are just "eyeballed."

- 46% say that they are "very concerned" or "somewhat concerned" about the possibility that AI will cause the end of the human race on Earth (with 23% "not very concerned," 17% "not concerned at all," and 13% "not sure").

- There do not seem to be meaningful differences by region, gender, or political party.

- Younger people seem more concerned than older people.

- Respondents who identified as Black appear to be somewhat more concerned than those who identified as White, Hispanic, or Other.
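For a rough sense of how much sampling noise to expect behind the eyeballed comparisons above, here is a minimal sketch of a 95% margin of error for a single proportion. It assumes simple random sampling, which is not how YouGov's panel works (their samples are weighted, so the real uncertainty is likely somewhat larger). The subgroup size of 1,000 is a hypothetical number chosen for illustration, not a figure from the survey.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes simple random sampling; z=1.96 corresponds to roughly 95% coverage.
    """
    return z * math.sqrt(p * (1 - p) / n)

# Figures from the post: 46% concerned, 20,810 respondents.
overall = margin_of_error(0.46, 20810)

# Hypothetical subgroup of 1,000 respondents (illustrative only, not from the survey).
subgroup = margin_of_error(0.46, 1000)

print(f"full sample: +/- {overall:.1%}")
print(f"subgroup of 1,000: +/- {subgroup:.1%}")
```

The takeaway is that the headline number is quite precise under these assumptions, while subgroup estimates are noisier, so small gaps between demographic groups should be read with caution.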

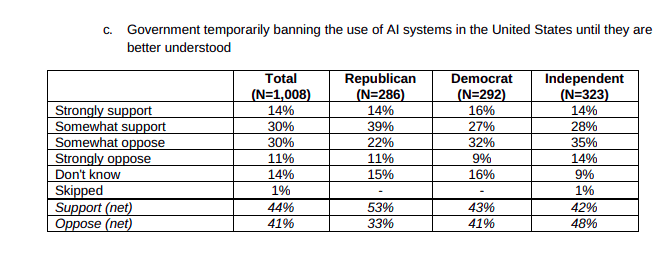

Furthermore, 69% of Americans appear to support a six-month pause in "some kinds of AI development". There doesn't seem to be a clear effect of age or race for this question, particularly if you lump "strongly support" and "somewhat support" into the same bucket. Note also that the question mentions that 1,000 tech leaders signed an open letter calling for a pause and cites their concern over "profound risks to society and humanity", which may have influenced participants' responses.

In my quick skim, I haven't been able to find details about the survey's methodology (see here for info about YouGov's general methodology) or the credibility of YouGov (EDIT: Several people I trust have told me that YouGov is credible, well-respected, and widely quoted for US polls).

See also:

Yeah, I think I sort of Aumann-absorbed the idea that AIs would skip over the human level because it has no special significance for them without wondering exactly how wide that human level should be. I think what I had in mind was competence greater than what I thought was humanly possible in one intellectual field that I respected, so more like physics or infosec than climbing. I think my prior was that it would be easier to build specialized systems, so that it had probably not crossed my mind that an AI could be superhuman by being a bit above average in way more fields than any human could.

Eliezer mentioned in a recent interview that he also considers himself to have been wrong about that. This could be a bit of a silver lining. If AI goes from capybara levels of smart straight to NZT-48 levels of smart, no one will be prepared. As it stands, no one will be prepared either, but it’s at least a bit less dignified to not be prepared now.