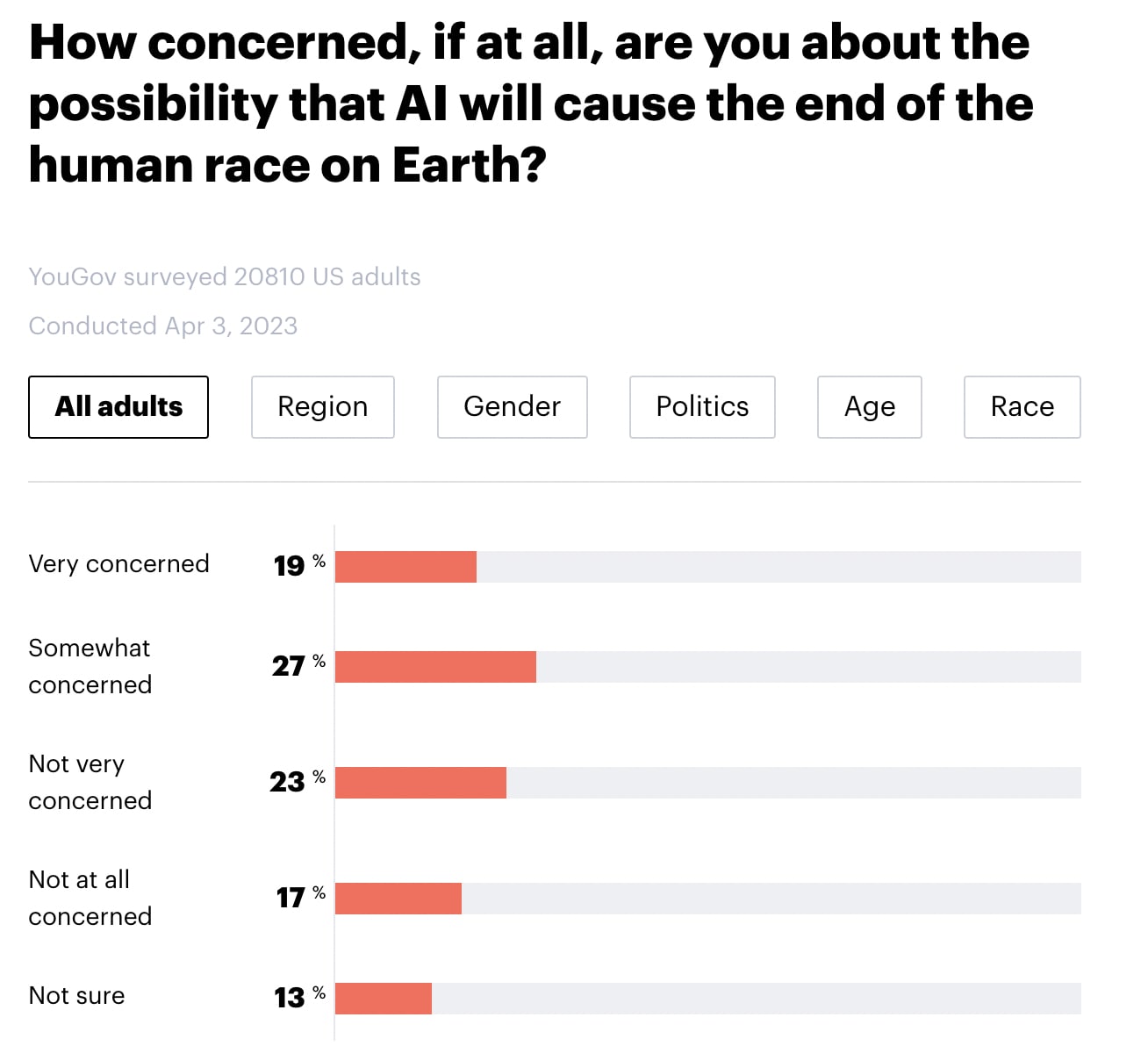

YouGov America released a survey of 20,810 American adults. Highlights below. Note that I didn't run any statistical tests, so any claims of group differences are just "eyeballed."

- 46% say that they are "very concerned" or "somewhat concerned" about the possibility that AI will cause the end of the human race on Earth (with 23% "not very concerned," 17% "not concerned at all," and 13% "not sure").

- There do not seem to be meaningful differences by region, gender, or political party.

- Younger people seem more concerned than older people.

- Black individuals appear to be somewhat more concerned than people who identified as White, Hispanic, or Other.
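Since the group comparisons above are eyeballed, here is a minimal sketch of how one might check a single difference with a two-proportion z-test, using only Python's standard library. It assumes independent simple random samples. YouGov's weighted opt-in panel is not that, so the resulting standard errors are probably too small (a design-effect adjustment would widen them). The shares and subgroup sizes in the example are hypothetical placeholders, not figures from the survey.

```python
import math


def two_proportion_z(p1: float, n1: int, p2: float, n2: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: the two population proportions are equal.

    Assumes independent simple random samples; ignores survey weights and design effects.
    """
    # Pooled proportion under the null hypothesis of no difference
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical example: 52% of 2,000 younger vs. 40% of 2,000 older respondents
z, p = two_proportion_z(0.52, 2000, 0.40, 2000)
print(f"z = {z:.2f}, p = {p:.2g}")
```

With real crosstabs you would substitute the published subgroup shares and effective sample sizes. Running many pairwise tests across region, age, gender, and race would also call for a multiple-comparisons correction.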

Furthermore, 69% of Americans appear to support a six-month pause in "some kinds of AI development." There doesn't seem to be a clear effect of age or race on this question, particularly if you lump "strongly support" and "somewhat support" into the same bucket. Note also that the question mentions that 1,000 tech leaders signed an open letter calling for a pause and cites their concern over "profound risks to society and humanity," which may have influenced participants' responses.

In my quick skim, I haven't been able to find details about the survey's methodology (see here for info about YouGov's general methodology) or the credibility of YouGov (EDIT: Several people I trust have told me that YouGov is credible, well-respected, and widely quoted for US polls).

See also:

This is a great poll and YouGov is a highly reputable pollster, but there is a significant caveat to note about the pause finding.

The way the question is framed provides information about "1000 technology leaders" who have signed a letter in favor of the pause but does not mention any opposition to the pause. I think this would push respondents to favor the pause. Ideally, the question would be framed more neutrally, presenting both support and oppose statements.

Looks like YouGov had the same concern and ran a second poll where they split respondents into three groups for that question (one with no framing, one with the support framing from the post above, and one with a support + oppose framing):

https://today.yougov.com/topics/technology/articles-reports/2023/04/15/ai-nuclear-weapons-world-war-humanity-poll

The simple / no context framing ("Would you support or oppose a six-month pause on some kinds of AI development?") got the lowest support, but still pretty high at 58%.

YouGov summarizes it as

Which sounds right to me, especially for total support (58%/61%/60% for no framing, support, support+oppose respectively).

It's nice that this topic doesn't seem to be polarized.

(@nadavb)

Don’t worry, there’s still time to polarize it

Any guess as to which direction on the Republican/Democratic axis?

My best guess is it would eventually become a liberal issue, but to be clear, this is not inevitable.

It's almost as if there's no difference between the two, until someone tells them which side their team supports.

Actually rather entertaining to me that independents are slightly less sure.

Independents are less decisive on almost everything.

I would caution people against reading too much into this. If you poll people about a concept they know nothing about ("AI will cause the end of the human race") you will always get answers that don't reflect real belief. These answers are very easily swayed, they don't cause people to take action like real beliefs would, they are not going to affect how people vote or which elites they trust, etc.

Largely agree, but results like this (1) indicate that if AI does become more salient the public will be super concerned about risks and (2) might help nudge policy elites to be more interested in regulating AI. (And it's not like there's some other "real belief" that the survey fails to elicit-- most people just don't have 'real beliefs' on most topics.)

Well, maybe to both parts; it's a good sign, but a weak one. Also concerns about response bias, etc., especially since YouGov doesn't specialize in polling these types of questions and there's no "ground truth" here to compare to.

This is an important warning but to be clear it also isn’t necessarily always the case. Rethink Priorities has studied low salience issue polling a lot and we think there are some good methods. I don’t think YouGov has been very good about using those methods here though.

This survey item may represent a circumstance under which YouGov estimates would be biased upwards. My understanding is that YouGov uses quota samples of respondents who have opted in to panel membership through non-random means, such as responses to advertisements and referrals. They do not have access to respondents without internet access, and those who do have access but are not internet-savvy are also less likely to opt in. If internet savviness is correlated with item response, then we should expect a bias in the point estimate. I would speculate that internet savviness is positively correlated with worrying about AI risk because internet-savvy people understand the issue better (though I could imagine arguments in the opposite direction; e.g., people who are afraid of computers don't use them).
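The mechanism described here can be illustrated with a toy simulation. Every number in it is hypothetical (the share of internet-savvy people, their assumed higher rate of concern, and their assumed higher opt-in rate). It only shows that when joining the panel and answering "concerned" share a common cause, an unweighted opt-in sample overstates the population share.

```python
import random


def simulate_opt_in_bias(n_pop: int = 200_000, seed: int = 0) -> tuple[float, float]:
    """Return (true population share concerned, share concerned in the opt-in sample).

    All parameters are hypothetical: savvy people are assumed to be both more
    likely to be concerned and more likely to join an online panel.
    """
    rng = random.Random(seed)
    population = []
    for _ in range(n_pop):
        savvy = rng.random() < 0.6               # assumed: 60% internet-savvy
        p_concerned = 0.55 if savvy else 0.35    # assumed link between savviness and the item
        concerned = rng.random() < p_concerned
        p_join = 0.05 if savvy else 0.01         # assumed opt-in rates
        joined = rng.random() < p_join
        population.append((concerned, joined))

    true_share = sum(c for c, _ in population) / n_pop
    sample = [c for c, j in population if j]     # only people who opted in
    sample_share = sum(sample) / len(sample)
    return true_share, sample_share


true_share, sample_share = simulate_opt_in_bias()
print(f"population: {true_share:.3f}, opt-in sample: {sample_share:.3f}")
```

With these made-up parameters the population share is about 47%, while the opt-in sample comes out several points higher (near 53% in expectation). Weighting on demographics corrects this only to the extent that the weighting variables capture internet savviness.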

To give a concrete example, Sturgis and Kuha (2022) report that YouGov's estimate of problem gambling in the U.K. was far higher than estimates from firms that used probability sampling that can reach people who don't use the internet, especially when the interviews were conducted in person. The presumed reasons are that online gambling is more addictive and that people at higher risk of problem gambling prefer online gambling to in-person gambling.

It’s happening!!

There is often quite a large gap between what these kinds of surveys seem to imply and actual voter behaviour. We see this in climate change all the time. Consider the recent survey that reported that over half of young people think humanity is doomed (with regard to climate change): https://www.bbc.com/news/world-58549373.

Yet we are not seeing a huge surge in support for European green parties.

I'm not sure what's going on with these surveys, but it's an interesting comparison.

This is not necessarily contradictory since European green parties do not always improve on the climate situation. E.g., they tend to be very opposed to nuclear energy even though it might be quite helpful to combat climate change.

Agreed that YouGov are a reputable pollster. That said, I think the wording of their concern question has some unfortunate features which likely bias the results (which is common even among reputable pollsters).

Asking "How concerned, if at all, are you about the possibility that AI will cause the end of the human race on Earth" is, on its face, ambiguous between (i) asking how concerned you are given your estimate of how probable the outcome is and (ii) asking how concerned you are about the possible outcome. It doesn't distinguish how probable respondents think the outcome is from how concerning they think the outcome would be were it to occur. As such, respondents may answer that they are "very concerned" about "the possibility that AI will cause the end of the human race on Earth" to indicate that they believe this possibility (AI causing the end of the human race) is very bad. This is particularly so given that respondents typically do not answer questions completely literally but (as in normal human communication) interpret them pragmatically, based on what they think the questioners are likely to be interested in asking and on what they themselves want to signal. I would predict that this is slightly elevating reported levels of concern.

Looks to me like Yudkowsky was wrong and there was a fire alarm. (To be fair, if you had asked me in 2017 there is no way I'd have predicted that AI risk is as mainstream as it is now.)

It could still turn out that the current concern will last for ~1 year, and then 2–4 years from now we get dangerously powerful and agentic AGI. Then there’s no fire alarm anymore, and people dismiss it as a stale 2023 problem until it’s too late.

That's certainly possible, though I think it's more likely that the public will become more and more concerned as more and more powerful AIs are deployed.

Covid has become less dangerous, but public concern about it has dropped off, it seems to me, unreasonably steeply. So all I think I can learn from that is that public concern will not increase proportionally over the course of years, though it might still increase and may spike at times.

I’m surprised, though, that AI progress has made a stop around the human level. GPT-3 seemed a bit less smart than the average human at what it’s doing; GPT-4 seems a bit smarter than the average human. But there has not been a discontinuity where it completely skips the human level. That seems weird to me. Maybe it’s because of the human training data, or because the AI is trying to imitate human-level intelligence, but perhaps there’s also an actual soft ceiling somewhere in this area, so that progress will stall for long enough for us to collectively react.

The "human range" at various tasks is much larger than one would naively think because most people don't obsess over becoming good at any metric, let alone the types of metrics on which GPT-4 seems impressive. Most people don't obsessively practice anything. There's a huge difference between "the entire human range at some skill" and "the range of top human experts at some skill." (By "top experts," I mean people who've practiced the skill for at least a decade and consider it their life's primary calling.)

GPT-4 hasn't entered the range of "top human experts" for domains that are significantly more complex than stuff for which it's easy to write an evaluative function. If we made a list of the world's best 10,000 CEOs, GPT-4 wouldn't make the list. Once it ranks at 9,999, I'm pretty sure we're <1 year away from superintelligence. (The same argument goes for top-10,000 Lesswrong-type generalists or top-10,000 machine learning researchers who occasionally make new discoveries. Also top-10,000 novelists, but maybe not on a gamifiable metric like "popular appeal.")

I think it's plausible that the top-10,000 human range is fairly wide. E.g., in a pretty quantifiable domain like chess, the 10,000th-ranked player is not even an international master (the level below GM) and will be rated ~2,400 Elo,[1] while the top player is ~2,850, for a difference of >450 Elo points. If I'm reading the AI Impacts analysis correctly, this type of progress took on the order of 7-8 years.

The bottom 1% of chess players is rated maybe 500.[2] So measured by Elo, the entire practical human range is about 2,400 (2,900 - 500), or about 5.3x the difference across the top-10k range.
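The arithmetic in this comment can be reproduced in a few lines. The sketch below also adds something the comment does not: it plugs the 450-point gap into the standard Elo expected-score formula, E = 1 / (1 + 10^((R_B - R_A) / 400)). The inputs mix FIDE figures at the top with chess.com figures at the bottom, as the footnotes acknowledge, so the ratio is rough.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score of player A against player B (1.0 = wins every game)."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


# Approximate figures quoted in the comment (FIDE at the top, chess.com at the bottom)
top_player, rank_10000 = 2850, 2400
human_max, bottom_1pct = 2900, 500

top_gap = top_player - rank_10000        # 450 Elo points
full_range = human_max - bottom_1pct     # 2,400 Elo points

print(f"range ratio: {full_range / top_gap:.2f}")  # 5.33
print(f"expected score, #1 vs #10,000: {expected_score(top_player, rank_10000):.2f}")  # 0.93
```

Under the Elo model, then, a 450-point gap means the stronger player would be expected to score roughly 93% of the available points: large, but not total, dominance.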

Estimates put the number of people who have ever played chess in the hundreds of millions, so this isn't an artifact of few people playing chess.

I wouldn't be surprised if there are similarly large differences at the top in other domains.

For example, the difference between the 10,000th ML researcher and the best is probably the difference between a recent PhD graduate from a decent-but-not-amazing ML university and a Turing award winner.

[1] Deduced from the table here. By assumption, almost all top chess players have a FIDE rating. https://en.wikipedia.org/wiki/FIDE_titles#cite_ref-fideratings_5-0

[2] https://i.redd.it/s5smrjgqhjnx.png (using chess.com figures rather than FIDE because I assume FIDE ratings are pretty truncated; very casual players do not go to clubs).

Great points! I think "top-1,000" would've worked better for the point I wanted to convey.

I had the intuition that there are more (aspiring) novelists than competitive game players, but on reflection, I'm not sure that's correct.

I think the AI history for chess is somewhat unusual compared to the games where AI made headlines more recently because AI spent a lot longer within the range of human chess professionals. We can try to tell various stories about why that is. On the "hard takeoff" side of arguments, maybe chess is particularly suited for AI and maybe humans including Kasparov simply weren't that good before chess AI helped them understand better strategies. On the "slow(er) takeoff" side, maybe the progress in Go or poker or Diplomacy looks more rapid mostly because there was a hardware overhang and researchers didn't bother to put a lot of effort into these games before it became clear that they can beat human experts.

Yeah, I think I sort of Aumann-absorbed the idea that AIs would skip over the human level because it has no special significance for them, without wondering exactly how wide that human level would be. I think what I had in mind was competence greater than what I thought humanly possible in one intellectual field that I respected, so more like physics or infosec than climbing. My prior was that it would be easier to build specialized systems, so it had probably not crossed my mind that an AI could be superhuman by being a bit above average in far more fields than any human could be.

Eliezer mentioned in a recent interview that he also considers himself to have been wrong about that. This could be a bit of a silver lining. If AI goes from capybara levels of smart straight to NZT-48 levels of smart, no one will be prepared. As it stands, no one will be prepared either, but it’s at least a bit less dignified to not be prepared now.

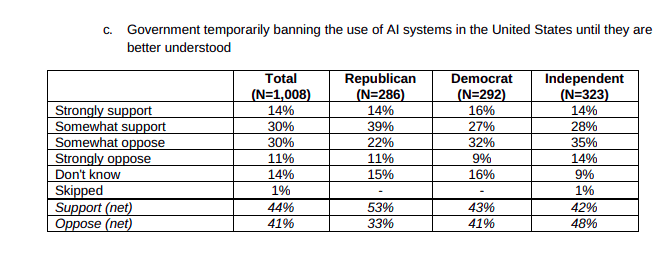

Ipsos conducted a survey recently about concerns in the US population in general. 71% of respondents answered that they were concerned about "The impact of AI (Artificial Intelligence) on jobs and society," but other areas that seem less pressing from an EA perspective scored higher. For example:

What is more, respondents seem to be taking the impact of AI rather lightly compared to other concerns: AI "only" gets 28% of respondents very concerned, lower than the roughly 40% who are very concerned about other issues. The survey also asked a question very similar to the one YouGov asked: "Do you support or oppose the following policies? The government temporarily banning the use of AI systems in the United States until they are better understood." The results are very different, though that might be due to phrasing: only 44% of respondents support the temporary ban. I think the YouGov survey might be overestimating the prevalence and degree of concern about AI.

You can find the full survey here.

The FiveThirtyEight podcast scrutinizes this poll (the segment starts 30 minutes in).

https://fivethirtyeight.com/features/politics-podcast-the-politics-of-ai/

Disappointingly shallow analysis, though.

I think we should run a survey to investigate whether people outside EA think reducing extinction risk is right or wrong, and how many people think the net value of the human future is positive or negative. Although reducing x-risk may be the mainstream view in EA, we should respect outsiders' views.

Sorry, it seems some of you don't agree with my opinion. Would you share your objections? I'm more of a negative utilitarian, so I'm really not sure how likely it is that reducing x-risk would turn out not to be good. I think people in EA can't be too confident in ourselves: lots of non-EA philosophers and activists (VHEMT) have also thought a lot about human extinction, so we should at least know and respect their opinions.

Hi, I was the disagree-voter. I disagree because whether shifting probability mass from extinction and an empty future to a flourishing future is good (under empirical, moral, etc. uncertainty) is a very different question from whether people think it's good, and I consider the answer to the first question to be (clearly) yes. If you're uncertain about something, it's worth engaging with others who have thought about it, but substantially deferring to a survey goes too far in this case. In particular, some people go funny in the head when thinking about extinction.

Wow, this is much higher support than I would have ever imagined for the topic. I guess Terminator is pretty convincing as a documentary!

Nice.