Katja Grace, 8 March 2023

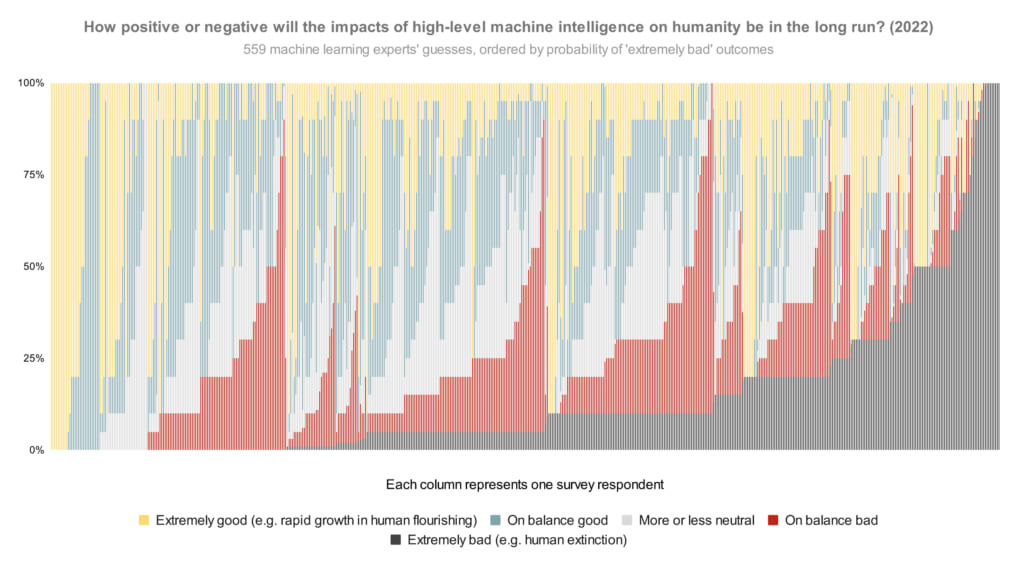

In our survey last year, we asked publishing machine learning researchers how they would divide probability over the future impacts of high-level machine intelligence between five buckets ranging from ‘extremely good (e.g. rapid growth in human flourishing)’ to ‘extremely bad (e.g. human extinction)’.1 The median respondent put 5% on the worst bucket. But what does the whole distribution look like? Here is every person’s answer, lined up in order of probability on that worst bucket:

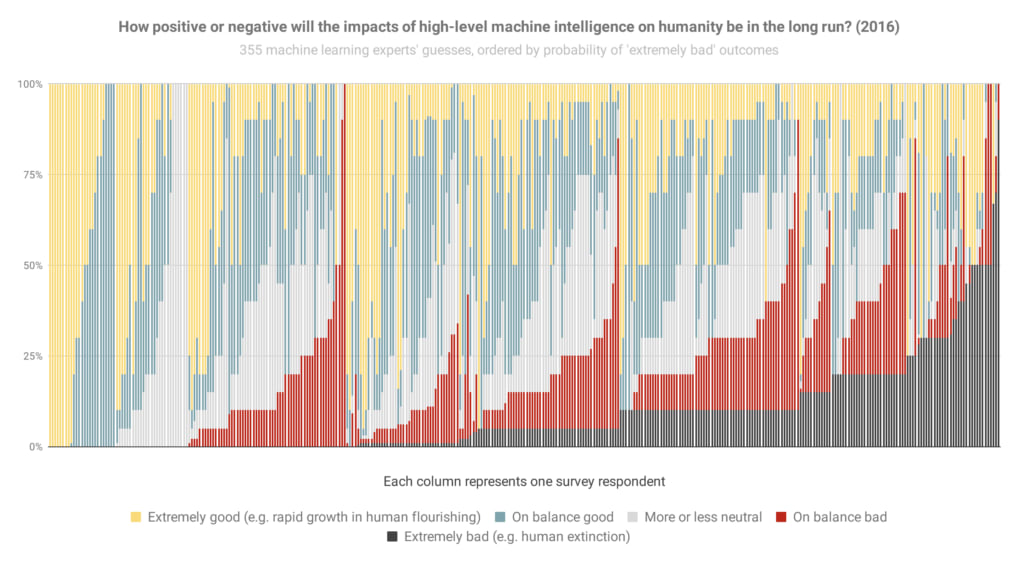

And here’s basically that again from the 2016 survey (though it looks like it was sorted slightly differently when optimism was equal), so you can see how things have changed:

The most notable change to me is the new big black bar of doom at the end: people who put at least 50% on extremely bad outcomes have gone from 3% of respondents to 9% in six years.

Here are the overall areas dedicated to different scenarios in the 2022 graph (equivalent to averages):

- Extremely good: 24%

- On balance good: 26%

- More or less neutral: 18%

- On balance bad: 17%

- Extremely bad: 14%

That is, between them, these researchers put 31% of their credence on AI making the world markedly worse.
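The 31% figure is just the sum of the averages for the two bad buckets listed above. As a quick check, here is a minimal Python sketch using those rounded figures. The variable names are illustrative and are not taken from the survey's own analysis code.

```python
# Average probability per bucket, in percent, as listed in the post (rounded).
bucket_averages = {
    "Extremely good": 24,
    "On balance good": 26,
    "More or less neutral": 18,
    "On balance bad": 17,
    "Extremely bad": 14,
}

# Credence on AI making the world markedly worse: the two 'bad' buckets.
bad_share = bucket_averages["On balance bad"] + bucket_averages["Extremely bad"]

# Credence on the two 'good' buckets, for comparison.
good_share = bucket_averages["Extremely good"] + bucket_averages["On balance good"]

# The rounded figures need not sum to exactly 100.
total = sum(bucket_averages.values())

print(f"bad: {bad_share}%, good: {good_share}%, total of rounded figures: {total}%")
```

The listed figures total 99% rather than 100%, presumably because each one is rounded.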

Some things to keep in mind in looking at these:

- If you hear ‘median 5%’ thrown around, that refers to how the researcher right in the middle of the opinion spectrum thinks there’s a 5% chance of extremely bad outcomes. (It does not mean, ‘about 5% of people expect extremely bad outcomes’, which would be much less alarming.) Nearly half of people are at ten percent or more.

- The question illustrated above doesn’t ask about human extinction specifically, so you might wonder if ‘extremely bad’ includes a lot of scenarios less bad than human extinction. To check, we added two more questions in 2022 explicitly about ‘human extinction or similarly permanent and severe disempowerment of the human species’. For these, the median researcher gave answers of 5% and 10%. So my guess is that a lot of the extremely bad bucket in this question is pointing at human-extinction levels of disaster.

- You might wonder whether the respondents were selected for being worried about AI risk. We tried to mitigate that possibility by usually offering money for completing the survey ($50 for those in the final round, after some experimentation), and describing the topic in very broad terms in the invitation (e.g. not mentioning AI risk). Last survey we checked in more detail—see ‘Was our sample representative?’ in the paper on the 2016 survey.
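The first point above, the difference between ‘median 5%’ and ‘about 5% of people’, can be made concrete with a small Python sketch. The answers below are hypothetical numbers made up purely for illustration. They are not survey data, and the helper `share_at_least` is likewise just an illustrative name.

```python
from statistics import median

# HYPOTHETICAL answers (percent probability on 'extremely bad'), one per
# respondent. Made up for illustration only; these are NOT survey data.
answers = [0, 0, 1, 2, 5, 5, 10, 10, 25, 50]


def share_at_least(values, threshold):
    """Fraction of respondents whose answer is at or above the threshold."""
    return sum(v >= threshold for v in values) / len(values)


# The answer of the respondent in the middle of the sorted list.
median_answer = median(answers)

# How many respondents sit at or above a given probability.
share_10_plus = share_at_least(answers, 10)
share_50_plus = share_at_least(answers, 50)

print(median_answer, share_10_plus, share_50_plus)
```

In this made-up sample the median is 5%, yet 40% of respondents put 10% or more on the worst bucket. The median describes one person's answer, not the fraction of people who expect a bad outcome.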

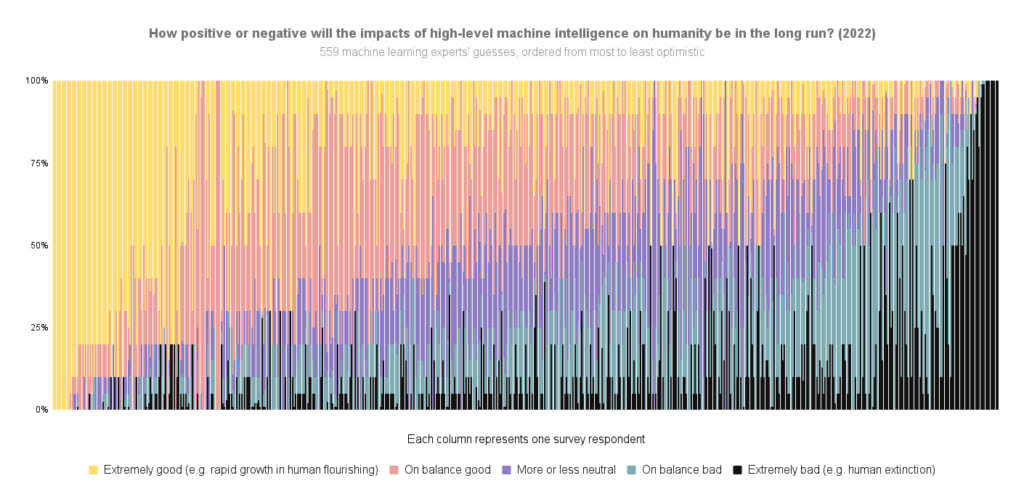

Here’s the 2022 data again, but ordered by overall optimism-to-pessimism rather than probability of extremely bad outcomes specifically:

For more survey takeaways, see this blog post. For all the data we have put up on it so far, see this page.

See here for more details.

Thanks to Harlan Stewart for helping make these 2022 figures, Zach Stein-Perlman for generally getting this data in order, and Nathan Young for pointing out that figures like this would be good.

There is no consensus in the industry that AI is on the road to inevitable catastrophe, as the graphs above indicate. The median researcher still considers the field a net positive. But let's set that aside for now and talk only about AI doomers.

Most researchers predicting bad outcomes are also highly confident that their own exit from the industry would do nothing to prevent those outcomes. Somebody will get the bomb first. The day that person gets the bomb, unless you're a 100% doomer, humanity's chances are better the more "alignment-ready" the person who has it is, and if you had to pick an entity to receive the bomb today you could easily do worse than OpenAI. At least they would have the good sense to turn it off before deciding to do anything else dumb with it.

The "other actors aren't here yet" argument is literally just a punt. They won't stay behind forever. When they catch up, we'll be in the exact same boat, except with the "overwhelming tech lead by institutions nominally pursuing alignment" solutions struck from the book: a net loss in humanity's chances of survival, however thin you think that slice is.

The notion that people simply haven't "taken a step back, reassessed, and rethought their position" ascribes a breathtaking amount of NPC-quality to people in the field, as if they somehow manage to believe in an impending apocalypse and still not spend any time thinking about it. The reason that doomers don't quit the industry themselves, a few deranged cultists aside, is the same reason people have never succeeded in baiting Yudkowsky into endorsing anti-AI terrorism: the impact you have on the final outcome is somewhere between net negative and net zero, as you will inevitably be replaced with another researcher who cares even less about alignment.

The only way quitting the industry actually leads to a better outcome is if you decide that the game is already 100% lost, you only want to maximize years of ignorant bliss before the fall, and you're certain that your competence is so high and your persuasive power so low relative to replacement that you personally quitting represents a net delay on the doom clock. The same argument applies, rescaled, to individual organizations themselves backing out of the game. There's a reason very few people think you can put the genie back in the bottle.