The Rapid Effective Action Development Initiative (READI) recently completed a meta-review of interventions that influence animal-product consumption.

The work was led by Emily Grundy and conducted in collaboration with EA-aligned researchers and organisations.

We screened 11,989 papers and identified 18 relevant reviews.

We planned to convert effect sizes from the reviews to a common metric and conduct meta-meta-analyses. However, we had to deviate from our protocol because so few reviews reported the effect sizes of primary studies.

Instead, we used vote counting based on direction of effect. This is an acceptable statistical synthesis method when meta-analysis of effect estimates is not possible and studies do not report consistent effect measures or data.
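To illustrate how vote counting based on direction of effect works, here is a minimal sketch. It is not the team's analysis code. It assumes each finding has already been coded as "benefit", "harm" or something unclear (e.g. "mixed"), excludes the unclear ones, reports the proportion showing benefit, and applies an exact two-sided sign test (a binomial test against 50%), which is a common companion to this method. The function names and the example tallies are hypothetical.

```python
from math import comb


def sign_test_two_sided(n_benefit: int, n_harm: int) -> float:
    """Exact two-sided sign test: binomial test of n_benefit vs n_harm with p = 0.5."""
    n = n_benefit + n_harm
    k = min(n_benefit, n_harm)
    # Probability of a split at least this lopsided in one tail, under "no effect"
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    # Double for a two-sided test, capping at 1 (happens when the split is even)
    return min(1.0, 2 * tail)


def vote_count(directions: list[str]) -> dict:
    """Tally hypothetical direction-of-effect codes; anything not benefit/harm is excluded."""
    n_benefit = directions.count("benefit")
    n_harm = directions.count("harm")
    n_excluded = len(directions) - n_benefit - n_harm
    n = n_benefit + n_harm
    return {
        "benefit": n_benefit,
        "harm": n_harm,
        "excluded": n_excluded,
        "proportion_benefit": n_benefit / n if n else float("nan"),
        "p_value": sign_test_two_sided(n_benefit, n_harm) if n else float("nan"),
    }


if __name__ == "__main__":
    # Hypothetical example: 7 findings favour the intervention, 1 does not, 1 is mixed
    print(vote_count(["benefit"] * 7 + ["harm"] + ["mixed"]))
    # -> benefit 7, harm 1, excluded 1, proportion_benefit 0.875, p_value 0.0703125
```

Note that vote counting only uses the direction of each effect, not its size or precision. This is why it is a fallback when effect estimates cannot be pooled, rather than a substitute for meta-analysis.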

Summary of evidence for interventions

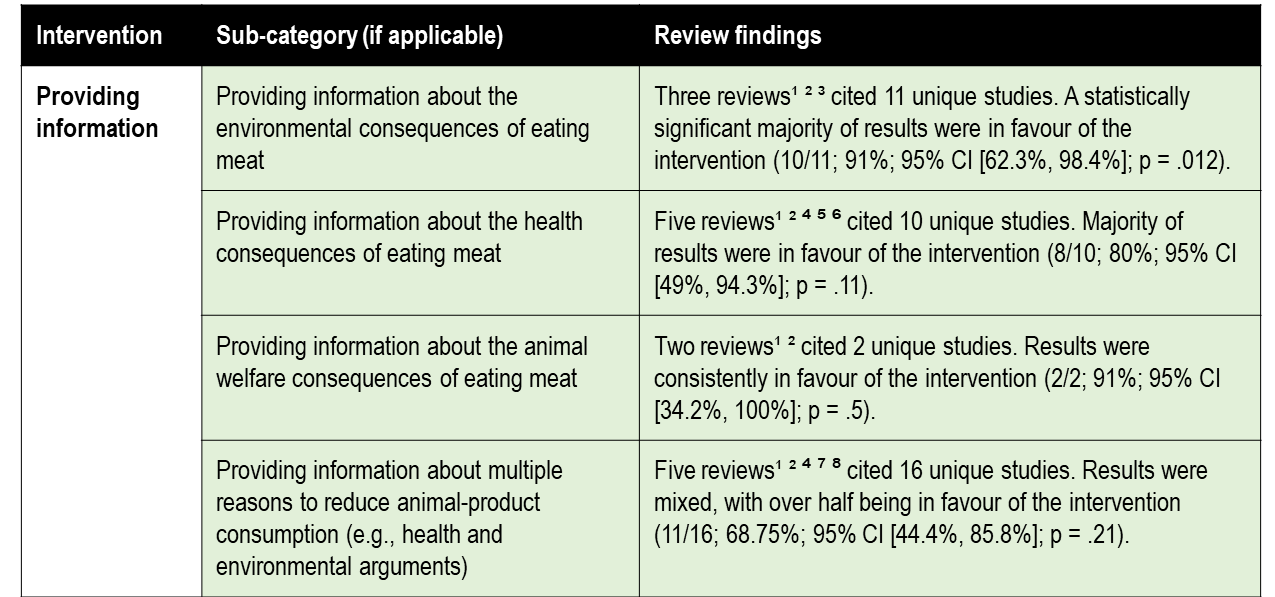

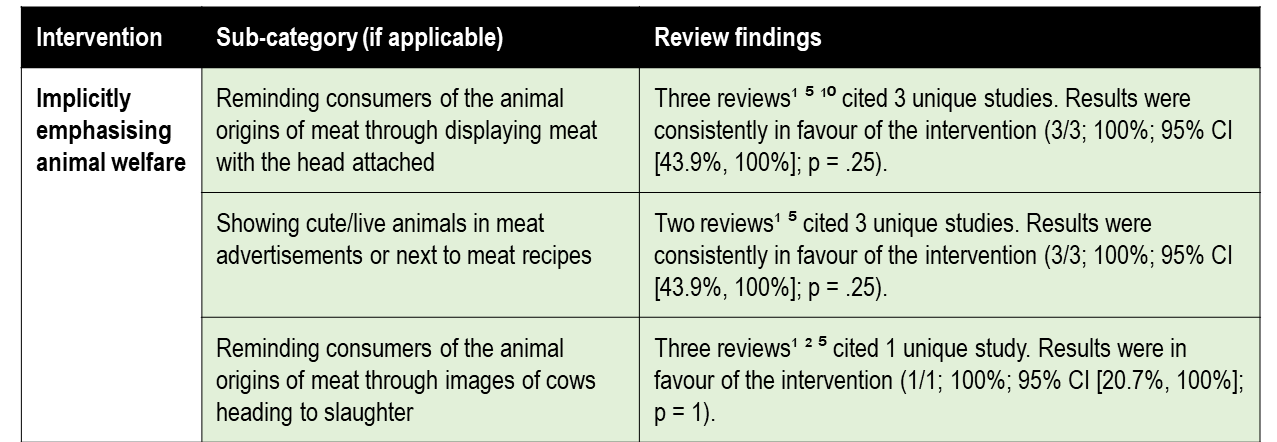

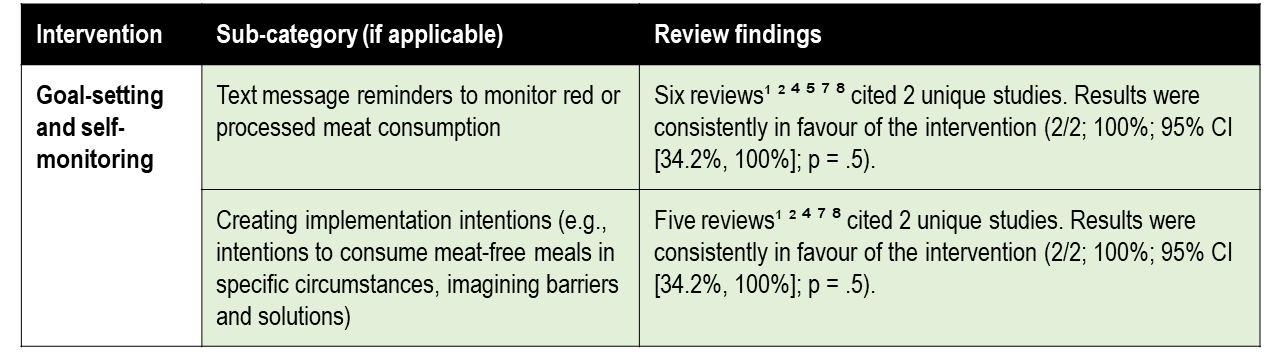

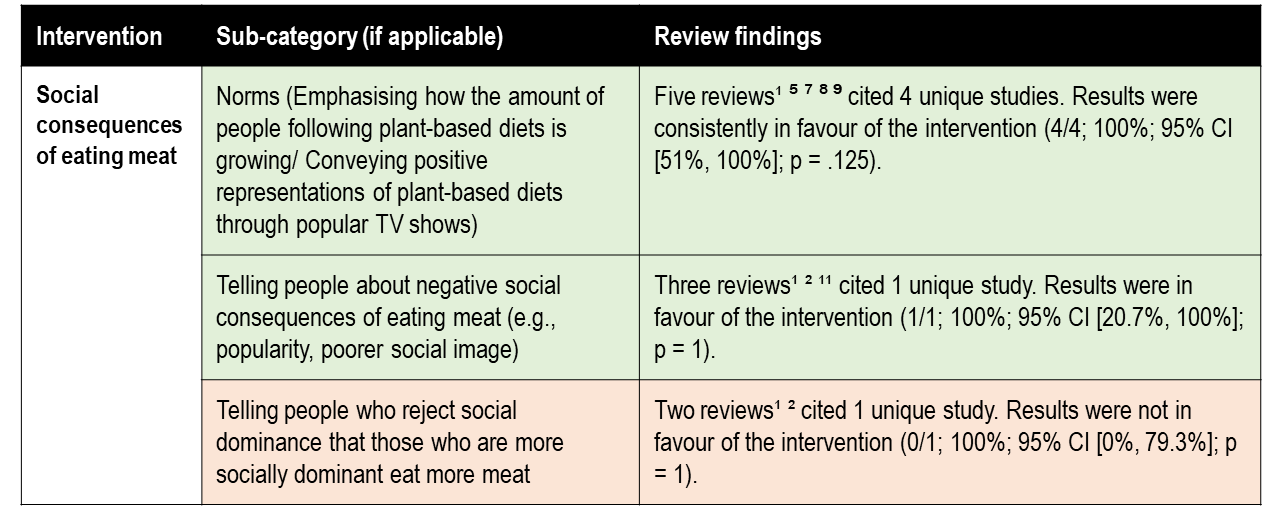

Below is a quick visual summary of the evidence for each of the interventions covered (see PowerPoint here, or table here).

Providing information

Implicitly emphasising animal welfare

Goal-setting and self-monitoring

Social consequences of eating meat

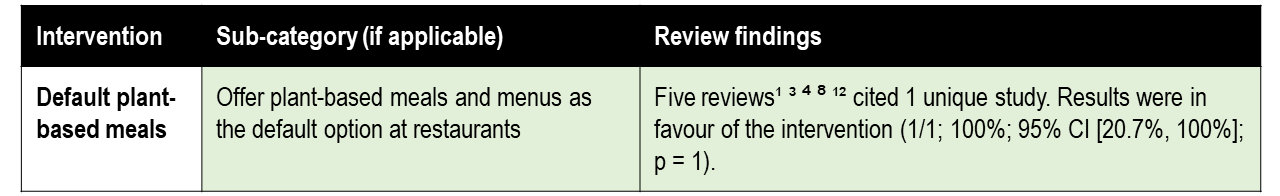

Default plant-based meals

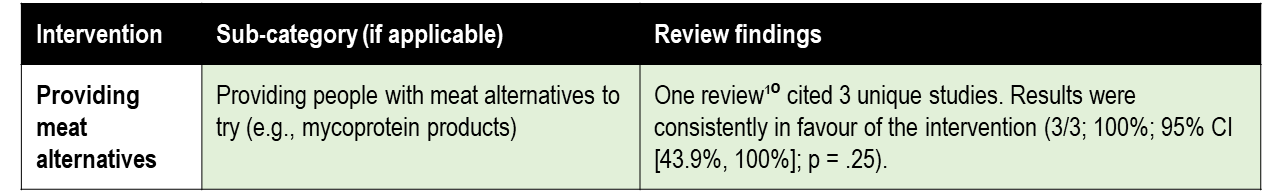

Providing meat alternatives

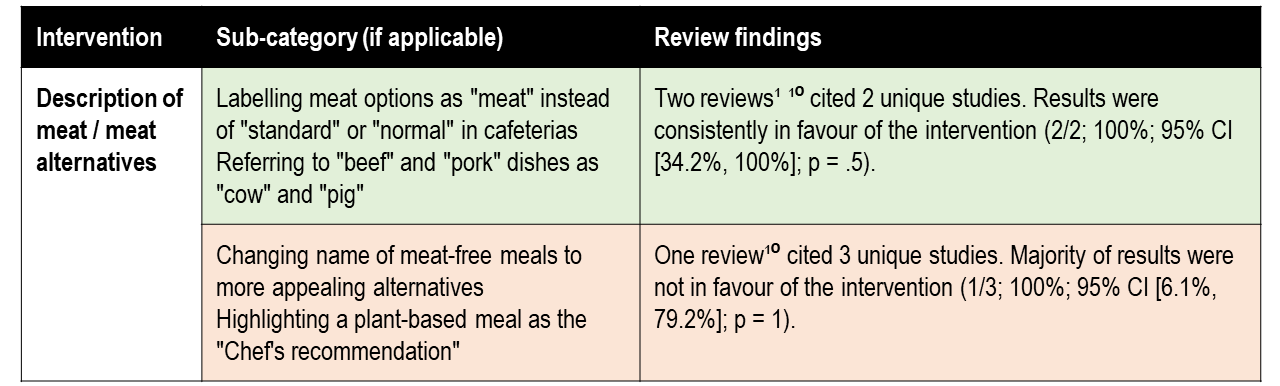

Description of meat / meat alternatives

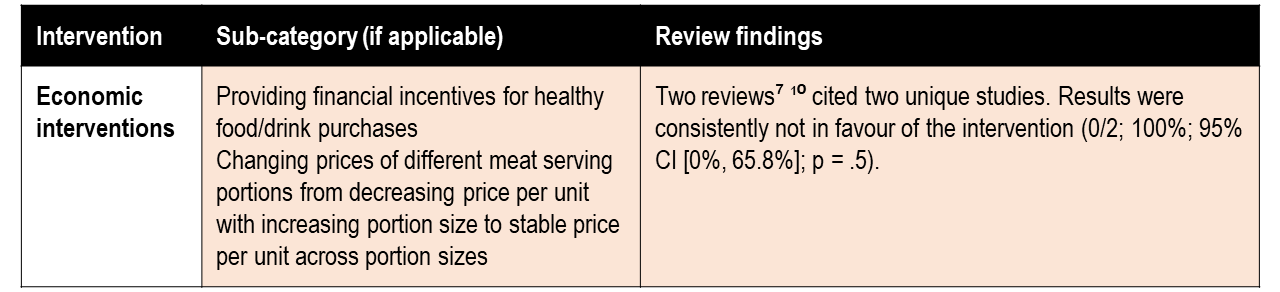

Economic interventions

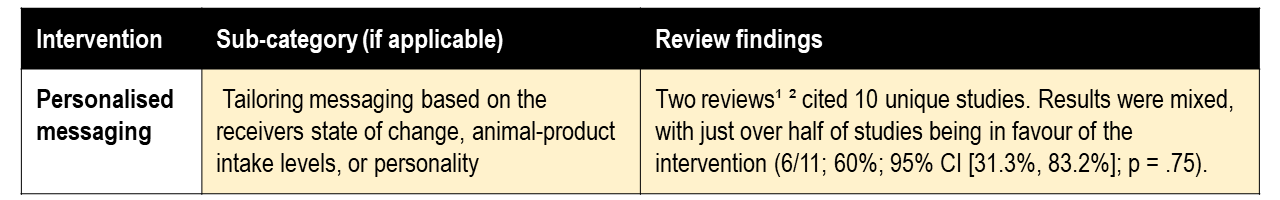

Personalised messaging

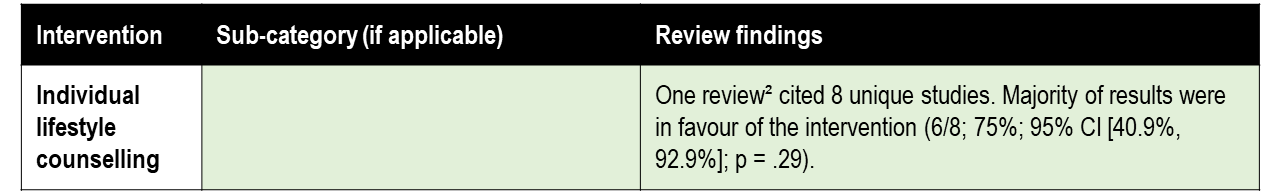

Individual lifestyle counselling

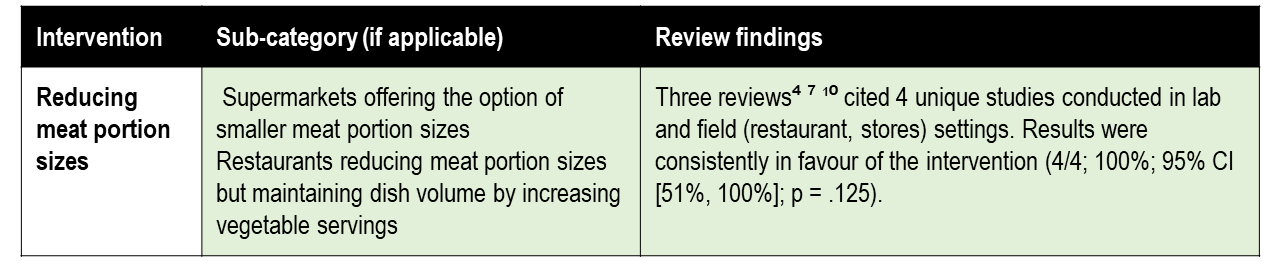

Reducing meat portion sizes

References

¹Harguess and colleagues (2020), ²Bianchi and colleagues (2018), ³Hartmann and Siegrist (2017), ⁴Veul (2018), ⁵Graça and colleagues (2019), ⁶Valli and colleagues (2019), ⁷Taufik and colleagues (2019), ⁸Wynes and colleagues (2018), ⁹Nisa and colleagues (2019), ¹⁰Bianchi and colleagues (2018b), ¹¹Sanchez-Sabate and Sabaté (2019), ¹²Byerly and colleagues (2018). Full citations are available in the preprint.

A detailed academic report on the project is being revised for submission. Here is a preview (PDF here):

Depending on feedback, we may release a longer plain-language summary in the future or develop a related database to help researchers and practitioners build on this research.

Feedback

If you have any feedback on this post, our research, or anything else, please complete our short feedback form or comment below.

Previous and ongoing READI work

We have previously completed a review and public summary of 'What works to promote charitable donations?'

In the Survey of COVID-19 Responses to Understand Behaviour (SCRUB), we aim to provide current and future policymakers with actionable insights into public attitudes and behaviours relating to the COVID-19 pandemic. Over the past 16 months, we (mainly Emily Grundy and Alexander Saeri) have been receiving ongoing funding (as employees of BehaviourWorks Australia) from the Victorian Government in Australia to run the survey periodically. Our results directly inform COVID-19 response policy. Several related publications (e.g., 1, 2, 3) have been accepted in academic journals, and more are planned once data embargoes are lifted.

Our next project is likely to focus on Improving Institutional Decision Making (IIDM).

Opportunities to engage with future research

If relevant, please consider applying for Michael's PhD scholarships for EA projects in psychology, health, IIDM, or education.

Help us with our website

We are evaluating the priority of improving our website. As part of this we are curious about how our current website affects our impact. Please complete this short form to help.

Acknowledgements

We would like to thank everyone involved in completing and supporting the research: Emily A. C. Grundy¹, Peter Slattery¹, Alexander K. Saeri¹, Kieren Watkins², Thomas Houlden³, Neil Farr, Henry Askin, Joannie Lee³, Alexandria Mintoft-Jones, Sophia Cyna⁴, Alyssa Dziegielewski⁵, Romy Gelber³, Amy Rowe⁶, Maya B. Mathur⁷, Shane Timmons⁸,⁹, Kun Zhao¹, Matti Wilks¹⁰, Jacob Peacock¹¹, Jamie Harris¹², Daniel L. Rosenfeld¹³, Chris Bryant¹⁴, David Moss¹⁵, Michael Noetel¹⁶.

¹BehaviourWorks Australia, Monash Sustainable Development Institute, Monash University; ²School of Agriculture and Food, University of Melbourne; ³University of New South Wales; ⁴University of Sydney; ⁵Macquarie University; ⁶University of Adelaide; ⁷Quantitative Sciences Unit, Stanford University; ⁸Economic and Social Research Institute, Dublin, Ireland; ⁹School of Psychology, Trinity College Dublin, Dublin, Ireland; ¹⁰Yale University; ¹¹Director of The Humane League Labs; ¹²Sentience Institute; ¹³Department of Psychology, University of California, Los Angeles; ¹⁴Department of Psychology, University of Bath, UK; ¹⁵Faculty of Education, Canterbury Christ Church University; ¹⁶School of Health and Behavioural Sciences, Australian Catholic University

We thank Annet Hoek and Jo Anderson for their contributions to the research direction of this project and the manuscript.

Disclaimer

I wrote this summary quickly with relatively little oversight from the rest of the research team. I take responsibility for any mistakes or misrepresentation.
