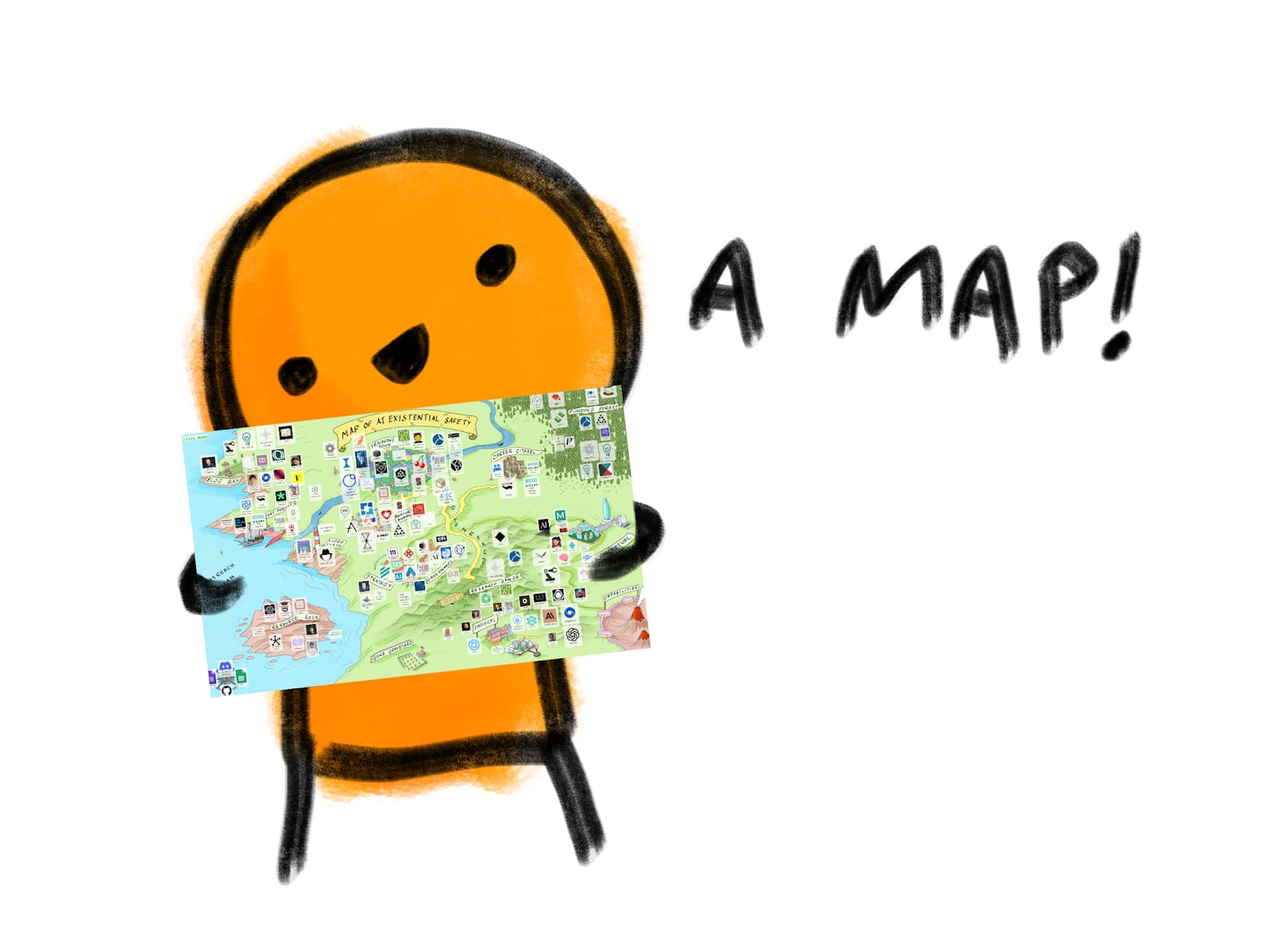

The URL is aisafety.world.

The map displays a reasonably comprehensive list of organizations, people, and resources in the AI safety space, including:

- research organizations

- blogs/forums

- podcasts

- YouTube channels

- training programs

- career support

- funders

You can hover over each item to get a short description, and click on each item to go to the relevant web page.

The map is populated by this spreadsheet, so if you have corrections or suggestions, please leave a comment.

There's also a Google Form and a Discord channel for suggestions.

Thanks to plex for getting this project off the ground, and Nonlinear for motivating/funding it through a bounty.

PS: If you find this helpful, here are some other projects you may be interested in (these have nothing to do with me):

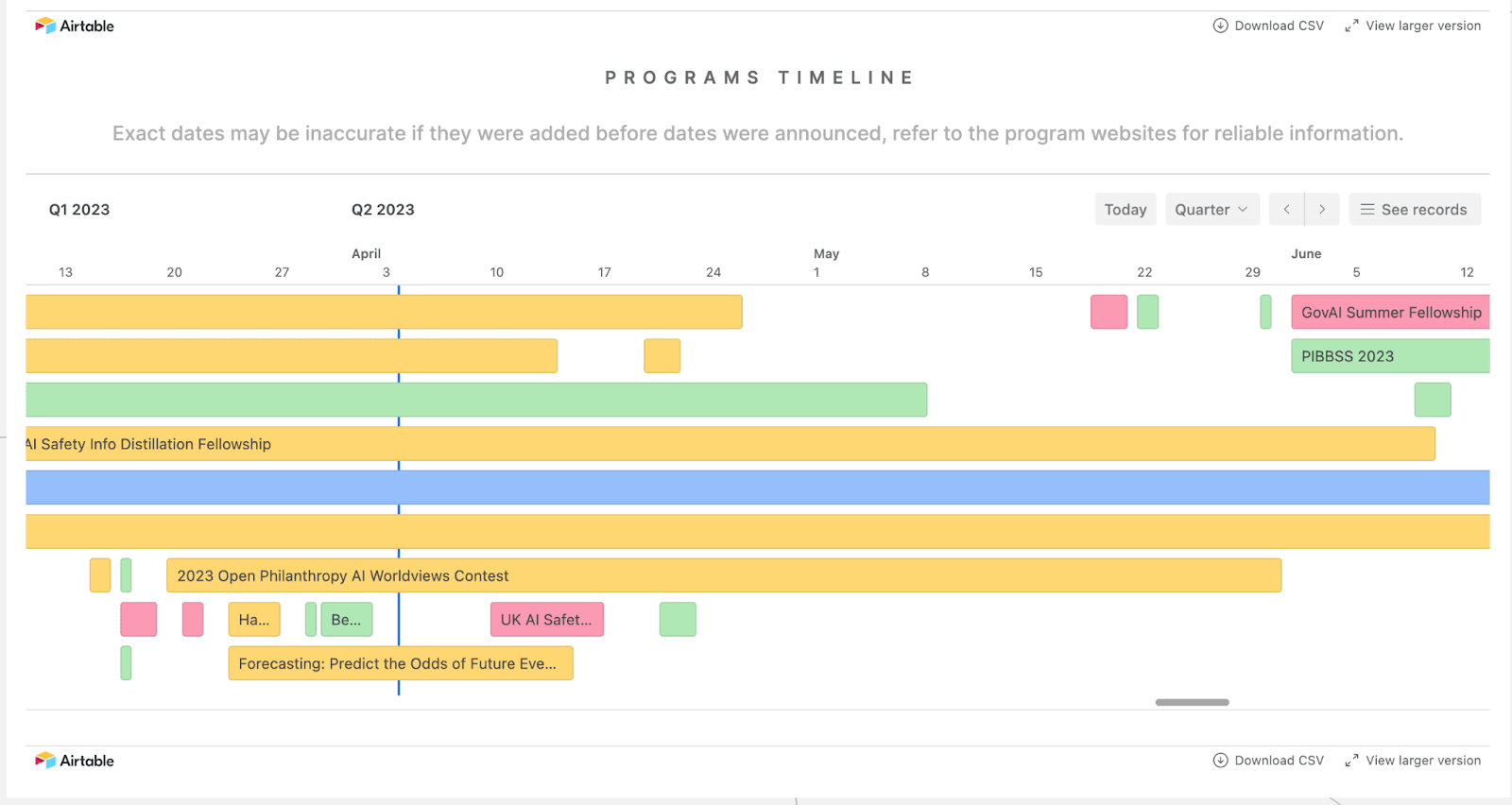

aisafety.training gives a timeline of available AI safety training opportunities (made by plex, using AISS's database).

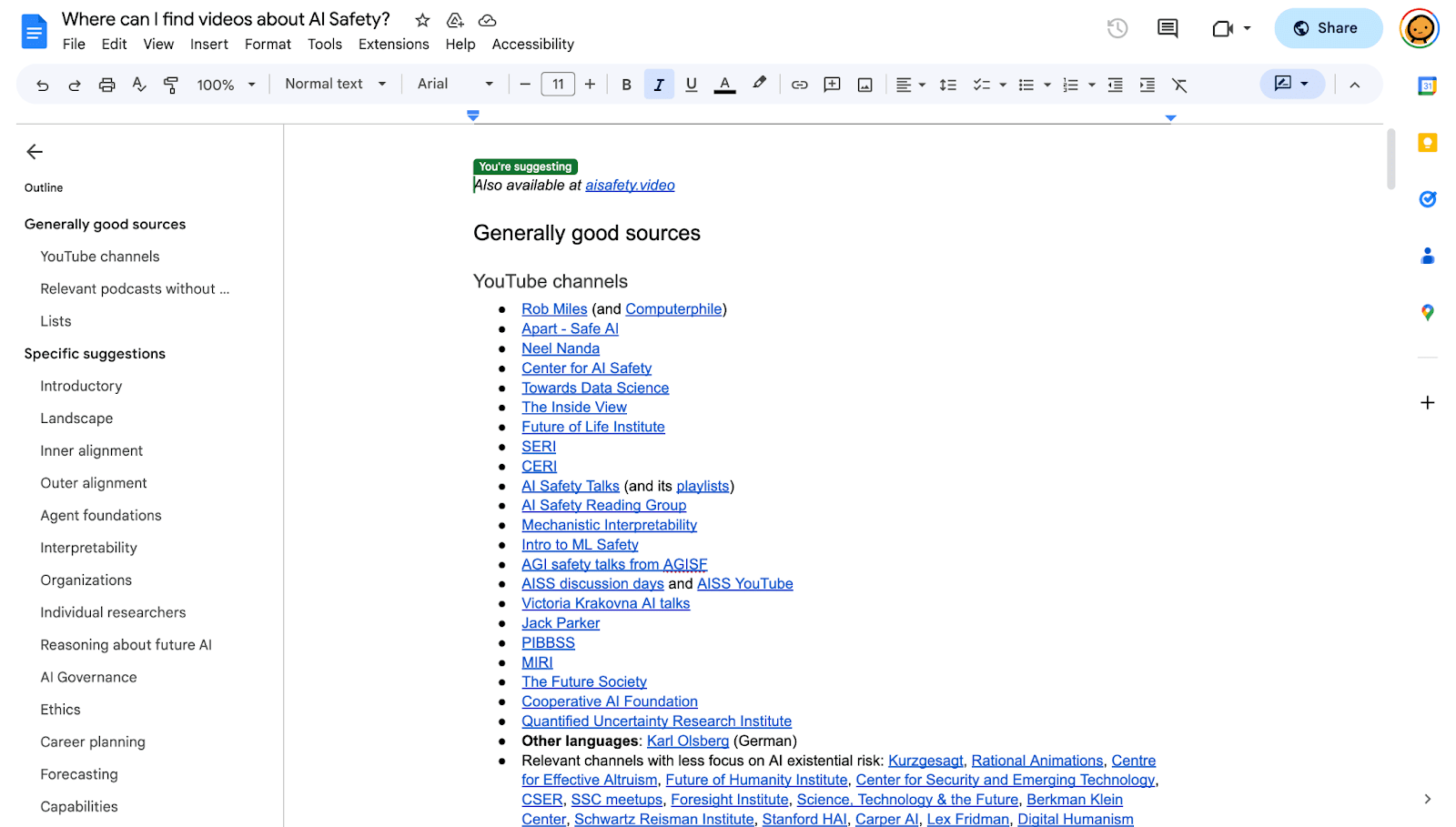

aisafety.video gives a list of video/audio resources on AI safety by Jakub Kraus.

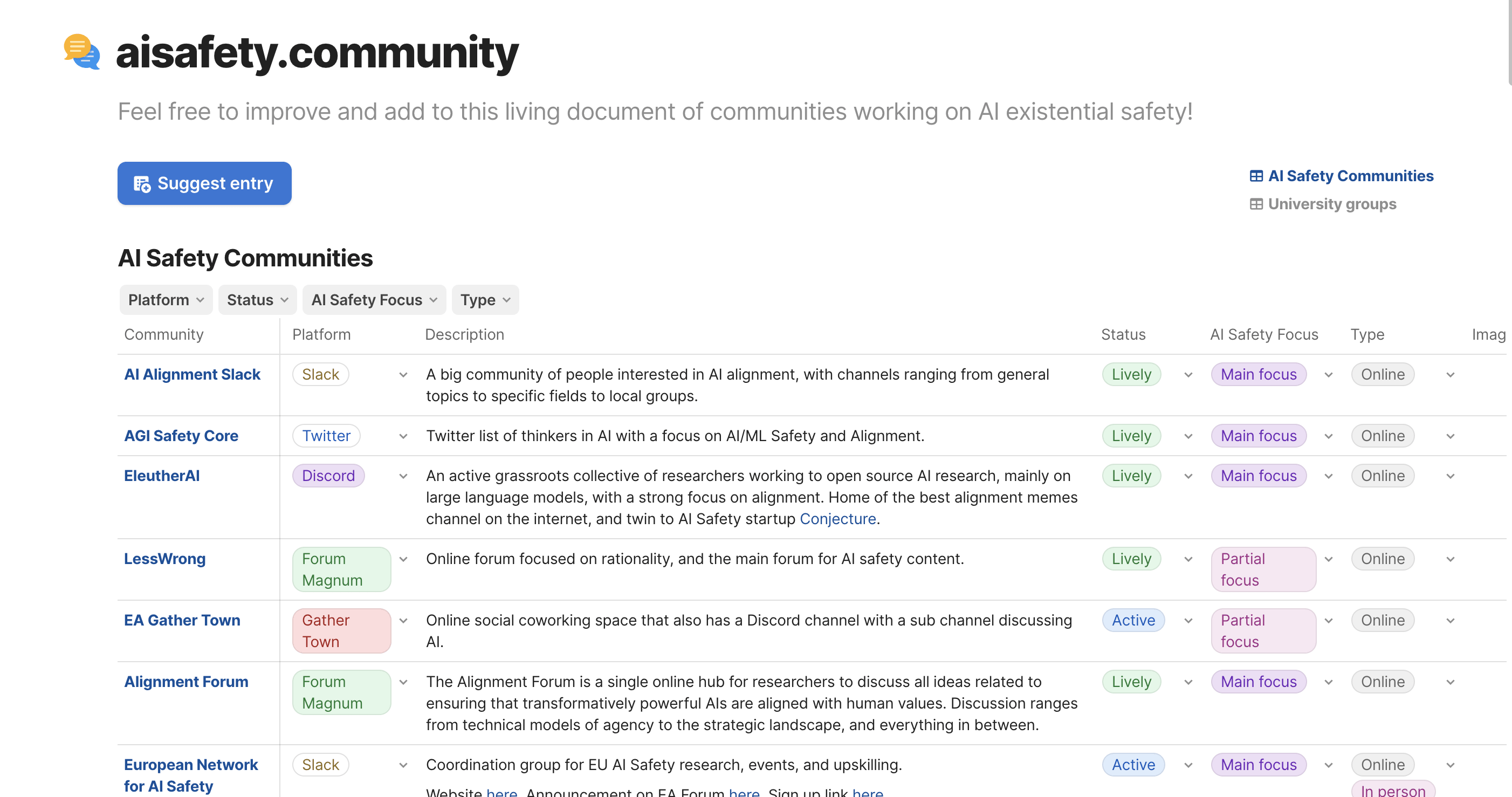

aisafety.community lists communities working on AI safety (made by volunteers in Alignment Ecosystem Development).

And plex wanted me to mention that Alignment Ecosystem Development has monthly calls to collect volunteers. So... go do that maybe!

This is great, thanks for doing it! I think that what I would call 'coordination' work, like this, has been, and still is, undervalued relative to, say, training. I am definitely in favour of more curation, simplification, synthesis, and prioritisation work.

What is the plan for resourcing this in the future? Is it funded or volunteer-based work?

Nonlinear funded it through a bounty, but I'm unaware of any future plans. If anyone has any ideas for improvement or expansion, feel free to reach out.