I love seeing cool visualizations (maps) of interesting intellectual fields. I’m also a big fan of making lists!

Accordingly, I compiled a bunch of maps of fields that I thought were interesting. Please comment if you know of more such maps, and I’ll include them.

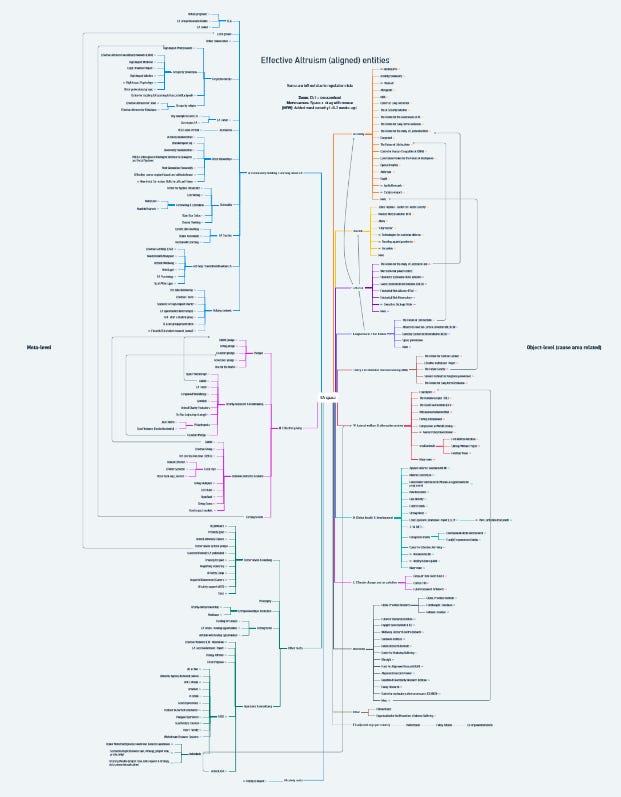

Scott Alexander’s map of Effective Altruism (2020)

mariekedev’s mindmap of EA organisations (2023)

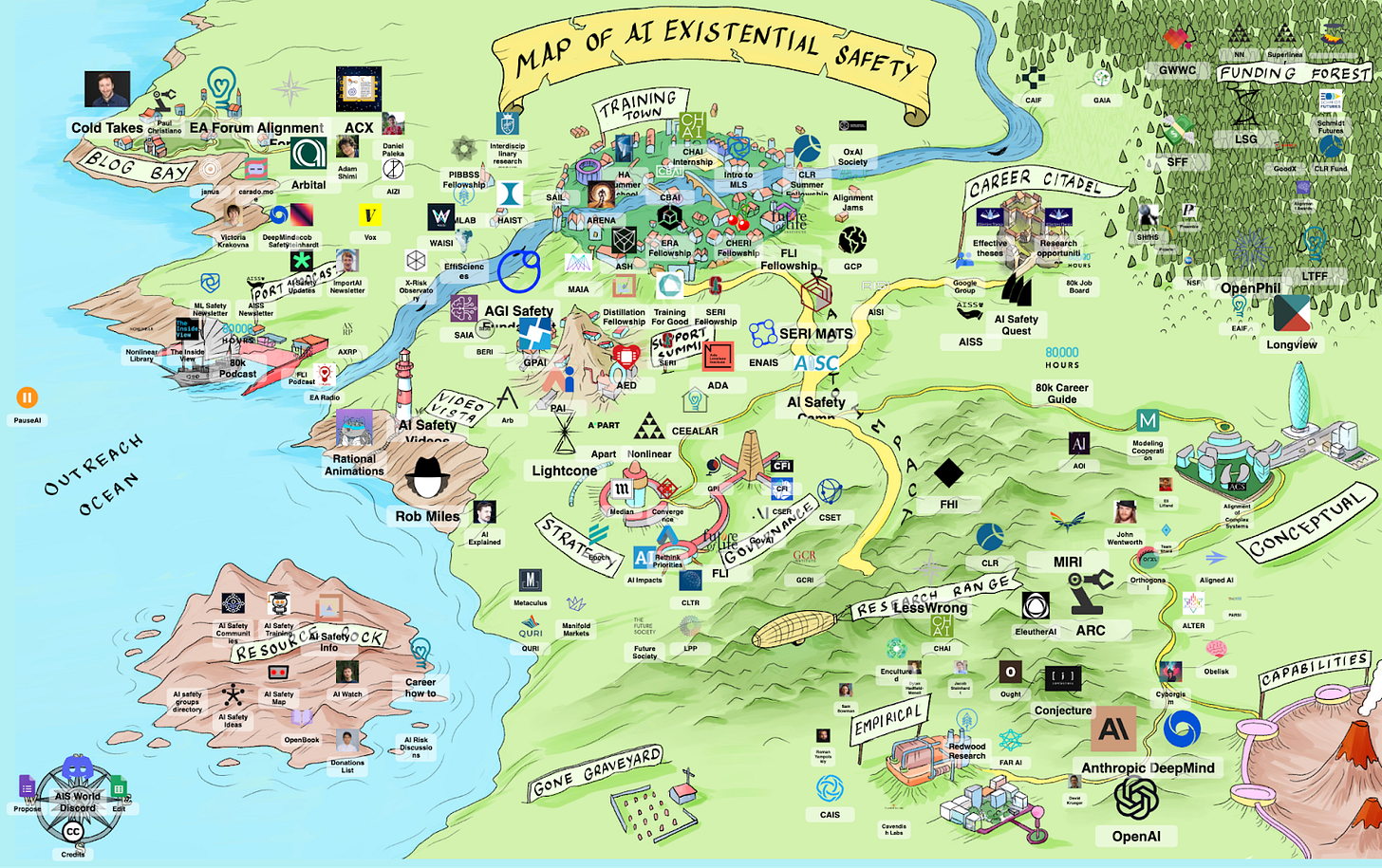

Hamish Doodles’ aisafety.world (2023)

On a side note, I would be very keen for someone to create a similar map of relevant organizations in the biosecurity & pandemic preparedness space. I plan to post a minimum viable product (bullet point list) soon.

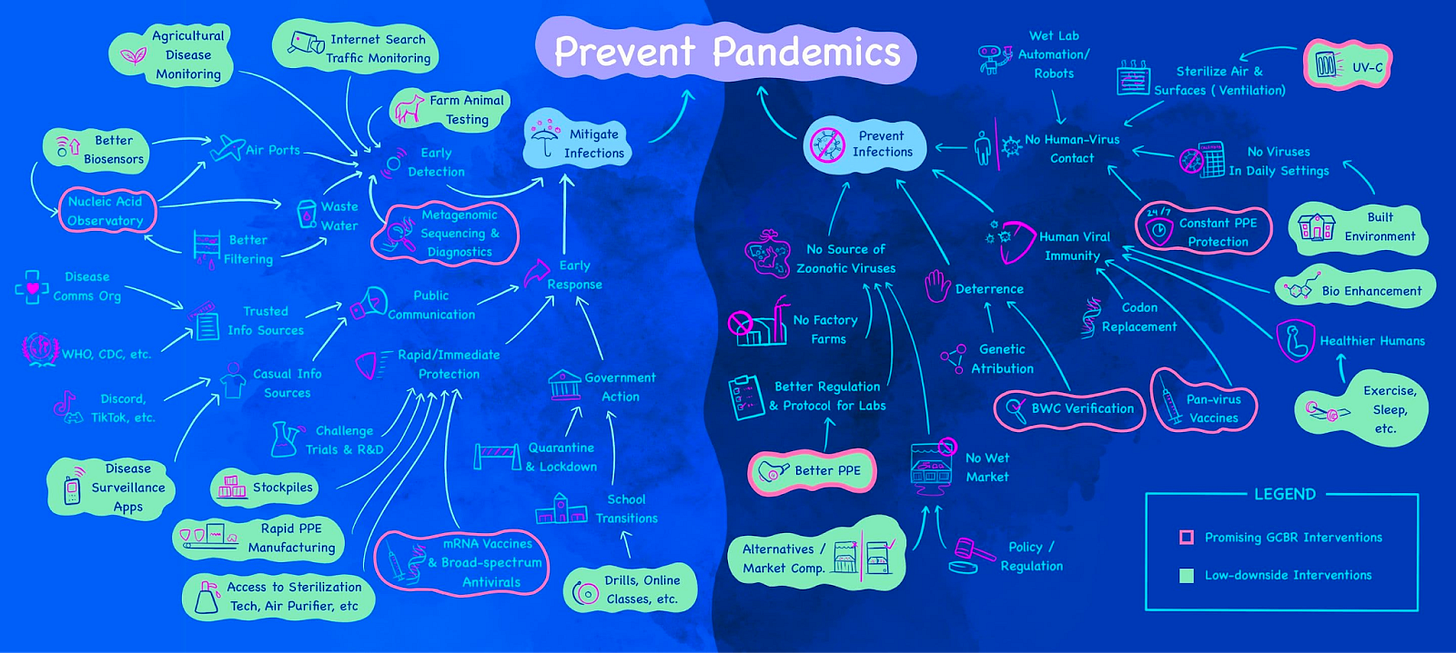

James Lin’s map of biosecurity interventions (2022)

Scott Alexander’s map of the rationality community (2014)

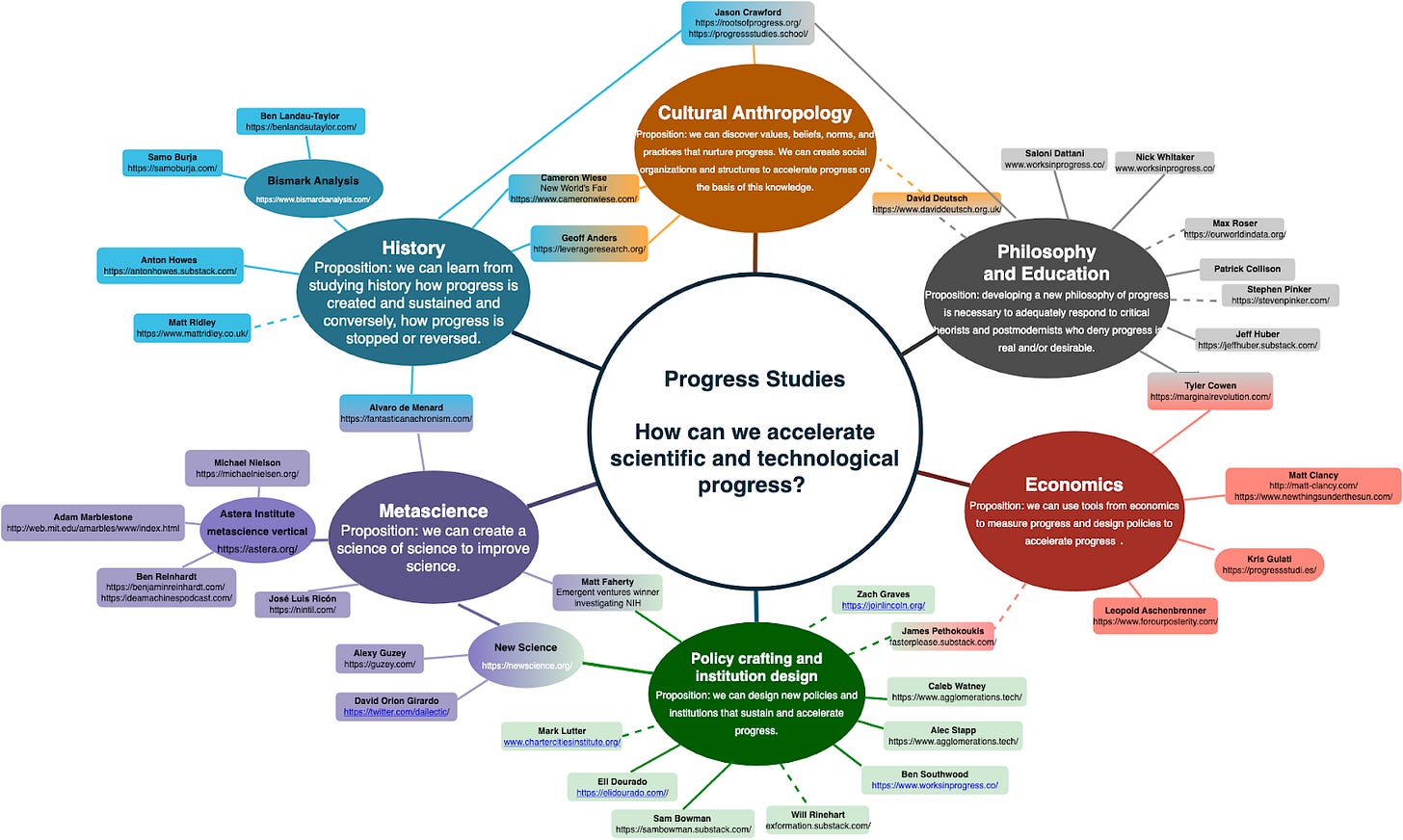

Dan Elton’s map of progress studies (2021)

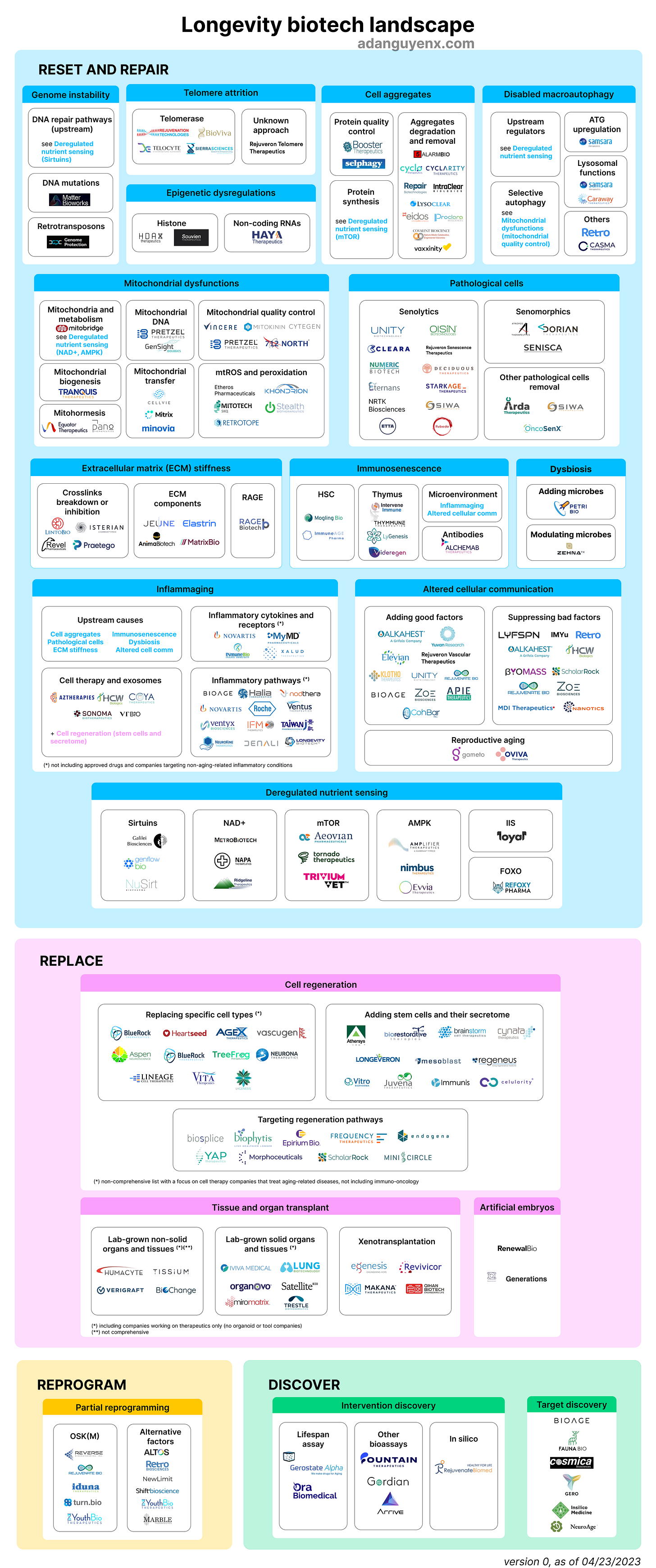

Ada Nguyen’s map of the Longevity Biotech Landscape (2023)

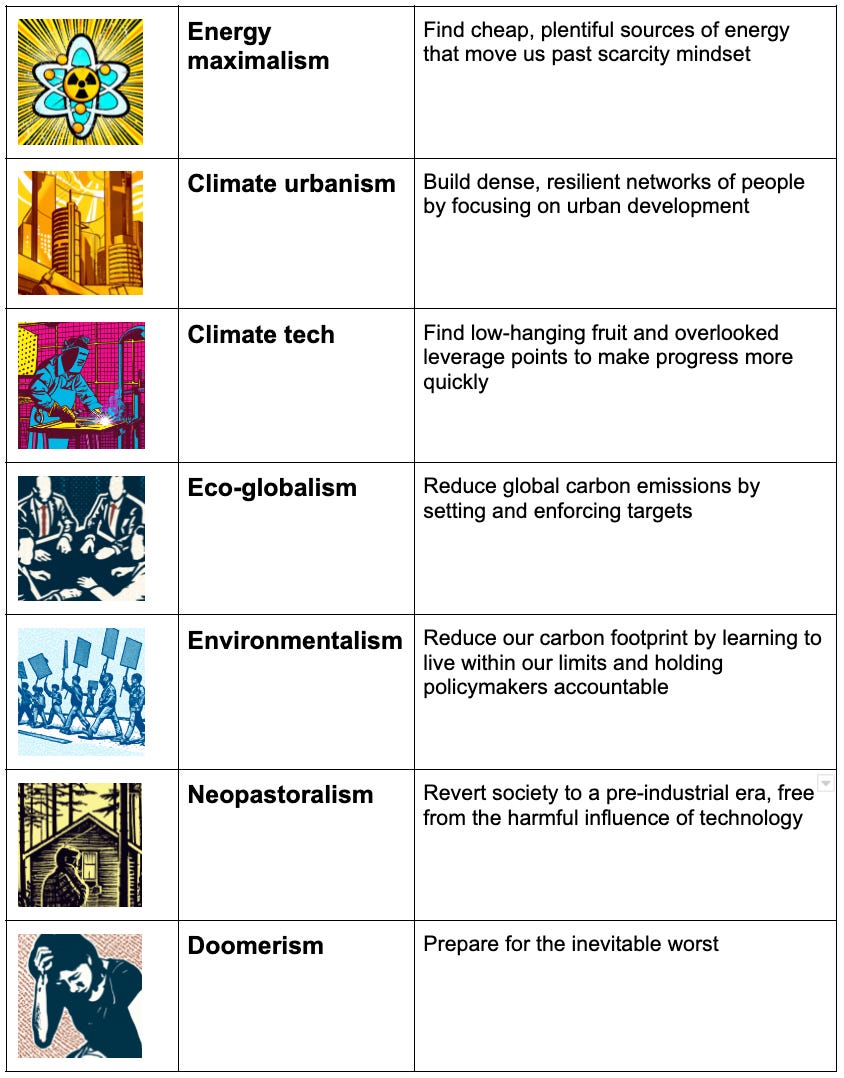

Nadia Asparouhova’s map of climate tribes (2022)

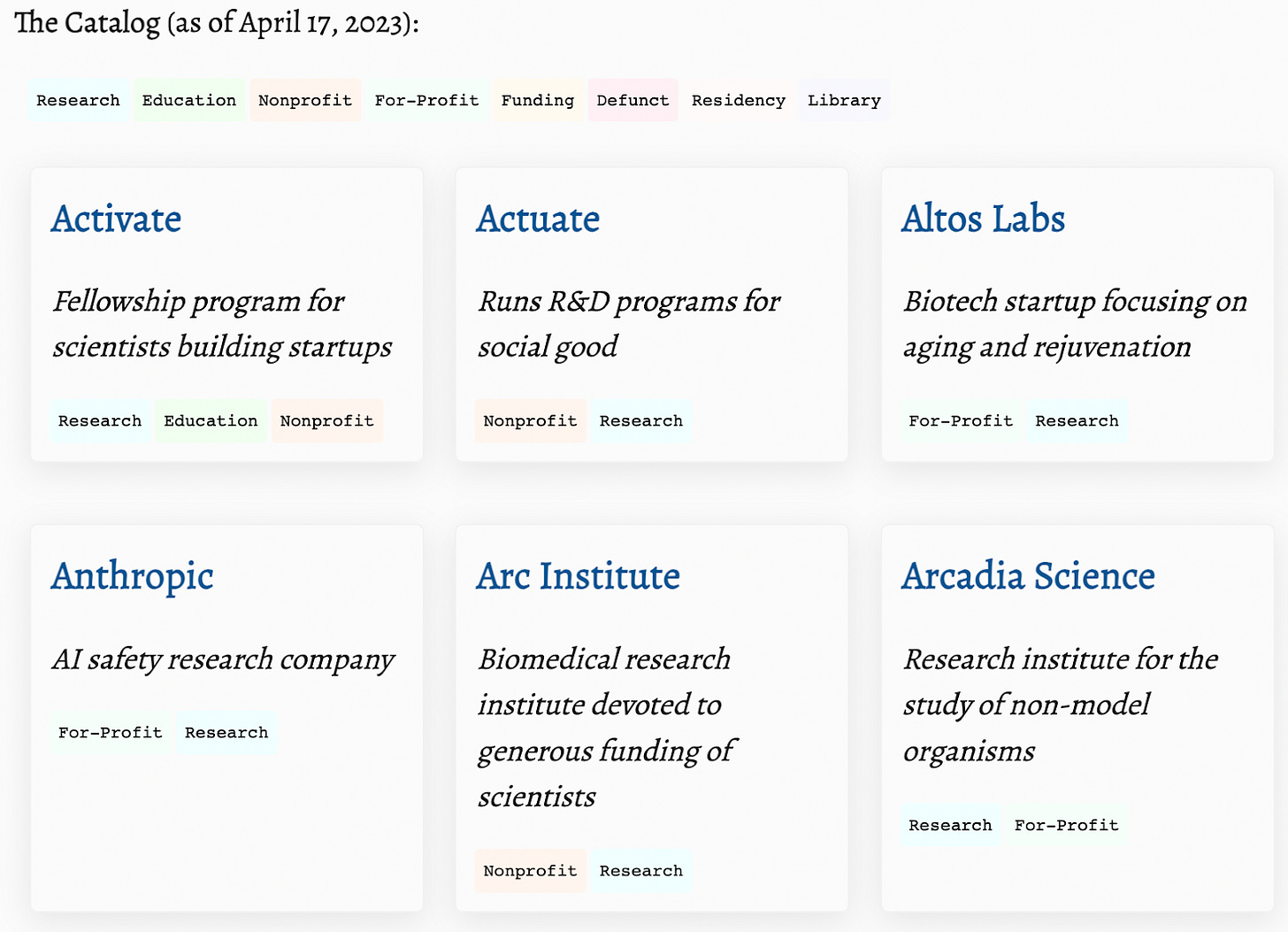

Samuel Arbesman’s Catalog of New Types of Research Organizations (2023)

This one is more of a long list, but I thought it was very interesting nonetheless!

Honorable mentions

These are other mapping efforts that cleared my "I'm curious about it" bar but not my "This is super interesting" bar. Some of them also seem outdated.

- xkcd’s map of online communities (2010)

- Julia Galef’s map of the Bay Area memespace (2013)

- Joe Lightfoot’s The Liminal Web: Mapping An Emergent Subculture Of Sensemakers, Meta-Theorists & Systems Poets (2021 & updated in 2023)

Shoutout to Rival Voices, who created a similar-ish collection of maps (2023), and Nadia Asparouhova, who wrote a more meta-level post about Mapping digital worlds (2023).

oh this is a cool and useful resource

ty for the mention

re: the biosecurity map

did you realise that the AIS map is just pulling all the coordinates, descriptions, etc from a google sheet

if you've already got a list of orgs and stuff it's not hard to turn it into a map like the AIS one by copying the code, drawing a new background, and swapping out the URL of the spreadsheet
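For anyone who wants to try this, here is a rough sketch of the data side: reading a list of orgs from a spreadsheet's CSV export and turning each row into a map entry. This is not the actual aisafety.world code. The column names (`name`, `x`, `y`, `description`), the `MapEntry` type, and both helper functions are hypothetical, and the export URL pattern assumes the sheet is shared publicly.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class MapEntry:
    """One organisation placed on the map (hypothetical schema)."""
    name: str
    x: float
    y: float
    description: str


def csv_export_url(sheet_id: str, gid: str = "0") -> str:
    """Build a CSV export URL for a publicly shared Google Sheet (assumed pattern)."""
    return (
        f"https://docs.google.com/spreadsheets/d/{sheet_id}"
        f"/export?format=csv&gid={gid}"
    )


def parse_entries(csv_text: str) -> list[MapEntry]:
    """Parse CSV text into map entries, skipping rows without usable coordinates."""
    entries = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            x, y = float(row["x"]), float(row["y"])
        except (KeyError, TypeError, ValueError):
            continue  # missing or non-numeric coordinates: leave this row off the map
        entries.append(
            MapEntry(
                name=(row.get("name") or "").strip(),
                x=x,
                y=y,
                description=(row.get("description") or "").strip(),
            )
        )
    return entries


# Inline sample standing in for a downloaded sheet, so this runs offline.
SAMPLE_CSV = (
    "name,x,y,description\n"
    "Org A,120,340,Example organisation\n"
    "Org B,,,Row with no coordinates yet\n"
)

if __name__ == "__main__":
    for entry in parse_entries(SAMPLE_CSV):
        print(entry)
```

To use a real sheet, you would download the text at `csv_export_url(...)` (for example with `urllib.request.urlopen`) and pass it to `parse_entries`. Drawing the background and placing the markers is a separate step this sketch doesn't cover.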

I didn't know that! Thanks for the info.

In the meantime, I have created an MVP Google Doc of the biosecurity landscape.

I'd also include this mindmap focused on suffering ethics and s-risks (sorry idk how to shorten URLs on my phone):

https://www.mindomo.com/mindmap/04-abolition-of-suffering-cause-areas-and-initiatives-copy-492fdd15b27e4dd1bf787a2a93373678

(Source website: https://suffering-abolition.github.io/)

Olivia Fox Cabane has an alt protein industry landscape map.

Maps are great!

I also love the Maps of Science, by Dominic Walliman (@Domain of Science): https://youtube.com/playlist?list=PLOYRlicwLG3St5aEm02ncj-sPDJwmojIS