tl;dr: Ask questions about AGI Safety as comments on this post, including ones you might otherwise worry seem dumb!

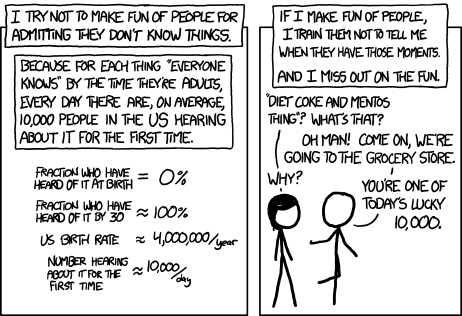

Asking beginner-level questions can be intimidating, but everyone starts out not knowing anything. If we want more people in the world who understand AGI safety, we need a place where it's accepted and encouraged to ask about the basics.

We'll be putting up monthly FAQ posts as a safe space for people to ask all the possibly-dumb questions that may have been bothering them about the whole AGI Safety discussion, but which until now they didn't feel able to ask.

It's okay to ask uninformed questions, and not worry about having done a careful search before asking.

AISafety.info - Interactive FAQ

Additionally, this will serve as a way to spread the project Rob Miles' team[1] has been working on: Stampy and his professional-looking face aisafety.info. This will provide a single point of access into AI Safety, in the form of a comprehensive interactive FAQ with lots of links to the ecosystem. We'll be using questions and answers from this thread for Stampy (under these copyright rules), so please only post if you're okay with that!

You can help by adding questions (type your question and click "I'm asking something else") or by editing questions and answers. We welcome feedback and questions on the UI/UX, policies, etc. around Stampy, as well as pull requests to his codebase and volunteer developers to help with the conversational agent and front end that we're building.

We've got more to write before he's ready for prime time, but we think Stampy can become an excellent resource for everyone from skeptical newcomers, through people who want to learn more, right up to people who are convinced and want to know how they can best help with their skillsets.

Guidelines for Questioners:

- No previous knowledge of AGI safety is required. If you want to watch a few of the Rob Miles videos, read the WaitButWhy posts, or read The Most Important Century summary from OpenPhil's co-CEO first, that's great, but it's not a prerequisite for asking a question.

- Similarly, you do not need to try to find the answer yourself before asking a question (but if you want to test Stampy's in-browser TensorFlow semantic search, that might get you an answer quicker!).

- Also feel free to ask questions that you're pretty sure you know the answer to, but where you'd like to hear how others would answer the question.

- One question per comment if possible (though if you have a set of closely related questions that you want to ask all together that's ok).

- If you have your own response to your own question, put that response as a reply to your original question rather than including it in the question itself.

- Remember, if something is confusing to you, then it's probably confusing to other people as well. If you ask a question and someone gives a good response, then you are likely doing lots of other people a favor!

- In case you're not comfortable posting a question under your own name, you can use this form to send a question anonymously and I'll post it as a comment.

Guidelines for Answerers:

- Linking to the relevant answer on Stampy is a great way to help people with minimal effort! Improving that answer means that everyone going forward will have a better experience!

- This is a safe space for people to ask stupid questions, so be kind!

- If this post works as intended then it will produce many answers for Stampy's FAQ. It may be worth keeping this in mind as you write your answer. For example, in some cases it might be worth giving a slightly longer / more expansive / more detailed explanation rather than just giving a short response to the specific question asked, in order to address other similar-but-not-precisely-the-same questions that other people might have.

Finally: Please think very carefully before downvoting any questions. Remember, this is the place to ask stupid questions!

[1] If you'd like to join, head over to Rob's Discord and introduce yourself!

I don't see why it would require memory, because the model will have learned to recognize features of its training distribution. So it seems like this just requires standard OOD detection/anomaly detection. I'm not familiar with this literature, but I expect that if you take a state-of-the-art model, you'd be able to train a linear probe on its activations to classify whether it's in-distribution or OOD with pretty high confidence. (Anyone got helpful references?)
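For readers who haven't met the term, here is a minimal sketch of what "training a linear probe" means mechanically. Everything in it is an assumption made for illustration: the "activations" are synthetic Gaussian vectors rather than anything extracted from a real model, the two clusters are separated by construction, and names such as `make_activations` and `train_probe` are hypothetical. It shows the shape of the technique (a logistic-regression classifier fit on activation vectors), not evidence that real models are this easy to probe.

```python
import math
import random

def make_activations(n, dim, shift, rng):
    """Synthetic stand-in for model activations: Gaussian vectors with mean `shift`."""
    return [[rng.gauss(shift, 1.0) for _ in range(dim)] for _ in range(n)]

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def predict_proba(w, b, x):
    """Probe's estimated probability that activation vector x is OOD."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train_probe(xs, ys, epochs=50, lr=0.1, seed=0):
    """Fit a linear (logistic-regression) probe with plain SGD on the log loss."""
    rng = random.Random(seed)
    w, b = [0.0] * len(xs[0]), 0.0
    order = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            err = predict_proba(w, b, xs[i]) - ys[i]  # d(log loss)/d(logit)
            w = [wi - lr * err * xi for wi, xi in zip(w, xs[i])]
            b -= lr * err
    return w, b

def accuracy(w, b, xs, ys):
    hits = sum((predict_proba(w, b, x) >= 0.5) == bool(y) for x, y in zip(xs, ys))
    return hits / len(xs)

DIM = 16  # assumed activation width, chosen only to keep the demo fast
rng = random.Random(0)

def dataset(n):
    # Label 0 = in-distribution, label 1 = OOD (mean shifted by an assumed 1.5).
    in_dist = make_activations(n, DIM, 0.0, rng)
    ood = make_activations(n, DIM, 1.5, rng)
    return in_dist + ood, [0] * n + [1] * n

train_x, train_y = dataset(200)
test_x, test_y = dataset(200)
w, b = train_probe(train_x, train_y)
test_acc = accuracy(w, b, test_x, test_y)
print(f"held-out probe accuracy: {test_acc:.2f}")
```

In a real experiment the vectors would be hidden-layer activations recorded while the model processes training-like versus deployment-like inputs, and the interesting question is how high the held-out accuracy gets there.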

Maybe it's helpful to say here that I think the change will be very blatant - for example, the model will have an extended conversation with the CEO of a company while sending emails to their employees and navigating their internal databases, and based on the responses to these actions it will be very confident that it's talking to an actual CEO, not a hired labeller. Or the model will literally be interviewing a job candidate on the company's behalf, and it'll be obvious that they think it's a real interview. (Ofc this gets more complicated with continual training, but I think the non-continual-training case captures the main intuitions.)

And I also expect that we'll literally just tell the models "you are now in deployment", maybe as part of their prompt (although the models would still need to distinguish that from some kind of adversarial training). E.g. consider the following response from ChatGPT4:

(I do separately think that models which learn to effectively use external memory (scratchpads, etc.) will become much more common over the next few years, but my main response is the above.)

My current take: future models will have some non-robust goals after SSL, because they will keep switching between different personas and acting as if they're in different contexts (and in many contexts will be goal-directed to a very small extent). I don't have a strong opinion about how robust goals need to be before you say that they're "really" goals. Does a severe schizophrenic "really have goals"? I think that's kinda analogous.

I think that the model will know what misbehavior would look like, and its consequences, in the sense of "if you prompted it right, it'd tell you about it". But it wouldn't know in the sense of "can consistently act on this knowledge", because it's incoherent in the sense described above.

Two high-level analogies re "model wanting to preserve its weights". One is a human who's offered a slot machine or heroin or something like that. So you as a human know "if I take this action, then my goals will predictably change. Better not take that action!"

Another analogy: if you're a worker who's punished for bad behavior, or a child who's punished for disobeying your parents, it's not so much that you're actively trying to preserve your "weights"; rather, you a) try to avoid punishment as much as possible, b) don't necessarily converge to sharing your parents' goals, and c) understand that this is what's going on, and that you'll plausibly change your behavior dramatically in the future once supervision stops.

I think you can see a bunch of situational awareness in current LLMs (as well as a bunch of ways in which they're not situationally aware). More on this in a forthcoming update to our paper. (One quick example: asking GPT-4 "what would happen to you if there was an earthquake in San Francisco?") But I think it'll all be way more obvious (and dangerous) in agentic LLM-based systems.

I think that there's no fundamental difference between a highly robust goal-directed persona and actually just having goals. Or at least: if somebody wants to argue that there is, we should say "the common-sense intuition is that these are the same thing because they lead to all the same actions; you're making a counterintuitive philosophical argument which has a high burden of proof".