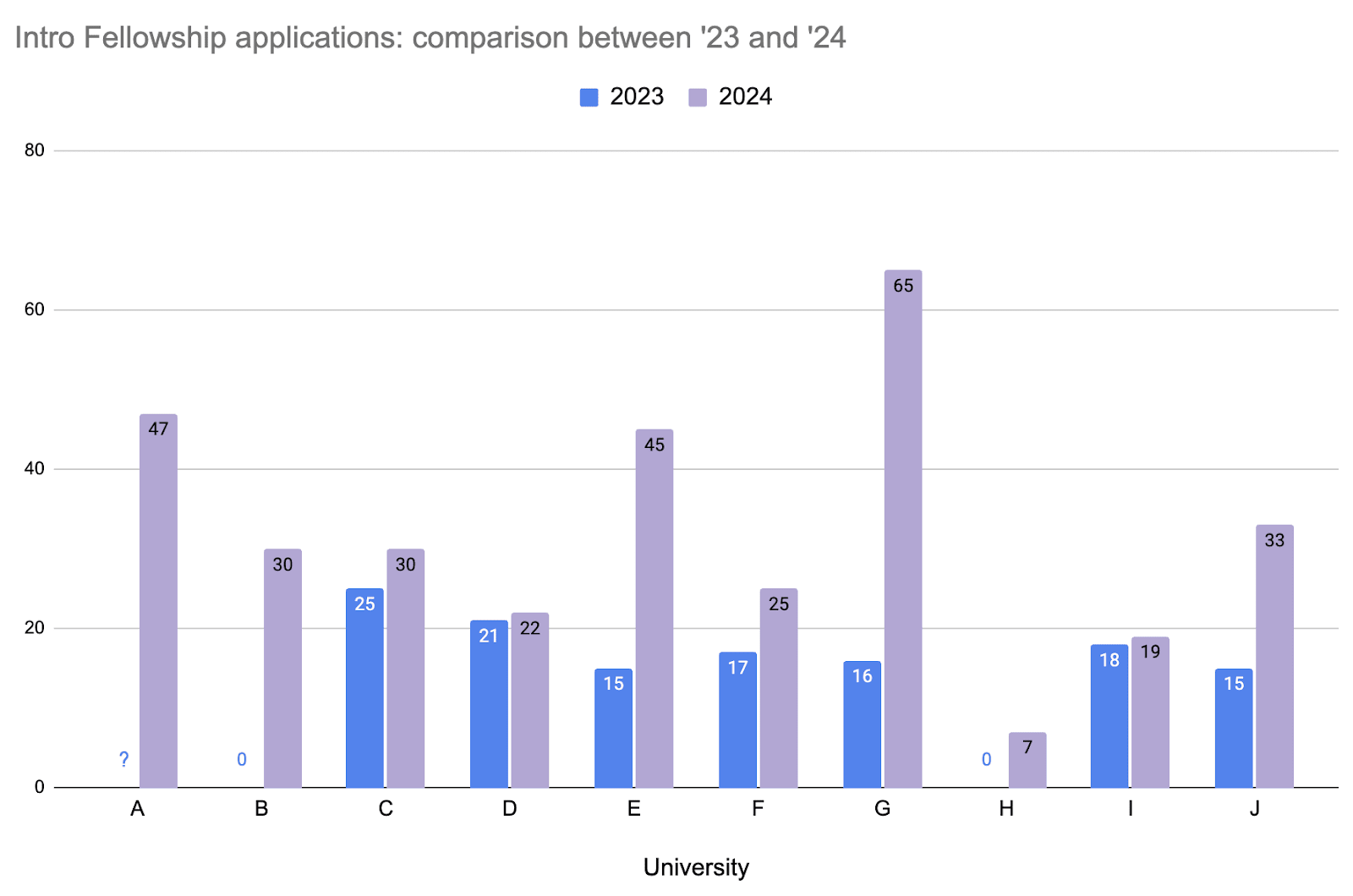

Intro fellowship sign-ups at EA groups participating in Early OSP more than doubled this Fall.

CEA’s University Groups Team is increasingly focusing its marginal efforts on piloting more involved support for a subset of EA university groups. This pilot program – Early OSP or EOSP[1] – includes early mentorship (starting in the summer), a semester planning retreat in August, and a workshop around EAG Boston, among other initiatives.

With the Fall semester now complete, we are analyzing initial outcomes. One standout result is that intro fellowship applications at EOSP groups averaged 32 per group, up from 14 the prior year[2]. Although we lack full baseline data, there are promising indicators – two groups, for instance, went from zero applications in Fall 2023 to meaningful engagement this year.

Of course, this is just one metric among the many that matter[3]. It is, however, an encouraging signal that we hope to build on as we continue to develop principles-first EA.

[1] Early OSP (EOSP) is modeled after our regular Organizer Support Program. EOSP kicked off with organizers from these groups: Harvard, Yale, Stanford, MIT, Columbia, UC Berkeley, UChicago, UPenn, Oxford, and Cambridge. They have been anonymized on the graph.

[2] Thank you to everyone who made this happen, especially the group organizers at all these universities!

[3] We’re still collecting data on other metrics, and hope to share a more all-things-considered take in the future.

Many semesters are about to kick off in the next ~month, meaning the busiest and most important time of the year is coming up for many EA university group organizers.

I'm very grateful for the work of university group organizers around the world. University groups have been a place where so many people learned about EA ideas and met others who are equally motivated to do good in an impartial and scope-sensitive way. Many of the people who got involved with EA through university groups are now making progress on fighting very difficult problems in the world. Thank you to everyone who was and is making that possible by helping run a university group!

If you know a university group organizer, please consider sending them a message to wish them all the best with promoting EA ideas this month and beyond!

If you have some experience in a relevant field, you could also consider offering to speak at an event :) When I was organising my university group, I know I was pretty nervous about reaching out to people working in EA-aligned careers. I expect having alumni speak might make those career paths particularly salient ("I used to be exactly like you, and now I do this").
