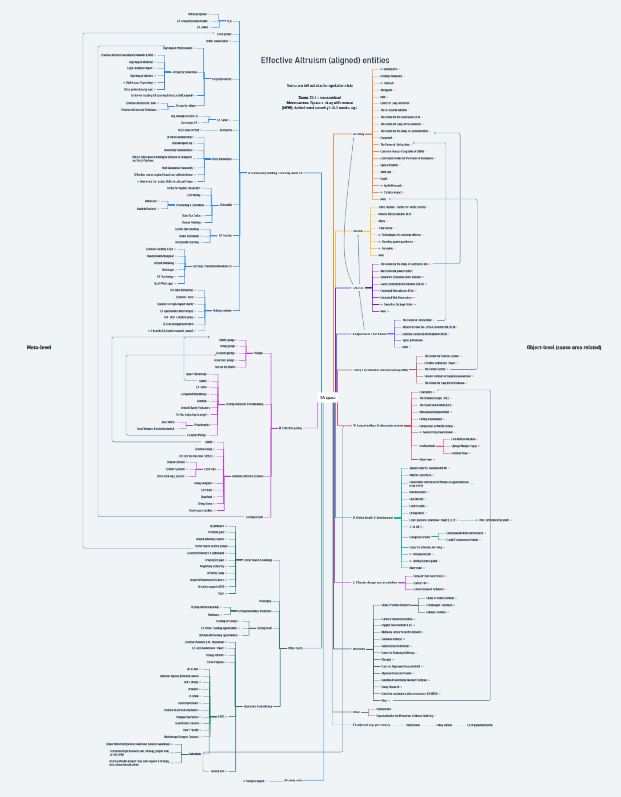

TLDR: I've assembled a mindmap of all EA (-related) entities I could find. You can access it at tinyurl.com/eamindmap. If I missed or misrepresented anything: leave a note (in the mindmap) and I'll add it or correct it. I've also added other existing lists of orgs to this post.

Context

Starting my position at EA Netherlands as a co-director, I was advised by EA Pathfinder (now Successif) to make an overview of the EA space. In this way it would be easier to onboard, support community members and follow conversations.

I made this mindmap of EA(-related) entities and this quickly got out of hand. I asked around, whether such overviews already exists. There were a few:

Overview of EA organizations[1]

- EA Org list (EA Opportunity Board) - From this list, I use this overview most often as it seems to be very comprehensive and includes meta data such as 'involvement with EA'.

- EA Org wiki (Jamie Gittins) - Introduced via a Forum post. I'd share this link if someone is new, or looking for an EA aligned career, as it has clear descriptions for every org

- EA/Rationalist-Related Orgs (Arden P. B. W.) - Also contains fellowships, courses and YouTube channels

- Database of orgs relevant to longtermist/x-risk work (Michael Aird) - Very comprehensive list

- Overview of effective giving organisations ->Overview of the public effective giving ecosystem (supply and demand) [shared] (Sjir Hoeijmakers) - Amazing to see all these giving orgs, I had no idea

- Let's advertise EA infrastructure projects, Feb 2023 - A list of EA-related projects and orgs that offer free and useful services

- EASE: directory of independent agencies and freelancers offering expertise to EA-aligned organizations

- AISafety.world is a map of the AIS ecosystem - Very hard to compete with this :)

- Other somewhat related lists:

- Let me know if I missed an important list of orgs here.

Somehow I still ended up sharing my mindmap with others, as it gives a clear visual overview of the orgs per main category or cause area. I know it is not perfect, but it is easy to maintain and people seem to like it. I was asked multiple times to add it to the forum, so here it is.

Disclaimer

- Organisation inclusion doesn't imply my endorsement.

- The branches (the categories or cause areas) are a bit arbitrary, as some organisations serve multiple categories or cause areas. For me the aim was to get an overview, not a perfect mindmap.

- It's incomplete. I'm still adding orgs weekly. Feel free to add notes (in the mindmap) where this overview can be corrected or orgs can be added or deleted.

- I use the words orgs, organisations and entities in this post. That is because 'orgs' is more accessible and clear as a word, but the entities aren't all official organisations.

- I changed the title of the mindmap from EA(-aligned) to EA(-related), due to this comment. I'm still a bit uncomfortable with it, because there are many more EA-related orgs which won't fit all into this mindmap (see 80k jobboard), but it seems more right (for example: I added the AI labs..). I try to add only the orgs that self-identify as EA-aligned or the ones that many EAs refer to.

How to use this

- However you like :) the left side contains more meta entities and the right side contains more object-level (cause area related) orgs.

- I heard people use it in order to quickly get an overview of existing entities in one category (like EA career support or biorisk).

- Sometimes I use it in an intro presentation to make people more aware of the global EA space.

- Zoom in/zoom out: Pinch or Ctrl + mousewheel; Navigate: Spacebar + move with left mouse button.

To post or not to post

I had reservations about sharing the mindmap, considering some organisations might not wish to be associated with EA. Guided by @Diego Oliveira's advise, I've published it but will retract if any substantial concerns arise due to its posting.

Furthermore, it might not be ideal that a random person is making mindmaps. I might stop maintaining it tomorrow, which further increases entropy, without adding value. I'm aware of this and I wouldn't mind it at all if an official EA org mindmap (or otherwise clear overview) would replace mine or if I was asked to remove everything.

- ^

Thanks to @Irene H from EA Eindhoven: Masterdocs of EA community building guides and resources!

Thanks, this is great. I added all the orgs you listed and removed the confusing arrow. I'll go over the list with a few AI experts from our community to check if the selected orgs/initiatives can be better selected and organised.