Open Philanthropy, Survival and Flourishing Fund, EA Funds and several other "EA-aligned" grantmakers publish most/all of their grants. But they're kind of hard to search, and it would take a lot of work to assemble a complete picture of all the publicly-available grantmaking that EA Forum users might care about the most. Has anyone done anything like this? I think it would be pretty interesting (and maybe useful!) to have a spreadsheet which automatically pulled in all grants from the top funders we care about.

[Just to be clear, this was silly of me]

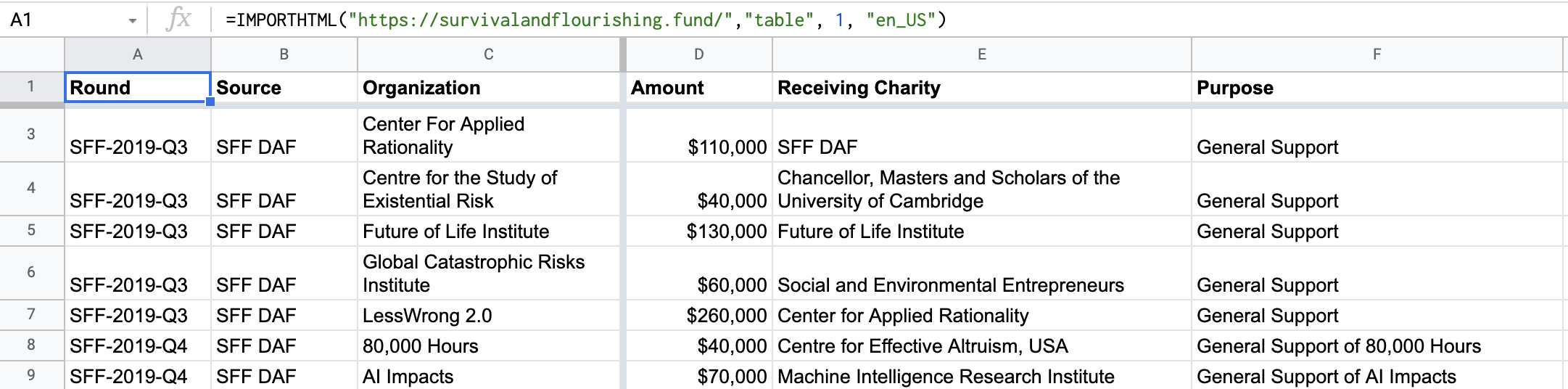

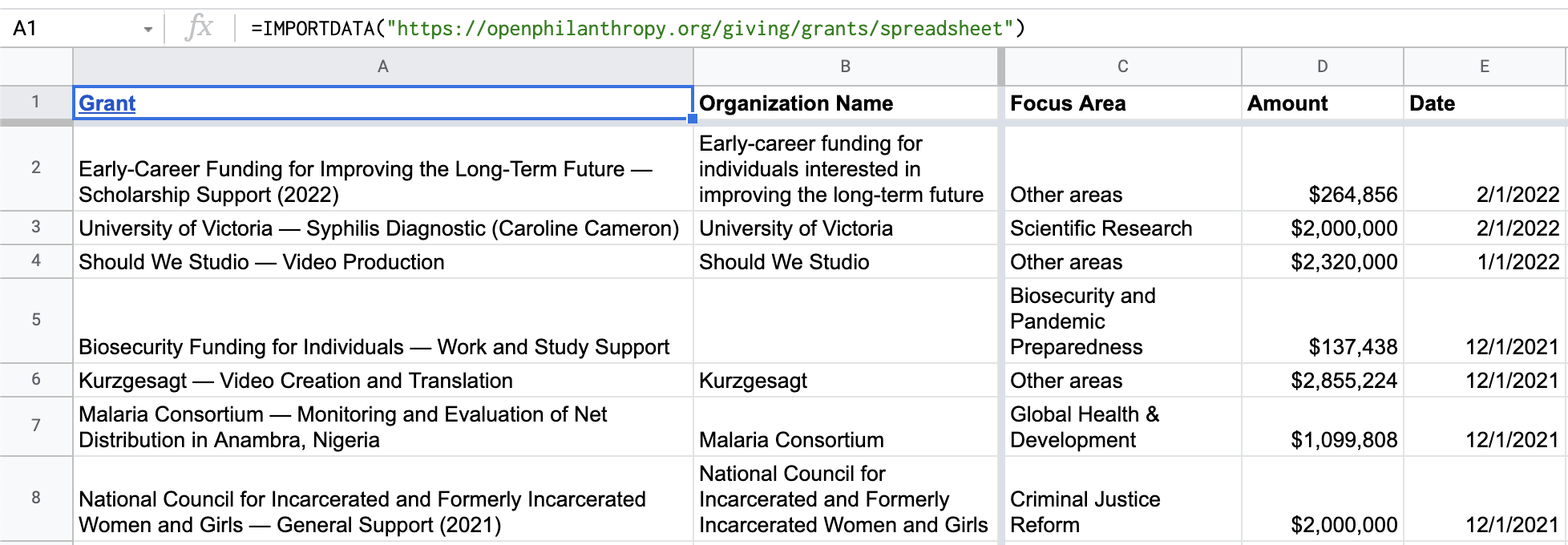

Ok so I made a sheet that will automatically update with Open Philanthropy's and the Survival and Flourishing Fund's grants:

Technicalities:

- Each got its own tab, but I can combine them

- The link gives edit access. This isn't a serious project, I don't mind if it's ruined

- I couldn't easily figure out how to pull in EA Funds, but I could try some more if it would matter

Credit to xkcd for teaching me everything I know:

I recently commissioned one for EA Funds; it would be fairly easy to add OP and SFF into it.

Misha_Yagudin

Yes, I intend to but like everything, it might rod. What are your other needs here?

sawyer🔸

(Assuming you mean "rot")

As far as specific needs, nothing very specific. Sometimes I wonder how much overlap there is in grantees between the different grantmakers, and having more of them in one table where they can be collectively sorted and filtered would be more useful. I just generally think it's good to have transparency in grantmaking, and a single source that covers >90% of what people might consider "EA grantmaking" is more transparent than asking people to look at several different HTML tables or non-tabular lists.
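To make the overlap question concrete, here is a minimal sketch of how per-funder tables could be stacked into one table and checked for shared grantees. Everything in it is an assumption for illustration: the rows are placeholders ("Org A", "Org B", and so on, with made-up amounts), the column names `grantee` and `amount` are hypothetical, and the helper functions are not taken from any existing sheet or tool. Real grant data would likely need more careful name matching than the simple normalization used here.

```python
from collections import defaultdict

# Hypothetical rows: the funder labels are real, but every grantee name
# and amount below is a placeholder, not actual grant data.
open_phil = [
    {"grantee": "Org A", "amount": 100_000},
    {"grantee": "Org B", "amount": 50_000},
]
sff = [
    {"grantee": "Org B", "amount": 20_000},
    {"grantee": "Org C", "amount": 30_000},
]
ea_funds = [
    {"grantee": "org b ", "amount": 5_000},  # same grantee, messier spelling
]


def combine(tables):
    """Stack per-funder tables into one list, tagging each row with its funder."""
    combined = []
    for funder, rows in tables.items():
        for row in rows:
            combined.append({"funder": funder, **row})
    return combined


def normalize(name):
    """Lowercase and collapse whitespace so trivially different spellings match."""
    return " ".join(name.lower().split())


def grantee_overlap(combined):
    """Return grantees funded by two or more funders, with the funders sorted."""
    funders_by_grantee = defaultdict(set)
    for row in combined:
        funders_by_grantee[normalize(row["grantee"])].add(row["funder"])
    return {g: sorted(f) for g, f in funders_by_grantee.items() if len(f) > 1}


all_grants = combine(
    {"Open Philanthropy": open_phil, "SFF": sff, "EA Funds": ea_funds}
)
overlap = grantee_overlap(all_grants)
print(overlap)
```

Tagging each row with its funder before stacking is what makes the combined table sortable and filterable by funder afterwards, which is the main thing separate tabs don't give you.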

EA Funders could put their grants on https://www.threesixtygiving.org/ which would make them available to be searched using their search engine: https://grantnav.threesixtygiving.org/

It seems to be just UK focussed but there might be an international one.

Thanks Yonatan, this is great! Glad to see this was so straightforward, and I appreciate you putting it together. Misha seems to have taken care of the EA Funds part, at least up to mid-2021, so we're getting close. I'm planning to merge them in one direction or another.