tl;dr: Ask questions about AGI Safety as comments on this post, including ones you might otherwise worry seem dumb!

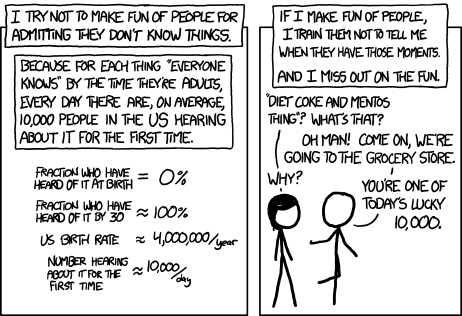

Asking beginner-level questions can be intimidating, but everyone starts out not knowing anything. If we want more people in the world who understand AGI safety, we need a place where it's accepted and encouraged to ask about the basics.

We'll be putting up monthly FAQ posts as a safe space for people to ask all the possibly-dumb questions that may have been bothering them about the whole AGI Safety discussion, but which until now they didn't feel able to ask.

It's okay to ask uninformed questions, and not worry about having done a careful search before asking.

AISafety.info - Interactive FAQ

Additionally, this will serve as a way to spread the project Rob Miles' team[1] has been working on: Stampy and his professional-looking face aisafety.info. This will provide a single point of access into AI Safety, in the form of a comprehensive interactive FAQ with lots of links to the ecosystem. We'll be using questions and answers from this thread for Stampy (under these copyright rules), so please only post if you're okay with that!

You can help by adding questions (type your question and click "I'm asking something else") or by editing questions and answers. We welcome feedback and questions on the UI/UX, policies, etc. around Stampy, as well as pull requests to his codebase and volunteer developers to help with the conversational agent and front end that we're building.

We've got more to write before he's ready for prime time, but we think Stampy can become an excellent resource for everyone from skeptical newcomers, through people who want to learn more, right up to people who are convinced and want to know how they can best help with their skillsets.

Guidelines for Questioners:

- No previous knowledge of AGI safety is required. If you want to watch a few of the Rob Miles videos, read the WaitButWhy posts, or read The Most Important Century summary from OpenPhil's co-CEO first, that's great, but it's not a prerequisite for asking a question.

- Similarly, you do not need to try to find the answer yourself before asking a question (but if you want to test Stampy's in-browser TensorFlow semantic search, that might get you an answer quicker!).

- Also feel free to ask questions that you're pretty sure you know the answer to, but where you'd like to hear how others would answer the question.

- One question per comment if possible (though if you have a set of closely related questions that you want to ask all together that's ok).

- If you have your own response to your own question, put that response as a reply to your original question rather than including it in the question itself.

- Remember, if something is confusing to you, then it's probably confusing to other people as well. If you ask a question and someone gives a good response, then you are likely doing lots of other people a favor!

- In case you're not comfortable posting a question under your own name, you can use this form to send a question anonymously and I'll post it as a comment.

Guidelines for Answerers:

- Linking to the relevant answer on Stampy is a great way to help people with minimal effort! Improving that answer means that everyone going forward will have a better experience!

- This is a safe space for people to ask stupid questions, so be kind!

- If this post works as intended then it will produce many answers for Stampy's FAQ. It may be worth keeping this in mind as you write your answer. For example, in some cases it might be worth giving a slightly longer / more expansive / more detailed explanation rather than just giving a short response to the specific question asked, in order to address other similar-but-not-precisely-the-same questions that other people might have.

Finally: Please think very carefully before downvoting any questions. Remember, this is the place to ask stupid questions!

[1] If you'd like to join, head over to Rob's Discord and introduce yourself!

@aaron_mai @RachelM

I agree that we should come up with a few ways to make the dangers / advantages of AI very clear to people, so that we can communicate more effectively. You can make a much stronger point if you have a concrete, relatable scenario to point to as an example.

I'll list a few I thought of at the end.

But the problem I see is that this space is evolving so quickly that things change all the time. Scenarios I can imagine being plausible right now might seem unlikely as we learn more about the possibilities and limitations. So if some of the examples I give below become unlikely in the coming month, that doesn't necessarily mean that the risks / advantages of AI have therefore become more limited as well.

That also makes communication more difficult because if you use an "outdated" example, people might dismiss your point prematurely.

One other aspect is that we have human-level intelligence and are limited in our reasoning compared to a smarter-than-human AI. This quote puts it quite nicely:

> "There are no hard problems, only problems that are hard to a certain level of intelligence. Move the smallest bit upwards [in level of intelligence], and some problems will suddenly move from “impossible” to “obvious.” Move a substantial degree upwards, and all of them will become obvious." - Yudkowsky, Staring into the Singularity.

Two examples I can see becoming possible within the next few iterations of something like GPT-4:

- malware that causes very bad things to happen (you can read up on Stuxnet to see what humans were already capable of 15 years ago, or if you don't like to read Wikipedia, there is a great podcast episode about it)

  - detonate nuclear bombs

  - destroy the electrical grid

- get access to genetic engineering tools like CRISPR and then

  - engineer a virus way worse than Covid

    - this virus doesn't even have to be deadly; imagine it causes sterilization of humans

Both of the above seem very scary to me because they require a lot of intelligence initially, but then their "deployment" almost works by itself. Both scenarios also seem within reach. In the case of the computer virus, we humans have already done this ourselves in a more controlled way. As for the biological virus, we still don't know with certainty that Covid didn't come from a lab, and given how fast Covid spread, it doesn't seem too far-fetched that a similar virus with different "properties", potentially with no symptoms other than infertility, would be terrible.

Please delete this comment if you think it is an infohazard. I have seen other people mention this term, but honestly I didn't have to spend much time thinking to come up with two scenarios that I consider not-unlikely bad outcomes, so people much smarter and more experienced than me will certainly be able to come up with these and much worse. Not to mention an AI that will be much smarter than any human.