Contributors: David Janků, David Reinstein, Edo Arad, Georgios Kaklamanos, Sophie Schauman

Introduction

Research has produced, and continues to produce, a great deal of value for society. It also consumes a lot of resources: global spending on R&D is almost US$1.7 trillion annually (approximately 2% of global GDP). This post is an attempt to map the challenges and inefficiencies in the research system. If we addressed them, research could produce much more value for the same amount of resources.

The main takeaways of this post are:

- There are a lot of different issues that cause waste of resources in the research system

- In this post, these issues are categorized as related to (1) choice of research questions, (2) the quality of research and (3) the use of the results produced

- Though solutions and reform initiatives are outside the scope of this post, there does seem to be a lot of room for improvement

The terms “value” and “impact” are used very broadly here: they could mean lives saved, technological progress, or a better understanding of the universe. The question of what types of value or impact we should expect research to produce is a big one, and not the one I want to focus on here. Instead, I will simply assume that when we dedicate resources to research, we expect some form of valuable outcome or impact. I will attempt to map the inefficiencies in how that value is produced.

This post is the result of a collaboration that came out of the EA Global Reconnect conference. After the conference, a group of EAs interested in improving science began holding recurring coworking sessions to discuss ideas for related projects and forum posts. I have received invaluable support and feedback from many people in this group, particularly those mentioned as contributors above.

The plan is that contributors to this post, and perhaps others in the group, will follow up with additional forum posts on metascience that focus more on specific issues, initiatives or solutions. David Reinstein is working on a post titled “Slaying the journals”, a proposal for peer review/rating, archiving, and open science aimed at avoiding rent-extracting publishers, reducing careerist gamesmanship, and making research more effective. There are many existing initiatives that I do not aim to cover here. To give just two examples, Open Philanthropy has previously published several pieces related to metascience, and the Center for Open Science is working to improve scientific research with a focus on transparency and reproducibility.

My hope is that this mapping could be of use to people who want to get an overview of issues in the research system, and to initiate a discussion about potential valuable projects or interventions.

1. Overview

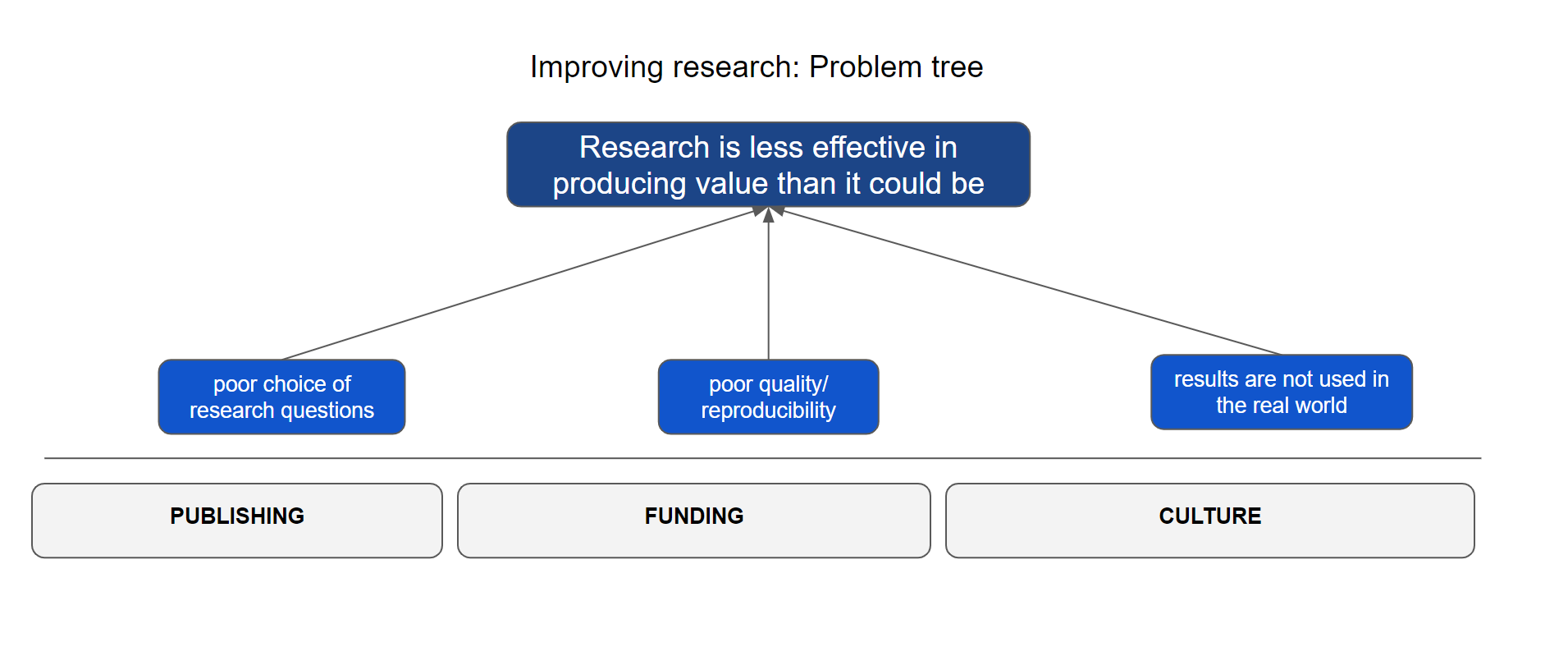

The causes of inefficiencies in the research system can be roughly categorized in three areas: (1) the choice and design of research questions can be flawed, (2) the research that is carried out can suffer from poor methodological quality and/or low reproducibility, and (3) even when research successfully leads to valuable results, they are not adequately incorporated into real-world solutions and decision-making. Each of these three areas can be broken up into underlying drivers, many of which fit into the broad categories of i. publishing, ii. funding and iii. culture.

Figure 1 shows the three main problem categories described in this post, arranged in a structure based on the idea of a problem tree. The main problem in focus (“Research is less effective in producing value than it could be”) is placed at the top, and the “root causes” of that problem are written out below, with arrows indicating causal relationships.

In the sections below, this problem tree will be expanded by focusing on one of these three issues at a time and exploring the underlying causes for why it occurs. Concepts that correspond to a box in the problem tree will be written in bold.

There are clearly also other ways in which resources are wasted within the research system that are not covered here (e.g. time spent on resubmitting proposals or papers without having received constructive feedback, or just poor time- and project management). To make this mapping a manageable task, I have opted to only include issues that could jeopardize the entire value of the research output, and not anything that only slows down progress of a given project (or makes it more expensive).

Finally, many of the identified root causes might not be consistently problematic: they might be an issue in one field but not in another, or some people might see them as a problem while others see them as functional. I have chosen to include root causes where there is either 1) good reason to believe that they are a significant driver of the problem they point to, or 2) a widespread notion that they are a significant driver. The reasoning behind this is that, on the one hand, it would be very difficult to consistently judge and rank the magnitude of different drivers, and on the other hand, simply omitting drivers that I don’t believe are significant could make the mapping appear spotty. As an example, I might not think that restricted access is among the most important problems in science, but excluding it from the mapping would seem odd, since so much of the attention in metascience is focused on open access solutions.

2. Poor choice of research questions

The choice and design of research questions for a project are fundamental to the potential value of the results. Often, determining what constitutes a good research question will be a matter of values, priorities, and ideology: one person might find it extremely important to investigate how the wellbeing of a cat is affected when it is left alone during the day, while someone else would think this is a terrible waste of resources. My main focus here, though, is on research questions that are flawed in ways that fulfil neither the intentions of the entity that funded the research nor the needs of the intended target group for the results.

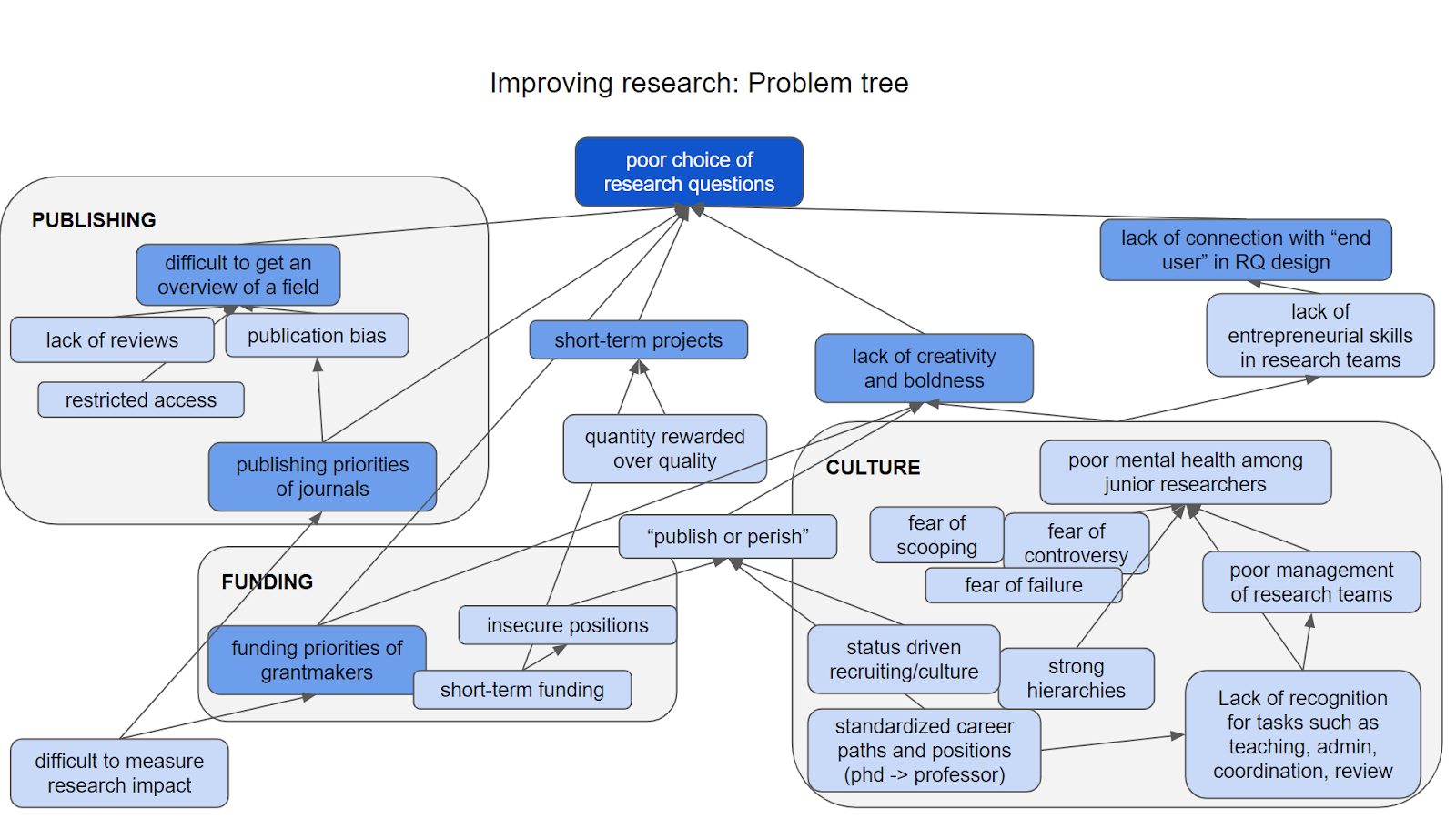

Figure 2 presents a mapping of underlying drivers of poor choice of research questions. The direct causes that have been identified are the difficulty of getting an overview of a field, the publishing priorities of journals, the funding priorities of grantmakers, short-term projects, lack of creativity and boldness, and a lack of connection with the “end user” in the design of research questions. All of these have additional underlying drivers, and some are interlinked with each other. As an example, the arrows illustrate how the publishing priorities of journals are a cause of publication bias, which in turn makes it difficult to get an overview of a field.

Note that this kind of problem mapping can be used to quickly construct a theory of change for an intervention targeting one of the root causes: improving the publishing priorities of journals could reduce publication bias and make it more manageable to get an overview of a field, which could lead to a better choice of research questions (and more effective value production from research, if we take it all the way to Figure 1).

2.1 Difficulties in getting an overview of the field

One reason why research questions might be poorly chosen is that it can be difficult for the researcher to get a good overview of the field, identify the relevant unknowns, and form a clear picture of the state of the evidence. Partly, this might be an unavoidable consequence of a rich and intricate research field with a long history. It may take a lot of time to go through previous work and to understand the underlying building blocks and tools supporting later work. Nonetheless, a few factors make the situation worse than it needs to be.

One important factor is publication bias: the tendency to let the outcome of a research study influence the decision whether to publish it. Publication bias influences both what researchers choose to submit for publication and what journals choose to publish. Generally speaking, a study is much more likely to be published if a statistically significant result was found or if an experiment was perceived as “successful” in some sense. This may give the impression that a specific hypothesis has never been tested, when in fact it has been tested one or more times but the results were not deemed publication-worthy. The tests may have proved inconclusive, perhaps because of methodological barriers (mistakes that may be repeated in future work) or extremely noisy processes. Even with sound methodology and strong statistical power, the result might simply be deemed too ‘boring’ for publication.
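To make the mechanism concrete, here is a minimal simulation sketch in Python (3.8+). It is not from the original post, and every parameter is an illustrative assumption: 2,000 hypothetical studies, each comparing two groups of 30 with unit-variance outcomes, test an intervention whose true effect is zero, and a publication filter keeps only results that are significant at p < 0.05. Reading only the “published” studies, one would see a handful of sizeable effects and none of the many null results that were actually run.

```python
import random
import statistics


def run_study(true_effect, n, rng):
    """Simulate one hypothetical two-group study with unit-variance outcomes.

    Returns the estimated effect (difference in group means) and its z-statistic,
    assuming for simplicity that the standard deviation is known to be 1.
    """
    control = [rng.gauss(0.0, 1.0) for _ in range(n)]
    treated = [rng.gauss(true_effect, 1.0) for _ in range(n)]
    estimate = statistics.fmean(treated) - statistics.fmean(control)
    standard_error = (2.0 / n) ** 0.5
    return estimate, estimate / standard_error


def simulate_literature(n_studies=2000, true_effect=0.0, n=30, seed=1):
    """Run many studies and return (all estimates, published estimates)."""
    rng = random.Random(seed)
    all_estimates, published = [], []
    for _ in range(n_studies):
        estimate, z = run_study(true_effect, n, rng)
        all_estimates.append(estimate)
        # Publication filter: only "statistically significant" results
        # (two-sided p < 0.05, i.e. |z| > 1.96) make it into a journal.
        if abs(z) > 1.96:
            published.append(estimate)
    return all_estimates, published


all_estimates, published = simulate_literature()
print(f"studies run: {len(all_estimates)}, published: {len(published)}")
print(f"mean |effect|, all studies: {statistics.fmean([abs(e) for e in all_estimates]):.2f}")
print(f"mean |effect|, published only: {statistics.fmean([abs(e) for e in published]):.2f}")
```

Since the true effect is zero, roughly 5% of studies pass the filter by chance, and every one of them reports an effect large enough to look meaningful. Changing `true_effect` or `n` shows how the gap between the full and the published record depends on effect size and statistical power.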

Another hindering factor is the lack of good review articles. Review articles survey and summarize previously published studies in a specific field, making them an invaluable resource to researchers. However, reviews are not always done in a systematic and transparently reported way, which can lead to a misleading impression of the current knowledge. It also happens that both previous research and reviews are ignored when designing research questions.

A third factor could be that much research is published with restricted access. This makes it very expensive to legitimately access research papers for anyone who does not belong to a university that pays for subscriptions. However, shadow library websites such as SciHub (papers) and Libgen (books) provide free access to millions of research papers and books without regard for copyright. This is not an optimal solution, and the sites are blocked in many countries, but they do make restricted access less of a practical problem than it would otherwise be.

2.2 Publishing priorities of journals

Since publishing research (preferably in the most high-status journals) is so important to a researcher's career, the publishing priorities of scientific journals have a great influence on the choice of research questions. Researchers tend to pick and design research questions based on what they believe can yield publishable results, and this might not align with which questions are most important or valuable to study.

Journals generally favour novel, significant and positive results. From one perspective, this makes a lot of sense. These kinds of results seem likely to have the most value or impact, either for the research field or for society at large. However, if these are the only results that can be published in respected journals, it can make researchers hesitant to choose more “risky” research questions, even if they would be important.

A specific type of study that can be hard to publish is the replication study, where the aim is to replicate the results of a previously conducted study in order to verify that they are valid. One could argue that this type of research is less valuable than high-quality novel studies, but there are instances where replication studies might be very important: for example, when results are to be implemented on a very large and expensive scale, so that it is especially important to verify their validity. It can also be the case that the first study done on an important question has significant flaws, or that there is suspicion of bias or irregularities.

So, why do the publication priorities of journals not align with what is best for value creation? That question in itself could probably be the basis for a separate post, but I believe one reason might be that it’s really hard to measure the impact of research. If the impact or societal value of research were easier to measure and demonstrate, it seems likely that at least some journals would use this to guide their publication priorities.

Scientific publishing can be a very profitable business. There is a lot of criticism of publishers regarding both their business models and the incentives they create for science, as shown, for example, in this article covering the historical background of large science journals.

2.3 Funding priorities of grantmakers

Edit: Alternative heading "Inefficient grant-giving" - see discussion in comments.

Researchers depend on funding to carry out their work. Just as the choice of research question is influenced by what could generate publishable results, it is also directly influenced by what type of research questions attract funding. To be clear, funding priorities vary between different grantmakers and this is not necessarily a problem.

Public opinion influences research funding, both through political decisions that affect governmental funding and through independent grantmakers that fundraise from private donations. Detailed decisions about what to fund are generally made by academic experts, but high-level priorities, such as how much funding goes to clean energy research, can be directly influenced by political decisions.

When a specific field gets a lot of hype, this can influence the direction of funding a lot. Such trends often result in researchers tweaking their applications to include concepts that are likely to attract funding (AI, machine-learning, nanotechnology, cancer…), even though that might not be the best choice from a perspective of picking the most important or valuable research question. Hype of an applied field can also incentivize researchers to communicate their research in a way that makes it seem more relevant to those applications than it really is. An example of this could be basic research biologists spinning their work so it seems like it will have important biomedical outcomes.

An interesting feature, especially considering that journals often value novelty very highly, is that grant proposals are less likely to get funding if the degree of novelty is high. The observed pattern does not seem to be explained by novel proposals being of lesser quality or feasibility.

Many funders have a stated goal of achieving impact with their funding, but as noted in the previous section it is really hard to measure the impact of research (almost no matter what type of impact it is you try to optimize for). Also, many funders have specific fields that they focus on, e.g. cancer research, so that even if they attempt to optimize for impact they can only do that within that specific field.

One specific challenge might be that working with research funding is generally not a high-status job. This means it can be difficult to attract highly skilled people, even though doing the job well is indeed very difficult. Since there are no clear feedback loops on how well different funding agencies succeed in spending their money on impactful research, there is not much incentive for improvements.

2.4 Short-term projects

Many research questions are designed with the consideration that they should be possible to answer in a relatively short study, perhaps in 1-3 years. This can be a problem when a short study is not enough to answer an important question. For a study of a healthcare intervention, for example, we might only study and report the impact 6 months after the intervention, when in reality it might be more relevant to know the impact after 10 years.

One reason for this can be short-term funding, with small grants that only cover a couple of years. There is also a tendency to reward quantity over quality, so that researchers benefit more (career-wise) from having published many small studies than a few large ones.

However, shorter grants can in fact often be combined to finance long-term projects. There are also large funders that work with large, long-term grants that are more directed to funding excellent researchers rather than specific projects. For specific cases this seems to have generated great results. Still it seems unclear if this would be a scalable solution to improve research effectiveness more generally.

2.5 Lack of creativity and boldness

When choosing and designing research questions, some choices are going to be riskier than others. A safe choice would be to go for research questions that lead the researcher into a field with a lot of funding (e.g. cancer research), while the study itself is something that is almost sure to generate publishable results.

A lot of important research might be risky. It might be in a novel or neglected research field where funding is scarcer or where there are fewer career opportunities, and it might be very unclear initially whether the study will generate publishable results. Designing and pursuing such novel and high-risk research questions requires creativity and boldness. Meanwhile, many factors in the academic system work against having bold and creative researchers.

A concept familiar to anyone in research is “publish or perish” - the idea that unless you keep publishing regularly in scientific journals your research career will quickly fail. Since publications are the output of science, it might seem obvious that productive researchers should be rewarded. However, it becomes problematic when a researcher has a better likelihood of career success from producing multiple low-quality papers on unimportant topics, than from doing high-quality work on important questions that generate fewer publications.

The “publish or perish” pressure is driven by several factors: it links to short-term funding and insecure positions, but also to status driven recruiting and culture.

A lack of creativity and boldness is also driven by a lot of interlinked factors of the academic culture: fear of failure and controversy, poor mental health among junior researchers, and a hierarchical system where it could be risky for your career to stand out in the wrong way. The careers of researchers are generally set in very standardized career paths, where every researcher is supposed to advance in the same manner from PhD student to professor. This is clearly not a realistic expectation, considering that there are vastly more PhD students than professors.

The funding priorities of grantmakers also appear to play a role in holding back creativity and boldness, with funding agencies becoming more risk averse.

2.6 Lack of connection with end-users in the design of research questions

Poor design of research questions in applied research is often linked to little or no contact with the intended end-users of the results. For example, a researcher who develops a new medical treatment might not have had much contact with patients to understand whether it would really improve their quality of life. A researcher in economic policy might not have understood the considerations of the political decision-maker who would implement a new policy, and a materials researcher may have neglected important priorities in the industry that would use a new material. It seems common for researchers to believe that they know the needs of their target groups without having actually asked them, which leads to research questions being framed in a way that does not yield useful results.

One reason for this disconnect could be a lack of entrepreneurial skills in research groups: it is uncommon for researchers to reach out to end-users and make new connections outside academia. Researchers often get their understanding of the field through scientific conferences and journal publications, neglecting informal, non-academic sources of information. Researchers who are used to speaking in very specialized terminology might also find it difficult to communicate with non-experts or with experts in other fields.

It seems that entrepreneurial skills are not encouraged by the academic culture and system. The fact that career paths are standardized and hierarchies are often very strong could deter entrepreneurial people from an academic career.

Many grant-makers do request end-user participation in applied research projects, but in practice this is often established in a shallow way just to tick the box in the grant application.

3. Poor quality and reproducibility

If the research question is “good” in the sense that answering it would provide value of some kind for society, the value of the output still depends on the quality of the research. The Center for Open Science does a lot of work in this area, and many metascience publications have been done on this topic.

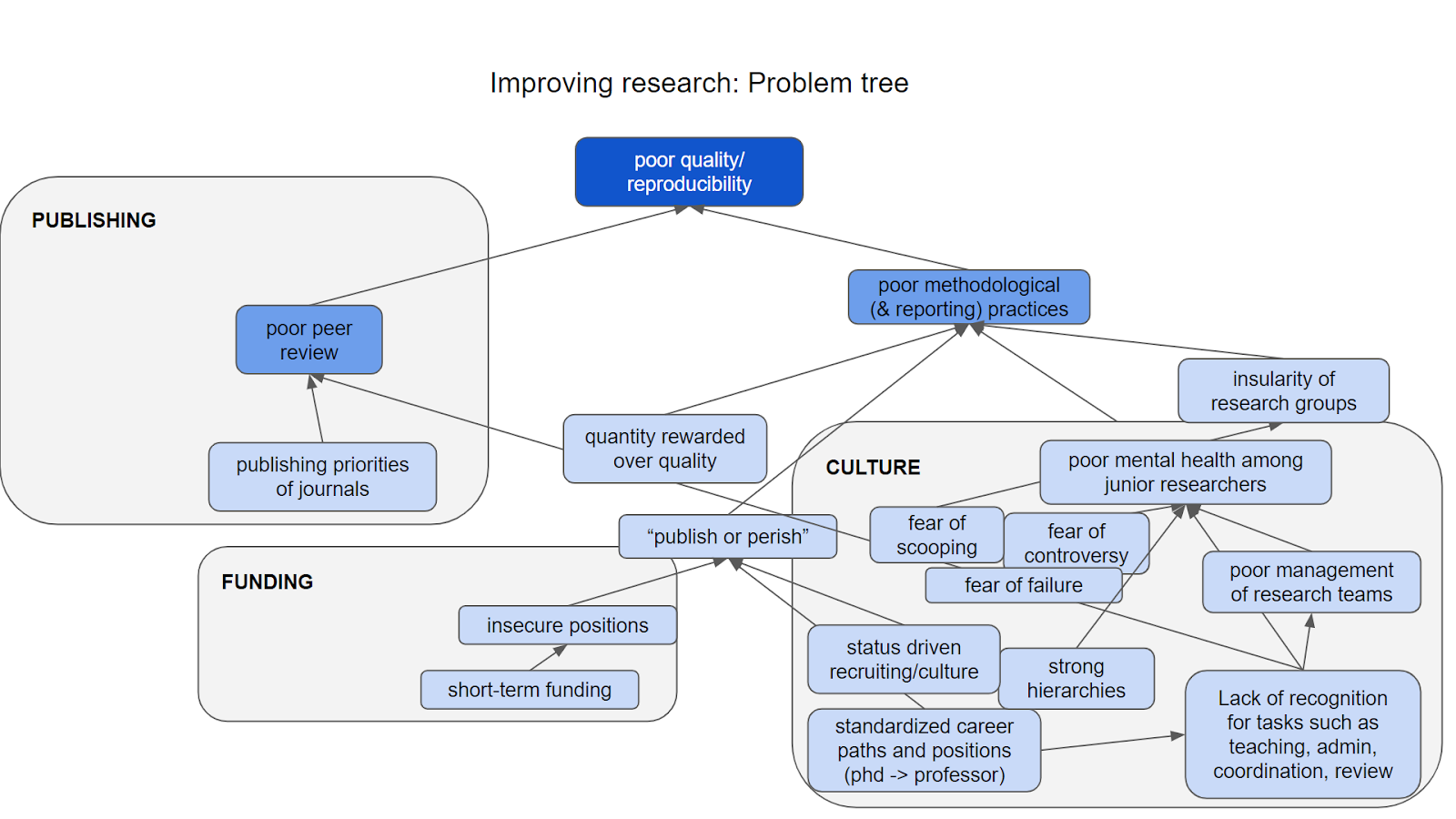

The reproducibility crisis (or replication crisis) refers to the realization that many scientific studies are difficult or impossible to replicate or reproduce. This means that the value of published research results is questionable. Figure 3 maps underlying drivers of poor research quality and reproducibility. The direct causes that have been identified are poor peer review as well as the poor methodological and reporting practices themselves. These practices are driven by many root causes, primarily linked to academic culture.

3.1 Poor peer review

Peer review is the process by which experts in the field review scientific publications before they are published. In theory, this system is supposed to guarantee quality. If there are flaws in the study design, the methodology, or in the reporting of results, the reviewers should see this and either make the author improve their work or reject the paper completely.

In practice though, this doesn’t work very well, and even top scientific journals have run seriously flawed papers. One reason for this is that even though reviewing is viewed as being important for academic career progression, it is done on a volunteer basis without direct compensation to the reviewer. Reviews are usually anonymous, so there is no accountability, and no real incentive to do peer review well. This leads to reviews being done either hastily or delegated to more junior researchers that might not have the experience to do it well.

High scientific quality should be among the top publishing priorities of journals, but it seems as if in practice that is not the case. If large, profitable and well-respected journals had scientific quality as a top priority, they should be able to achieve it. In reality, it appears that top science journals might even be attracting low-quality science, partly because they prioritize publishing spectacular results. Since getting published in a top journal can be extremely valuable to a researcher's career, there is a great incentive to cut corners or even cheat to achieve such spectacular results.

3.2 Poor methodological and reporting practices

Apart from flaws in peer review, the main driver for poor research quality and reproducibility is poor methodological and reporting practices. Poor methodology refers to when the study and/or data analysis is poorly done. Of course, there are many field-specific examples of poor methodology, but there are also questionable research practices that occur in many different fields of research.

One prominent example is p-hacking (or “data dredging”), which occurs when exploratory (hypothesis-generating) research is not kept apart from confirmatory (hypothesis-testing) research, so that the same data set that gave rise to a specific hypothesis is also used to confirm it. P-hacking can produce seemingly significant patterns or correlations where, in fact, none exist.
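A small simulation sketch in Python can illustrate why using the same data to generate and to confirm a hypothesis is a problem. It is not from the original post, and all parameters are illustrative assumptions: each hypothetical data set has 50 observations of an outcome and 20 candidate predictors, all pure noise. The “exploratory” step picks whichever predictor correlates most strongly with the outcome; that correlation is then tested on the same data and, separately, on a fresh independent sample. Significance uses the standard Fisher z approximation at α = 0.05. With 20 independent tests, the chance that at least one passes by luck is 1 − 0.95^20 ≈ 0.64.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def is_significant(r, n):
    """Approximate two-sided test of r = 0 at alpha = 0.05 via Fisher's z."""
    return abs(math.atanh(r)) * math.sqrt(n - 3) > 1.96


def dredge_then_check(n=50, n_vars=20, n_datasets=400, seed=2):
    """Return the 'significant' rate on the same data vs. on fresh data."""
    rng = random.Random(seed)
    same_data_hits = fresh_data_hits = 0
    for _ in range(n_datasets):
        # Outcome and candidate predictors are independent noise: no real effects.
        outcome = [rng.gauss(0, 1) for _ in range(n)]
        predictors = [[rng.gauss(0, 1) for _ in range(n)] for _ in range(n_vars)]
        # Exploratory step: pick the predictor most correlated with the outcome.
        rs = [pearson_r(p, outcome) for p in predictors]
        best = max(range(n_vars), key=lambda i: abs(rs[i]))
        # "Confirming" the hypothesis on the same data that generated it.
        same_data_hits += is_significant(rs[best], n)
        # Proper confirmation: measure the chosen predictor and the outcome
        # in a new, independent sample (still pure noise) and test there.
        new_outcome = [rng.gauss(0, 1) for _ in range(n)]
        new_predictor = [rng.gauss(0, 1) for _ in range(n)]
        fresh_data_hits += is_significant(pearson_r(new_predictor, new_outcome), n)
    return same_data_hits / n_datasets, fresh_data_hits / n_datasets


same_rate, fresh_rate = dredge_then_check()
print(f"'significant' when tested on the same data: {same_rate:.0%}")
print(f"'significant' when tested on fresh data: {fresh_rate:.0%}")
```

The same-data rate should land near the 64% figure above, while the fresh-data rate falls back to roughly the nominal 5%. The apparent “discovery” does not survive once exploration and confirmation use separate data, which is the logic behind pre-registration and held-out confirmatory samples.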

Poor reporting overlaps with poor methodology, but it can also be a separate issue even when the actual method is scientifically sound. If, for example, details of the experimental design, the raw data, or the code for data analysis are not shared publicly, it is very difficult for someone else to independently repeat a study to confirm the results. In theory such material could be obtained from the corresponding author, but in practice records are often poor and reproduction is hard: in one survey, more than 70% of researchers reported having tried and failed to reproduce another scientist's experiments, and more than half had failed to reproduce their own.

Methodological and reporting practices depend on academic culture. Methodology and routines for documentation and reporting are often taught informally within research groups. Informal training combined with the insularity of research groups, where methodological practices are not shared and discussed between different groups, leads to lack of transparency. Strong hierarchies also contribute to lack of scrutiny as it can be hard for junior researchers to challenge the judgement of their supervisors.

There is also the issue of quantity over quality. The pressure to publish (“publish or perish”) can push researchers to submit flawed studies for publication, as there is a widespread perception that quantity is rewarded over quality in a researcher’s career. A number of declarations, manifestos, and groups claim that the metrics we currently use, which focus on quantity, are flawed with (potentially) catastrophic consequences.

Meanwhile, a study of Swedish researchers states that such criticism lacks empirical support. A full evaluation of this is outside the scope of this post, but it might be worth reflecting on Goodhart’s Law: “When a measure becomes a target, it ceases to be a good measure.” In other words, evaluating scientists based on the number of their publications would simply lead to scientists who produce a lot of publications. To produce studies faster, researchers would neglect the “boring things”, such as documentation and the organization of research artifacts, and might also end up using flawed methodologies.

4. Results are not used in the real world

Even when research produces valuable results, their actual impact will often depend on their implementation outside of academia. In some cases (e.g. basic research) this might be less relevant as the end users are also in academia. Thus impact will be generated through the accumulated work of other researchers that builds further on these results.

However, for most applied research, the results have essentially no impact unless they are used “in the real world” outside academia. Use of results looks very different across fields and involves different types of end-users: it could be a change of laws or policy implemented by politicians and public servants, a new material used by a private company to produce better consumer electronics, or better infection prevention methods implemented in hospitals by healthcare staff. None of these is likely to happen as a direct result of a researcher publishing a paper in a scientific journal unless it is accompanied by other forms of communication and collaboration.

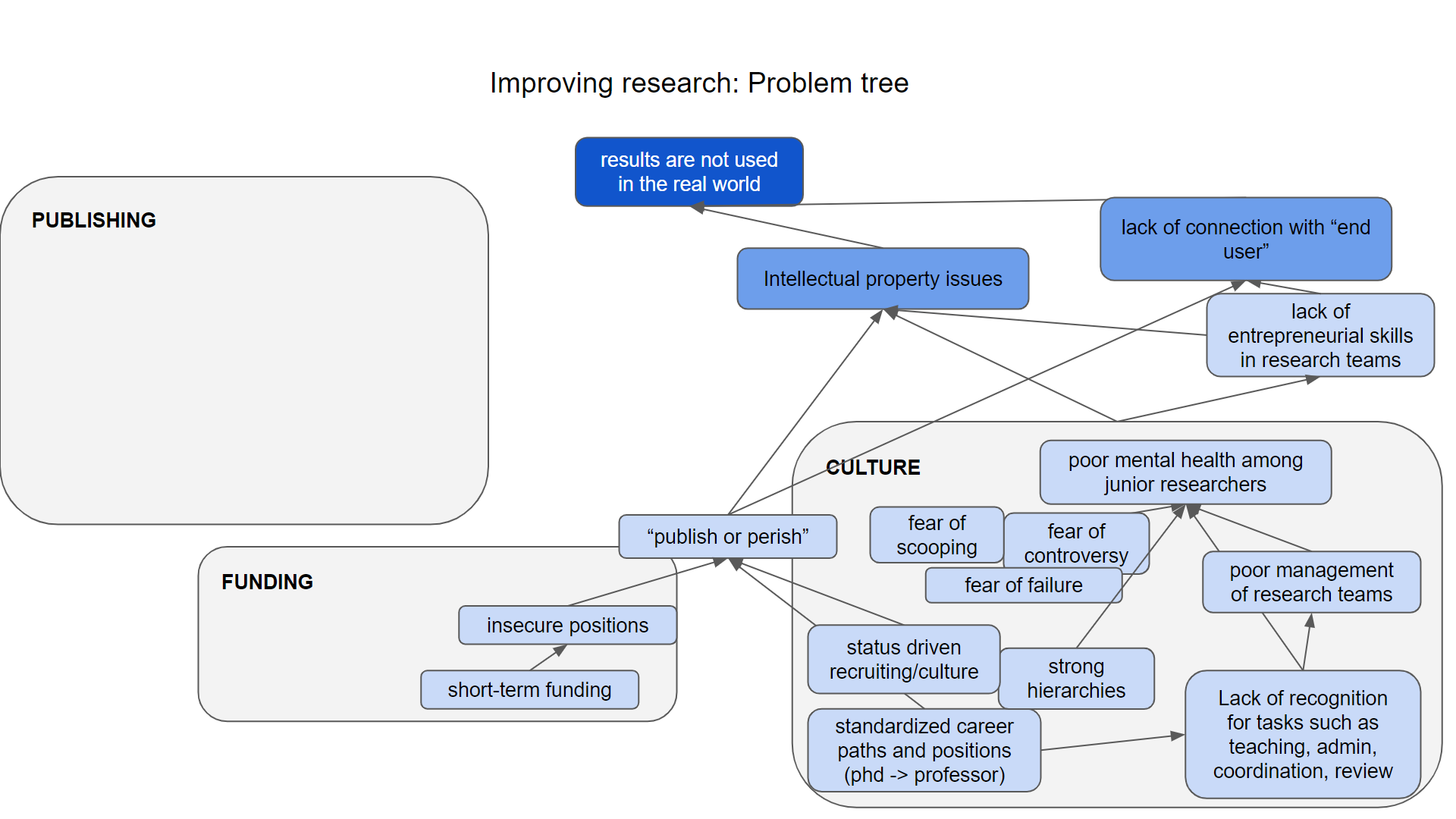

Figure 4 maps underlying reasons for why research results are not put to use. The direct causes that have been identified are intellectual property issues and lack of connection with the end user. The underlying causes seem to mostly depend on problems with the academic culture.

4.1 Intellectual property issues

For research that results in Intellectual Property (IP), typically an invention that can be protected by a patent, the handling of the IP rights is extremely important for how likely the results are to be used. Ownership of the IP generated by research works differently in different locations. In Sweden and Italy, for example, the researcher has full personal ownership of any IP their research generates through the so-called “professor’s privilege”. In other locations, for example the US and the UK, IP rights to the results of publicly funded research are owned by the institution where the researcher works (institutional ownership). There are also more complicated variations where IP ownership is split between the researcher and the institution.

Protecting IP means that nobody else can use the invention without permission, and it is a common perception that a patent is most of all a barrier to implementation. The practice of patenting discoveries, especially those that come as a result of publicly or philanthropically funded research, has been heavily criticised for creating barriers to innovation and collaboration, being a bureaucratic and administrative burden for universities and for creating distorted incentives for researchers. For example, researchers may be deterred from investigating the potential of a compound that is patented by somebody else as they know they will be dependent on the patent holder for permission to use any new results.

On the other hand, IP protection can be crucial to attract investments to take an invention all the way to practical use. Say, for example, that a research group has identified a compound that could be a very promising new medication. It is generally only if the IP is protected that investments will be made to develop this promising compound into a drug. The process of developing and commercialising a new product is expensive and risky, and patent ownership is what makes it possible to recoup these investments if the project is successful. This means that if the group does not patent the compound (or otherwise protect the IP), it will never be developed into a drug.

One way that IP issues can prevent results from being used is therefore simple sloppiness, when nobody realized there was IP that should be protected, or nobody bothered to protect it. A patent can only be granted for a novel, previously undisclosed invention. If the IP has ever been described in public, it cannot be protected, and the chances of attracting investment into commercial development then become very slim, even for promising inventions. Protection of intellectual property can thereby be in direct conflict with the focus on rapid publication of results (the “publish or perish” culture).

On the other hand, filing and maintaining patents is no guarantee of implementation. A lot depends on the patent owner, and it’s unclear which policies generate the best results. When the individual researcher holds the patent personally, a lot depends on that researcher's skills and motivations. If they don’t have the interest or the ability to commercialize the invention, it will generally never happen. The academic culture does not encourage entrepreneurship, and many research groups lack members with entrepreneurial skills.

When patents are instead owned and managed by the university through some kind of venture or holding company, a little less depends on the individual researcher. However, this leads to other issues: the staff managing IP exploitation do not have a personal stake in the potential startup, which decreases their incentives to do a great job. Also, since they manage a portfolio of patents, different patents generated from the same university compete with each other for resources and priority.

4.2 Lack of connection with end-user

It seems common that a researcher has the view that their job is done by producing and publishing results in scientific journals, which is logical since that is how a research career is measured and rewarded. For a result to be used in the real world, however, publishing is rarely enough.

Section 2.6 covers the lack of connection with end-users in the design of research questions. The most serious consequence of this is when the research question itself is fundamentally irrelevant to the stakeholders. When there is no direct contact between researcher and end-user, the researcher also gets no clear feedback on why the results remain unused, which makes it difficult to improve.

Contact with the end-user is also crucial after the results have been obtained. Implementation work is often neglected, as it is time-consuming, difficult, and often not rewarded by the academic system. It can look very different between fields: in political science or economics, the stakeholders could be politicians or staff at government bodies, while in biotechnology or physics they might be private companies. Either way, close collaboration between researcher and end-user is often a prerequisite for proper implementation of results.

Conclusions

The academic research system is very complex, and there are many different issues that cause waste of resources within it. Though research already produces a lot of value of different kinds - e.g. improved medical treatments, more efficient food production, sustainable energy technologies, or improved understanding of the universe - there also seems to be a lot of room for improvement.

An issue that came up in the process of creating this piece but that was not included in the mapping is a general scarcity of flexible research funding. Increased (and less restricted) funding might be a possible solution to some of the problems mentioned here. Still, it’s unclear if it would be more effective to increase funding or to address other problems in the system.

As mentioned in the introduction, to keep this writeup manageable I opted not to include issues that seem to just slow progress towards results without jeopardizing their value. Such issues include requirements for researchers to spend their time on things other than research; I think a discussion of what researchers should and should not spend time on would fit better in a separate post.

Proposed solutions and reform initiatives are outside the scope of this post, but it’s worth mentioning that a lot of work is being done by different organizations. There are plans for follow-up posts by the contributors to this one that focus more on the solution side.

I/we would love to get input on this mapping, particularly if you think that: i. there are significant issues that jeopardize the value of research results which are not included in this post, ii. any of the problems described here are overstated, iii. some challenges in the research system might be more or less valuable to target from an EA perspective. Looking forward to your feedback in the comments!

Thanks a lot for writing this post. I'm interested in these topics and was just thinking the other day that a write up of this sort would be valuable.

A relevant and fairly detailed write-up (not mine) of this problem area and how meta-research might help is available here: https://lets-fund.org/better-science/ (I didn't see it cited but may have missed it).

In terms of the content of the post, a couple of things that I might push back on a little:

I'd be interested in learning what projects you have planned and discussing some solutions to the problems that you have mapped.

So glad to hear that, and thanks for the added reference to Let's Fund!

On peer review I agree with Edo's comment, I think it's more about setting a standard than about improving specific papers.

On IP, I think this is very complex, and "IP issues" can be a barrier both when something is protected and when it's not. I have personally worked on the periphery of projects where failing to protect/maintain IP has been the end of the road for potentially great discoveries, but I have also seen the opposite phenomenon, where researchers avoid a specific area because someone else holds the IP. It would be interesting to get a better understanding both of the scale of these problems and of whether any of the initiatives that currently exist seem promising for improving it.

Great points! Re peer review, I think your argument makes sense, but I feel like most of the impact on quality from better peer review would actually be in raising standards for the field as a whole, rather than in the direct impact on the papers that didn't pass peer review. I'd love to have a much clearer analysis of the whole situation :)

That view seems reasonable to me and I agree that a clearer analysis would be useful.

An additional and very minor point I left out of my comment is that I'm sceptical that the relationship between impact factor and retraction (original paper here) is causal. It seems very likely to me that something like "number of views of articles" would be a confounder, and as far as I can tell it is not adjusted for. I'm not totally sure that is the part of the article you were referring to when citing this, so apologies if not!

My experience talking with scientists and reading science in the regenerative medicine field has shifted my opinion against this critique somewhat. Published papers are not the fundamental unit of science. Most labs are 2 years ahead of whatever they’ve published. There’s a lot of knowledge within the team that is not in the papers they put out.

Developing a field is a process of investment not in creating papers, but in creating skilled workers using a new array of developing technologies and techniques. The paper is a way of stimulating conversation and a loose measure of that productivity. But just because the papers aren’t good doesn’t mean there’s no useful learning going on, or that science is progressing in a wasteful manner. It’s just less legible to the public.

For example, I read and discussed with the authors a paper on a bioprinting experiment. They produced a one-centimeter cube of human tissue via extrusion bioprinting. The materials and methods aren't rigorously controllable enough for reproducibility. They use decellularized pig hearts from the local butcher (what's it been eating, what were its genetics, how was it raised?), and an involved manual process to prepare and extrude the materials.

Several scientists in the field have cautioned me against assuming that figures in published papers are reproducible. Yet does that mean the field is worthless? Not at all. New bioprinting methods continue to be developed. The limits of achievement continue to expand. Humanity is developing a cadre of bioengineers who know how to work with this stuff and sometimes go on to found companies with their refined techniques.

It’s the ability to create skilled workers in new manufacturing and measurement techniques, skilled thinkers in some line of theory, that is an important product of science. Reproducibility is important, but that’s what you get after a lot of preliminary work to figure out how to work with the materials and equipment and ideas.

Without weighing in on your perspective/position here, I'd like to share a section of Allan Dafoe's post AI Governance: Opportunity and Theory of Impact that you/some readers may find interesting:

This reminds me of a conversation I had with John Wentworth on LessWrong, exploring the idea that establishing a scientific field is a capital investment for efficient knowledge extraction. Also of a piece of writing I just completed there on expected value calculations, outlining some of the challenges in acting strategically to diminish our uncertainty.

One interesting thing to consider is how to control such a capital investment, once it is made. Institutions have a way of defending themselves. Decades ago, people launched the field of AI research. Now, it's questionable whether humanity can ever gain sufficient control over it to steer toward safe AI. It seems that instead, "AI safety" had to be created as a new field, one that seeks to impose itself on the world of AI research partly from the outside.

It's hard enough to create and grow a network of researchers. To become a researcher at all, you have to be unusually smart and independent-minded, and willing to brave the skepticism of people who don't understand what you do even a fraction as well as you do yourself. You have to know how to plow through to an achievement that will clearly stand out to others as an accomplishment, and persuade them to keep sustaining your funding. That's the sort of person who becomes a scientist. Anybody with those characteristics is a hot commodity.

How do you convince a whole lot of people with that sort of mindset to work toward a new goal? That might be one measure of a "good research product" for a nascent field. If it's good enough to convince more scientists, especially more powerful scientists, that your research question is worth additional money and labor relative to whatever else they could fund or work on, you've succeeded. That's an adversarial contest. After all, you have to fight to get and keep their attention, and then to persuade them. And these are some very intelligent, high-status people. They absolutely have better things to do, and they're at least as bright as you are.

Thank you for this perspective, very interesting.

I definitely agree with you that a field is not worthless just because the published figures are not reproducible. My assumption would be that even if it has value now, it could be a lot more valuable if reporting were more rigorous and transparent (and that potential increase in value would justify some serious effort to improve the rigorousness and transparency).

Do I understand your comment correctly: you think that, in your field, the purpose of publishing is mainly to communicate with the public, and that publications are not very important for communicating with other researchers in the field or with end users in industry?

That got me thinking: if that were the case, would we actually need peer-reviewed publications at all for such a field? I'm thinking that the public would rather read popular science articles anyway, and that these could be produced with much less effort by science journalists? (Maybe I'm totally misunderstanding your point here, but if not I would be very curious to hear your take on such a model.)

Rigor and transparency are good things. What would we have to do to get more of them, and what would the tradeoffs be?

No, the purpose of publishing is not mainly to communicate to the public. After all, very few members of the public read scientific literature. The truth-seeking or engineering achievement the lab is aiming for is one thing. The experiments they run to get closer are another. And the descriptions of those experiments are a third thing. That third thing is what you get from the paper.

I find it useful at this early stage in my career because it helps me find labs doing work that's of interest to me. Grantmakers and universities find them useful to decide who to give money to or who to hire. Publications show your work in a way that a letter of reference or a line on a resume just can't. Fellow researchers find them useful to see who's trying what approach to the phenomena of interest. Sometimes, an experiment and its writeup are so persuasive that they actually persuade somebody that the universe works differently than they'd thought.

As you read more literature and speak with more scientists, you start to develop more of a sense of skepticism and of importance. What is the paper choosing to highlight, and what is it leaving out? Is the justification for this research really compelling, or is this just a hasty grab at a publication? Should I be impressed by this result?

It would be nice for the reader if papers were a crystal-clear guide for a novice to the field. Instead, you need a decent amount of sophistication with the field to know what to make of it all. Conversations with researchers can help a lot. Read their work and then ask if you can have 20 minutes of their time; they'll often be happy to answer your questions.

And yes, fields do seem to go down dead ends from time to time. My guess is it's some sort of self-reinforcing selection for biased, corrupt, gullible scientists who've come to depend on a cycle of hype-building to get the next grant. Homophily attracts more people of the same stripe, and the field gets confused.

Tissue engineering is an example. 20-30 years ago, the scientists in that field hyped up the idea that we were chugging toward tissue-engineered solid organs. Didn't pan out, at least not yet. And when I look at tissue engineering papers today, I fear the same thing might repeat itself. Now we have bioprinters and iPSCs to amuse ourselves with. On the other hand, maybe that'll be enough to do the trick? Hard to know. Keep your skeptical hat on.

I think that we have a rather similar view actually; maybe it's just the topic of the post that makes me seem more pessimistic than I am? Even though this post focuses on mapping problems in the research system, my point is not in any way that scientific research is useless. Rather the opposite: I think it is very valuable, and that is why I'm so interested in exploring whether there are ways it can be improved. It's not at all my intention to say that research, or researchers, or any other people working in the system for that matter, are "bad".

My concern is not that published papers are not clear guides that a novice could follow or understand. Especially now that there is an active debate around reproducibility I would also not expect (good) researchers to be naive about it (and that has not at all been my personal experience from working with researchers). Still it seems to me that if reproducibility is lacking in fields that produce a lot of value, initiatives that would improve reproducibility would be very valuable?

From what I have seen so far, I think that the work by OSF (particularly on preregistration) and publications from METRICS seem like they could be impactful. What do you think of these? The ARRIVE guidelines also seem like a very valuable initiative for reporting of research with animals.

All these projects seem beneficial. I hadn't heard of any of them, so thanks for pointing them out. It's useful to frame this as "research on research," in that it's subject to the same challenges with reproducibility, and with aligning empirical data with theoretical predictions to develop a paradigm, as in any other field of science. Hence, I support the work, while being skeptical of whether such interventions will be useful and potent enough to make a positive change.

The reason I brought this up is that the conversation on improving the productivity of science seems to focus almost exclusively on problems with publishing and reproducibility, while neglecting the skill-building and internal-knowledge aspects of scientific research. Scientists seem to get a feel through their interactions with their colleagues for who is trustworthy and capable, and who is not. Without taking into account the sociology of science, it's hard to know whether measures taken to address problems with publishing and reproducibility will be focusing on the mechanisms by which progress can best be accelerated.

Honest, hardworking academic STEM PIs seem to struggle with money and labor shortages. Why isn't there more money flowing into academic scientific research? Why aren't more people becoming scientists?

The lack of money in STEM academia seems to me a consequence of politics. Why is there political reluctance to fund academic science at higher levels? Is academia to blame for part of this reluctance, or is the reason purely external to academia? I don't know the answers to these questions, but they seem important to address.

Why don't more people strive to become academic STEM scientists? Partly, industry draws them away with better pay. Part of the fault lies in our school system, although I really don't know what exactly we should change. And part of the fault is probably in our cultural attitudes toward STEM.

Many of the pro-reproducibility measures seem to assume that the fastest road to better science is to make more efficient use of what we already have. I would also like to see us figure out a way to produce more labor and capital in this industry. To be clear, I mean that I would like to see fewer people going into non-STEM fields - I am personally comfortable with viewing people's decision to go into many non-STEM fields as a form of failure to achieve their potential. That failure isn't necessarily their fault. It might be the fault of how we've set up our school, governance, cultural or economic system.

This is a really great post, and I particularly appreciated the visual diagrams laying out the "problem tree." A number of aspects of what you're writing about (particularly choice of research questions, the lack of connection with the end user in designing research questions, challenges around research/evidence use in the real world, and incentives created by funders and organizational culture) strongly resonated with me. You might find it interesting to read a couple of articles I've written along these lines:

Finally, I just wanted to note a number of overlaps between this post (as well as the meta-science conversation more generally) and issues we're exploring in the improving institutional decision-making community. If you haven't already, I'd like to invite you to join our discussion spaces on Facebook and Slack, and it may be worth a conversation down the line to explore how we can support each other's efforts.

Thank you! Joined both and looking forward to reading your posts!

"Lack of connection with end-user" reminds me of this essay from a psychology professor who recently left academia.

As someone who wanted to be a psychology professor for a year or so, I felt a spark of recognition when I read this section (emphasis mine):

(I'm a colleague of david_reinstein, one of the authors of this post. All opinions are my own).

Intro

I've incidentally started thinking about metascience-related questions in the last 3 months, as they have independently come up in two different projects I was involved in. I think the paradigm I was operating out of is somewhat different from the explicit and implicit mapping here, so I'm sharing it in the hope that there can be some useful cross-fertilization of ideas. Note that I've spent very little time thinking about this (likely <10 hours total), and even less time reading papers from others in this field.

Perspective/Toy Model/Paradigm

The perspective I currently have* is to view "research/science" from 10,000 feet up and to consider research as:

And then an important follow-up question here is:

Paradigm Scope/Limitations

Notably, my definition is a broader tent (in the context of metascience) than prioritization of science/metascience entirely from a purely impartial EA perspective. From an EA perspective, we'd also care about:

I'm deliberately using a lower bar ("research as an industry that converts $s and highly talented people into (eventually) actionable insights") than EA because I think it better captures the claimed ethos of research and researchers.

However, even with this lower bar, I think having this precise conceptualization (we have an industry that converts resources into actionable insights, how can we make the industry more efficient?) helps us prioritize a little within metascience.

Potentially Valuable: Operations Research of Research Outputs?

Things I would currently guess are quite valuable + understudied, from an inside view:

(note that I have not read enough of the literature to be confident that specific claims about neglectedness are true)

I'm not exactly sure what the umbrella of concepts I've gestured to above should be called, but roughly what I'm interested in is

alternatively,

What I mean by this is that I think it's plausible that there are immense dollar bills (worth tens of billions to trillions) lying on the floor in figuring out the optimal allocation of the above. I think a lot of these decisions are, in practice, based on lore, political incentives, and intuition. I believe (and could definitely be wrong) there's very little careful theorizing and even less empirical data.

Other Potentially Valuable Things

Things I also think are very valuable + understudied, but that feel more speculative and on which I have an even less firm inside view:

Comparatively Less Interesting/Useful Things Within Metascience

I don't think anything within "research on research" is obviously oversubscribed (in the sense that we as a society should clearly devote fewer resources to the marginal metascience project in that domain, compared to marginal resources on random science projects).

Nonetheless, here are things I would guess are less marginally valuable than the things I'm personally interested in within metascience*:

Additional EA Work

In addition to the points I've identified above, I'd also be excited to see more work on:

*which I'm not satisfied with but I'm much happier with compared to my previously even more confused thoughts

Meta: I strung together a bunch of Slack (etc.) comments in a hopefully coherent/readable way, then realized my comment was too long, so I added approximate section headings in case they're helpful.

Requesting feedback:

1. Is the above comment in fact coherent and readable?

2. Are the headings useful for you? Or are they just kind of annoying/jarring and didn't actually add much useful structure?

FWIW:

(I work at the same org as Linch and David Reinstein, but all opinions here are my own, of course, and I'd be happy to disagree with them publicly if indeed I did disagree.)

I hadn't formulated it so clearly for myself, but at this stage I would say I'm using the same perspective as you. I think one would need a much clearer view of the field/problems/potential to be able to do cross-cause prioritization and prioritization in the context of differential technological progress in a meaningful way.

I think this seems like a really exciting opportunity!

On your listing of things that would be valuable vs less valuable, I have a roughly similar view at this stage though I think I might be thinking a bit more about institutional/global incentives and a bit less about improving specific teams (e.g. improving publishing standards vs improving the productivity of a promising research group). But at this stage, I have very little basis for any kind of ranking of how pressing different issues are. I agree with your view that replication crisis stuff seems important but relatively less neglected.

I think it would be very interesting/valuable to investigate what impactful careers in meta-research or improving research could be, and specifically to identify gaps where there are problems that are not currently being addressed in a useful way.

(This may sound harsher than my actual views - I do think this post was a useful contribution.)

Point 2.3 "Funding priorities of grantmakers" does not sound like a problem to me in the context of your analysis. In the opening of your post, you express concern about the production of valuable results:

Who is supposed to define the valuable outcome if not a grantmaker? Are you perhaps saying that specific grantmakers are not suitable to define the valuable outcome?

Nonetheless, you can see that this is a circular problem: you cannot defend the idea of efficient research toward a favourable outcome without accepting that somebody has the authority to define that outcome, and thus becomes a grantmaker.

Perhaps 'lack of alignment between grantmakers' values and researchers' values' would be a better definition of the issue?

I would say that boldness itself is a problem as much as lack of boldness is.

As there is competition to do more with fewer resources, researchers are incentivised to underestimate what they need when writing grant proposals. Research is an uncertain activity and likely benefits from a safety margin in the budget. So when problems arise in a project, researchers need to cut some planned activities (for example, they might skip collecting the quality data needed for reproducible results).

Researchers are also incentivised to keep their options open and spread their energy across several efforts. Thus, an approved short-term project might not receive full attention (as also happens to long-term projects toward their end) or might not be perfectly aligned with the researchers' interests.

Thanks for this!

You make a good point, the part on funding priorities does become kind of circular. Initially the heading there was "Grantmakers are not driven by impact" - but that got confusing since I wanted to avoid defining impact (because that seemed like a rabbit hole that would make it impossible to finish the post). So I just changed it to "Funding priorities of grantmakers" - but your comment is valid with either wording, it does make sense that the one who spends the resources should set the priorities for what they want to achieve.

I think there is still something there though: maybe, as you say, a lack of alignment in values, but maybe even more a lack of skill in how the priorities are enforced or translated into incentives? It seems that even though the high-level priorities of a grantmaker are theirs to define, the execution of the grantmaking sometimes promotes something else. E.g. a grantmaker that has a high-level objective of improving public health, but whose actual grants go to very hyped-up fields that are already getting enough funding, or whose investments are mismatched with disease burdens or patient needs. In a way, this is similar to ineffective philanthropy in general; perhaps "ineffective grantmaking" would be an appropriate heading?

That sounds like a better heading indeed. Although grantmakers define the value of a research outcome, they might not be able to promote their vision effectively because of their limited resources.

However, as the grantmaking process is what defines the value of research, your heading might be misinterpreted as an inability to define valuable outcomes (which contradicts your working hypothesis).

What about "inefficient grant-giving"? "Inefficient" because resources are sometimes lost pursuing secondary goals, and "grant-giving" because it specifically involves the process of selecting motivated and effective research teams.

I added an edit with a link to this thread now =)

Thank you so much for this excellent work. I am very interested in this area. I look forward to seeing your suggested solutions! Please keep me in the loop.

Here is some related copy from a recent paper I wrote (not sure if useful, but I think it makes some issues very salient so may be useful)

"It can take up to 17 years for research evidence to disseminate into health care practice (Balas & Boren, 2000; Morris, Wooding, & Grant, 2011) and perhaps only 14 percent of available evidence enters daily clinical practice (Westfall, Mold, & Fagnan, 2007). For example, Antman, Lau, Kupelnick, Mosteller, & Chalmers (1992) found that it took 13 years after the publication of supportive evidence before medical experts began to recommend a new drug."

Let me know if you can't find the references.

The intro of this paper may also have useful copy.

This post is now a podcast! Not just 15 minutes of fame but two and a half hours of me reading this...

https://anchor.fm/david-reinstein/episodes/Why-scientific-research-is-less-effective-in-producing-value-than-it-could-be-a-mapping----reading-C-Tillis-EA-Forum-post--comments-my-own-and-others-e13i5ru

I also make some comments (and explainers and link-readings), and I also read much of this comments section.

I hope it's helpful! I like to have things in audio format to read during long drives, etc., so I hoped others would too. Let me know if you have any feedback on the podcast.

(I also made a few comments during the podcast which I should also get around to posting as written comments here).

I was quite surprised/excited to see this on the forum, as I had literally just been thinking about it 5 minutes before opening up the forum!

From what I skimmed so far, I think it makes some good points/is a good start (although I'm not familiar enough in the area to judge); I definitely look forward to seeing some more work on this.

However, I was hoping to see some more discussion of the idea/problem that's been bugging me for a while, which builds on your second point: what happens when later studies criticize/fail to replicate earlier studies' findings? Is there any kind of repository/system where people can go to check if a given experiment/finding has received some form of published criticism? (i.e., one that is more efficient than scanning through every article that references the older study in the hopes of finding one that critically analyzes it).

I have been searching for such a system (in social sciences, not medicine/STEM) but thus far been unsuccessful--although I recognize that I may simply not know what to look for or may have overlooked it.

However, especially if such a system does not exist/has not been tried before, I would be really interested to get people's feedback on such an idea. I was particularly motivated to look into this because in the field I'm currently researching, I came across one experiment/study that had very strange results--and when I looked a bit deeper, it appeared that either the experiment was just very poorly set up (i.e., it had loopholes for gaming the system) or the researcher accidentally switched treatment group labels (based on internal labeling inconsistencies in an early version of the paper). As I came to see how the results may have just been produced by undergrad students gaming the real-money incentive system, I've had less confidence in the latter outcome, but especially if the latter case were true it would be shocking... and perhaps unnoticed.[1] Regardless of what the actual cause was, this paper was cited by over 70 articles; a handful explicitly said that the paper's findings led them to use one experimental method instead of another.

In light of this example (and other examples I've encountered in my time), I've thought it would be very beneficial to have some kind of system which tracks criticisms as well as dependency on/usage of earlier findings--beyond just "paper X is cited by paper Y" (which says nothing about whether paper Y cited paper X positively or negatively). By doing this, 1) there could be some academic standard that papers which emphasize or rely on (not just reference) earlier studies at least report whether those studies have received any criticism[2]; 2) If some study X reports that it relies on study/experiment Y, and study/experiment Y is later found to be flawed (e.g., methodological errors, flawed dataset, didn't reproduce), the repository system could automatically flag study X's findings as something appropriate like "needs review."
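To make the "relies upon" idea a bit more concrete, here is a minimal sketch of how such a repository might propagate a flag, assuming a simple in-memory dependency graph. Everything here (`CritiqueRepository`, `add_paper`, `report_flaw`, the status strings, the paper IDs) is hypothetical and made up for illustration; it is not an existing system. The key design choice is that a paper records which earlier studies it *relies on*, not merely which it cites, so flagging a flawed study can walk that graph and mark every direct or transitive dependent as "needs review".

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class Paper:
    """A study in the hypothetical repository."""
    paper_id: str
    status: str = "ok"  # "ok", "flawed", or "needs review"
    criticisms: list[str] = field(default_factory=list)


class CritiqueRepository:
    """Hypothetical registry that tracks reliance on earlier findings
    (not just citation) and propagates problems to dependent studies."""

    def __init__(self) -> None:
        self.papers: dict[str, Paper] = {}
        # Maps a paper ID to the IDs of papers that explicitly rely on it.
        self.dependents: dict[str, set[str]] = defaultdict(set)

    def add_paper(self, paper_id: str, relies_on: tuple[str, ...] = ()) -> None:
        self.papers[paper_id] = Paper(paper_id)
        for upstream in relies_on:
            self.dependents[upstream].add(paper_id)

    def criticisms_of(self, paper_id: str) -> list[str]:
        """What an author relying on paper_id would check and report."""
        return list(self.papers[paper_id].criticisms)

    def report_flaw(self, paper_id: str, reason: str) -> list[str]:
        """Mark paper_id as flawed, then breadth-first walk its dependents.
        Dependents still marked "ok" become "needs review"; returns the IDs
        of all direct and transitive dependents reached."""
        paper = self.papers[paper_id]
        paper.status = "flawed"
        paper.criticisms.append(reason)

        reached: list[str] = []
        queue = deque([paper_id])
        seen = {paper_id}
        while queue:
            current = queue.popleft()
            for dep in sorted(self.dependents[current]):
                if dep in seen:
                    continue
                seen.add(dep)
                if self.papers[dep].status == "ok":
                    self.papers[dep].status = "needs review"
                reached.append(dep)
                queue.append(dep)
        return reached


# Usage: Y is an original experiment, X builds on Y, Z builds on X,
# and W is unrelated.
repo = CritiqueRepository()
repo.add_paper("Y")
repo.add_paper("X", relies_on=("Y",))
repo.add_paper("Z", relies_on=("X",))
repo.add_paper("W")
flagged = repo.report_flaw("Y", "treatment labels possibly swapped")
print(flagged)  # ['X', 'Z']
```

A real system would of course need persistent storage, a way for authors to declare reliance when publishing, and some moderation of what counts as a valid criticism; the sketch only shows that the propagation step itself is simple once the "relies upon" links exist.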

But I'm curious what other people think! (In particular, are there already alternatives/things that try to deal with this problem, is the underlying problem actually that significant/widespread, is such a system feasible, would it have enough buy-in and would it help that much even if it did have buy-in, etc.)

(More broadly, I've long had a pipe dream of some kind of system that allows detailed, collaborative literature mapping--an "Epistemap", if you will--but I think the system I describe above would be much less ambitious/more practical).

[1] This is a moderately-long digression/is not really all that important, but I can provide details if anyone is curious.

[2] Of course, especially in its early stage with limited adoption this system would be prone to false negatives (e.g., a criticism exists but is not listed in the repository), but aside from the "false sense of confidence" argument I don't see how this could make it worse than the status quo.

A system somewhat similar to what you are talking about exists. Pubpeer, for example, is a place where post-publication peer reviews of papers are posted publicly (https://pubpeer.com/static/about). I'm not sure at this stage how much it is used, but in principle it allows you to see criticism on any article.

Scite.ai is also relevant - it uses AI to try and say whether citations of an article are positive or negative. I don't know about its accuracy.

Neither of these address the problem of what happens if a study fails to replicate - often what happens is that the original study continues to be cited more than the replication effort.

Thanks for sharing those sources! I think a system like Pubpeer could partially address some of the issues/functions I mentioned, although I don't think it quite goes as far as I was hoping (in part because it doesn't seem to have the "relies upon" aspect, but I also couldn't find many criticisms/analyses in the fields I'm more familiar with, so it is hard to tell what kinds of analysis take place there). The Scite.ai system seems more interesting, in part because I have specifically thought it would be interesting to see whether machine learning could assist with this kind of semantically richer bibliometrics.

Also, I wouldn't judge based solely on this, but the Nature article you linked has this quote regarding Scite's accuracy: "According to Nicholson, eight out of every ten papers flagged by the tool as supporting or contradicting a study are correctly categorized."

A couple of other new publication models that might be worth looking at are discussed here (Octopus and Hypergraph, both of which are modular). This recent article about 'publomics' might also have interesting ideas. Happy to talk about any of this if you are thinking about doing something in the space.

Those both seem interesting! I'll definitely try to remember to reach out if I start doing more work in this field or on this project. Right now it's just a couple of ideas that keep nagging at me; I'm not exactly sure what to do with them, and they aren't currently the focus of my research. But if I could see options for progress (or even just some literature or discussion on the epistemap/repository concept, which I haven't really found yet), I'd probably be interested.

I think it's a really interesting, but also very difficult, idea. Perhaps one could identify a limited field of research where this would be especially valuable (or especially feasible, or ideally both), and try it out within that field as an experiment?

I would be very interested to know more if you have specific ideas of how to go about it.

Yeah, I have thought it would probably be good to find a field where it would be valuable (based on how much the field is struggling with these issues times the importance of its research), but I've also wondered whether it might be best to first look for a field with a receptive ethos, i.e., a field where a lot of researchers are open to trying the idea. (Of course, that would raise the question of whether it could get similar buy-in when applied to other fields, but the purpose of such an early test would be to answer "how useful is this when there is buy-in?")

At the same time, I recognize that it would probably be difficult, although I do wonder just how difficult it would be, or at least why exactly it might be difficult. Especially if the problem is mainly about buy-in, I think it would help to look at similar movements, like the shift towards peer review and the push for open data and data transparency: how did they convince journals and researchers to be more transparent and collaborative? If this system actually proved useful and feasible, I feel it might have a decent chance of eventually gaining traction (even if slowly).

The main concern I've had with the broader pipe dream I hinted at is "who does the mapping and manages the system?" Are the maps run by centralized authorities like journals or scientific associations (e.g., the APA)? Or is it mostly decentralized, in that objects in the literature (individual studies, datasets, regressions, findings) have centrally defined IDs, but all of the connections (e.g., "X finding depends on Y dataset", "X finding conflicts with Z finding") are defined by packages or layers that researchers can contribute to and download from, like a library or buffet? (The latter option could allow "curation" by journals, scientific associations, or anyone else.) However, I think the narrower system I initially described would not suffer from this problem to the same extent; at least, the problems would be no more significant than those incurred with peer review, since it mainly just asks: 1) Does your research criticize another study? 2) What studies and datasets does your research rely on?
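To make the decentralized option a bit more concrete, here is a minimal sketch of how it might look as a data structure, assuming a hypothetical data model that isn't taken from any existing system: objects are identified by string IDs like "finding:F1", connections are `depends_on` or `conflicts_with` edges (roughly the two questions above), and each contributor publishes a named layer of edges that a reader chooses to combine. The `affected_by` function is one example of the kind of rudimentary logic operation such a map could support: given something that has been criticized, list everything that directly or transitively relies on it.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Edge:
    source: str    # ID of the object making the claim (hypothetical ID scheme)
    relation: str  # hypothetical vocabulary: "depends_on" or "conflicts_with"
    target: str    # ID of the object being pointed at


@dataclass
class Layer:
    """A contributed package of connections, e.g. curated by a journal or a lab."""
    name: str
    edges: list[Edge] = field(default_factory=list)


def merge_layers(layers):
    """Combine the layers a reader has chosen to 'download' into one edge set."""
    return {edge for layer in layers for edge in layer.edges}


def criticized_ids(edges):
    """IDs of objects that at least one edge says something conflicts with."""
    return {e.target for e in edges if e.relation == "conflicts_with"}


def affected_by(criticized_id, edges):
    """Every object that directly or transitively depends on criticized_id."""
    dependents = defaultdict(set)  # target -> objects that depend on it
    for e in edges:
        if e.relation == "depends_on":
            dependents[e.target].add(e.source)
    seen, queue = set(), deque([criticized_id])
    while queue:  # breadth-first walk; `seen` keeps cycles from looping forever
        current = queue.popleft()
        for dependent in dependents[current]:
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


# Usage with made-up IDs: one "core" layer of dependencies, one critique layer.
core = Layer("core", [Edge("finding:F1", "depends_on", "dataset:D1"),
                      Edge("finding:F2", "depends_on", "finding:F1")])
critique = Layer("lab-x", [Edge("study:S9", "conflicts_with", "dataset:D1")])
edges = merge_layers([core, critique])

for target in sorted(criticized_ids(edges)):
    print(target, sorted(affected_by(target, edges)))
    # prints: dataset:D1 ['finding:F1', 'finding:F2']
```

Because the reader picks which layers to merge, "curation" in this sketch just means publishing a recommended set of layers; a journal and an individual lab would use the same mechanism.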

But I would definitely be interested to hear you elaborate on potential problems you see with the system. I have been interested in a project of this sort for years: I even did a small project last year to try out the literature mapping. It had mixed-to-positive results, in that it seemed potentially feasible and useful, but I couldn't find a good existing software platform that could do both visually appealing mapping and rudimentary logic operations. I just can't shake the desire to keep trying this and looking for research or commentary on the idea, but so far I really haven't found much... which in some ways just makes me more interested in pursuing it (since that could suggest it's a neglected idea, although it could also suggest that it's been deemed impractical).