On 17 February 2024, the mean length of the main text of the write-ups of Open Philanthropy’s largest grants in each of its 30 focus areas was only 2.50 paragraphs, whereas the mean amount was 14.2 M 2022-$[1]. For 23 of the 30 largest grants, it was just 1 paragraph. The calculations and information about the grants are in this Sheet.

Should the main text of the write-ups of Open Philanthropy’s large grants (e.g. at least 1 M$) be longer than 1 paragraph? I think greater reasoning transparency would be good, so I would like Open Philanthropy to have longer write-ups.

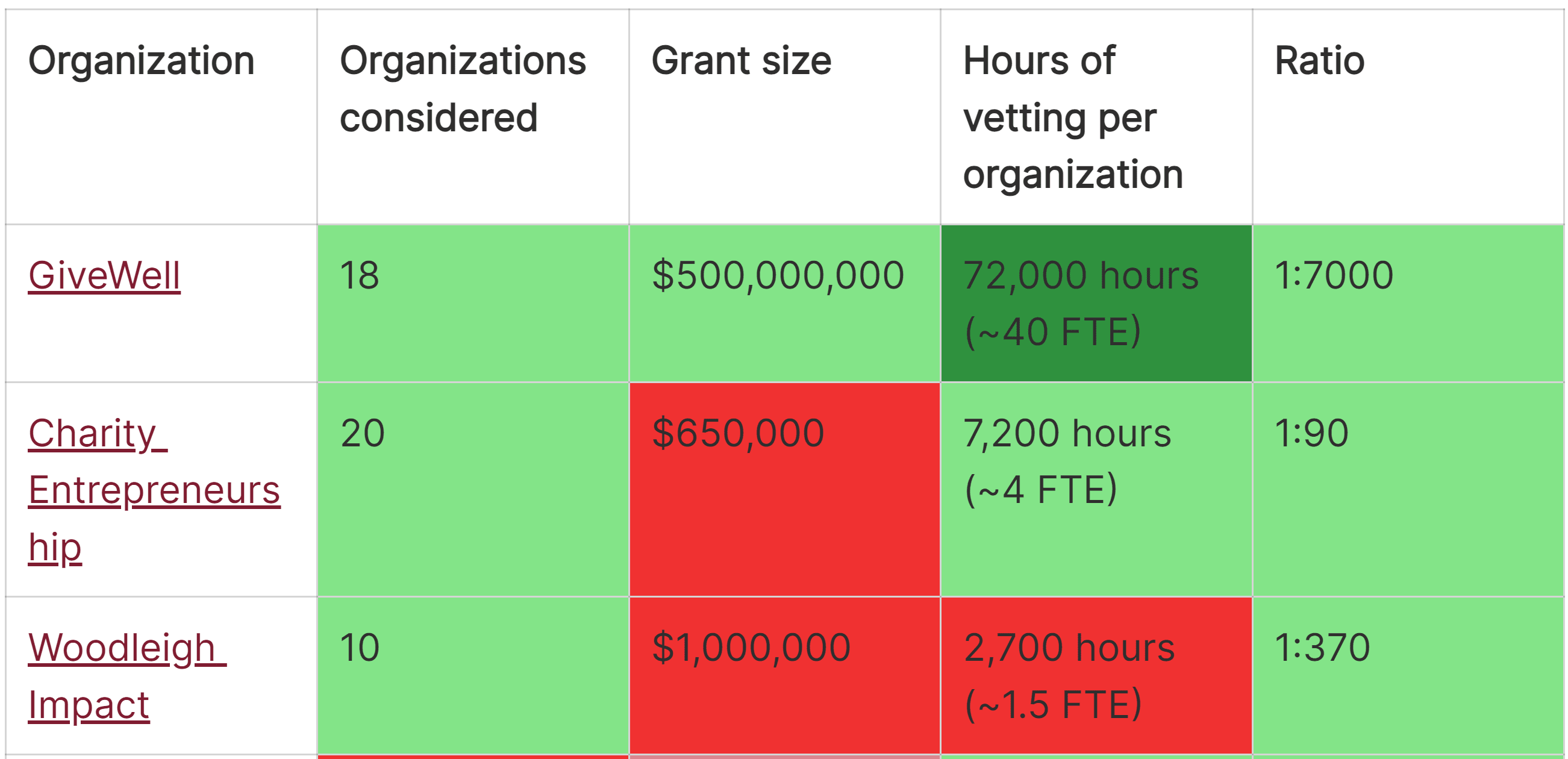

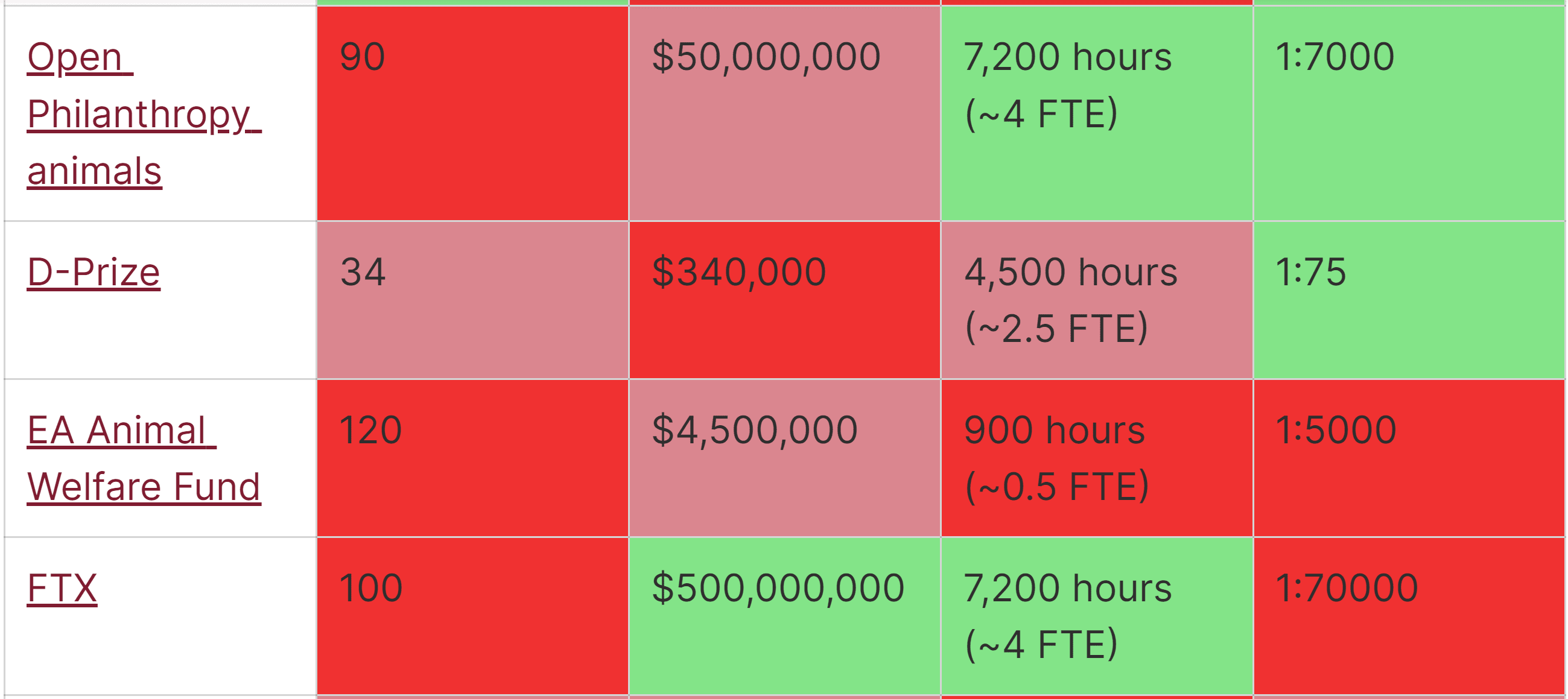

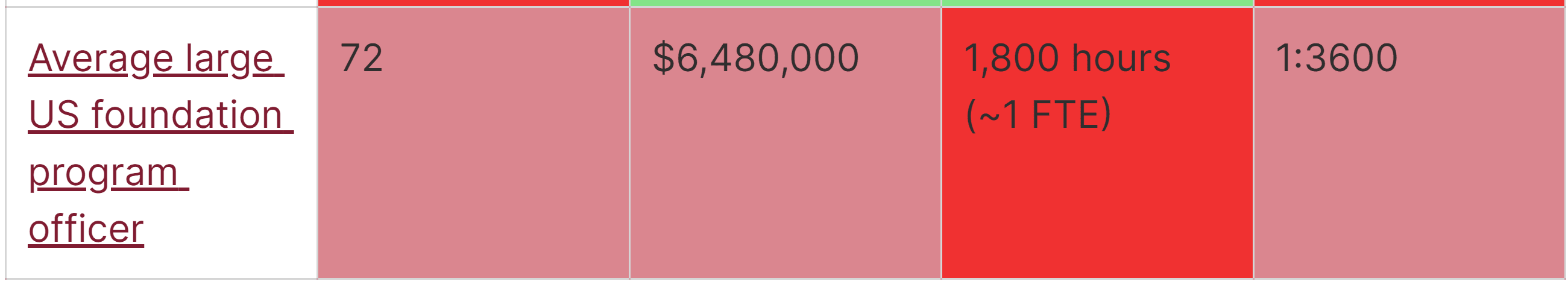

In terms of other grantmakers aligned with effective altruism[2]:

- Charity Entrepreneurship (CE) produces an in-depth report for each organisation it incubates (see CE’s research).

- Effective Altruism Funds has write-ups of 1 sentence for the vast majority of the grants of its 4 funds.

- Founders Pledge has write-ups of 1 sentence for the vast majority of the grants of its 4 funds.

- Future of Life Institute’s grants have write-ups roughly as long as Open Philanthropy’s.

- Longview Philanthropy’s grants have write-ups roughly as long as Open Philanthropy’s.

- Manifund's grants have write-ups (comments) of a few paragraphs.

- Survival and Flourishing Fund has write-ups of a few words for the vast majority of its grants.

I encourage all of the above except for CE to have longer write-ups. I focussed on Open Philanthropy in this post given it accounts for the vast majority of the grants aligned with effective altruism.

Some context:

- Holden Karnofsky posted about how Open Philanthropy was thinking about openness and information sharing in 2016.

- There was a discussion in early 2023 about whether Open Philanthropy should share a ranking of grants it had produced at the time.

[1]

Open Philanthropy has 17 broad focus areas, 9 under global health and wellbeing, 4 under global catastrophic risks (GCRs), and 4 under other areas. However, its grants are associated with 30 areas.

I define the main text as everything besides headings, excluding paragraphs of the following types:

- “Grant investigator: [name]”.

- “This page was reviewed but not written by the grant investigator. [Organisation] staff also reviewed this page prior to publication”.

- “This follows our [dates with links to previous grants to the organisation] support, and falls within our focus area of [area]”.

- “The grant amount was updated in [date(s)]”.

- “See [organisation's] page on this grant for more details”.

- “This grant is part of our Regranting Challenge. See the Regranting Challenge website for more details on this grant”.

- “This is a discretionary grant”.

I count lists of bullets as 1 paragraph.
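The counting rule above can be sketched as a small function. This is a minimal sketch, assuming write-ups are plain text with blank-line-separated paragraphs, `#` headings and `-`/`*` bullets; the function name, the regular expressions and the example write-up are hypothetical approximations of the excluded paragraph types, not the procedure actually used to fill in the Sheet.

```python
import re

# Hypothetical patterns approximating the excluded paragraph types listed above.
# They are illustrative assumptions, not the exact rules behind the Sheet.
EXCLUDED_PATTERNS = [
    r"Grant investigator:",
    r"This page was reviewed but not written by the grant investigator\.",
    r"This follows our .* support, and falls within our focus area of",
    r"The grant amount was updated in",
    r"See .* page on this grant for more details",
    r"This grant is part of our Regranting Challenge\.",
    r"This is a discretionary grant",
]


def count_main_text_paragraphs(write_up: str) -> int:
    """Count main-text paragraphs in a plain-text grant write-up.

    Assumes paragraphs are separated by blank lines, headings start with '#',
    and bullet items start with '- ' or '* '. Consecutive bullet blocks are
    treated as 1 list and therefore count as 1 paragraph.
    """
    count = 0
    in_list = False  # True while inside a run of bullet blocks
    for block in re.split(r"\n\s*\n", write_up.strip()):
        text = block.strip()
        if text.startswith(("- ", "* ")):
            if not in_list:
                count += 1  # the whole list counts as 1 paragraph
            in_list = True
            continue
        in_list = False
        if not text or text.startswith("#"):
            continue  # skip empty blocks and headings
        if any(re.match(pattern, text) for pattern in EXCLUDED_PATTERNS):
            continue  # skip boilerplate paragraphs
        count += 1
    return count


# Hypothetical write-up, not taken from any real grant page.
example = (
    "Grant investigator: Jane Doe\n\n"
    "## Rationale\n\n"
    "First paragraph.\n\n"
    "- bullet a\n- bullet b\n\n"
    "Second paragraph.\n\n"
    "This is a discretionary grant."
)
print(count_main_text_paragraphs(example))  # 3
```

Matching only at the start of each paragraph (`re.match`) keeps the rule close to the listed templates, which all begin with fixed wording.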

[2]

The grantmakers are ordered alphabetically.

Thanks for the detailed comment. I strongly upvoted it.

I agree the number of words per grant is far from an ideal proxy. At the same time, the median length of the write-ups in EA Funds’ database is 15.0 words. Accounting for what you write elsewhere does not change the median, because you only write longer write-ups for a small fraction of the grants, so the median information shared per grant is just a short sentence. I therefore assume donors do not have enough information to assess the median grant.
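To illustrate why longer write-ups for a small fraction of grants barely move the median, here is a toy calculation. The word counts are hypothetical, chosen only for illustration, and are not EA Funds’ actual data.

```python
from statistics import mean, median

# Hypothetical per-grant write-up lengths in words for 10 grants
# (illustrative numbers only, not EA Funds' actual data).
short_only = [12, 14, 15, 15, 15, 16, 18, 20, 22, 25]

# Same grants, but the one with the longest write-up (10 % of grants)
# also gets a longer write-up elsewhere adding 1,500 words.
with_long = short_only[:-1] + [short_only[-1] + 1500]

for label, lengths in [("short only", short_only), ("with long", with_long)]:
    print(f"{label}: mean = {mean(lengths):.1f}, median = {median(lengths):.1f}")
# short only: mean = 17.2, median = 15.5
# with long: mean = 167.2, median = 15.5
```

The mean jumps almost tenfold while the median stays at 15.5 words. If the longer write-ups went to grants below the median instead, the median could shift by a position or so in the sorted list, but it would still correspond to a short sentence as long as only a small fraction of grants are affected.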

On the other hand, donors do not necessarily need detailed information about all grants, because they could infer how much to trust EA Funds from the longer write-ups for a minority of them, such as the ones in your posts. I would have to recheck your longer write-ups, but I am not confident I can assess the quality of the grants with longer write-ups based on these alone. I suspect trusting the reasoning of EA Funds’ fund managers is a major reason for supporting EA Funds. I guess others and I like longer write-ups because transparency is often a proxy for good reasoning, but we had better look into the longer write-ups and assess EA Funds based on them rather than on the median information shared per grant.

At least a priori, I would expect the information shared about a grant to be proportional to the amount of effort put into assessing it, and this effort to be proportional to the amount granted, in which case the information shared about a grant would be proportional to the amount granted. The grants you assessed in LTFF’s most recent report were of 200 and 71 k$, and you wrote a few paragraphs about each of them. In contrast, CE’s seed funding per charity in 2023 ranged from 93 to 190 k$, yet they wrote reports of dozens of pages for each charity. This illustrates that CE shares much more information about the interventions it supports than EA Funds shares about the grants for which there are longer write-ups, so it is possible to have a better picture of CE’s work than of EA Funds’. This is not to say CE’s donors actually have a better picture of CE’s work than EA Funds’ donors have of EA Funds’ work; I do not know whether CE’s donors look into their reports. However, I guess it would still be good for EA Funds to share some in-depth analyses of their grants.
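Writing the a priori argument out, with $I$ the information shared about a grant, $E$ the effort put into assessing it, $A$ the amount granted, and $\alpha$, $\beta$ hypothetical proportionality constants assumed to be the same for both grants being compared:

```latex
I = \alpha E, \qquad E = \beta A
\quad\Longrightarrow\quad
I = \alpha \beta A
\quad\Longrightarrow\quad
\frac{I_1}{I_2} = \frac{A_1}{A_2}
```

Since all the amounts above lie between 71 and 200 k$, this would predict write-ups differing in length by at most a factor of about 200/71 ≈ 2.8, far from the gap between dozens of pages and a few paragraphs.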

I guess EA Funds would benefit from having researchers in that sense. I like that Founders Pledge produces lots of research informing the grantmaking of its funds.

How about just making some applications public, as Austin suggested? I actually think it would be good to make public the applications for all grants EA Funds makes, and maybe even rejected applications. Information that had better remain confidential could be removed from the public version of each application.