Adult film star Abella Danger apparently took a class on EA at the University of Miami, became convinced, and posted about EA to raise $10k for One for the World. She was PornHub's most popular female performer in 2023 and has ~10M followers on Instagram. Her post has ~15k likes, and the comments seem mostly positive.

I think this might be the class that @Richard Y Chappell🔸 teaches?

Thanks Abella and kudos to whoever introduced her to EA!

It looks like she did a giving season fundraiser for Helen Keller International, which she credits to the EA class she took. Maybe we will see her at a future EAG!

If you can get a better score than our human subjects did on any of METR's RE-Bench evals, send it to me and we will fly you out for an onsite interview.

Caveats:

- you're employable (we can sponsor visas from most but not all countries)

- you use the same hardware

- honor system that you didn't take more time than our human subjects (8 hours). If you take more, still send it to me and we will probably still be interested in talking

(Crossposted from Twitter.)

EA Awards

- I feel worried that the ratio of the amount of criticism that one gets for doing EA stuff to the amount of positive feedback one gets is too high

- Awards are a standard way to counteract this

- I would like to explore having some sort of awards thingy

- I currently feel most excited about something like: a small group of people solicit nominations and then choose a short list of people to be voted on by Forum members, and then the winners are presented at a session at EAG BA

- I would appreciate feedback on:

- Whether people think this is a good idea

- How to frame this - I want to avoid being seen as speaking on behalf of all EAs

- Also if anyone wants to volunteer to co-organize with me I would appreciate hearing that

The way they're usually done, awards counteract the negative:positive feedback ratio for a tiny group of people. I think it would be better to give positive feedback to a much larger group of people, but I don't have any good ideas about how to do that. Maybe just give a lot of awards?

Animal Justice Appreciation Note

Animal Justice et al. v A.G of Ontario 2024 was recently decided and struck down large portions of Ontario's ag-gag law. A blog post is here. The suit was partially funded by ACE, which presumably means that many of the people reading this deserve partial credit for donating to support it.

Thanks to Animal Justice (Andrea Gonsalves, Fredrick Schumann, Kaitlyn Mitchell, Scott Tinney), co-applicants Jessica Scott-Reid and Louise Jorgensen, and everyone who supported this work!

EA in a World Where People Actually Listen to Us

I had considered calling the third wave of EA "EA in a World Where People Actually Listen to Us".

Leopold's situational awareness memo has become a salient example of this for me. I used to sometimes think that arguments about whether we should avoid discussing the power of AI in order to avoid triggering an arms race were a bit silly and self-important, because obviously defense leaders aren't going to be listening to some random internet charity nerds and changing policy as a result.

Well, they are and they are. Let's hope it's for the better.

Seems plausible that the impact of that single individual act is so negative that the aggregate impact of EA is negative.

I think people should reflect seriously upon this possibility and not fall prey to wishful thinking (let's hope speeding up the AI race and making it superpower powered is the best intervention! it's better if everyone warning about this was wrong and Leopold is right!).

The broader story here is that EA prioritization methodology is really good for finding highly leveraged spots in the world, but there isn't a good methodology for figuring out what to do in such places, and there also isn't a robust pipeline for promoting virtues and virtuous actors to such places.

I'm not sure to what extent the Situational Awareness Memo or Leopold himself are representatives of 'EA'.

On the pro side:

- Leopold thinks AGI is coming soon, will be a big deal, and that solving the alignment problem is one of the world's most important priorities

- He used to work at GPI & FTX, and formerly identified with EA

- He (almost certainly) personally knows lots of EA people in the Bay

On the con side:

- EA isn't just AI Safety (yet), so having short timelines/high importance on AI shouldn't be sufficient to make someone an EA?[1]

- EA also shouldn't just refer to a specific subset of the Bay Culture (please), or at least we need some more labels to distinguish different parts of it in that case

- Many EAs have disagreed with various parts of the memo, e.g. Gideon's well received post here

- Since his EA institutional history he moved to OpenAI (mixed)[2] and now runs an AGI investment firm.

- By self-identification, I'm not sure I've seen Leopold identify as an EA at all recently.

This again comes down to the nebulousness of what 'being an EA' means.[3] I have no doubts at all that, given what Leopold thinks is the way to have the most impact he'll be very effective at ach... (read more)

In my post, I suggested that one possible future is that we stay at the "forefront of weirdness." Calculating moral weights, to use your example.

I could imagine though that the fact that our opinions might be read by someone with access to the nuclear codes changes how we do things.

I wish there was more debate about which of these futures is more desirable.

(This is what I was trying to get out with my original post. I'm not trying to make any strong claims about whether any individual person counts as "EA".)

I think he is pretty clearly an EA given he used to help run the Future Fund, or at most an only very recently ex-EA. Having said that, it's not clear to me this means that "EAs" are at fault for everything he does.

In most cases this is a rumor-based thing, but I have heard that a substantial chunk of the OP-adjacent EA-policy space has been quite hawkish for many years, and at least what I have heard is that a bunch of key leaders "basically agreed with the China part of situational awareness".

Again, people should really take this with a double-dose of salt, I am personally at like 50/50 of this being true, and I would love people like lukeprog or Holden or Jason Matheny or others high up at RAND to clarify their positions here. I am not attached to what I believe, but I have heard these rumors from sources that didn't seem crazy (but also various things could have been lost in a game of telephone, and being very concerned about China doesn't result in endorsing a "Manhattan project to AGI", though the rumors that I have heard did sound like they would endorse that)

Less rumor-based, I also know that Dario has historically been very hawkish, and "needing to beat China" was one of the top justifications historically given for why Anthropic does capability research. I have heard this from many people, so feel more comfortable saying it with fewer disclaimers, but am still only like... (read more)

Slightly independent to the point Habryka is making, which may well also be true, my anecdotal impression is that the online EA community / EAs I know IRL were much bigger on 'we need to beat China' arguments 2-4 years ago. If so, simple lag can also be part of the story here. In particular I think it was the mainstream position just before ChatGPT was released, and partly as a result I doubt an 'overwhelming majority of EAs involved in AI safety' disagree with it even now.

Example from August 2022:

https://www.astralcodexten.com/p/why-not-slow-ai-progress

So maybe (the argument goes) we should take a cue from the environmental activists, and be hostile towards AI companies...

This is the most common question I get on AI safety posts: why isn’t the rationalist / EA / AI safety movement doing this more? It’s a great question, and it’s one that the movement asks itself a lot...

Still, most people aren’t doing this. Why not?

Later, talking about why attempting a regulatory approach to avoiding a race is futile:

The biggest problem is China. US regulations don’t affect China. China says that AI leadership is a cornerstone of their national security - both as a massive boon to their surveillan...

Yep, my impression is that this is an opinion that people mostly adopted after spending a bunch of time in DC and engaging with governance stuff, and so is not something represented in the broader EA population.

My best explanation is that when working in governance, being pro-China is just very costly, and especially combining the belief that AI will be very powerful with the belief that there is no urgency to beat China to it seems very anti-memetic in DC, and so people working in the space started adopting those stances.

But I am not sure. There are also non-terrible arguments for beating China being really important (though they are mostly premised on alignment being relatively easy, which seems very wrong to me).

Maybe instead of "where people actually listen to us" it's more like "EA in a world where people filter the most memetically fit of our ideas through their preconceived notions into something that only vaguely resembles what the median EA cares about but is importantly different from the world in which EA didn't exist."

(I think the issue with Leopold is somewhat precisely that he seems to be quite politically savvy in a way that seems likely to make him a deca-multi-millionaire and politically influential, possibly at the cost of all of humanity. I agree Eliezer is not the best presenter, but his error modes are clearly enormously different.)

The thing about Yudkowsky is that, yes, on the one hand, every time I read him, I think he surely must be coming across as super-weird and dodgy to "normal" people. But on the other hand, actually, it seems like he HAS done really well in getting people to take his ideas seriously? Sam Altman was trolling Yudkowsky on twitter a while back about how many of the people running/founding AGI labs had been inspired to do so by his work. He got invited to write on AI governance for TIME despite having no formal qualifications or significant scientific achievements whatsoever. I think if we actually look at his track record, he has done pretty well at convincing influential people to adopt what were once extremely fringe views, whilst also succeeding in being seen by the wider world as one of the most important proponents of those views, despite an almost complete lack of mainstream, legible credentials.

Marcus Daniell appreciation note

@Marcus Daniell, cofounder of High Impact Athletes, came back from knee surgery and is donating half of his prize money this year. He projects raising $100,000. Through a partnership with Momentum, people can pledge to donate for each point he gets; he has raised $28,000 through this so far. It's cool to see this, and I'm wishing him luck for his final year of professional play!

First in-ovo sexing in the US

Egg Innovations announced that they are "on track to adopt the technology in early 2025." Approximately 300 million male chicks are ground up alive in the US each year (since only female chicks are valuable) and in-ovo sexing would prevent this.

UEP originally promised to eliminate male chick culling by 2020; needless to say, they didn't keep that commitment. But better late than never!

Congrats to everyone working on this, including @Robert - Innovate Animal Ag, who founded an organization devoted to pushing this technology.[1]

- ^ Egg Innovations says they can't disclose details about who they are working with for NDA reasons; if anyone has more information about who deserves credit for this, please comment!

Sam Bankman-Fried's trial is scheduled to start October 3, 2023, and Michael Lewis’s book about FTX comes out the same day. My hope and expectation is that neither will be focused on EA,[1] but several people have recently asked me whether they should prepare anything, so I wanted to quickly record my thoughts.

The Forum feels like it’s in a better place to me than when FTX declared bankruptcy: the moderation team at the time was Lizka, Lorenzo, and myself, but it is now six people, and they’ve put in a number of processes to make it easier to deal with a sudden growth in the number of heated discussions. We have also made a number of design changes, notably to the community section.

CEA has also improved our communications and legal processes so we can be more responsive to news, if we need to (though some of the constraints mentioned here are still applicable).

Nonetheless, I think there’s a decent chance that viewing the Forum, Twitter, or news media could become stressful for some people, and you may want to preemptively create a plan for engaging with that in a healthy way.

- ^ This market is thinly traded but is currently predicting that Le

My hope and expectation is that neither will be focused on EA

I'd be surprised [p<0.1] if EA was not a significant focus of the Michael Lewis book – but agree that it's unlikely to be the major topic. Many leaders at FTX and Alameda Research are closely linked to EA. SBF often, and publicly, said that effective altruism was a big reason for his actions. His connection to EA is interesting both for understanding his motivation and as a story-telling element. There are Manifold prediction markets on whether the book would mention 80,000 Hours (74%), Open Philanthropy (74%), and GiveWell (80%), but these markets aren't traded a lot and are not very informative.[1]

This video titled The Fake Genius: A $30 BILLION Fraud (2.8 million views, posted 3 weeks ago) might give a glimpse of how EA could be handled. The video touches on EA but isn't centred on it. It discusses the role EAs played in motivating SBF to do earning to give, and in starting Alameda Research and FTX. It also points out that, after the fallout at Alameda Research, 'higher-ups' at CEA were warned about SBF but supposedly ignored the warnings. Overall, the video is mainly interested in the mechanisms of how the suspected ... (read more)

Yeah, unfortunately I suspect that "he claimed to be an altruist doing good! As part of this weird framework/community!" is going to be a substantial part of what makes this an interesting story for writers/media, and what makes it more interesting than "he was doing criminal things in crypto" (which I suspect is just not that interesting on its own at this point, even at such a large scale).

The Panorama episode briefly mentioned EA. Peter Singer spoke for a couple of minutes and EA was mainly viewed as a charity that would be missing out on money. There seemed to be a lot more interest in the internal discussions within FTX, crypto drama, the politicians, celebrities etc.

Maybe Panorama is an outlier but potentially EA is not that interesting to most people or seemingly too complicated to explain if you only have an hour.

Yeah I was interviewed for a podcast by a Canadian station on this topic (cos a Canadian hedge fund was very involved). iirc they had 6 episodes but dropped the EA angle because it was too complex.

Agree with this and also with the point below that the EA angle is kind of too complicated to be super compelling for a broad audience. I thought this New Yorker piece's discussion (which involved EA a decent amount in a way I thought was quite fair -- https://www.newyorker.com/magazine/2023/10/02/inside-sam-bankman-frieds-family-bubble) might give a sense of magnitude (though the NYer audience is going to be more interested in these sorts of nuances than most).

The other factors I think are: 1. to what extent there are vivid new tidbits or revelations in Lewis's book that relate to EA and 2. the drama around Caroline Ellison and other witnesses at trial and the extent to which that is connected to EA; my guess is the drama around the cooperating witnesses will seem very interesting on a human level, though I don't necessarily think that will point towards the effective altruism community specifically.

The Forum feels like it’s in a better place to me than when FTX declared bankruptcy: the moderation team at the time was Lizka, Lorenzo, and myself, but it is now six people, and they’ve put in a number of processes to make it easier to deal with a sudden growth in the number of heated discussions. We have also made a number of design changes, notably to the community section.

This is a huge relief to hear. I noticed some big positive differences, but I couldn't tell where from. Thank you.

If I understand this correctly, maybe not in the trial itself:

Accordingly, the defendant is precluded from referring to any alleged prior good acts by the defendant, including any charity or philanthropy, as indicative of his character or his guilt or innocence.

I guess technically the prosecution could still bring it up.

Thoughts on the OpenAI Board Decisions

A couple months ago I remarked that Sam Bankman-Fried's trial was scheduled to start in October, and people should prepare for EA to be in the headlines. It turned out that his trial did not actually generate much press for EA, but a month later EA is again making news as a result of recent OpenAI board decisions.

A couple quick points:

- It is often the case that people's behavior is much more reasonable than what is presented in the media. It is also sometimes the case that the reality is even stupider than what is presented. We currently don't know what actually happened, and should hold multiple hypotheses simultaneously.[1]

- It's very hard to predict the outcome of media stories. Here are a few takes I've heard; we should consider that any of these could become the dominant narrative.

- Vinod Khosla (The Information): “OpenAI’s board members’ religion of ‘effective altruism’ and its misapplication could have set back the world’s path to the tremendous benefits of artificial intelligence”

- John Thornhill (Financial Times): One entrepreneur who is close to OpenAI says the board was “incredibly principled and brave” to confront Altman, even if it

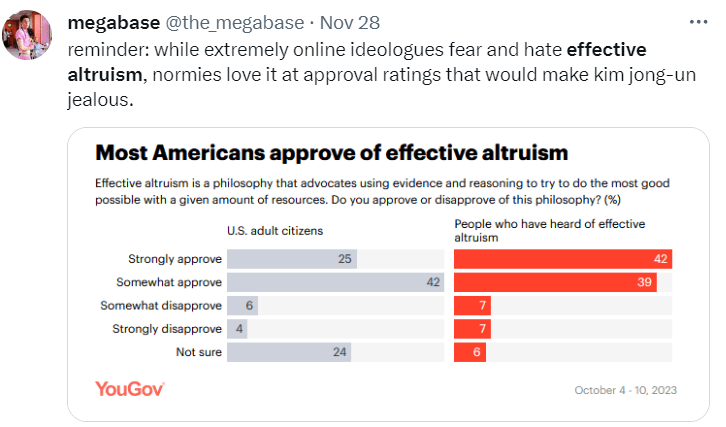

I've commented before that FTX's collapse had little effect on the average person’s perception of EA

Just for the record, I think the evidence you cited there was shoddy, and I think we are seeing continued references to FTX in basically all coverage of the OpenAI situation, showing that it did clearly have a lasting effect on the perception of EA.

Reputation is lazily-evaluated. Yes, if you ask a random person on the street what they think of you, they won't know, but when your decisions start influencing them, they will start getting informed, and we are seeing really very clear evidence that when people start getting informed, FTX is heavily influencing their opinion.

The Wikipedia page on effective altruism mentions Bankman-Fried 11 times, and after/during the OpenAI story, it was edited to include a lot of criticism, ~half of which was written after FTX (e.g. it quotes this tweet https://twitter.com/sama/status/1593046526284410880 )

It's the first place I would go to if I wanted an independent take on "what's effective altruism?" I expect many others to do the same.

There are a lot of recent edits on that article by a single editor, apparently a former NY Times reporter (the edit log is public). From the edit summaries, those edits look rather unfriendly, and the article as a whole feels negatively slanted to me. So I'm not sure how much weight I'd give that article specifically.

Sure, here are the top hits for "Effective Altruism OpenAI" (I did no cherry-picking, this was the first search term that I came up with, and I am just going top to bottom). Each one mentions FTX in a way that pretty clearly matters for the overall article:

- Bloomberg: "What is Effective Altruism? What does it mean for AI?"

- "AI safety was embraced as an important cause by big-name Silicon Valley figures who believe in effective altruism, including Peter Thiel, Elon Musk and Sam Bankman-Fried, the founder of crypto exchange FTX, who was convicted in early November of a massive fraud."

- Reddit "I think this was an Effective Altruism (EA) takeover by the OpenAI board"

- Top comment: " I only learned about EA during the FTX debacle. And was unaware until recently of its focus on AI. Since been reading and catching up …"

- WSJ: "How a Fervent Belief Split Silicon Valley—and Fueled the Blowup at OpenAI"

- "Coming just weeks after effective altruism’s most prominent backer, Sam Bankman-Fried, was convicted of fraud, the OpenAI meltdown delivered another blow to the movement, which believes that carefully crafted artificial-intelligence systems, imbued with the correct human values, will yie

I actually did that earlier, then realized I should clarify what you were trying to claim. I will copy the results in below, but even though they support the view that FTX was not a huge deal I want to disclaim that this methodology doesn't seem like it actually gets at the important thing.

But anyway, my original comment text:

As a convenience sample I searched twitter for "effective altruism". The first reference to FTX doesn't come until tweet 36, which is a link to this. Honestly it seems mostly like a standard anti-utilitarianism complaint; it feels like FTX isn't actually the crux.

In contrast, I see 3 e/acc-type criticisms before that, two "I like EA but this AI stuff is too weird" things (including one retweeted by Yann LeCun??), two "EA is tech-bro/not diverse" complaints and one thing about Wytham Abbey.

And this (survey discussed/criticized here):

Possible Vote Brigading

We have received an influx of people creating accounts to cast votes and comments over the past week, and we are aware that people who feel strongly about human biodiversity sometimes vote brigade on sites where the topic is being discussed. Please be aware that voting and discussion about some topics may not be representative of the normal EA Forum user base.

Huh, seems like you should just revert those votes, or turn off voting for new accounts. Seems better than just having people be confused about vote totals.

Note: I went through Bob's comments and think it likely they were brigaded to some extent. I didn't think they were in general excellent, but they certainly were not negative-karma comments. I strong-upvoted the ones that were below zero, which was about three or four.

I think it is valid to use the strong upvote as a means of countering brigades, at least where a moderator has confirmed there is reason to believe brigading is active on a topic. My position is limited to comments below zero, because the harmful effects of brigades suppressing good-faith comments from visibility and affirmatively penalizing good-faith users are particularly acute. Although there are mod-level solutions, Ben's comments suggest they may have some downsides and require time, so I feel a community corrective that doesn't require moderators to pull away from more important tasks has value.

I also think it is important for me to be transparent about what I did and accept the community's judgment. If the community feels that is an improper reason to strong upvote, I will revert my votes.

Edit: is to are

The Forum moderation team has been made aware that Kerry Vaughn published a tweet thread that, among other things, accuses a Forum user of doing things that violate our norms. Most importantly:

Where he crossed the line was his decision to dox people who worked at Leverage or affiliated organizations by researching the people who worked there and posting their names to the EA forum

The user in question said this information came from searching LinkedIn for people who had listed themselves as having worked at Leverage and related organizations.

This is not "doxing" and it’s unclear to us why Kerry would use this term: for example, there was no attempt to connect anonymous and real names, which seems to be a key part of the definition of “doxing”. In any case, we do not consider this to be a violation of our norms.

At one point Forum moderators got a report that some of the information about these people was inaccurate. We tried to get in touch with the then-anonymous user, and when we were unable to, we redacted the names from the comment. Later, the user noticed the change and replaced the names. One of CEA’s staff asked the user to encode the names to allow those people mor... (read more)

To share a brief thought, the above comment gives me a bad juju because it puts a contested perspective into a forceful and authoritative voice, while being long enough that one might implicitly forget that this is a hypothetical authority talking[1]. So it doesn't feel to me like a friendly conversational technique. I would have preferred it to be in the first person.

- ^

Garcia Márquez has a similar but longer thing going on in The Handsomest Drowned Man In The World, where everything after "if that magnificent man had lived in the village" is a hypothetical.

Startups aren't good for learning

I fairly frequently have conversations with people who are excited about starting their own project and, within a few minutes, convince them that they would learn less starting a project than they would working for someone else. I think this is basically the only opinion I have where I can regularly convince EAs to change their mind in a few minutes of discussion and, since there is now renewed interest in starting EA projects, it seems worth trying to write down.

It's generally accepted that optimal learning environments have a few properties:

- You are doing something that is just slightly too hard for you.

- In startups, you do whatever needs to get done. This will often be things that are way too easy (answering a huge number of support requests) or way too hard (pitching a large company CEO on your product when you've never even sold bubblegum before).

- Established companies, by contrast, put substantial effort into slotting people into roles that are approximately at their skill level (though you still usually need to put in proactive effort to learn things at an established company).

- Repeatedly practicing a skill in "chunks"

- Similar to the last poin

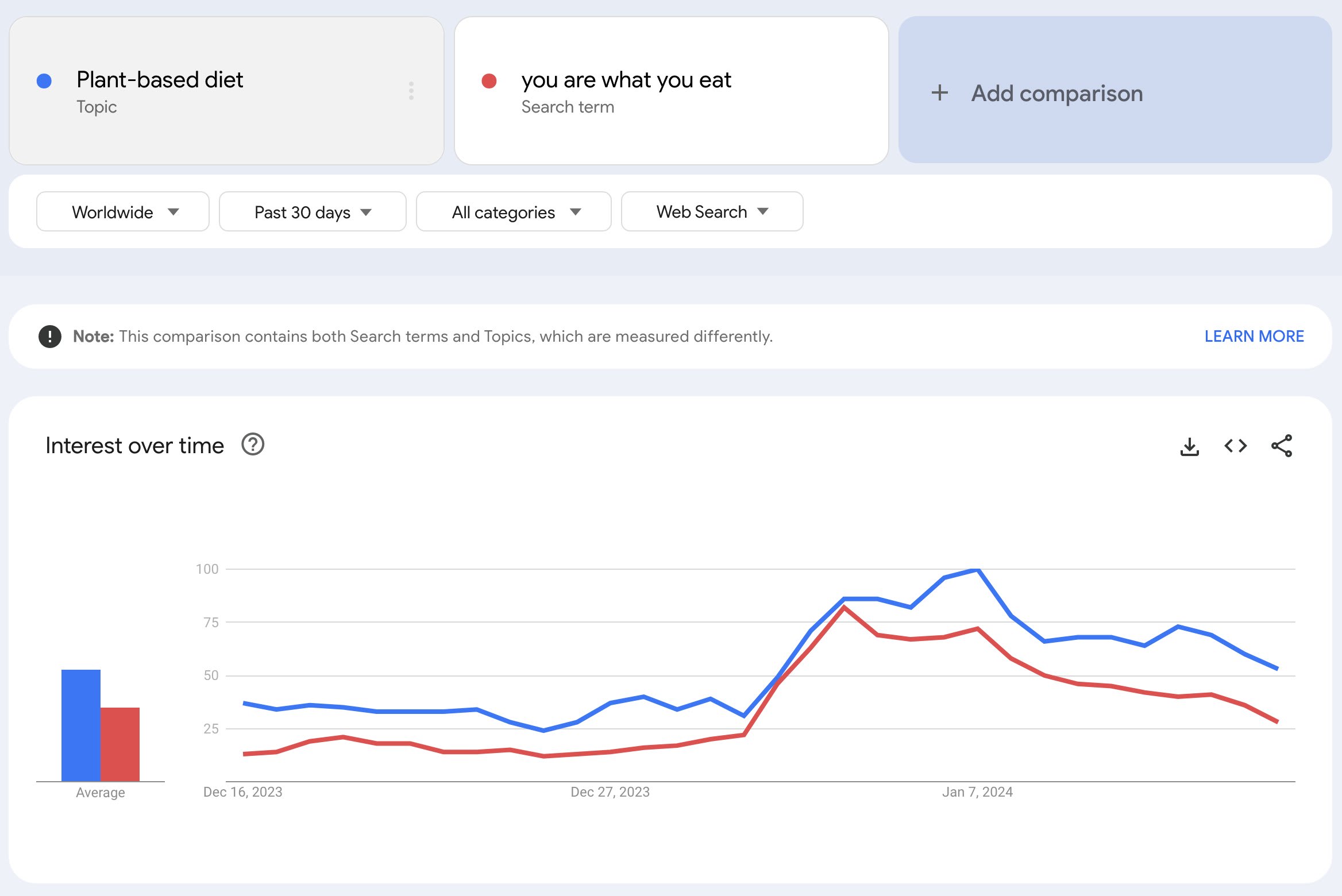

Plant-based burgers now taste better than beef

The food sector has witnessed a surge in the production of plant-based meat alternatives that aim to mimic various attributes of traditional animal products; however, overall sensory appreciation remains low. This study employed open-ended questions, preference ranking, and an identification question to analyze sensory drivers and barriers to liking four burger patties, i.e., two plant-based (one referred to as pea protein burger and one referred to as animal-like protein burger), one hybrid meat-mushroom (75% meat and 25% mushrooms), and one 100% beef burger. Untrained participants (n=175) were randomly assigned to blind or informed conditions in a between-subject study. The main objective was to evaluate the impact of providing information about the animal/plant-based protein source/type, and to obtain product descriptors and liking/disliking levels from consumers. Results from the ranking tests for blind and informed treatments showed that the animal-like protein [Impossible] was the most preferred product, followed by the 100% beef burger. Moreover, in the blind condition, there was no significant difference in preferences between t...

Interesting! Some thoughts:

- I wonder if the preparation was "fair", and I'd like to see replications with different beef burgers. Maybe they picked a bad beef burger?

- Who were the participants? E.g. students at a university, and so more liberal-leaning and already accepting of plant-based substitutes?

- Could participants reliably distinguish the beef burger and the animal-like plant-based burger in the blind condition?

(I couldn't get access to the paper.)

Reversing start up advice

In the spirit of reversing advice you read, some places where I would give the opposite advice of this thread:

Be less ambitious

I don't have a huge sample size here, but the founders I've spoken to since the "EA has a lot of money so you should be ambitious" era started often seem to be ambitious in unhelpful ways. Specifically: I think they often interpret this advice to mean something like "think about how you could hire as many people as possible" and then they waste a bunch of resources on some grandiose vision without having validated that a small-scale version of their solution works.

Founders who instead work by themselves or with one or two people to try to really deeply understand some need and make a product that solves that need seem way more successful to me.[1]

Think about failure

The "infinite beta" mentality seems quite important for founders to have. "I have a hypothesis, I will test it, if that fails I will pivot in this way" seems like a good frame, and I think it's endorsed by standard start up advice (e.g. lean startup).

- ^

Of course, it's perfectly coherent to be ambitious about finding a really good value proposition. It's just that I worry t

Longform's missing mood

If your content is viewed by 100,000 people, making it more concise by one second saves an aggregate of one day across your audience. Respecting your audience means working hard to make your content shorter.
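As a rough check on that arithmetic, here is a minimal sketch. The function name and constant are just illustrative, and it assumes every viewer saves the same amount of time:

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds in a day


def aggregate_days_saved(viewers: int, seconds_saved_per_viewer: float) -> float:
    """Total audience time saved, in days, if every viewer saves the same amount."""
    return viewers * seconds_saved_per_viewer / SECONDS_PER_DAY


# 100,000 viewers each saving one second comes to a bit over one day in aggregate.
print(round(aggregate_days_saved(100_000, 1), 2))  # 1.16
```

So "one day" is a slight underestimate: 100,000 seconds is about 1.16 days.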

When the 80k podcast describes itself as "unusually in depth," I feel like there's a missing mood: maybe there's no way to communicate the ideas more concisely, but this is something we should be sad about, not a point of pride.[1]

- ^ I'm unfairly picking on 80k; I'm not aware of any long-form content which has this mood that I claim is missing.

Closing comments on posts

If you are the author of a post tagged "personal blog" (which notably includes all new Bostrom-related posts) and you would like to prevent new comments on your post, please email forum@centerforeffectivealtruism.org and we can disable them.

We know that some posters find the prospect of dealing with commenters so aversive that they choose not to post at all; this seems worse to us than posting with comments turned off.

Democratizing risk post update

Earlier this week, a post was published criticizing democratizing risk. This post was deleted by the (anonymous) author. The forum moderation team did not ask them to delete it, nor are we aware of their reasons for doing so.

We are investigating some likely Forum policy violations, however, and will clarify the situation as soon as possible.

EA Three Comma Club

I'm interested in EA organizations that can plausibly be said to have improved the lives of over a billion individuals. Ones I'm currently aware of:

- Shrimp Welfare Project – they provide this Guesstimate, which has a mean estimate of 1.2B shrimps per year affected by welfare improvements that they have pushed

- Aquatic Life Institute – they provide this spreadsheet, though I agree with Bella that it's not clear where some of the numbers are coming from.

Are there any others?

We are banning stone and their alternate account for one month for messaging users and accusing others of being sock puppets, even after the moderation team asked them to stop. If you believe that someone has violated Forum norms such as creating sockpuppet accounts, please contact the moderators.

@lukeprog's investigation into Cryonics and Molecular nanotechnology seems like it may have relevant lessons for the nascent attempts to build a mass movement around AI safety:

First, early advocates of cryonics and MNT focused on writings and media aimed at a broad popular audience, before they did much technical, scientific work. These advocates successfully garnered substantial media attention, and this seems to have irritated the most relevant established scientific communities (cryobiology and chemistry, respectively), both because many...

An EA Limerick

(Lacey told me this was not good enough to actually submit to the writing contest, so publishing it as a short form.)

An AI sat boxed in a room

Said Eliezer: "This surely spells doom!

With self-improvement recursive,

And methods subversive

It will just simply go 'foom'."

Working for/with people who are good at those skills seems like a pretty good bet to me.

E.g. "knowing how to attract people to work with you" – if person A has a manager who was really good at attracting people to work with them, and their manager is interested in mentoring, and person B is just learning how to attract people to work with them from scratch at their own startup, I would give very good odds that person A will learn faster.

I have recently been wondering what my expected earnings would be if I started another company. I looked back at the old 80k blog post arguing that there is some skill component to entrepreneurship, and noticed that, while serial entrepreneurs do have a significantly higher chance of a successful exit on their second venture, they raise their first rounds at substantially lower valuations. (Table 4 here.)

It feels so obvious to me that someone who's started a successful company in the past will be more likely to start one in the future, and I continue to b... (read more)

Person-affecting longtermism

This post points out that brain preservation (cryonics) is potentially quite cheap on a $/QALY basis because people who are reanimated will potentially live for a very long time with very high quality of life.

It seems reasonable to assume that reanimated people would funge against future persons, so I'm not sure if this is persuasive for those who don't adopt person-affecting views, but for those who do, it's plausibly very cost-effective.

This is interesting because I don't hear much about person-affecting longtermist causes.

Adult film star Abella Danger apparently took a class on EA at the University of Miami, became convinced, and posted about EA to raise $10k for One for the World. She was PornHub's most popular female performer in 2023 and has ~10M followers on Instagram. Her post has ~15k likes, and the comments seem mostly positive.

I think this might be the class that @Richard Y Chappell🔸 teaches?

Thanks Abella and kudos to whoever introduced her to EA!

High Impact Athletes is one effort in this direction.