TLDR: I'd like to have the ability to hide the author(s) of all the posts on my news feed so that I can read the content without knowing the source. I have some intuitions for why this might be good, but mainly I think adding the feature is easy and it lets us check whether or not it's good.

Is this the right place to post this? I can't remember if there's an obvious place to suggest forum features (I think there might have been a survey a while back but I might have imagined it). Since the bulk of this post is closer to "Why you might want this" than "Why you should add this to the forum you maintain", I think it's better posted publicly. Also, it seems likely this can be quickly made with a Chrome extension[1].

The feature

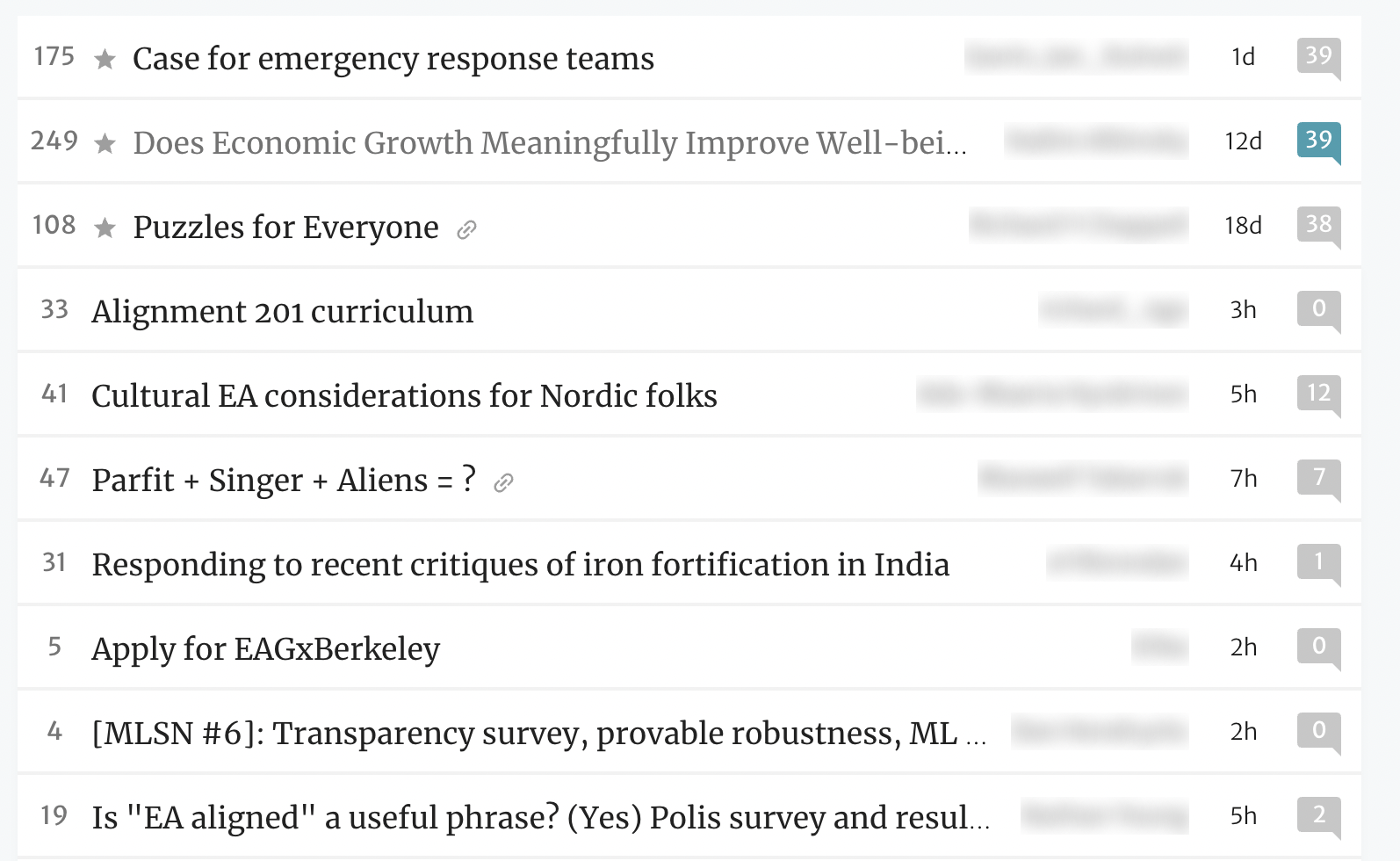

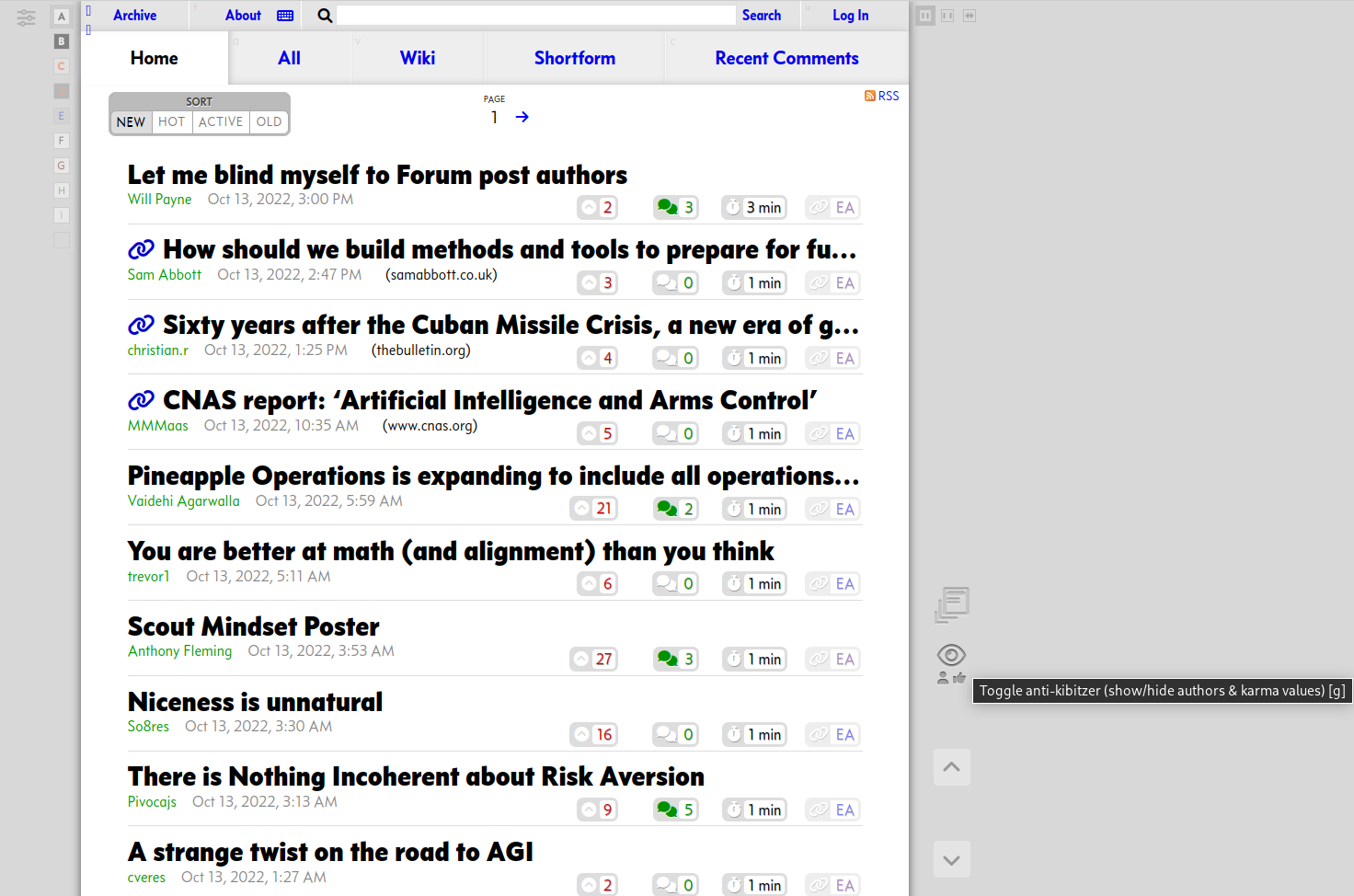

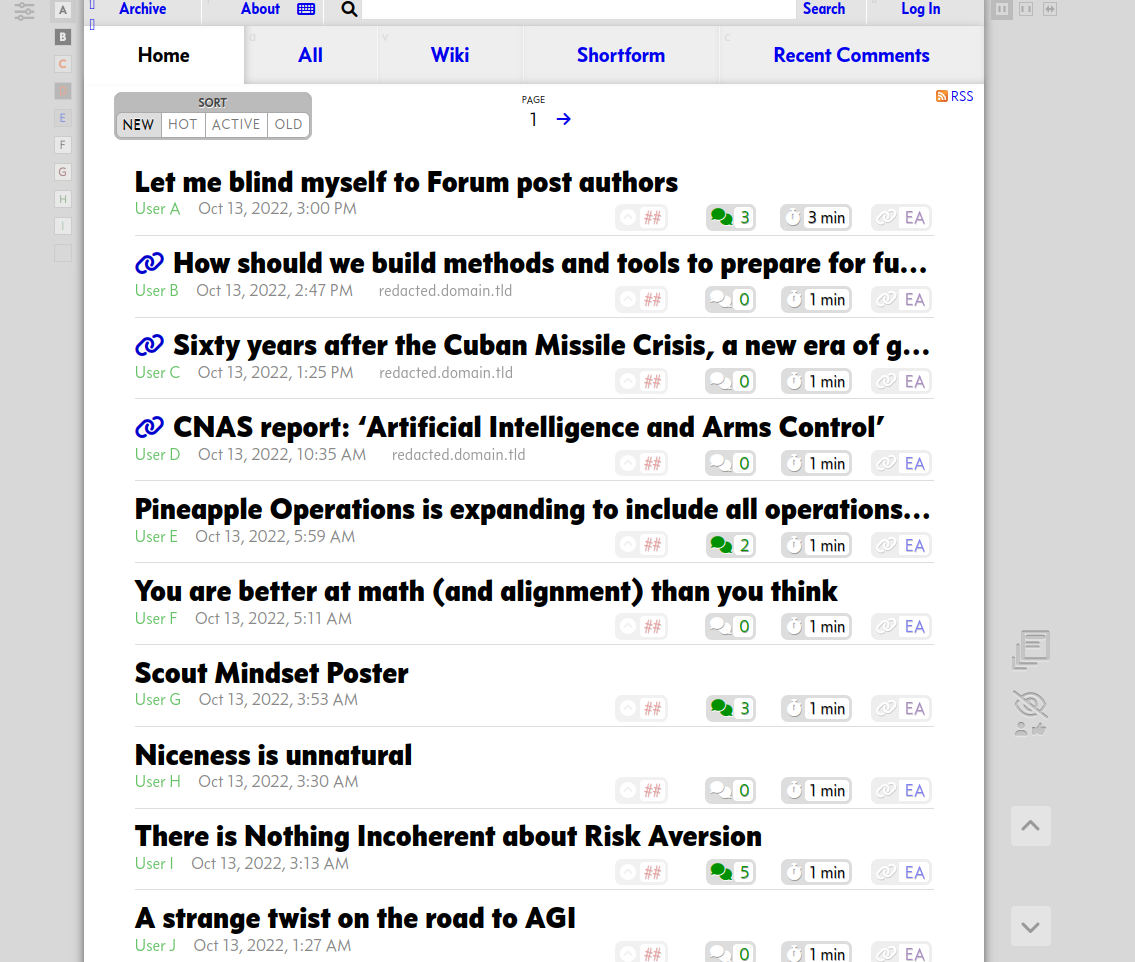

I'd like to be able to blur out author names wherever they appear like this...

(It could also be black boxes of regular size or random strings of nonsense characters like this ⏁⊑⟟⌇ ⟟⌇ ⋏⍜⋏⌇⟒⋏⌇⟒[2])

I'd like to be able to click on names to reveal them but would be ok with an easy toggle for the feature.
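A minimal sketch of how click-to-reveal might work, assuming author names still carry the `PostsUserAndCoauthors-lengthLimited` class used in the console snippet in footnote 1. The helper names (`blurAuthor`, `revealAuthor`, `toggleAuthor`, `blindFrontPage`) are hypothetical and not part of the forum's code, and the class name may change at any time.

```javascript
// Class name taken from the console snippet in this post; it may not work forever.
const AUTHOR_CLASS = "PostsUserAndCoauthors-lengthLimited";

// Hide the name's text and leave a blurred shadow in its place.
function blurAuthor(el) {
  el.dataset.blinded = "true";
  el.style.color = "transparent";
  el.style.textShadow = "0 0 10px rgba(0,0,0,0.5)";
}

// Clear the inline styles so the name renders normally again.
function revealAuthor(el) {
  el.dataset.blinded = "false";
  el.style.color = "";
  el.style.textShadow = "";
}

// Flip a single name between blurred and revealed.
function toggleAuthor(el) {
  if (el.dataset.blinded === "true") {
    revealAuthor(el);
  } else {
    blurAuthor(el);
  }
}

// Blur every author name found in `doc` and make the first click on a
// blurred name reveal it instead of following the link to the profile.
function blindFrontPage(doc) {
  const els = Array.from(doc.getElementsByClassName(AUTHOR_CLASS));
  els.forEach(el => {
    blurAuthor(el);
    el.addEventListener("click", event => {
      if (el.dataset.blinded === "true") {
        event.preventDefault();
        revealAuthor(el);
      }
    });
  });
  return els.length; // number of names that were blurred
}

// Only run automatically when a browser document is available.
if (typeof document !== "undefined") {
  blindFrontPage(document);
}
```

Pasted into the console, this blurs the names that are on the page at that moment. Posts loaded afterwards would need `blindFrontPage(document)` to be run again, and an "easy toggle" for the whole feature could call `toggleAuthor` on every matching element.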

A larger project would be for folks at CEA to run an A/B test where post authors are hidden for one group of users and not hidden for another, and to publish click-through rates and karma for the two groups. I think there will be a difference, but I'm not sure which setting I'd advocate for as a norm (I'll go into why below).
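To make the A/B idea concrete, here is a rough sketch of one way users could be split into two stable groups and click-through rates compared. Everything in it is an assumption for illustration: the function names, the shape of the impression records, and the choice of an FNV-1a-style hash over the user ID are hypothetical, not how the forum actually works.

```javascript
// FNV-1a-style 32-bit hash over the string's UTF-16 code units.
// Used only to get a stable, roughly even split of user IDs.
function hashString(s) {
  let h = 0x811c9dc5;
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h;
}

// The same user always lands in the same group, so their experience is consistent.
function assignGroup(userId) {
  return hashString(userId) % 2 === 0 ? "blinded" : "control";
}

// impressions: one record per post shown, e.g. { userId: "u1", clicked: true }.
// Returns clicks / impressions per group, or null for a group with no impressions.
function clickThroughRates(impressions, assign = assignGroup) {
  const totals = {
    blinded: { impressions: 0, clicks: 0 },
    control: { impressions: 0, clicks: 0 },
  };
  for (const { userId, clicked } of impressions) {
    const group = totals[assign(userId)];
    group.impressions += 1;
    if (clicked) group.clicks += 1;
  }
  const rate = g => (g.impressions === 0 ? null : g.clicks / g.impressions);
  return { blinded: rate(totals.blinded), control: rate(totals.control) };
}
```

This only computes the raw rates. Deciding whether a difference between the groups is real would need a proper significance test on top.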

EDIT: Since a lot of people are suggesting extensions / GreaterWrong-style solutions: one benefit of a solution integrated into the forum is the ability to separate blinded karma from unblinded karma (even if this is only on the back end). I'm mostly interested in what the frontpage looks like when karma is driven only by post content and not by authorship.
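As a sketch of what separating the two kinds of karma could look like on the back end, assume each vote is stored with a flag saying whether the author was hidden from the voter when they voted. The record shape and the function names below are hypothetical and are not the forum's actual data model.

```javascript
// votes: e.g. { postId: "A", value: 1, authorHidden: true }
// Returns a Map from postId to { blinded, unblinded } karma totals.
function tallyKarma(votes) {
  const tallies = new Map();
  for (const vote of votes) {
    const tally = tallies.get(vote.postId) || { blinded: 0, unblinded: 0 };
    if (vote.authorHidden) {
      tally.blinded += vote.value;
    } else {
      tally.unblinded += vote.value;
    }
    tallies.set(vote.postId, tally);
  }
  return tallies;
}

// Order post IDs as a frontpage driven only by blinded karma might, highest first.
function rankByBlindedKarma(tallies) {
  return [...tallies.entries()]
    .sort((a, b) => b[1].blinded - a[1].blinded)
    .map(([postId]) => postId);
}
```

Keeping both totals means the normal karma score can stay exactly as it is, while the blinded ranking can be compared against it later.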

Why I might (or might not) want this

I don't think I say anything super surprising in this section, so you're welcome to skip it.

It seems pretty obvious that the authorship of a post affects my click-through rate. There are good reasons for this. If I recognise a name as belonging to someone whose content I've read before and found useful, I think it's more likely I'll find their new content useful too. This is the same logic that led me to watch the new Game of Thrones spin-off, buy a second pair of Levi jeans, and listen to the latest Dodie album.

However, this policy makes me worse at exploring new sources of insight. Historically, I'm significantly more likely to re-read Harry Potter and the Methods of Rationality than to pick up any specific book on my to-read list[3].

This doesn't just affect me; through the karma system, it affects everyone else too. FYI, this is the factor which made me decide to post this[4]. I can think of a few examples offhand where I opened a post I might not have otherwise because I know the author personally and want to know what they're up to, and then ended up upvoting the post. If I extrapolate my policy to everyone else on the forum, I'd expect posts from authors who have a lot of friends in the community to do better than the exact same post posted anonymously[5]. I could train myself to stop doing this (and suggest others do so), but it would be a lot easier to just anonymise posts.

Separately, once I open a post, I'd guess there are a bunch of associations happening at a level of my thinking I'm not aware of when I read a name I recognise at the top of a post (à la the halo/horn effect). I'm mostly guessing about what these would be, but I expect people I like / have agreed with before get more of the benefit of the doubt and are engaged with less critically. This would mean I'd be more open to novel or unintuitive-seeming proposals from people I know who are already established in the community. I think this effect still exists when I do the obvious thing and approach posts with an open mind; you typically can't fix a bias just by knowing about it.

Here are some other reasons I might or might not want this feature:

- Anonymising the forum lets EA community builders see it a bit more like newcomers to the community would (although we can't remove your jargon dictionary)

- Relying on just titles might incentivise better titles from authors

- Some posts might be upvoted purely because it's valuable for the community to know what an influential person/org in EA is doing at the moment. If posts are anonymised, these posts might become less visible, which might be bad for coordination in the community

- ^

Here's some code which, when run in the Chrome console, will blur authors on the front page. It might not work forever.

```javascript
// Blur every author name on the front page (the class name may change over time)
Array.from(document.getElementsByClassName("PostsUserAndCoauthors-lengthLimited")).forEach(v => {
  v.style.color = "transparent"; // hide the real text
  v.style.textShadow = "0 0 10px rgba(0,0,0,0.5)"; // leave a blurred shadow in its place
});
```

- ^

I actually spent an embarrassingly long time looking for nice-looking alien character translators, and these aren't up to my standard. Sadly I couldn't find any that were aesthetic enough, so maybe we should stick with blur.

- ^

Probably the most embarrassing real-world example in this post.

- ^

I.e. I consider this factor sufficient to suggest this feature and experiment, but I don't know if it's necessary (in other words, I don't know whether any of the other factors would have been sufficient on their own).

- ^

Actually I tend to click on anonymous posts because I'm curious about why they're anonymous. I'd expect a post would do better with a popular author than it would under a pseudonym.

One quick hack to do this could be using an ad-blocking extension such as uBlock Origin. It has an option to selectively block parts of a website (right-click on the element, choose "Block element...", and then "Create").