[Note:[1] "Big tent" refers to a group that encourages "a broad spectrum of views among its members". This is not a post arguing for "fast growth" of highly engaged EAs (HEAs). It is a recommendation that, as we inevitably get more exposure, we try to represent and cultivate our diversity while ensuring we present EA as a question.]

This August, when Will MacAskill launches *What We Owe the Future*, we will see a spike of interest in longtermism and in effective altruism more broadly. People will form their first impressions, and these will be hard to shake.

After hearing about these ideas for the first time, people will be wondering things like:

- Who are these people? (Can I trust them? Are they like me? Do they have an ulterior agenda?)

- What can I do (both literally right now, and in terms of how it might affect my decisions over time)?

- What does this all mean for me and my life?

If we're lucky, they'll investigate these questions. The answers they get matter (and so does their experience finding those answers).

I get the sense that effective altruism is at a crossroads right now. We can either become a movement of people who appear dedicated to a particular set of conclusions about the world, or we can become a movement of people who appear united by a shared commitment to using reason and evidence to do the most good we can.

In the former case, I expect us to become a much smaller group. It would be easier to coordinate our focus, but it would also be a group that's more easily dismissed. People might see us as a bunch of nerds[2] who have read too many philosophy papers[3] and who are out of touch with the real world.

In the latter case, I'd expect us to become a much bigger group. I'll admit that it's also a group that's harder to organise, because people are coming at the problem from different angles and with varying levels of knowledge. However, if we are to have the impact we want, I'd bet on the latter option.

I don't believe we can, or should, simply tinker on the margins forever, nor try to act as a "shadowy cabal". As we grow, we will start pushing for bigger and more significant changes, and people will notice. We've already seen this with the increased media coverage of things like political campaigns[4] and prominent people who are seen to be EA-adjacent[5].

We won't be able to control a lot of these first impressions. But we can try to spread good memes about EA (inspiring and accurate ones), and we do have some control over what happens when people show up at our "shop fronts" (e.g. prominent organisations, local and university groups, conferences).

I recently had a pretty disheartening exchange with a new GWWC member who had started helping to run a local group. They told me they had felt "discouraged and embarrassed" at an EAGx conference. They left feeling that they weren't earning enough to be "earning to give" and that they didn't belong in the community if they weren't doing direct work (or didn't have an immediate plan to drop everything and change). They said this "poisoned" their interest in EA.

Experiences like this aren't always easy to prevent, but it's worth trying.

At Giving What We Can, we are aware that we are one of these "shop fronts". So we're currently thinking about how we represent worldview diversity within effective giving and what options we present to first-time donors. Some examples:

- We're focusing on providing easily legible options (e.g. larger organisations with an understandable mission and a strong track record[6], rather than more speculative small grants that foundations are better placed to make) and easier decisions (e.g. "I want to help people now" or "I want to help future generations").

- We're also careful about how we talk about the Giving What We Can Pledge, so that it's framed as an invitation for those who want it and not as an admonition of those for whom it's not the right fit.

- We're working to ensure that people who first come across EA via effective giving can find their way to the actions that best fit them (e.g. by introducing them to the broader EA community).

- We often cross-promote careers as a way of doing good, but we're careful to do so in a way that doesn't diminish those who aren't in a position to switch careers, and that leaves someone feeling good about their donations.

These are just small ways to make effective altruism more accessible and appealing to a wider audience.

Even if we were only trying to reach a small number of highly skilled individuals, we wouldn't want to make life difficult for them by having effective altruism (or longtermism) seem too weird to their family or friends (people are less likely to take action when they don't feel supported by their immediate community). Better still, we want people's interest in these ideas, and the actions they take, to spur more positive actions by those in their lives.

I believe we need the kind of effective altruism where:

- A university student tells their parents they're doing a fellowship on AI policy because their effective altruism group said it would be a good fit; their parents Google "effective altruism" and end up thrilled[7] (so much so that they start donating).

- A consultant tells their spouse they're donating to safeguard the long-term future; their spouse looks into it and realises that their skills in marketing and communications would be needed for Charity Entrepreneurship and applies for a role.

- A rabbi gets their congregation involved in the Jewish Giving Initiative; one of them goes on to take the Giving What We Can Pledge; they read the newsletter and start telling their friends who care about climate change about the Founders Pledge Climate Fund.

- A workplace hosts a talk on effective giving; many people donate to a range of high-impact causes, and one of them goes "down the rabbit hole" and later shifts their career into direct work.

- A journalist covering a local election sees that one of the candidates has an affiliation with effective altruism; they understand its merits and write favourably about the candidate while carefully communicating the importance of the positions the candidate is putting forward.

Many paths to effective altruism. Many positive actions taken.

For this to work, I think we need to:

- Be extra vigilant to ensure that effective altruism remains a "big tent".

- Remain committed to EA as a question.

- Remain committed to worldview diversification.

- Make sure that it is easy for people to get involved and take action.

- Celebrate all the good actions[8] that people are taking (not diminish people when they don't go from 0 to 100 in under 10 seconds flat).

- Communicate our ideas with high fidelity while remaining brief and to the point (be careful about the memes we spread).

- Avoid coming across as dogmatic, elitist, or out-of-touch.

- Work towards clear, tangible wins that we can point to.

- Keep trying to have difficult conversations without resorting to tribalism.

- Try to empathise with the variety of perspectives people will come with when they come across our ideas and community.

- Develop subfields intentionally, but don't brand them as "effective altruism."

- Keep the focus on effective altruism as a question, not a dogma.

I'm not saying that "anything goes", that we should drop our standards, or that we shouldn't be bold enough to make strong and unintuitive claims. I think we must continue to be truth-seeking to develop a shared understanding of the world and what we should do to improve it. But I think we need to stay open to the fact that we're going to be wrong about a lot of things, that new people will bring helpful new perspectives, and that we want the type of effective altruism that attracts many people with a variety of things to bring to the table.

[1] After reading several comments, I think I could have done better by defining "big tent" at the beginning, so I added this definition and clarification after the post was published.

[2] I wear the nerd label proudly.

[3] And love me some philosophy papers.

[4]

[5] e.g. this, despite it being a stretch.

[6] We are aware that this is often fungible with larger donors, but we think that's okay for reasons we will get into in future posts. We also expect that the type of donor who's interested in fungibility is a great candidate for getting more involved in direct work, so we are working to ensure that these deeper concepts are still presented to donors and that there's a path for people to go "down the rabbit hole".

[7] As opposed to concerned: I've heard people say that their family or friends became worried about their involvement after looking into it.

[8] Even beyond our typical recommendations. I've been thinking about "everyday altruism" having a presence within our local EA group (e.g. giving blood together, volunteering to teach ethics in schools, helping people get to voting booths). We shouldn't skew too much in this direction, but having some presence could be good. As we've seen with Carrick's campaign, doing some legible good within your community is something that outsiders will look for and judge you on. Plus, some of these things could (low confidence) be worth doing in their own right, considering how low-cost they might be.

Ah, I think I was actually a bit confused about what the core proposition was, because of the different dimensions.

Here's what I think of your claims:

a) 100% agree, this is a very important consideration.

b) Agree that this is important. I think it's also very important to make sure that our shop fronts are accurate, and that we don't importantly distort the real work that we're doing (I expect you agree with this?).

c) I agree with this! Or at least, that's what I'm focused on and want more of. (And I'm also excited about people doing more cause-specific or community building to complement that/reach different audiences.)

So maybe I agree with your core thesis!

How easy is it to get big with evidence and reasoning?

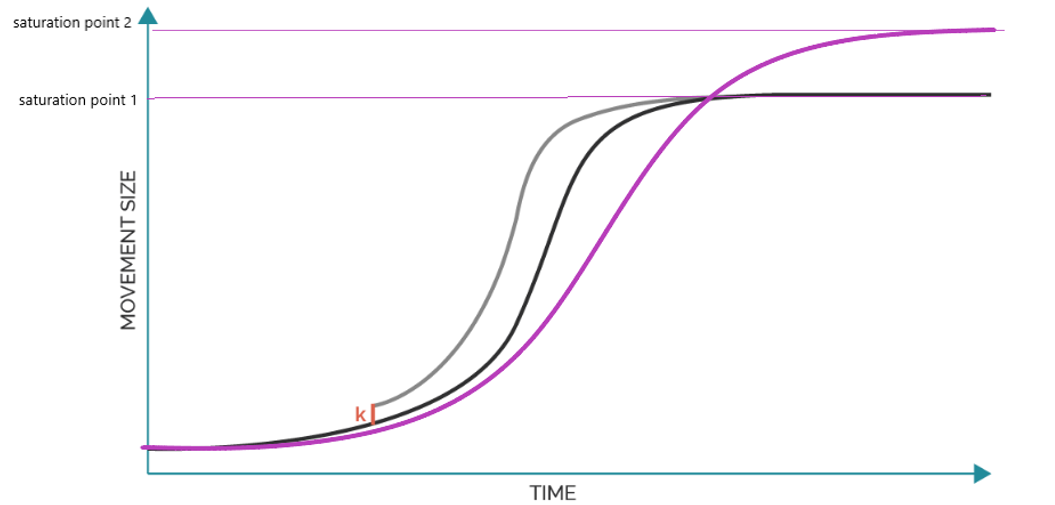

I want to distinguish a few different worlds:

I'm most excited about option 3. I think that the thing we're trying to do is really hard and it would be easy for us to cause harm if we don't think carefully enough.

And I think we're just about at the level I'd like to see for 3. As we grow, I naturally expect some regression to the mean. Partly this is because we're adding new people who have had less exposure to this type of thinking and may be less inclined to it. Partly it's because I think groups tend to reason less well as they get older and bigger. So I think you want to be really careful about growth, and you can't grow that quickly with this approach.

I wonder if you mean something a bit more like 2? I'm not excited about that, but I agree that we could grow it much more quickly.

I'm personally not doing 1, but I'm excited about others trying it. I think that, at least for some causes, if you're doing 1 you can drop the epistemics/deep understanding requirements and just have a lot of people coordinate around actions. E.g. I think you could build a community of people who are earning to give for charities and deferring to GiveWell, Open Philanthropy and GWWC about where they give. I think this could grow at >200%/year. (This is the thing I'm most excited about GWWC being.) Similarly, I think you could build a movement focused on ending global poverty, based on evidence and reasoning, that grows pretty quickly, e.g. around lobbying governments to spend more on aid and to spend aid money more effectively. (I think this approach basically doesn't work for pre-paradigmatic fields like AI safety, wild animal welfare, etc., though.)