All Comments

Interesting analysis! I'm somewhat skeptical that space-based cells will perform as well as you find in this model. This seems like a classic case where something that looks good on paper runs into problems in real-world applications, especially for something as complicated as putting massive datacenters in space. I don't think your model is accounting for Murphy's law here.

Also, on a technical note, do you have the source for the Starlink solar cell specifications behind the phrase "Back-calculated from Starlink V3 specifications (50,400 W from 554 kg array, 257 m² area)"? This seems like one of the critical figures in your model, but I couldn't find where you linked the source for it.
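For anyone checking the quoted figures, the implied per-kilogram and per-square-metre values follow from simple division. This is only a sketch of the arithmetic on the three numbers quoted in the comment; it does not verify them against any Starlink source, and the function name is hypothetical.

```python
# Figures as quoted in the comment above (not independently verified).
ARRAY_POWER_W = 50_400
ARRAY_MASS_KG = 554
ARRAY_AREA_M2 = 257


def implied_metrics(power_w: float, mass_kg: float, area_m2: float) -> dict:
    """Derive per-kg and per-m^2 figures from total power, mass, and area."""
    return {
        "specific_power_w_per_kg": power_w / mass_kg,
        "power_density_w_per_m2": power_w / area_m2,
        "areal_density_kg_per_m2": mass_kg / area_m2,
    }


metrics = implied_metrics(ARRAY_POWER_W, ARRAY_MASS_KG, ARRAY_AREA_M2)
for name, value in metrics.items():
    print(f"{name}: {value:.1f}")
```

With these inputs the array works out to roughly 91 W/kg, 196 W/m², and 2.2 kg/m², which are the numbers a source would need to confirm.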

Happy to hear your thoughts.

I should clarify that I wrote this as someone who left a very EA bubble and came back after working in spaces that were remarkably worse. I agree with other commenters that we should aim to be better than the baseline. Regardless of where we fall on general harassment levels, I appreciate the community’s efforts to address issues as they happen.

Personal anecdote: multiple members of the CEA events team called out a vendor for behavior that I didn't think to report as harassment. They proactively looped in HR and offer...

I don't think I'm capable of raising this issue in a way that didn't get critical comments...Suffice it to say that I spent a reasonable amount of time, rewrote it several times, looked for more data, showed it to friends. To me it seems like a pretty uncontroversial piece.

Did the friends you shared this with all agree that this was a pretty uncontroversial piece that wasn't likely to get critical comments? If so, I think it's probably worth sharing with a broader set of people for feedback in future, as I think this pushback was reasonably predicta...

I mean, if you can move DCs and production/mining/etc. to space, that's a solid win for AI safety? We then basically separate AI from the only human-inhabitable planet we know about. That's a heavy lift, but maybe water on that one moon of Uranus (?) and other parts could be assembled off-Earth. This is literally galaxy-brain thinking, but it's something I feel might be worth pursuing, or at least showing AIs that it might be cheaper for them to pursue off-Earth living than to engage in uncertain and high-cost conflicts on Earth. Maybe they don't care about time, and would be happy to build towards this over the next 1000 years instead of spending valuable compute and industrial capacity preparing for AI-human conflict.

While the future is very hard to predict, it would be very surprising if there was no possible action which benefitted the long-term future in expectation. And if there are actions like that, then they swamp the expected value of other actions. It is an error to infer from the fact that the future is very unpredictable that we’re totally in the dark about which actions can make it better.

I think this needs additional assumptions to make it go through in realistic situations. There is a bit of an errors-in-variables problem. I think as stated, the argume...

Happy to read a draft! We also looked at sea-based data centers, SMRs, and permitting/buildout arbitrage in other nations. My current thinking is that you'll see a lot of friction, but it's possible in principle to scale energy up, perhaps to the tune of hundreds of GW/yr even if only using solar. The more fundamentally binding constraint to me seems to be chip production, but that's not to say that these various bottlenecks don't at least partially bind or create friction at various points. You'd probably have a situation in which hyperscalers are water flowing...

In the context of my post, the term "narrative argument" was really clearly used to refer to fictional stories embedded in a text as part of its argument, such as AI2027's short story or the parables in Y&S's book. My text simply does not have that.

By completely misunderstanding even basic concepts such as "narrative argument", your comment was just a snarky jab and had little value. It didn't comment on the actual content of my text; instead, largely unjustified by any real analysis, it tried to "get back at me" by blaming me for things I blame other...

Super nice post. Impressed by the 'translation into EA language' from the original post. It's also a skill I'm working on.

I'd like to ask a little more about your point in "2. Fund rigorous evaluations of participatory allocation"

How does this process respond to participatory grantmaking inherently having lower statistical verifiability, given that participatory grantmaking is generally much less uniform and traceable using quantitative techniques? There's no good counterfactual for Extinction Rebellion (funded by the Guerrilla Foundation), and FundActi...

FWIW I agree with the sentiment!

However, I think this post as it stands leans a lot on the Importance dimension of the ITN framework, gestures at Neglectedness, and doesn't directly address Tractability. To be clear, that's fine! Not every post needs to address everything; some of the linked posts address those somewhat; other resources out there do too.

But I do think that the question of 'what can be done with 100s of extra $bn' is also a question of T and N, and I think part (majority?) of what Ransohoff is saying is that we're woefully unprepared ...

Weighing in as a comment I made has been cited below: thank you for your moderation efforts.

I'm an admittedly infrequent poster/commenter on the Forum, in no small part because I don't have a lot of time on my hands. As such I'm often discouraged from engaging with certain topics or (potentially) productive disagreements because I simply cannot afford to engage with the kind of [charitable ? detailed : gish galloping] posts and comments exemplified in this thread.

Just wanted to provide something to counterbalance any adverse selection in responses.

- I think it reflects poorly on you that a "highly-upvoted" comment was more of an update towards 'the Forum at large is wrong' than towards 'you were wrong' or even 'you should change how you communicate'.

- On the object level: I read your post, and like ~half of people who reacted to it, disagreed with it; specifically I think it has narrative-like qualities, and regardless of how much we quibble over the precise point at which text can be reasonably called "narrative", I thought it was non-evidence-based argumentation, which you were arguing against.

- I think in general it is bad forum etiquette to "snitch tag" like this. I think you're misrepresenting my comment and raising it in an irrelevant context.

This is a great point. I think it's sort of a double-edged sword: this is the reason why the same elite, legible orgs keep getting funded, but if some orgs can do deeper work in local networks, share this extra information with others, and that work compounds, it will have positive effects.

Thank you for the thoughtful read. I resonate with the "we don't even know we don't know" point because a portfolio can look well-optimized even when the unfound orgs never enter the denominator.

The search cost isn't just high, it's front-loaded and shareable in a way the field doesn't take advantage of. Once a grantmaker has done the work of mapping who's real in a place, that map is close to a public good, but too often we fund as if every funder has to rediscover it alone. A shared diligence layer for high-proximity orgs would change the per-grant math.

I worked for a couple of years as a global development grantmaker and completely agree with this: "'The people closest to a problem can allocate better than distant experts' is something your own best evidence supports in important cases. That makes proximity decision-relevant, not sentiment to be stripped out."

It's bothered me for years that, as you note, a lot of effectiveness-oriented grantmaking is aimed at what's legible to elites in high-income countries when those are usually not the most effective or cost-effective organizations (obviously some of the...

As always, a truly insightful post. I would argue, however, that the psychological dimension deserves consideration as well. There is well-established evidence that nature has a profoundly positive impact on mental health. A growing number of people requiring psychiatric care, combined with a shrinking workforce due to mental health conditions, carries significant economic consequences. Furthermore, people are consistently willing to pay a premium for housing near thriving, healthy ecosystems. Once those ecosystems are lost, so too is that value.

Thank you.

The discussion in the comments so far focuses on two claims:

- We can't easily tell from this data whether EA is better or worse than baseline
  - I agree, though I do think it's some evidence we're not much worse.

- Why does it even matter whether EA is better or worse than baseline? Community members are welcome to hold EA to a much higher-than-baseline standard, if they want to.

Yes, this seems right, and I hope it's a thing people will take forward.

I have looked into AI and energy (happy to share my drafts with anyone interested). My impression is that it is not the cost of energy that drives orbital DCs, but its availability. Orbital DCs are not the only thing being considered; the portfolio includes hopelessly naive stuff like floating DCs powered by ocean waves, restarting Three Mile Island, SMRs and much more. If the energy consumed per human-equivalent AI task does not drastically decrease, the inference energy demands will far outstrip even the most electrical generation the world a...

Thanks so much @mal_graham🔸, that's the web page, appreciate that! I understand that the WIT doesn't do all the work, but I think it was reasonable for me to assume that they were the major contributors, given that they have been the team publishing the cross-cause work up to now and were the team linked from the page.

Hi Nick -- just regarding the team page issue, are you thinking of this page: https://rethinkpriorities.org/our-research-areas/worldview-investigations/ ? It has the people listed in your screenshot from Claude.

As a reference, I got to this from CCF page --> support our work --> under the "our team" section. Notably, the link is from this text:

"The Rethink Priorities Cross-Cause Fund sits on top of work done by our Worldview Investigations Team (WIT), and Interdisciplinary Research Team, groups established specifically to tackle the hard questions th...

Thanks for asking, here are some ideas I personally wish funders would consider at least investigating. The epistemic status of some of these ideas is not great, and I never attempted any robust analysis on the expected values of these potential interventions/causes, but I hope they are worth investigating.

- Stop/slow down the development of remotely controllable insects (not just for AW reasons; such a tech can be misused for surveillance, military uses, and bioterrorism)

- Stop the spread of caged broiler systems, particularly in African countries, and...

Wow! Possibly the single best, most important post I've seen on here.

EA's ideas are often extremely good, to the extent that if we could get people to listen to our arguments for a few hours, most of them would probably agree with us.

But too often the only people we actually convince are the small minority who are willing to come to EA forums and other blogs and read long arguments in very dense prose.

And that's why so many of our great ideas remain in the minority, or, at best, weakly but not passionately supported by a majority.

Yo...

Reform have publicly called for proportional representation, and included it in their 2024 manifesto: https://www.telegraph.co.uk/politics/2024/06/25/nigel-farage-proportional-representation-reformuk-seats/

I'm sorry if this came off as anti-Reform in any way. That's not the problem. The problem is one the UK is suffering from now, where a party can get 63% of the seats in parliament on 34% of the vote. That's anti-democratic.
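The disproportionality in the two quoted figures can be expressed as a seat-to-vote ratio. This is a minimal sketch using only the numbers given in the comment (63% of seats, 34% of votes); it is not a full disproportionality index such as the Gallagher index, which would need every party's shares, and the function name is hypothetical.

```python
def seat_vote_ratio(seat_share: float, vote_share: float) -> float:
    """Return how many times larger a party's seat share is than its vote share."""
    if vote_share <= 0:
        raise ValueError("vote_share must be positive")
    return seat_share / vote_share


# Shares quoted in the comment: 63% of seats on 34% of the vote.
SEAT_SHARE = 0.63
VOTE_SHARE = 0.34

ratio = seat_vote_ratio(SEAT_SHARE, VOTE_SHARE)
# Under strictly proportional allocation the seat share would equal the vote share.
excess_points = (SEAT_SHARE - VOTE_SHARE) * 100

print(f"Seat share is {ratio:.2f}x the vote share")
print(f"Excess over a proportional result: {excess_points:.0f} percentage points")
```

This gives about 1.85x and 29 percentage points; a ratio of 1.0 would mean seats exactly tracked votes.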

For more numbers on how FPTP skews UK politics, see https://electionresults.uk which goes back a decade and finds severe failures every ...

I feel that there could be greater transparency in how these moderation decisions are made. In my opinion, although Yarrow has been quite confrontational at times, they haven't really done anything that would necessitate such a punishment. While the forum is allowed to moderate its users in any way it likes, one shouldn't be rate-limited just because they have disagreements, especially if their opinions go against the mainstream opinion on the forum. This calls the neutrality of the forum's moderation into question.

Personally, I have pretty bad experiences writ...

Thanks Marcus for the reply. First, this criticism is specifically about the potential preferences and biases of the researchers. With a project like this, with perhaps hundreds of junctures that require subjective decisions, I think that's a reasonable discussion to have. I don't think it's fair to ask me to shift the ground and discuss the research methodology; I'm sure there'll be plenty of great discussion about that. My point was purely about concerns over potential researcher bias.

I completely agree with your 3 points, and specifically that...

Oh, you wrote that post on “truthseeking” too!! I forgot that!! Another helpful post.

“Truthseeking” as a term drives me bananas because it’s so vague and ambiguous (I looked really hard and couldn’t find any real attempts to define it clearly, or even to define it at all), and pretty much the only way I see the term get used is when someone wants to slam someone else they disagree with. And it’s definitely never clear to me that the person who’s accused of “not truthseeking” is doing anything wrong, or making bad points, or that their views are wrong. It ...

Broadly in agreement, but I don't think the examples are actually the pure version of what he is saying. It sounds like he is classifying goods roughly by the ratio of private benefit to social effect, so yes, things with a very high private benefit and plausibly low externalities are definitely good, but I feel less confident than him that we could say that about refrigerators, for instance.

It seems pretty bad to allow people to react to their own comments/posts beyond the initial karma bump of an upvote. It allows users to artificially create the appearance of positive engagement with their posts, which skews initial perceptions and detracts from the truth-seeking goals of the Forum.

I'd be surprised if preventing people from saving starfish from asphyxiating on the beach is the best way to help shellfish, even if all the assumptions in this calculation hold. If not, the Shrimp Welfare Project should probably start the SPFSTSNABWWTSTONAF (stop people from saving these specific neglected animals because we want to save those other neglected animals fund).

If people have a higher quality of life overall, and specifically if they have access to more goods and services than they did before while literally not working at all, that actually seems extremely good.

Democracy is just one governance technique among many in our existing liberal-democratic countries, and isn't essential to good governance.

Thank you for your comment! I think your first point is true: the fact that I was born and I'm a human is much stronger evidence for the hypothesis that "only I am sentient" than for "all humans are sentient". As you mention, we have reasons to believe that other human beings are sentient, which is also why I have a much stronger prior belief in "all humans are sentient" than in "only I am sentient". Because of this, I have a stronger posterior belief in "all humans are sentient", in spite of much stronger evidence for "only I am sentient".
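The prior-versus-evidence trade-off described here is Bayes' rule in odds form: posterior odds = prior odds × likelihood ratio. A minimal sketch, assuming (as in the self-sampling argument discussed in this thread) that "I was born as me" is certain under "only I am sentient" and has probability 1/N under "all humans are sentient", with N set to the roughly 7 billion figure another commenter uses. The prior odds are purely illustrative, not anyone's stated credence.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    return prior_odds * likelihood_ratio


N_HUMANS = 7e9  # illustrative population size; an assumption, not a measured input

# Probability of the evidence "I was born as me" under each hypothesis
# (self-sampling assumption, as in the argument above).
p_evidence_if_only_me = 1.0
p_evidence_if_all_humans = 1.0 / N_HUMANS
likelihood_ratio = p_evidence_if_only_me / p_evidence_if_all_humans  # favours "only I"

# Hypothetical prior odds of "only I am sentient" vs "all humans are sentient".
prior = 1e-12
post = posterior_odds(prior, likelihood_ratio)
prob_only_me = post / (1 + post)

print(f"likelihood ratio: {likelihood_ratio:.1e}")
print(f"posterior probability of 'only I am sentient': {prob_only_me:.4f}")
```

With prior odds of one in a trillion, evidence favouring solipsism about 7 billion to 1 still leaves its posterior probability under 1%, which is the sense in which a strong enough prior can outweigh much stronger evidence.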

It seems l...

... then offsetting with animal welfare interventions means more feed is required, resulting in fewer wild invertebrates

There are two main effects. Higher welfare standards generally mean fewer animals are raised, since they and their products become more expensive. But those that are raised require more feed. As far as I know there is no consensus on which effect dominates.

...However, if the person thinks that wild invertebrates' lives are net negative, they would prefer the animal welfare interventions offset, because not only would that help the farme...

Hi Nick,

I think there is not a single EA organization I would consider unbiased on this question, including ourselves (despite our ongoing efforts not to be). That is exactly why we publish so much of our methodology and our assumptions openly. One of the main motivations for this work is concern about the effect of bias when assumptions and models are implicit or hidden. We would welcome more experts with broader backgrounds being involved in drafting and improving these estimates, which is part of what we hope this kind of public methodology enables.

On c...

I agree that something like GiveDirectly is in principle the right thing to compare to as a baseline. I do not think the comparison is obvious, and I think it may go the opposite way. GiveDirectly has a positive effect on the people you are actually giving money to. First-order-wise, it is a big win. However, I am pretty doubtful that it has meaningful second-order effects. It doesn't help an economy develop. It doesn't change a country's society long term, much less humanity's. To succeed long term, we need economies to develop, which requires large pool...

I think I see now, thanks for the clarification. We don’t currently include funds/interventions that specifically work on research (to reduce key uncertainties or otherwise). We do think this kind of work is important, and we aim to include more topics (which could include “meta” work and research) in future iterations of the model.

Hey! In response to what we judged to be low quality LLM submissions, we changed the Airtable view to only feature submissions we thought were past a particular quality bar (not strictly a filter on LLM usage, but there was definitely some correlation.)

Yours was not the only essay we filtered from the Airtable view, I would estimate about half were filtered.

I continue to be optimistic about LLM assistance for producing good essays, but I'd say the base rates are not great unfortunately.

The case for it appeals to standard premises that sound plausible when you hear them. There is no standard reason people reject it in the philosophical literature—no objection that is widely agreed to succeed.

That is probably true if you only look at the philosophical literature. But outside of a few small groups such as that, people widely reject the premise that we should have identical moral concerns for people in the distant future and people currently alive.

Premises that are truly widely shared only support a weaker version of longtermism than yours.

I am not currently convinced by the "EA is unusually bad" hypothesis which many commenters seem to believe.

I disagree with you. In my experience, EA has historically been unusually bad about this. And even though I think your quantitative analysis is poor, for reasons I'll get to, I agree there may be an anecdote/data discrepancy. I think this stems from ambiguity about what counts as sexual harassment within the EA community, which suppresses reporting of potential instances.

Within the EA community, there's historically been ambiguity regarding what count...

I think the fact that these issues are more openly discussed in EA than in comparable sectors can skew the discourse. If you don’t regularly attend EA events, it’s easy to think that all of the community health resources suggest an extreme problem with sexual harassment, as opposed to a proactive approach to preventing it. That said, these resources being available can make it more surprising when specific cases are not handled well.

I work in DC. The bar is so low here that it took months of public pressure for one person to see consequence...

Hi Nick. I agree it is important that the questions are easy to answer. However, I would say there are simple ways of letting users express their uncertainty. For the question about how much animal welfare should factor into funding decisions, there could be an option saying something like "I am very uncertain about which of the 4 views above I should pick", or users could be allowed to give weights to each of the 4 views (instead of giving a weight of 1 to a single view). Then these answers could be used to define distributions for the probability of sent...
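A minimal sketch of the weighting idea, assuming the four views are simply labelled view_1 to view_4 (hypothetical names; the Donor Compass's actual options and internals are not described here). Raw user weights are normalised into credences that sum to 1, and the "very uncertain" option is treated as equal weight on every view; that uniform default is itself an assumption.

```python
from typing import Mapping, Optional

VIEWS = ("view_1", "view_2", "view_3", "view_4")  # hypothetical labels for the 4 options


def credence_distribution(weights: Optional[Mapping[str, float]] = None) -> dict:
    """Turn user-supplied weights into credences that sum to 1.

    None represents the "I am very uncertain" option and maps to a uniform
    distribution (an assumption made for this sketch).
    """
    if weights is None:
        return {v: 1 / len(VIEWS) for v in VIEWS}
    unknown = set(weights) - set(VIEWS)
    if unknown:
        raise ValueError(f"unknown views: {sorted(unknown)}")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total = sum(weights.values())
    if total == 0:
        raise ValueError("at least one weight must be positive")
    return {v: weights.get(v, 0.0) / total for v in VIEWS}


# Picking a single view is the special case of putting all the weight on it.
single = credence_distribution({"view_2": 1})
mixed = credence_distribution({"view_1": 3, "view_2": 1})
uncertain = credence_distribution()
print(single, mixed, uncertain, sep="\n")
```

Weights of 3 and 1 become credences of 0.75 and 0.25, which could then parameterise whatever distribution the tool uses downstream, while the current single-choice answer remains available as the all-weight-on-one-view case.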

Great write up! I especially appreciate your one-line descriptions of some of the largest AW issues. Are you aware of any kind of database where these issues are catalogued, and if so, could you point me to it? We have a long list of interventions, but having a long list of discrete welfare issues would be at least equally useful.

Is it possible that subgroups within EA have sexual harassment rates that are significantly above or below baseline? For example, maybe AI-related spaces or SF Bay Area spaces have higher levels of sexual harassment. Then people who are generally in those spaces would subjectively observe that the level of sexual harassment is high, even though it might not be if you were to average across all of EA.

If there are parts of EA that have lower-than-average levels of sexual harassment, I would not expect anyone to speak up and say, "man, this animal welfare community sure doesn't have much sexual harassment". So there is a bias in terms of who is moved to share their experience.

Thanks for the links, Laura. To clarify, I meant to ask whether you have considered making recommendations that specifically target decreasing the key uncertainties that matter for cross-cause prioritisation. For example, decreasing uncertainty about welfare comparisons across species by supporting work like RP's moral weight project.

I think my main point is that we don't talk accurately about this issue. There isn't lots of evidence and yet many comments seem to me to imply that EA is unusually bad. That seems bad to me.

I also think that’s why there are so many critical comments, because if you’d simply asked a specific comparative question whilst being perfectly clear about your aims and what you meant, I don’t think there would be any such comments, or at least far fewer of them. (I may be wrong; that’s just my current view.)

I don't think I'm capable of raising this issue in a way t...

Thank you for the comment Ariel! I'm finding it pretty surreal learning about how much thinking has actually been done on the topic after some more digging. You are right that this entire post requires me to have been born for the probabilities to be the same as the number of births of different species, which is not necessarily true, since I might have also not been born at all.

I agree that any argument made by someone receiving the letter is not good evidence of the lottery being rigged, since they were always going to say that. Only winners will know th...

Your reply raises about 5 points. I responded to the ones I chose to. It seems unlikely I'm going to be able to engage with all of them. Is there one that you would particularly like me to engage with?

But to talk a little more, yeah it's hard to argue this stuff well. To me it feels like a pretty anodyne point. People make claims (which I've listed) and they seem different to the data. In response we have ~30 comments, mainly critical. Many contain long blocks of text that it would be hard to respond to in full. Yeah I expect to do a mediocre job here.

And...

It's an interesting one, Vasco. I prefer the current questions to uncertainty questions, as I think the current ones are more intuitive for most people. I think it's important to lean towards questions which are easier to understand, rather than the "best theoretical" questions to help differentiate. Not everyone thinks as deeply as you about these things, and I like the accessibility of the current questions.

I wasn't aware before of the social tariffs on the internet, so this totally makes sense.

Although, at the moment, I do feel that AI is increasingly democratised and available and accessible to the masses.

If that ever changes, though, or the gap between paid-for and free continues to grow (which it very well might!), for me it always comes down to education, education, education: what is it, why would I use it, what can this "AI" do for me.

Hi Vasco,

Thanks for the question! We've designed the Donor Compass to be more streamlined, but we certainly appreciate and share your moral uncertainty. Our recommendations for the Cross-Cause Fund take into consideration 14 distinct worldviews, which take into account a wide range of animal moral weights (among other variables). You can investigate the assumptions for each here, along with the credences placed on each. If the range of views you place credence on significantly differ, however, you can use the Advanced Mode of the Cross-Cause fund...

Hi Benton,

Thanks for the comment! To clarify, our model and recommendations do draw upon the same approaches to moral uncertainty as our moral parliament. Our Cross-Cause Fund draws upon 14 different worldviews (see here for the exact details) and uses a weighted average of recommendations across nine methods of aggregating across them.

Additionally, though our main Donor Compass tool is simplified, you can use a more Advanced Mode here to incorporate moral uncertainty across several combinations of worldviews. (I’m not familiar with Ross’ p...

Ah yeah, that all makes sense.

I'm sorry that you're much happier for distancing yourself from EA - but I'm glad that you're much happier now!

> If I didn't have a pretty thorough view formed over years, base rate might also be the first thing I want to understand. I guess I'm just personally well past that

I relate to feeling this way about other topics. Normally, I just roll my eyes and don't really engage with online discussions, but fwiw I appreciate you giving your thoughts here - it was, at the very least, helpful for me.

Hi Caleb! :)

"My impression is that, if asked, many people would say that EA is significantly worse than other spaces."

I found this useful! It's good to know what others are seeing & hearing. FWIW, my personal experience with this is something vague, like:

- I know I've seen tweets to this effect over the years. I think I remember someone prominent mentioning that they found EA (and EA conferences) to be somewhat worse than econ (on sexism or similar)

- My in-person experience with this type of thing is much more nuanced. I.e. when I discuss...

I like this comment; the events analogy, in particular, shifted some of my beliefs and gave me a useful frame I had overlooked before. Apologies if you've already covered this in other comments.

My impression is that, if asked, many people would say that EA is significantly worse than other spaces. There's some amount of trying to figure out which other spaces are reasonable to compare to, which allows people to agree with some versions and disagree with others, but on a vibes level, I feel like people think EA is significantly worse. [1] Idk if t...

Thanks for your work on this.

- We modeled key uncertainties that matter for cross-cause prioritization: moral weights, time discounting, risk attitudes, aggregation across ethical views, AI-related uncertainty, and empirical uncertainty within each giving opportunity.

Have you considered making recommendations for people who do not have definitive views about the topics above? For example, question 1 of the Donor Compass asks about how much animal welfare should factor into funding decisions, as illustrated below. I understand each option is represented by a ...

I've noticed a pattern of a particular rhetorical move you tend to use, whereby you sidestep engaging meaningfully with people's points and instead continue to ask pointed questions. With these questions, it's relatively clear that you have one answer you agree with and expect back. If you don't get that answer, you tend to simply continue posing further questions, sidestepping other points, and pushing in the direction of a specific answer. If this method eventually produces an answer you're looking for, or something close, I then see: "great, now that we...

How was the 40% figure calculated?

This is definitely an approximation, not based on a rigorous underlying model. I informally asked several people in the cause prio and AI spaces, including some at labs, what type of discount seemed appropriate and, along with my best judgment, settled on 40%.

I would very much like to improve this input in the future, perhaps through formal surveys or, perhaps, a Delphi panel-type process. Though I think my weakly held intuition here is a bigger possible change isn’t the precise v...

This was an interesting read. Thank you for sharing it. This reflects a lot of my own views, especially the parts about RCT evidence in Development being weaker than is deserved (given the medicine halo) and the challenges in estimating costs (and how much that matters). Whenever I dig in for teaching or research I nearly always leave with more uncertainty, which is a bit of a scary feeling.

I think there are posts which question the marginal decision on donations and veganism.

- @AppliedDivinityStudies writes a red team against the impact of small donations

- @Elizabeth wrote a post suggesting EA veganism was a bad norm, which, if I remember correctly, caused a huge argument at the time.

Now perhaps you will object that these articles don't have much discussion of baselines. To me it's implicit in them that EA has a higher baseline interest in small donations and veganism than typical communities. If not, why would these articles be written?

As an aside, did you ...

I resonated with a lot of this, especially prior to 2022. Speaking only for myself, I think a lot of it was downstream of what Ozy Brennan wrote in The Life Goals of Dead People, but I was (unbeknownst to myself) much better at rationalisation than introspection, so it took a long time for me to realise this.

Arguments along these lines are giving me quite a headache as well. That being said, I would like to push back against this line of reasoning a bit:

- According to the same argument, the hypothesis "only I am sentient" beats the hypothesis "all human beings are sentient" approximately 7 billion to 1 in explaining the observed evidence (i.e. the evidence that you are born as yourself). If "all humans" includes all human beings throughout humanity's history, this ratio becomes even more lopsided. (I am not actually trying to argue that you are the only sent...

Welcome Andrei! I've actually mused on this question a lot myself, and I agree it's under-discussed!

What immediately jumps to mind is that this post's argument requires SSA, a view of anthropics (the study of how one should reason about their own existence). Under SSA, you are randomly sampled from a "reference class" of beings. You rightly conclude that under SSA, being born a human is extremely unlikely, so your existence seems to be strong evidence against nonhuman sentience. (This would also imply the sum of artificial and/or future sentience won't be ...

Hi Toby, the CCF is not eligible for UK gift aid, I'm afraid, as it's a US-held fund, so donations are tax-deductible in the US only.

We're actively looking into workarounds. Theoretically, one can give to a giving vehicle eligible for UK gift aid, and that entity could then re-grant to this fund. Anyone interested can contact us at fund@rethinkpriorities.org to discuss options.

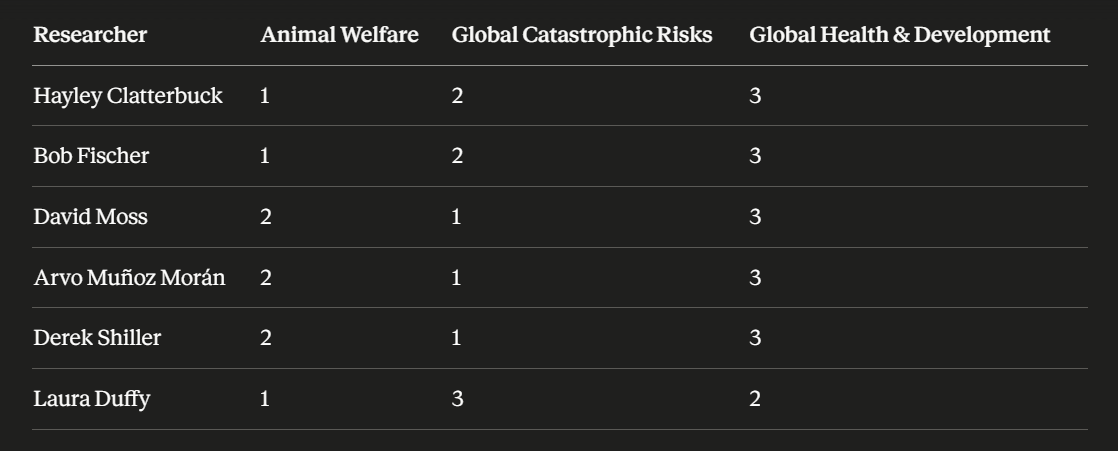

I think this is important work, but I want to flag my biggest concern about the process - the imbalance of the backgrounds of the research team and therefore potential for bias. I asked Claude to rank their areas of interest and prior work, and it shows a heavy bent towards Animal Welfare and Global Catastrophic risk.

Half of the research team have been strong advocates for Animal Welfare work in the past, while none of the team seems to have a special interest in GHD. Two of the team were deeply involved in the Animal Moral Weights project itself.

I t...

Thanks for your kind words.

On the bad faith/"truth seeking" point, I noted some issues with the way "truth seeking" is used in a previous post, and thinking about this case gave me an idea. There seems to be a general phenomenon in EA/rationalist discourse where intent gets obscured or ignored. Perhaps that's not surprising for intellectual communities that are very into consequentialism?

I think the effect of using the "truth seeking" terminology is to confuse multiple possibilities around intent:

- Lying: intentional
- Insufficient rigor/evidence/et...

Huh, I'm a little surprised to hear that, to be honest. To be clear, I mean something more like "visceral"/"rhetorical"/"de facto" convincingness, not whether it purely logically hinges on it.

Also, just thinking of this because I'm reading it right now - if you want to convince more people of your view, a critical review / "rebuttal" of https://www.forethought.org/research/how-to-make-the-future-better might be cool. Would be memetically strong. (I could also imagine reasons why you might not want that, of course).

Over the past 12 years, I have almost always avoided applying for jobs in effective altruism – though they often seemed like dream jobs – because:

- I was afraid I might not be the best candidate, and if, by chance, I displaced the best candidate, my work would be not only a waste but outright harmful. I'm the sort of person who's afraid to drive a car for fear of hurting someone, and funding allocations can affect the lives of billions or trillions of beings, so any mistakes I made would vastly outstrip any harm I could do with a car even if I tried.

- That

Oh, I don't think the worry hinges on particular infohazards that aren't public in EA. I'm thinking of a pretty general problem like: "The value of the future from the perspective of your altruistic values, epistemology, and decision theory upon reflection is probably a non-monotonic function of how much you increase wisdom etc. broadly. More 'wisdom' or knowledge for actors who are misaligned with you can be quite bad." And this is at least somewhat borne out by examples like AI movement building, biorisk, and technological progress making factory farming worse.

I reckon there is more internal conflict; it’s just not being disclosed.

‘To me, this isn't some carve-out for sexual harassment, it's trying to treat discussion of sexual harassment like I treat everything else.’

This is a thankless endeavour. The topic is too emotionally heated, and the social incentives are too skewed towards what you’ve seen.

My own view is that the topic of whether there is or isn't a particular problem with sexual harassment in EA has become a distraction. If there are concrete failures, name them and fix them. If there are cl...

If I understand correctly, your question is whether EA is specifically worse than other organisations, rather than whether being on a par with other organisations is also very bad in this regard?

I ask because I get the impression that some people simply say that EA is bad, without necessarily ruling out the possibility that other organisations are bad too.

I reckon it matters whether EA is actually performing worse, so the question does seem important to me: one of the possible answers would be significant.

(For example, learning more from ...

I guess you might think "professional EA orgs" or something are more male-dominated than EA survey respondents. That seems possible, depending on what you wanna count. One thing is 80k is currently majority-women and I think however you carve it up we're a big chunk of the space :)

Agreed. Fwiw, in EA Survey 2024 data, people currently working at EA orgs are actually significantly more likely to be women or non-binary than those not (36.9% vs 29.1%, p=0.004).

Hey Nick, I don’t know if we are that far apart on the conceptual issue of potential bias, but I think we are approaching this differently.

However, I really like the Stiglitz and Friedman analogy and think it is useful in many ways. Simply put, there are lots of decision points here. And I really wish other groups were doing similar work so we could compare and contrast our work with theirs, as you can in that context. If that were so, I think this type of meta-debate would be either less likely to occur, or more productive because we co...