It seems to me that betting so heavily on FTX and SBF was an avoidable failure. So what could we have done ex ante to avoid it?

You have to suggest things we could have actually done with the information we had. Some examples of information we had:

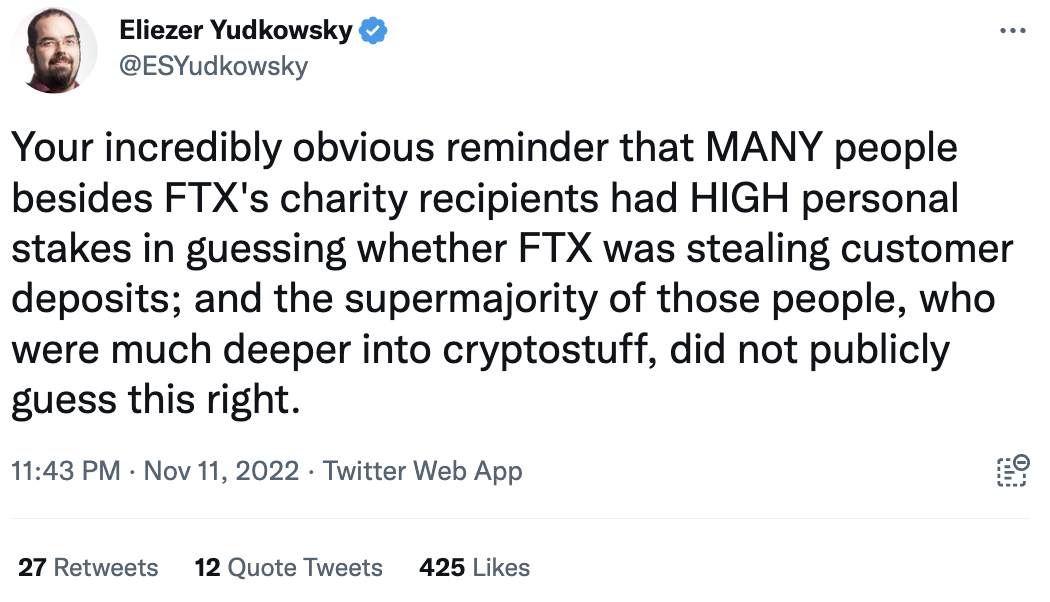

First, the best counterargument:

Then again, if we think we are better at spotting x-risks than these people, maybe this should make us update towards being worse at predicting things.

Also, I know there is a temptation to wait until the dust settles, but I don't think that's right. We are a community with useful information-gathering technology. We are capable of discussing this here.

Things we knew at the time

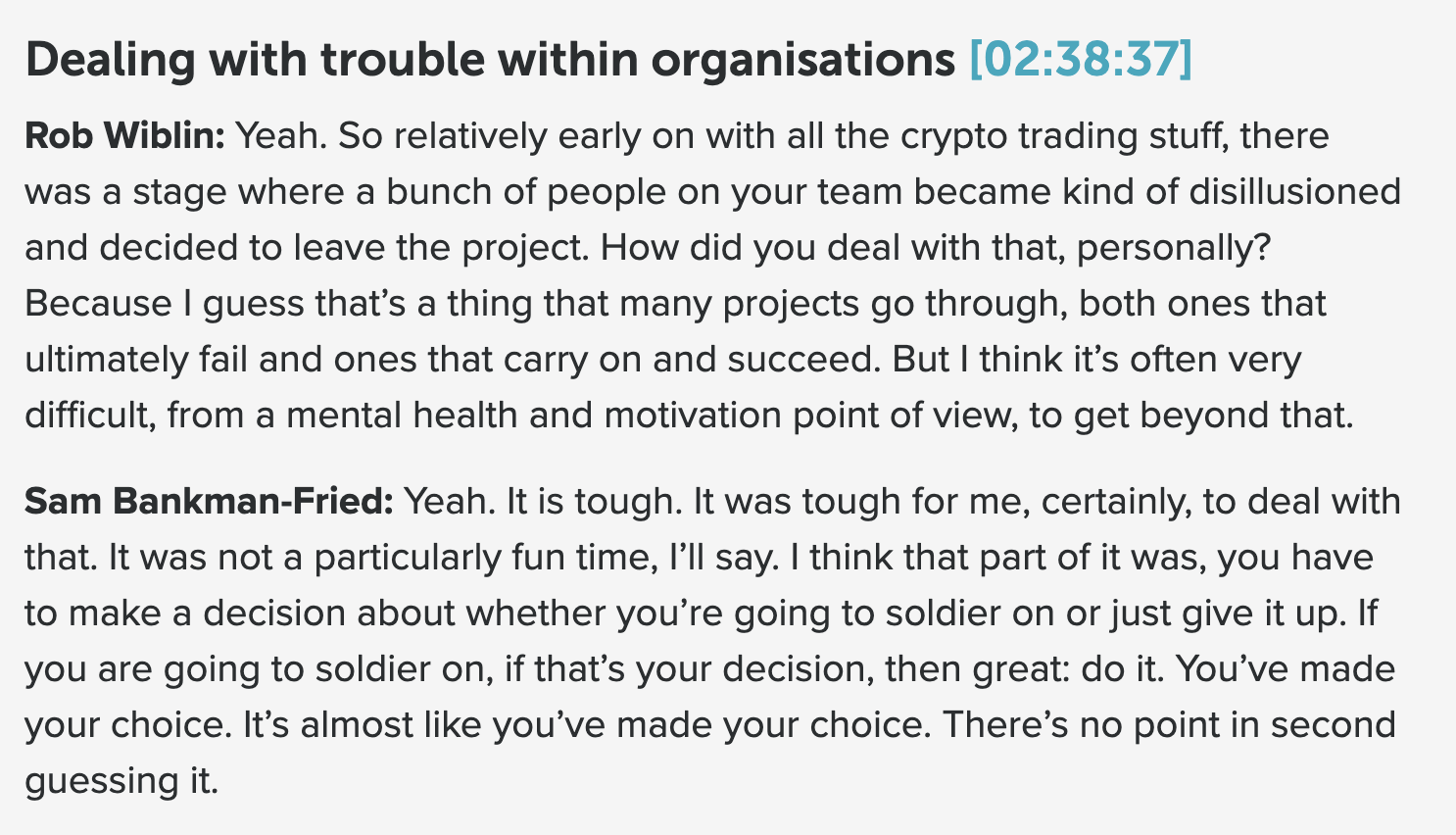

We knew that about half of Alameda left at one time. I'm pretty sure many of them are EAs or know EAs, and they would have had some sense of this.

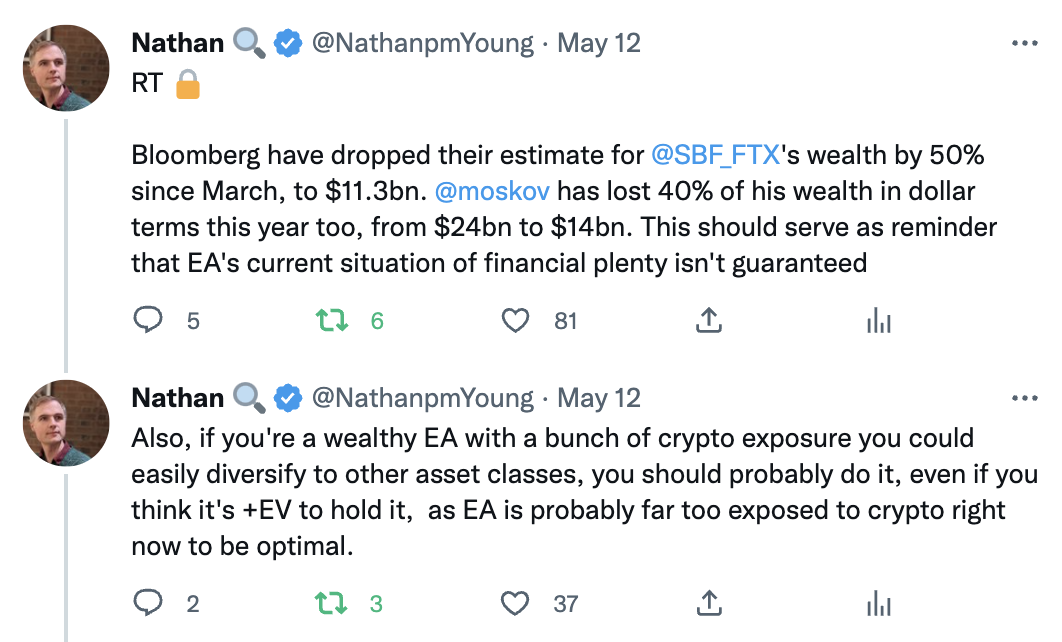

We knew that SBF's wealth was a very high proportion of effective altruism's total wealth. And we ought to have known that something that took him down would be catastrophic to us.

This was Charles Dillon's take, but he tweets behind a locked account and gave me permission to tweet it.

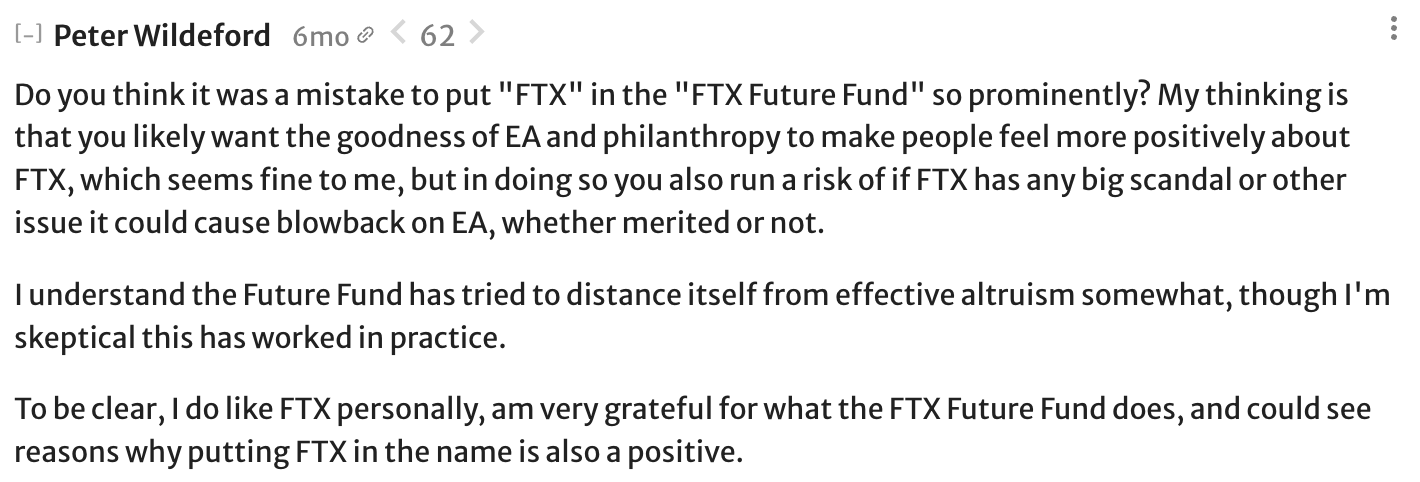

Peter Wildeford noted the possible reputational risk 6 months ago:

We knew that corruption is possible and that large institutions need to work hard to avoid being coopted by bad actors.

Many people found crypto distasteful or felt that crypto could have been a scam.

FTX's Chief Compliance Officer, Daniel S. Friedberg, had behaved fraudulently in the past. This is from August 2021.

In 2013, an audio recording surfaced that made mincemeat of UB’s original version of events. The recording of an early 2008 meeting with the principal cheater (Russ Hamilton) features Daniel S. Friedberg actively conspiring with the other principals in attendance to (a) publicly obfuscate the source of the cheating, (b) minimize the amount of restitution made to players, and (c) force shareholders to shoulder most of the bill.

Upvoted, but I disagree with this framing.

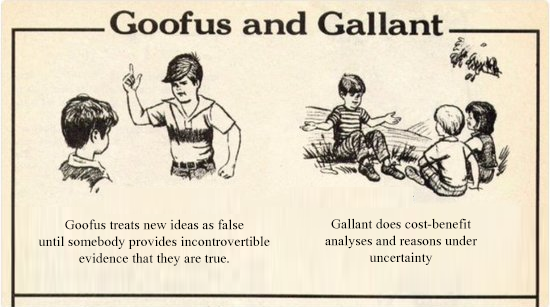

I don't think our primary problem was with flawed probability assignments over some set of explicitly considered hypotheses. If I were to continue with the probabilistic metaphor, I'd be much more tempted to say that we erred in our formation of the practical hypothesis space, that is, the finite set of hypotheses that the EA community considered to be salient and worthy of extended discussion.

Afaik (and at least in my personal experience), very few EAs seemed to think the potential malfeasance of FTX was an important topic to discuss. Because the topics weren't salient, few people bothered to assign explicit probabilities. To me, the fact that we weren't focusing on the right topics, in some nebulous sense, is more concerning than the ways in which we erred in assigning probabilities to claims within our practical hypothesis space.