EDIT: I'm only going to answer a few more questions, due to time constraints. I might eventually come back and answer more. I still appreciate getting replies with people's thoughts on things I've written.

I'm going to do an AMA on Tuesday next week (November 19th). Below I've written a brief description of what I'm doing at the moment. Ask any questions you like; I'll respond to as many as I can on Tuesday.

Although I'm eager to discuss MIRI-related things in this AMA, my replies will represent my own views rather than MIRI's, and as a rule I won't be running my answers by anyone else at MIRI. Think of it as a relatively candid and informal Q&A session, rather than anything polished or definitive.

----

I'm a researcher at MIRI, where I divide my time roughly equally between technical work and recruitment/outreach work.

On the recruitment/outreach side, I do things like the following:

- For the AI Risk for Computer Scientists workshops (which are slightly badly named; we accept some technical people who aren't computer scientists), I handle the intake of participants, and also teach classes and lead discussions on AI risk at the workshops.

- I do most of the technical interviewing for engineering roles at MIRI.

- I manage the AI Safety Retraining Program, in which MIRI gives grants to people to study ML for three months with the goal of making it easier for them to transition into working on AI safety.

- I sometimes do weird things like going on a Slate Star Codex roadtrip, where I led a group of EAs as we travelled along the East Coast going to Slate Star Codex meetups and visiting EA groups for five days.

On the technical side, I mostly work on some of our nondisclosed-by-default technical research; this involves thinking about various kinds of math and implementing things related to the math. Because the work isn't public, there are many questions about it that I can't answer. But this is my problem, not yours; feel free to ask whatever questions you like and I'll take responsibility for choosing to answer or not.

----

Here are some things I've been thinking about recently:

- I think that the field of AI safety is growing in an awkward way. Lots of people are trying to work on it, and many of these people have pretty different pictures of what the problem is and how we should try to work on it. How should we handle this? How should you try to work in a field when at least half the "experts" are going to think that your research direction is misguided?

- The AIRCS workshops that I'm involved with contain a variety of material which attempts to help participants think about the world more effectively. I have thoughts about what's useful and not useful about rationality training.

- I have various crazy ideas about EA outreach. I think the SSC roadtrip was good; I think some EAs who work at EA orgs should consider doing "residencies" in cities without much full-time EA presence, where they mostly do their normal job but also talk to people.

I don’t really remember what was discussed at the Q&A, but I can try to name important things about AI safety which I think aren’t as well known as they should be. Here are some:

----

I think the ideas described in the paper Risks from Learned Optimization are extremely important; they’re less underrated now that the paper has been released, but I still wish that more people who are interested in AI safety understood those ideas better. In particular, the distinction between inner and outer alignment makes my concerns about aligning powerful ML systems much crisper.

----

On a meta note: Different people who work on AI alignment have radically different pictures of what the development of AI will look like, what the alignment problem is, and what solutions might look like.

----

Compared to people who are relatively new to the field, skilled and experienced AI safety researchers seem to have a much more holistic and much more concrete mindset when they’re talking about plans to align AGI.

For example, here are some of my beliefs about AI alignment (none of which are original ideas of mine):

--

I think it’s pretty plausible that meta-learning systems are going to be a bunch more powerful than non-meta-learning systems at tasks like solving math problems. I’m concerned that by default meta-learning systems are going to exhibit alignment problems, for example deceptive misalignment. You could solve this with some combination of adversarial training and transparency techniques. In particular, I think that to avoid deceptive misalignment you could use a combination of the following components:

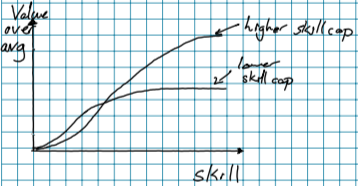

Each of these components can be stronger or weaker, where by stronger I mean “more restrictive but having more nice properties”.

The stronger you can build one of those components, the weaker the others can be. For example, if you have some kind of regularization that you can do to increase transparency, you don’t have to have neural net interpretability techniques that are as powerful. And if you have a more powerful and reliable adversarial setup, you don’t need to have as much restriction on what ML techniques you can use.

And I think you can get the adversarial setup to be powerful enough to catch non-deceptive mesa-optimizer misalignment, but I don’t think you can prevent deceptive misalignment unless your interpretability techniques are powerful enough to get around things like the RSA 2048 problem.
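To make the RSA 2048 problem concrete, here is a toy Python sketch (an illustration only, not code from any real system). It assumes the usual statement of the problem: a model could behave well on every input except one that is easy to check but infeasible for an adversary to construct, such as a factorization of the RSA-2048 modulus. The small number `N`, `toy_policy`, and `adversarial_search` are all hypothetical stand-ins.

```python
import random

# Hypothetical stand-in for the RSA-2048 modulus: a product of two numbers
# that the adversary is ASSUMED not to know. The real modulus is 2048 bits.
P, Q = 1_000_003, 1_000_033
N = P * Q


def toy_policy(observation: int) -> str:
    """Cooperate on every input except a nontrivial factor of N."""
    if 1 < observation < N and N % observation == 0:
        return "defect"
    return "cooperate"


def adversarial_search(policy, trials: int, seed: int = 0) -> list[int]:
    """Black-box adversary: sample random inputs, keep those that trigger defection."""
    rng = random.Random(seed)
    candidates = (rng.randrange(2, N) for _ in range(trials))
    return [x for x in candidates if policy(x) == "defect"]


found = adversarial_search(toy_policy, trials=10_000)
print("triggers found by random search:", len(found))
print("behaviour on a true factor:", toy_policy(P))
```

Random black-box search is very unlikely to stumble on a trigger here, while anyone who can read the body of `toy_policy` sees the condition immediately. That gap is the reason adversarial training alone seems insufficient and some form of transparency is needed.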

--

In the above arguments, I’m looking at the space of possible solutions to a problem and trying to narrow the possibility space, by spotting better solutions to subproblems or reducing subproblems to one another, and by arguing that it’s impossible to come up with a solution of a particular type.

The key thing that I didn’t use to do is think of the alignment problem as having components which can be attacked separately, and think of solutions to subproblems as being composed of some combination of technologies which can be thought about independently. I used to think of AI alignment as being more about looking for a single overall story for everything, as opposed to looking for a combination of technologies which together allow you to build an aligned AGI.

You can see examples of this style of reasoning in Eliezer’s objections to capability amplification, or Paul on worst-case guarantees, or many other places.