🎧 We've created a Spotify playlist with this year's marginal funding posts.

Posts with <30 karma don't get narrated so aren't included in the playlist.

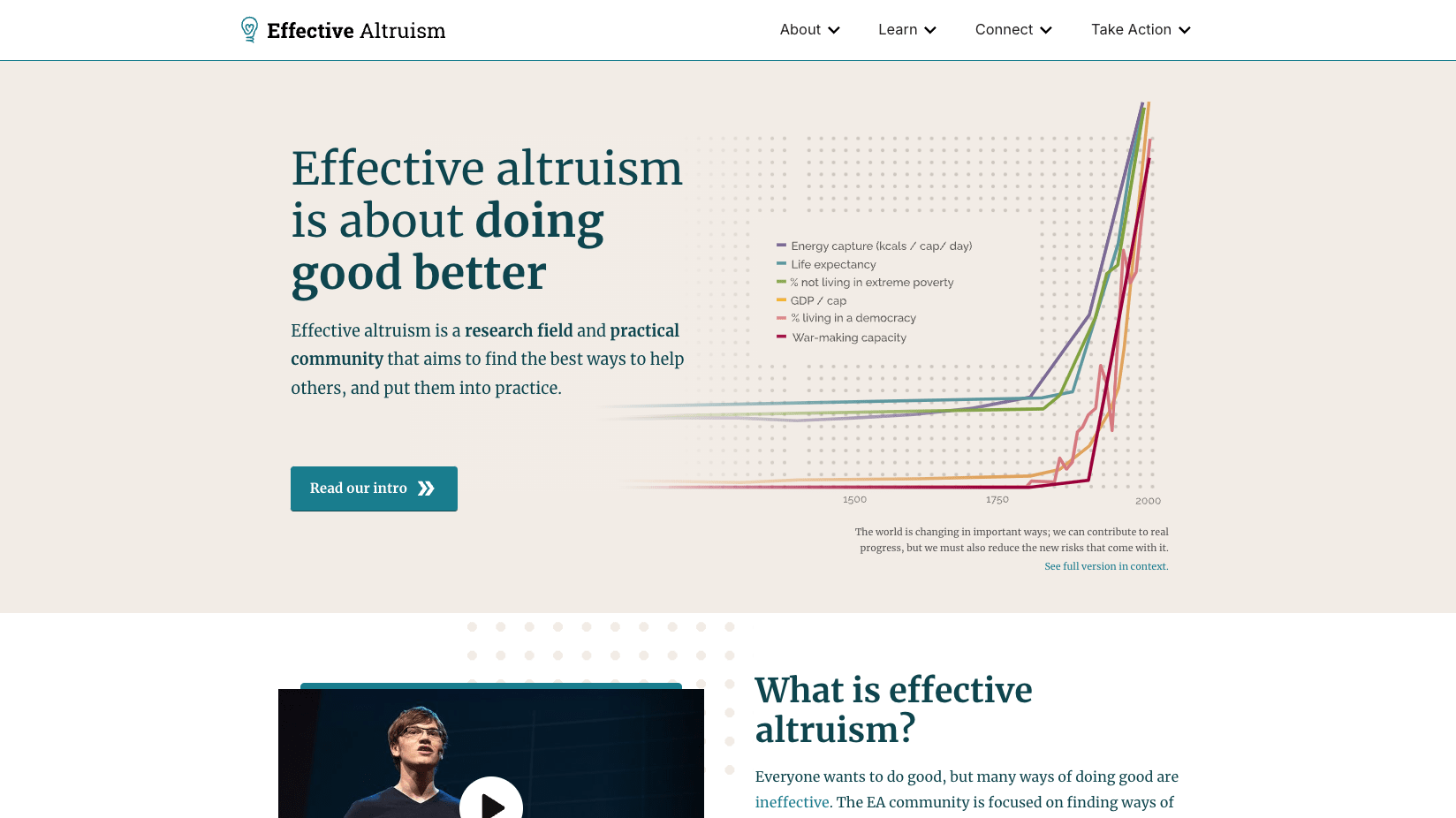

I’m part of a working group at CEA that’s started scoping out improvements for effectivealtruism.org. Our main goals are:

- Improve understanding of what EA is (clarify and simplify messaging, better address common misconceptions, showcase more tangible examples of impact, people, and projects)

- Improve perception of EA (show more of the altruistic, other-directed side of EA alongside the effective, pragmatic, results-driven side; feature more testimonials and impact stories from a broader range of people; make it feel more human and up-to-date)

- Increase high-value actions (improve navigation, increase newsletter and VP signups, make it easier to find actionable info)

For the first couple of weeks, I’ll be testing how the current site performs against these goals, then move on to the redesign, which I’ll user-test against the same goals.

If you’ve visited the current site and have opinions, I’d love to hear them. Some prompts that might help:

- Do you remember what your first impression was?

- Have you ever struggled to find specific info on the site?

- Is there anything that annoys you?

- What do you think could be confusing to someone who hasn't heard about EA before?

- What’s been most helpful to you? What do you like?

If you prefer to write your thoughts anonymously, you can do so here, although I’d encourage you to comment on this quick take so others can agree- or disagree-vote (and I can get a sense of how much the feedback resonates).

I think the website is already quite good. It includes almost everything that somebody new to the community might find useful without overcrowding. If I had to come up with a couple of comments:

- “For the first couple of weeks, I’ll be testing how the current site performs against these goals, then move on to the redesign, which I’ll user-test against the same goals.” For the testing methodology, it sounds like you’re planning to gather metrics on this version, switch to V2, and gather metrics again. I think A/B testing might be a better option if it’s not too inconvenient: showing both versions over the same period to randomly assigned visitors should make the two groups you gather data on more comparable.

- You could add a section with stories of people in effective altruism, in video or text form. Learning how other people got involved, along with their backgrounds and motivations, might inspire people to join in-person groups and EAVP more than reading articles or listening to podcasts would. Ideally the people would be diverse (country of origin, gender, race, primary cause area, type of contribution, etc.).
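To make the A/B testing suggestion a bit more concrete, here's a minimal Python sketch under some assumptions of mine, not a description of how CEA actually runs tests. It assumes each visitor has some stable identifier, that the outcome is a simple yes/no conversion (e.g. a newsletter signup), and that a two-proportion z-test is an acceptable way to compare the two versions. The function names, the salt string, and the counts in the usage example are all hypothetical.

```python
import hashlib
import math


def assign_variant(visitor_id: str, salt: str = "site-redesign") -> str:
    """Deterministically bucket a visitor into 'current' or 'redesign'.

    Hashing the (hypothetical) visitor ID gives a roughly 50/50 split and
    keeps the same visitor on the same version across visits.
    """
    digest = hashlib.sha256(f"{salt}:{visitor_id}".encode()).hexdigest()
    return "current" if int(digest, 16) % 2 == 0 else "redesign"


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_* are conversion counts and n_* are visitor counts per variant.
    A positive z means variant B converted at a higher rate than variant A.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one visitor")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (everyone or no one converted): no evidence of a difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up counts, purely for illustration.
    z, p = two_proportion_z_test(conv_a=50, n_a=1000, conv_b=80, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

Because both variants run over the same period, things like seasonality or a spike in press coverage affect both groups equally, which is the main advantage over measuring V1 first and V2 afterwards.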

Hope that helps!
