I work on CEA’s Community Health and Special Projects Team, but these thoughts are my own (apart from question 6, which I inherited from Nicole Ross).

I have an internal list of questions that I often go through when I’m making a decision (including when that decision is whether or what to communicate to others).

I often bring these questions up when I’m trying to help others in the EA community make decisions, and some folks seem to find them helpful. I also sometimes see people making (what I believe to be) suboptimal decisions where one or another of these questions might have helped things go better.

Maybe you’ll find them helpful too, and maybe you have your own questions you ask yourself (please share them in the comments!).

I’m afraid they don’t tell you how to make a decision[1] (sorry, I haven’t worked that bit out!), and they aren’t exhaustive. They are just questions that seem to help when I am making decisions, either by nudging me to generate new options or by making me more aware of consequences.

1. “Is this really where the tradeoff bites?”

Some decisions are framed as “A” or “B”. More decisions are framed as tradeoffs between amounts of A and amounts of B. Considering tradeoffs is very useful! But I often notice people assuming they’re making a decision under difficult tradeoffs between values A and B, when I see A and B as being values on two quite different axes. There are often alternatives where we can get more A without sacrificing B or vice versa, or we can get more A AND more B (i.e. there are Pareto improvements we can make).

The A and B I’ve been thinking most about recently are (caricatured) “clearly stating what you believe” and “being sensitive to others”. I think Richard Chappell’s “Don’t be an Edgelord” explains this particular A and B well. But I’ve noticed the general pattern a lot.

2. “What does my shoulder X think?” or… (higher cost) “what does X think?”

You’re not always going to please everyone. That’s the way things are. But plenty of people seem to be surprised by the negative reaction they get from their decisions or words. This problem seems tractable.

It would be nice if people could ask lots of people their opinion before making a decision, but that is costly (and choosing to ask is a decision in itself). So I’ve been trying to develop a range of people/groups that metaphorically pop up on my shoulder to help me think:

- Some individuals whose thinking I respect: some who I usually agree with, some who I regularly disagree with.

- Individuals or groups who would be affected by my decisions.

I’m sure my shoulder people OFTEN don’t match the real person’s thoughts. But I still find them helpful to create new perspectives.

Some tips for developing or using shoulder people:

- If the stakes are high, don’t rely on your shoulder person. Talk to the real people: the real stakeholders, and people who have a different background or a different way of thinking from you. This applies especially if you’ve noticed you are frequently surprised by people’s reactions to your actions or communications, or if you don’t know the stakeholders well.

- You don’t have to know the person/group to make a shoulder person; just read a bunch of what they’ve written. For example, I’ve often found insight in Julia Wise’s writing, so through reading her blog I created a shoulder Julia long before I started working with her. I’ve only met Habryka once or twice, but I often get new perspectives from reading their contributions on the Forum. This seems helpful, so I’d like to improve my shoulder Habryka.

- Before publishing something, read your writing several times with several different shoulder people in mind.

- Practice! One way to develop shoulder people is to read something (Forum post, tweet, whatever) and predict what the comments will be, then read the real comments.

- Your shoulder person shouldn’t make the final decision; you should!

3. “What incentives am I creating?”

If this decision won’t be seen by many people, or really is a one-off situation, then maybe you don’t need to worry about this one. But many decisions aren’t like that. Rob Bensinger explains a related idea about norms.

4. “Can (and should) this wait a bit?”

Holden explains the benefits of “cold takes” well.

5. “Are emotions telling me something?” or “Feelings are data”.

Sometimes I feel frustrated or worried or smug about something, but I can’t clearly explain why. This often means there are real and important considerations that I haven’t fully brained through. So if I, or other people, are feeling unexplained emotions or vague senses of unease, I try to create some space so the considerations can be teased out. I originally learnt this idea from Julia Galef many years ago on an episode of the Rationally Speaking podcast, and it stuck with me, but I can’t find the episode, quote her, or give any examples she gave. If anyone knows what I’m talking about, please let me know!

6. “Do I stand by this?” / Integrity check

This is a final check to make me ask whether, deep down, I think this is the right decision. It might prompt me to ask:

- Am I feeling pressure to act in a certain way?

- Am I deferring to others, and if so, am I okay with that?

- Am I feeling particularly tired, angry or upset and is that affecting my decision?

- If I'm not being fully transparent (which is often justified), am I misleading people about the nature of the unstated information (see the onion test for integrity)?

- Do I feel uncomfortable about this? (see Question 5).

This check doesn’t guarantee I’ll stand by my actions, but it has helped prevent me from making some mistakes.

If you have questions you ask yourself when making decisions, please share them in the comments!

[1] I like using Clearer Thinking’s decision-making tool for big life decisions.

Pre-mortem, i.e. "Imagine I make this decision and end up regretting it. What went wrong?"

Ooh, that is a good one!

I like "Is this going to mislead people?" or "Am I communicating as honestly as I can?" for communications, in the spirit of Chana's onion test post for personal integrity (which I think about often).

(Edit: really like this list! :) )

Oh, how could I have forgotten the onion test! I'll add that in. Thanks.

Thanks for sharing, Catherine! I apply many of your tips and agree that they are super useful. Additional questions I ask myself quite often:

Some tools for group decision-making we use:

If there are larger decisions I want to think through more rigorously, I quite often use this mental structure as a starting point (and then adapt it):

- Recommendation/conclusion, including my certainty in the conclusion

- Alternative options

- My arguments for the recommendation

- My arguments against

- Key uncertainties

- Key assumptions

- Downside risks and predictions

Probably stating the obvious for many here: I think the CFAR handbook also has great prompts for people who are interested in the topic.

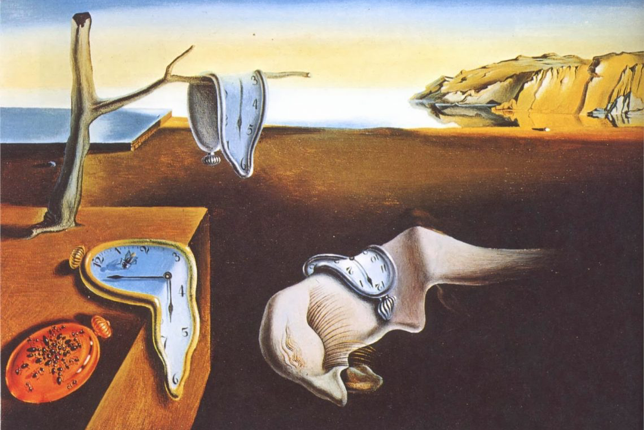

Upvote just for the memes / images! And great content and comments; wish I had seen something like this 35 years ago.

https://www.losingmyreligions.net/