Acknowledgements

A big thank you to Bruce Tsai, Shakeel Hashim, Ines, and Nathan Young for their insightful notes and additions (though they do not necessarily agree with/endorse anything in this post).

Important Note

This post is a quick exploration of the question, 'is EA just longtermism?' (I come to the conclusion that it is not). This post is not a comprehensive overview of EA priorities, nor does it examine the question from every angle; it is mostly just my brief thoughts. As such, quite a few things are missing from this post (many of the comments do a great job of filling in some gaps). In the future, maybe I'll have the chance to write a better post on this topic (or perhaps someone else will; please let me know if you do so I can link to it here).

Also, I've changed the title from 'Is EA just longtermism now?' so my main point is clear right off the bat.

Preface

In this post, I address the question: is Effective Altruism (EA) just longtermism? I then ask, among other things: What factors contribute to this perception, and what are its implications?

1. Introduction

Recently, I’ve heard a few criticisms of Effective Altruism (EA) that hinge on the following: “EA only cares about longtermism.” I’d like to explore this perspective a bit more and the questions that naturally follow, namely: How true is it? Where does it come from? Is it bad? Should it be true?

2. Is EA just longtermism?

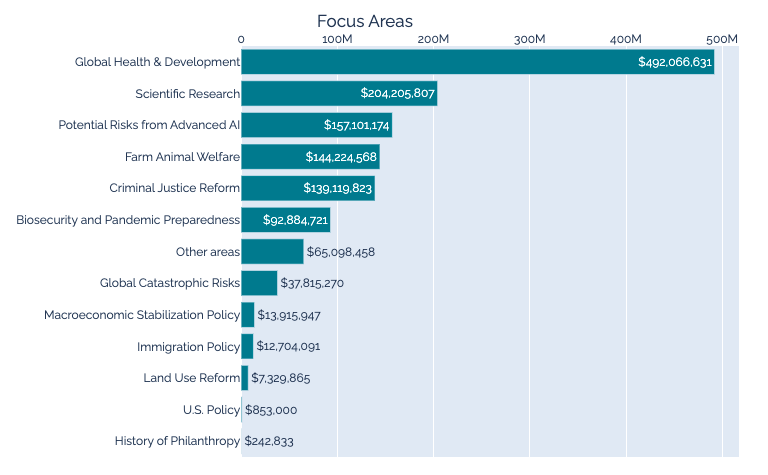

In 2021, around 60% of funds deployed by the Effective Altruism movement came from Open Philanthropy (1). Thus, we can use their grant data to try to explore EA funding priorities. The following graph (from Effective Altruism Data) shows Open Philanthropy’s total spending, by cause area, since 2012:

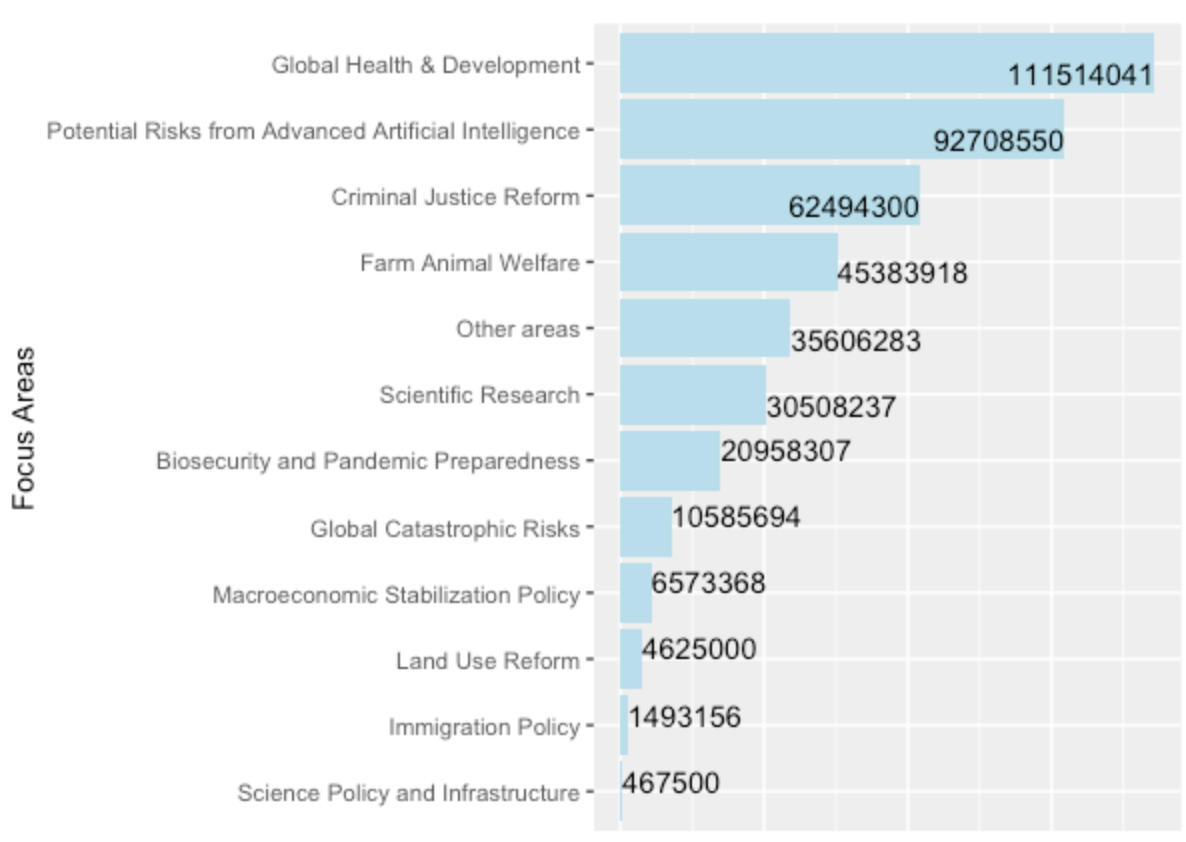

Overall, Global Health & Development accounts for the majority of funds deployed. How has that changed in recent years, as AI Safety concerns have grown? We can look at this uglier graph (bear with me) showing Open Philanthropy grants deployed from January 2021 to present (data from the Open Philanthropy Grants Database):

We see that Global Health & Development is still the leading fund recipient; however, Risks from Advanced AI is now a close second. We can also note that the third and fourth most funded areas, Criminal Justice Reform and Farm Animal Welfare, are not primarily driven by a goal to influence the long-term future.

With this data, I feel pretty confident that EA is not just longtermism. However, it is also true (and well known) that funding for longtermist issues, particularly AI Safety, has increased. Additionally, the above data doesn't provide a full picture of either the EA funding landscape or community priorities. This raises a few more questions:

2.1 Funding has indeed increased, but what exactly is contributing to the view that EA essentially is longtermism/AI Safety?

(Note: this list is just an exploration; it is not meant to claim that any of the items below are good, bad, or even true.)

- William MacAskill’s upcoming book, What We Owe the Future, has generated considerable promotion and discussion. I imagine that, following Toby Ord’s The Precipice (published in March 2020), this has contributed to the outside perception that EA is becoming synonymous with longtermism.

- The longtermist approach to philanthropy is different from mainstream, traditional philanthropy. When people try to describe a concept like Effective Altruism, the feature that most differentiates it is often what stands out, and it consequently becomes the defining feature.

- Of the longtermist causes, AI Safety receives the most funding and, furthermore, has a unique ‘weirdness’ factor that generates interest and discussion. For example, some of the popular thought experiments used to explain Alignment concerns can feel unrealistic, or like something out of a sci-fi movie. I think this can both (1) draw in onlookers whose intuition is to scoff and (2) make AI-related discussions particularly interesting and compelling, attracting more attention.

- AI Alignment is an ill-defined problem with no clear solution and tons of uncertainties: What counts as AGI? What does it mean for an AI system to be fair or aligned? What are the best approaches to Alignment research? With so many fundamental questions unanswered, it’s easy to generate ample AI Safety discussion in highly visible places (e.g. forums, social media, etc.) to the point that it can appear to dominate EA discourse.

- AI Alignment is a growing concern within the EA movement, so it's been highlighted recently by EA-aligned orgs (for example, AI Safety technical research is listed as the top recommended career path by 80,000 Hours).

- Within the AI Safety space, there is crossover between EA and other groups, namely tech and rationalism. Those who learn about EA through these groups may only interact with EA spaces that focus on AI Safety or overlap with those groups; I imagine this shapes their understanding of EA as a whole.

- For some, the recent announcement of the FTX Future Fund seemed to solidify the idea that EA is now essentially billionaires distributing money to protect the long-term future.

- [Edit: There are many more factors to consider that others have outlined in the comments below :)]

2.2 Is this view a bad thing? If so, what can we do?

Is it actually a problem that some people feel EA is “just longtermism”? I would say yes, insofar as it is better to have an accurate picture of an idea or movement than an inaccurate one. Beyond that, such a perception may turn away people who could be convinced to work on cause areas unrelated to longtermism, like farmed animal welfare, but who disagree with longtermist arguments. If this group is large enough, then it seems important to try to promote a clearer outside understanding of EA, allowing the movement to grow in various directions and find its different target audiences, rather than having its pieces eclipsed by one cause area or worldview.

What can we do?

I’m not sure; there are likely a few strategies (e.g. Shakeel Hashim suggested we could put some effort into promoting older EA content, such as Doing Good Better, as well as organizations associated with causes like Global Health and Farmed Animal Welfare).

2.3 So EA isn’t “just longtermism,” but maybe it’s “a lot of longtermism”? And maybe it’s moving towards becoming “just longtermism”?

I have no clue if this is true, but if so, then the relevant questions are:

2.4 What if EA was just longtermism? Would that be bad? Should EA just be longtermism?

I’m not sure. I think it’s true that EA being “just longtermism” leads to worse optics (though this is just a notable downside, not an argument against shifting towards longtermism). We see particularly charged critiques like,

Longtermism is an excuse to ignore the global poor and minority groups suffering today. It allows the privileged to justify mistreating others in the name of countless future lives, when in actuality, they’re obsessed with pursuing profitable technologies that result in their version of ‘utopia’: AGI, colonizing Mars, emulated minds, things only other privileged people would be able to access anyway.

I personally disagree with this. As a counter-argument:

Longtermism, as a worldview, does not want present-day people to suffer; instead, it wants to work towards a future with as much flourishing as possible, for everyone. This idea is not as unusual as it is sometimes framed; we hear something very similar in climate change advocacy (e.g. “We need climate interventions to protect the future of our planet. Future generations could suffer immensely from poor environmental conditions due to our choices”). An individual or a small elite could twist longtermist arguments to justify poor behavior, but this is true of all philanthropy.

Finally, there are many conclusions one can draw from longtermist arguments, but the ones worth pursuing will be well thought out. Critiques often highlight niche technologies rather than the prominent concerns held by the longtermist community at large: risks from advanced Artificial Intelligence, pandemic preparedness, and global catastrophic risks. Notably, working on these issues can often improve the lives of people living today (e.g. working towards safe advanced AI includes addressing already-present issues, like racial or gender bias in today’s systems).

But back to the optics: longtermism can be less intuitively digestible, and it can be framed in a highly negative way. Does that matter? If there is a strong case for longtermism, should we not shift our priorities towards it? In that case, the real question is: does the case for longtermism hold?

This leads me to the conclusion: if EA were to become "just longtermism," that’s fine, conditional on the arguments being incredibly strong. And if there are strong arguments against longtermism, the EA community (in my experience) is very keen to hear them.

Conclusion

Overall, I hope this post generates some useful discussion around EA and longtermism. I posed quite a few questions, and offered some of my personal thoughts; however, I hold all these ideas loosely and would be very happy to hear other perspectives.

Citations
