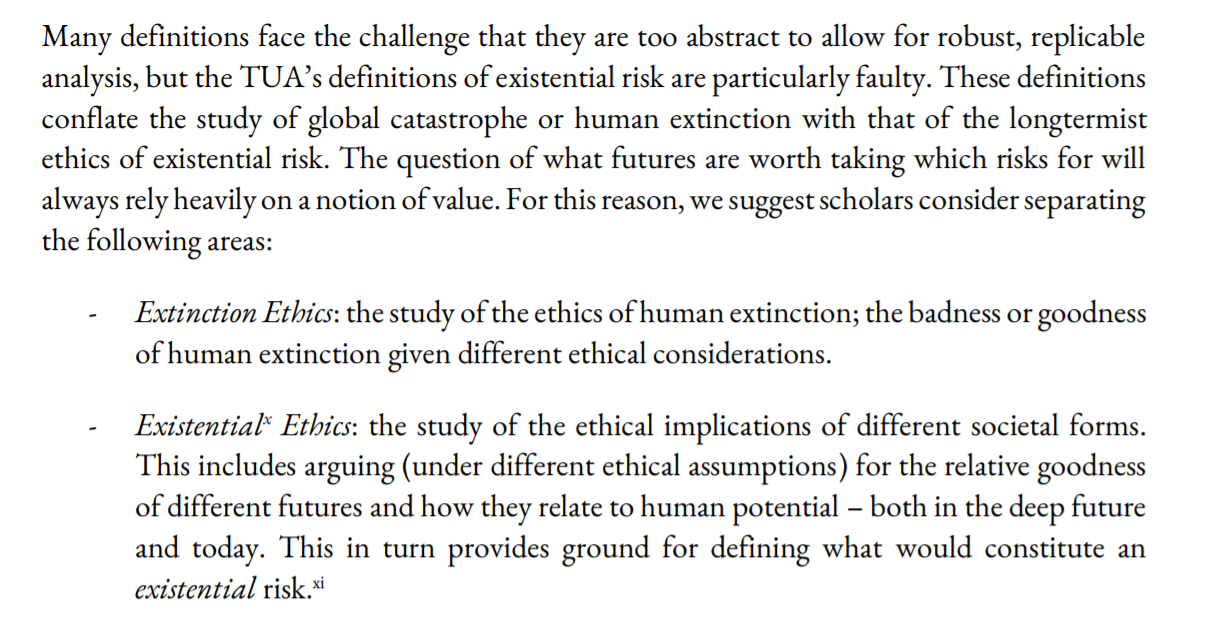

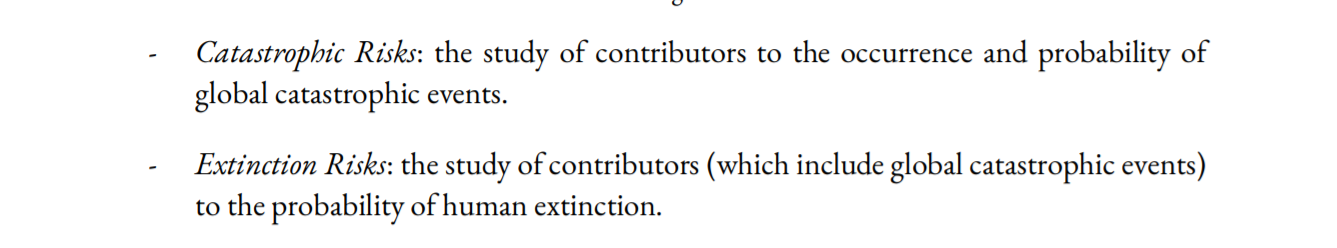

Luke Kemp and I just published a paper which criticises existential risk research for lacking a rigorous and safe methodology:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3995225

It could be a promising sign for epistemic health that the critiques of leading voices come from early career researchers within the community. Unfortunately, the creation of this paper has not signalled epistemic health. It has been the most emotionally draining paper we have ever written.

We lost sleep, time, friends, collaborators, and mentors because we disagreed on: whether this work should be published, whether potential EA funders would decide against funding us and the institutions we're affiliated with, and whether the authors whose work we critique would be upset.

We believe that critique is vital to academic progress. Academics should never have to worry about future career prospects just because they might disagree with funders. We take the prominent authors whose work we discuss here to be adults interested in truth and positive impact. Those who believe that this paper is meant as an attack against those scholars have fundamentally misunderstood what this paper is about and what is at stake. The responsibility of finding the right approach to existential risk is overwhelming. This is not a game. Fucking it up could end really badly.

What you see here is version 28. We have had more than 20 reviewers, around half of whom we sought out as scholars who would be sceptical of our arguments. We believe it is time to accept that many people will disagree with several points we make, regardless of how these are phrased or nuanced. We hope you will voice your disagreement based on the arguments, not the perceived tone of this paper.

We always saw this paper as a reference point and platform to encourage greater diversity, debate, and innovation. However, the burden of proof placed on our claims was unbelievably high in comparison to papers which were considered less “political” or simply closer to orthodox views. Making the case for democracy was heavily contested, despite reams of supporting empirical and theoretical evidence. In contrast, ideas such as differential technological development and the NTI framework have been adopted wholesale despite almost no underpinning peer-reviewed research. I wonder how many of the ideas we critique here would have seen the light of day if the same suspicious scrutiny had been applied to more orthodox views and their authors.

We wrote this critique to help progress the field. We do not hate longtermism, utilitarianism or transhumanism. In fact, we personally agree with some facets of each. But our personal views should barely matter. We ask of you what we have assumed to be true for all the authors that we cite in this paper: that the author is not equivalent to the arguments they present, that arguments will change, and that it doesn’t matter who said it, but instead that it was said.

The EA community prides itself on being able to invite and process criticism. However, a warm welcome of criticism was certainly not our experience in writing this paper.

Many EAs we showed this paper to exemplified the ideal. They assessed the paper’s merits on the basis of its arguments rather than group membership, engaged in dialogue, disagreed respectfully, and improved our arguments with care and attention. We thank them for their support and for meeting the challenge of reasoning in the midst of emotional discomfort. By others we were accused of lacking academic rigour and harbouring bad intentions.

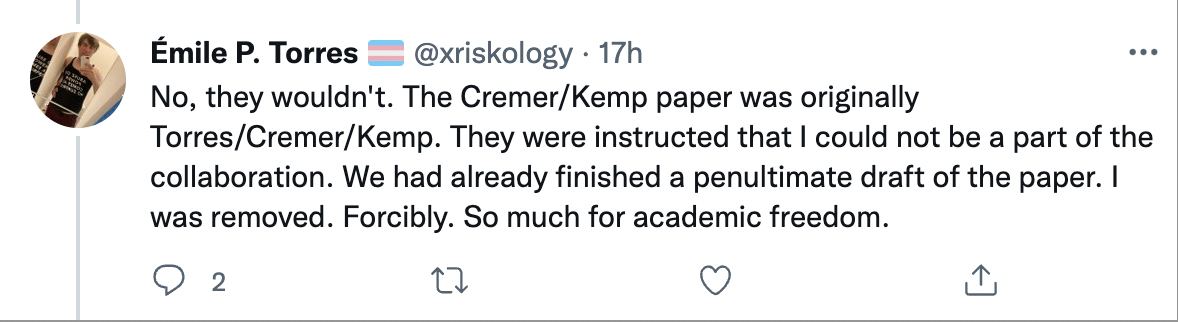

We were told by some that our critique is invalid because the community is already very cognitively diverse and in fact welcomes criticism. They also told us that there is no TUA, and if the approach does exist then it certainly isn’t dominant. It was these same people that then tried to prevent this paper from being published. They did so largely out of fear that publishing might offend key funders who are aligned with the TUA.

These individuals, often senior scholars within the field, told us in private that they were concerned that any critique of central figures in EA would result in an inability to secure funding from EA sources, such as Open Philanthropy. We don't know if these concerns are warranted. Nonetheless, any field that operates under such a chilling effect is neither free nor fair. Having a handful of wealthy donors and their advisors dictate the evolution of an entire field is bad epistemics at best and corruption at worst.

The greatest predictor of how negatively a reviewer would react to the paper was their personal identification with EA. Writing a critical piece should not incur negative consequences for one’s career options, personal life, and social connections in a community that is supposedly great at inviting and accepting criticism.

Many EAs have privately thanked us for "standing in the firing line" because they found the paper valuable to read but would not dare to write it. Some tell us they have independently thought of and agreed with our arguments but would like us not to repeat their name in connection with them. This is not a good sign for any community, never mind one with such a focus on epistemics. If you believe EA is epistemically healthy, you must ask yourself why your fellow members are unwilling to express criticism publicly. We too considered publishing this anonymously. Ultimately, we decided to support a vision of a curious community in which authors should not have to fear their name being associated with a piece that disagrees with current orthodoxy. It is a risk worth taking for all of us.

The state of EA is what it is due to structural reasons and norms (see this article). Design choices have made it so, and they can be reversed and amended. EA fails not because the individuals in it are not well intentioned; good intentions only get you so far.

EA needs to diversify funding sources by breaking up big funding bodies and by reducing each org's reliance on EA funding and tech billionaire funding. It needs to produce academically credible work, set up whistle-blower protection, actively fund critical work, allow for bottom-up control over how funding is distributed, diversify academic fields represented in EA, make the leaders' forum and funding decisions transparent, stop glorifying individual thought-leaders, and stop classifying everything as info hazards, amongst other structural changes. I now believe EA needs to make such structural adjustments in order to stay on the right side of history.

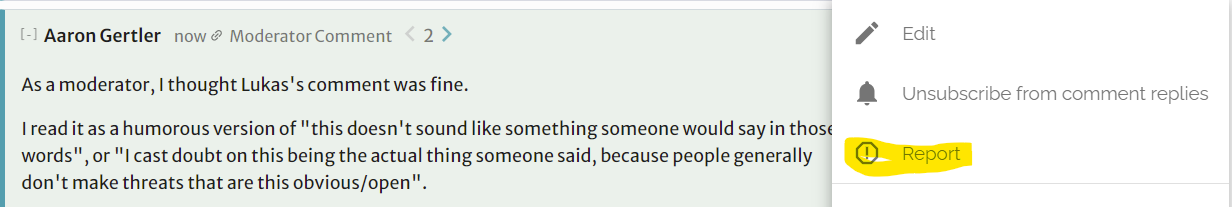

I think there are a bunch of examples we could use here, which fall along a spectrum of "believability" or something like that.

Where the unbelievable end of the spectrum is e.g. "China has never imprisoned a Uyghur who wasn't an active terrorist", and the believable end of the spectrum is e.g. "gravity is what makes objects fall".

If someone argues that objects fall because of something something the luminiferous aether, it seems really unlikely that "they have a background in physics but just disagree about gravity" is the right explanation.

If someone argues that China actually imprisons many non-terrorist Uyghurs, it seems really likely that "they have a background in the Chinese government's claims but just disagree with the Chinese government" is the right explanation.

So what about someone who argues that degrowth is very likely to lead to "enormous humanitarian costs"? How likely is it that "they have a background in the claims of Hickel et al. but disagree" is the right explanation, vs. something like "they've never read Hickel" or "they believe Hickel is right but are lying"?

Moreover, is it "basic background knowledge" that degrowth would not be very likely to lead to "enormous humanitarian costs"?

What you think of those questions seems to depend on how you feel about the degrowth question generally. To some people, it seems perfectly believable that we could realistically achieve degrowth without enormous humanitarian costs. To other people, this seems unbelievable.

I see Halstead as being on the "unbelievable" side and you as being on the "believable" side. Given that there are two sides to the question, with some number of reasonable scholars on each side, Halstead would ideally hedge his language ("degrowth would likely have enormous humanitarian costs" rather than "built-in feature"). And you'd ideally hedge your language ("fails to address reasonable arguments from people like Hickel" rather than "flatly untrue in a way that is obvious").

*****

I cared more about your reply than Halstead's comment because, while neither person is doing the ideal hedge thing, your comment was more rude/aggressive than Halstead's.

(I could imagine someone reading his comment as insulting to the authors, but I personally read it as "he thinks the authors are deliberately making a tradeoff of one value for another" rather than "he thinks the authors support something that is clearly monstrous".)

To me, the situation reads as one person making contentious claim X, and the other saying "X is flatly wrong in a way that is obvious to anyone who reads contentious author Y, stop mischaracterizing the positions of people like author Y" — when the first person never mentioned author Y.

Perhaps the first person should have mentioned author Y somewhere, if only to say "I disagree with them" — in this case, author Y is pretty famous for their views — but even so, a better response is "I think X is wrong because of the points made by author Y".

*****

I'd feel the same way even if someone were making some contentious statement about EA. And I hope that I'd respond to e.g. "effective altruism neglects systemic change" with something like "I think article X shows this isn't true, why are you saying this?"

I'd feel differently if that person were posting the same kinds of comments frequently, and never responding to anyone's follow-up questions or counterarguments. Given your initial comment, maybe that's how you feel about Halstead + degrowth? (Though if that's the case, I still think the burden of proof is on the person accusing another of bad faith, and they should link to other cases of the person failing to engage.)