I thought I'd run the listening exercise I'd like to see.

- Get popular suggestions

- Run a polis poll

- Make a Google Doc where we research suggestions that have consensus, near consensus, or consensus within specific groups

- Poll again

Stage 1

Give concrete suggestions for community changes. 1-2 sentences only.

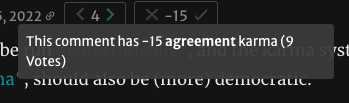

Upvote suggestions you think are worth putting in the polis poll, and agreevote if you think the comment is true.

Agreevote if you think they are well-framed.

Write your suggestions with the aim of getting them upvoted. Please add suggestions you'd like to see.

I'll take the top 20-30 suggestions.

I will delete, or move to the comments, any top-level answers that are longer than 2 sentences.

Stage 2

Polis poll here: https://pol.is/5kfknjc9mj