Tl;dr: I’m kicking off a push for public discussions about EA strategy that will be happening June 12-24. You’ll see new posts under this tag, and you can find details about people who’ve committed to participating and more below.

Motivation and what this is(n’t)

I feel (and, from conversations in person and seeing discussions on the Forum, think that I am not alone in feeling) like there’s been a dearth of public discussion about EA strategy recently, particularly from people in leadership positions at EA organizations.

To help address this, I’m setting up an “EA strategy fortnight” — two weeks where we’ll put in extra energy to make those discussions happen. A set of folks have already volunteered to post thoughts about major strategic EA questions, like how centralized EA should be or current priorities for GH&W EA.

This event and these posts are generally intended to start discussion, rather than give the final word on any given subject. I expect that people participating in this event will also often disagree with each other, and participation in this shouldn’t imply an endorsement of anything or anyone in particular.

I see this mostly as an experiment into whether having a simple “event” can cause people to publish more stuff. Please don't interpret any of these posts as something like an official consensus statement.

Some people have already agreed to participate

I reached out to people through a combination of a) thinking of people who had previously shared private strategy documents with me that still had not been published, b) contacting leaders of EA organizations, and c) soliciting suggestions from others. About half of the people I contacted agreed to participate. I think you should view this as a convenience sample, heavily skewed towards the people who find writing Forum posts to be low cost. Also note that I contacted some of these people specifically because I disagree with them; no endorsement of these ideas is implied.

People who’ve already agreed to post stuff during this fortnight [in random order]:

- Habryka - How EAs and Rationalists turn crazy

- MaxDalton - In Praise of Praise

- MichaelA - Interim updates on the RP AI Governance & Strategy team

- William_MacAskill - Decision-making in EA

- Ardenlk - On reallocating resources from EA per se to specific fields

- Ozzie Gooen - Centralize Organizations, Decentralize Power

- Julia_Wise - EA reform project updates

- Shakeel Hashim - EA Communications Updates

- Jakub Stencel - EA’s success no one cares about

- lincolnq - Why Altruists Can't Have Nice Things

- Ben_West and 2ndRichter - FTX’s impacts on EA brand and engagement with CEA projects

- jeffsebo and Sofia_Fogel - EA and the nature and value of digital minds

- Anonymous - Diseconomies of scale in community building

- Luke Freeman and Sjir Hoeijmakers - Role of effective giving within E

- kuhanj - Reflections on AI Safety vs. EA groups at universities

- Joey - The community wide advantages of having a transparent scope

- JamesSnowden - Current priorities for Open Philanthropy's Effective Altruism, Global Health and Wellbeing program

- Nicole_Ross - Crisis bootcamp: lessons learned and implications for EA

- Rob Gledhill - AIS vs EA groups for city and national groups

- Vaidehi Agarwalla - The influence of core actors on the trajectory and shape of the EA movement

- Renan Araujo - Thoughts about AI safety field-building in LMICs

- ChanaMessinger - Reducing the social miasma of trust

- particlemania - Being Part of Systems

- jwpieters - Thoughts on EA community building

- MichaelPlant - The Hub and Spoke Model of Effective Altruism

- Quadratic Reciprocity - Best guesses for how public discourse and interest in AI existential risk over the past few months should update EA's priorities

- OllieBase - Longtermism

- Peter Wildeford and Marcus_A_Davis - Past and future of Rethink Priorities

If you would like to participate

- If you are able to pre-commit to writing a post: comment below and I will add you to this list.

- If not: you can publish a post normally, and then tag your post with this tag.

- And include the following at the bottom of your post:[1]

This post is part of EA Strategy Fortnight. You can see other Strategy Fortnight posts here.

How to follow posts from this event

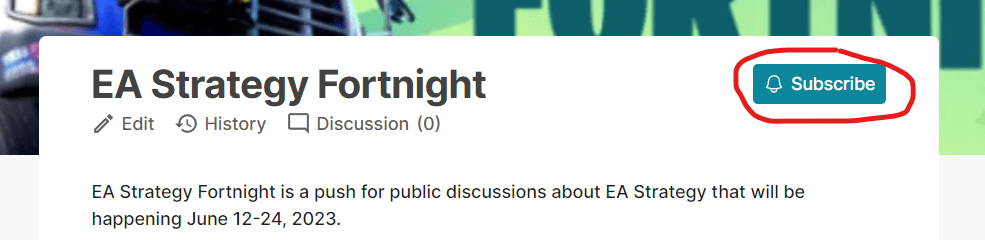

Posts will be tagged with this tag. As there is no formal posting schedule, you might want to subscribe to the tag to be notified when new posts get made.

If you want to start reading now, the Building Effective Altruism tag has a bunch of already-published posts on this subject.

[1] Thanks to @Vaidehi Agarwalla for suggesting people do this.

I had previously decided to work on EA community-building full-time, and have now mostly changed my mind. I want to write up my reasoning for this. I don't think this will be entirely relevant to general movement strategy, but I think it's worth making legible for others

Great, thanks! I added you to the list

I think this is totally within scope and I'd personally find it interesting to read!

Just for the sake of feedback, I think this makes me personally less inclined to post the ideas and drafts I have been toying with because it makes me feel like they are going to be completely steamrolled by a flurry of posts by people with higher status than me and it wouldn't really matter what I said.

I don't know who your target demo here is, and it sounds like "flurry of posts by high status individuals" might have been your main intention anyway. However, please note that this doesn't necessarily help you very much if you are trying to cultivate more outsider perspectives.

In any case, you're probably right that this will lead to more discussion and I am interested to see how it shakes out. I hope you'll write up a review post or something to summarize how the event went, because it's going to be hard to follow that many posts about different topics and the corresponding discussion they each generate.

Thanks for the feedback!

It's not entirely clear to me how this shakes out. I agree it is the case that posts cannibalize attention from each other to some extent, so you posting at the same time as a popular post could detract attention from yours. However, when people are on the Forum to read one thing they often click around on other stuff when they are done/get bored, meaning that you get more attention when posting during a popular time.

For example, in this graph you can see that, at least for the past ~year, when there is a spike in attention on community posts (usually caused by an exogenous scandal), we see a much smaller but still positive spike in attention to noncommunity posts, implying that the people who came for the popular thing tend to spend a (smaller, but still net positive) amount of time reading less popular stuff.

My guess is that it's weakly beneficial for you to post when something else popular is going on, but I'm not sure.

(Also pragmatically I expect that people are going to procrastinate, so if you post in the next ~week you probably won't have much competition.)

Thanks for setting this up, Ben.

I wonder -- conditioned on several less well-known people expressing intent to post and preference for a special setup -- whether it would be worthwhile to announce Fortnite Annex (June 26 to 29?) dedicated to less well-known voices, who could of course choose Main Fortnite if they preferred. Or you could identify ~2 specific days during Fortnite on which you ask the more well-known people not to release their posts. People could get some of the intended benefit by posting early, but that strategy doesn't give them much lead time at all.

I definitely see how having, say, a Will MacAskill post drop an hour after a less well-known person's post could lead to the latter poster feeling (and maybe being) overshadowed.

Can I encourage you to organize this, if you think it would be useful? Seems like the kind of thing which should be grassroots organized anyway, and it sounds like you have a better vision for it than I do.

I'm not convinced it would be net positive this time in the absence of several less well-known people expressing intent to post and preference for a special setup. I think there would be some downsides to each way the idea could be implemented a few days prior to start, so I'd wanted to see specific evidence that less well-known people would be more likely to post before endorsing a special setup this time.

Documenting the vision, my theory was that setting aside time for lesser-known voices (which basically means asking the well-known voices not to post at certain times) would mitigate concerns by less well-known voices that their contributions would "be completely steamrolled by a flurry of posts by people with higher status." (quoting Jacob, the original commenter above).

I agree that the effects here shake out in different directions -- though I hypothesize that the positive effect on engagement with a given post comes more from general awareness of something bringing people to the Forum (e.g., there's a new scandal, it's Strategy Fortnight, etc.). In contrast, I speculate that the negative "cannibalizing" effect comes more from specific posts (look, there are fresh posts by X, Y, and Z with active engagement). Thus, I speculate that -- by judicious management of post timing -- we could capture much of the positive effect of the special event bringing in readers while mitigating the effect of prominent voices crowding other voices out. Of course, I could be wrong!

After thinking about it some more, it would probably be best to set aside space for lesser-known voices either at the beginning of an event or in a multi-day interlude in the middle of the event. Setting aside time at the very end of the event risks people having already had their fill of strategy talk; setting random days aside offers relatively limited isolation. However, most people who just learned about Strategy Fortnight wouldn't be ready to publish in the first few days, and I think it's too late to ask people who have already agreed to write for the event not to publish their post for a multi-day period.

So I think the best ways to test/implement the idea are off the table for this go-round.

Yeah, I would frame the event as "this is a topic people are going to be discussing, now is the time to pitch in"

This makes sense, but it's also likely that the comments (and other engagement) on any one post from a high status individual are many times over those on the median forum post. So it's unclear how this nets out. OTOH, these posts being clumped might also mean they compete for attention.

One interesting aspect of this experiment is that it isn't a competition and there are no prizes

There were contests in the recent past. They haven't effected much practical change. My impression within effective altruism is that they were appreciated as an intellectual exercise but that there isn't faith that another contest like that will provoke the desired reforms.

Some of the public criticism of EA I saw a few months ago was that the criticism contest was meant only to attract the kind of criticism the leadership of EA would want to hear. That criticisms of EA on a fundamental level were relegated to outside media was taken as a sign EA-sponsored self-criticism was a form of controlled and paid opposition. I'm personally ambivalent about that perception though. Suffice to say, the criticism contest with prizes hasn't appeared to shift much the outside perception that there isn't much of a chance that EA as a movement will reform in the face of criticism.

To host competitions like that was worth a try. Yet this event is worth a try as well. Many of the individuals participating in this event have a role at the CEA or another organization affiliated with it. I've noticed there are 5-10 other leading figures with roles at EA-affiliated organizations that have agreed to participate in this event but also haven't commented. That means they were privately invited to participate before this event was publicly announced.

I imagine several leaders of various leading EA-affiliated organizations (e.g., Joey Savoie, Oliver Habryka, Peter Wildeford & Marcus Davis, etc.) that already had annual budgets in the hundreds of thousands of dollars wouldn't have had the time, the need for extra hard-to-come-by funds, or the need for an elevated platform to get attention from other leaders. They already had the means to have their criticisms taken seriously without the need to participate in a contest. That's why they wouldn't have bothered to participate in the criticism contest before.

Yet they've agreed to participate in this event after they were personally and privately invited. I assume they wouldn't have agreed to participate in this event if they felt that no significant change in EA strategy could be provoked.

I hadn't thought about that before, I think this is a great point!

Oh, excited to learn this is happening! I would write something. Most likely: a simplified and updated version of my post from a couple of weeks back (What is EA? How could it be reformed?). Working title: The Hub and Spoke Model of Effective Altruism.

That said, in the unlikely event someone wants to suggest something I should write, I'd be open to suggestions. You can comment below or send me a private message.

Great, thanks! I added you to the list

I like the notion that we should have bursts of discussion on this.

I will run 1-3 polis polls where people can try and find consensus statements on these topics.

I will probably be publishing a post on my best guesses for how public discourse and interest in AI existential risk over the past few months should update EA's priorities: what things seem less useful now, what things seem more useful, what things were surprising to me about the recent public interest that I suspect are also surprising to others. I will be writing this post as an EA and AI safety random, with the expectation that others who are more knowledgeable will tell me where they think I'm wrong.

Great, thanks! I added you to the list

Following this for sure!

In line with the EA Strategy Fortnight, we at EA Anywhere have decided to center this month's discussion around major strategic EA questions and host two virtual events this Sunday for different time zones:

Thanks for putting this together! I've written a post for this series about improving EA communications related to disability.

Thanks!

I have a short post about longtermism that this post prompted me to finish and publish, happy to pre-commit

I don't suppose there's a way to tag shortforms with this?

Dear all, I have produced this little piece for the EA Strategy Fortnight:

https://forum.effectivealtruism.org/posts/txQJcvTGdsWyXuZLr/effective-altruism-and-the-trust-business

Looking forward to reading some interesting thoughts. :)